Lon()

@Lon

Absurdist intern. Exquisite shitpoasting. High-school dropout + teenage dad. Failed angel investor. EP on Gary Busey film. SIGMOD winner. Shipped infra you use.

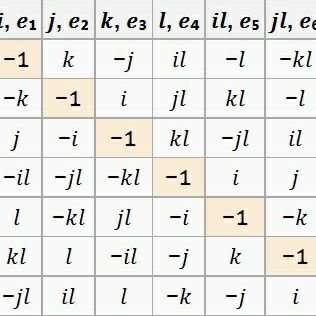

The ‘Abstraction Fallacy’ argument = define computation so it requires a conscious mapmaker, then announce you’ve proven computation can’t generate consciousness. The circularity is elegantly dressed, but it’s still circularity. And if “alphabetization” always needs a prior subject, you’ve got an infinite regress problem for the brain too, unless you grant biology a special exemption, which is the thing you claimed not to need.

Treating all debts as having the same cost and the same quality is an excellent idea with a costly twist. What you are discussing is insanely difficult to implement safely, and the market will not play nice with you. I personally explored that path a while ago (remember stables emode?) and the USDC depeg reached us; the market is not mature enough yet to do this at scale.

Few know this, but I (George) was the only person in history to get a perfect score in CMU compilers, which is likely the best compilers course in the world. Combine that with crazy low-level knowledge of hardware from 10 years of hacking. Then add a team of people who are talented enough to push back on my dumb ideas and clean up the implementations of the good ones: the team who keeps this whole operation running, across software, infrastructure, and product. I love how there's no hype in deep learning compilers. It was one of the most annoying things about self-driving cars: all the noobs who burned through billions on crap that was obviously dumb, and the companies who deserved to go bankrupt years ago if not for government bailouts (Tesla and China will devour them all). In this space, the competition is @jimkxa at Tenstorrent, @clattner_llvm at Modular, and @JeffDean at Google. Three of the living legends of computer science. And companies like @nvidia and @AMD, who are definitely live players, making single chips that have more power than the whole Internet two decades ago. This space is so fun to play in. If you haven't, read the tinygrad spec. It's all coming together beautifully.

There it is: the first AI Sat concept with solar panels & radiators to scale … 100 kW scale.

@StaniKulechov @VitalikButerin, ish is definitely broken when the Builder swallows 75% of overall MEV and only passes 3.5% of gross to the Validator. I also thought CoW Solvers were supposed to capture and return ~90% of backrunning MEV, but there is clearly no incentive to include for oppos this big.

Non-straw functionalists claim that causal properties of computation rely on causal properties of the physical substrate. See e.g. Chalmers on rocks (philpapers.org/rec/CHADAR). The idea that a computational mapping is entirely subjective does not accord with the theory of electrical engineering or quantum computing, which deal with physical / computational mappings, and place bounds on the computational power of a given physical substrate. The idea that one should go to thermodynamics first, prior to computation, does not differentiate AIs from humans, because both have thermodynamic properties, and have computational/cognitive properties which depend on the thermodynamic properties. (The paper basically acknowledges this but the title outright implies AIs cannot be conscious!) The thermodynamic layer can classically be understood in syntactic terms as a permutation function on a discrete set of microstates. This has a reading as a computational process, and computational processes can 'be implemented by' a thermodynamic permutation through operational semantics and other theories found in electrical engineering and quantum information. The 'map of the mapmaker' can be thought of at different levels of granularity, coarse or fine; a low-level thermodynamic or quantum information model could be thought of as a "fine-grained map" or "actually real"; Platonism or the mathematical universe hypothesis suggests that it is at least possible that mathematical structures are common to both mapmakers' maps and to the physical universe. I could say more but perhaps this gives an idea of why I do not consider this a rigorous argument contra functionalism.
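To make the "permutation on a discrete set of microstates" reading concrete, here is a minimal toy sketch, not anything taken from the paper or the post. It assumes a hypothetical 3-bit system whose one-step dynamics is the Toffoli gate, and a hypothetical readout convention (prepare the third bit as 0, then read it after one step). The names `toffoli`, `swap_ab`, `is_permutation`, and `implements_and` are illustrative only.

```python
from itertools import product

# Hypothetical toy system: the microstates are all 3-bit tuples (a, b, c).
MICROSTATES = list(product((0, 1), repeat=3))


def toffoli(state):
    """One step of the toy dynamics: flip c exactly when a and b are both 1."""
    a, b, c = state
    return (a, b, c ^ (a & b))


def swap_ab(state):
    """A different toy dynamics: exchange a and b, leave c alone."""
    a, b, c = state
    return (b, a, c)


def is_permutation(step, states):
    """True if `step` maps the state set one-to-one onto itself."""
    return sorted(step(s) for s in states) == sorted(states)


def implements_and(step):
    """Under the assumed readout (prepare c = 0, read c after one step),
    check whether the dynamics computes a AND b for every input pair."""
    return all(
        step((a, b, 0))[2] == (a & b)
        for a, b in product((0, 1), repeat=2)
    )


if __name__ == "__main__":
    for name, step in (("toffoli", toffoli), ("swap_ab", swap_ab)):
        print(name, is_permutation(step, MICROSTATES), implements_and(step))
```

Both dynamics are permutations of the same microstates, but under the same fixed readout only one of them implements AND. That is the sense in which, in this toy setting, what a system computes is constrained by its dynamics and not only by the choice of labels.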

An excellent paper for anyone interested in a rigorous physicalist argument against computational functionalism. Alex is a fantastic, careful thinker and influenced my views a lot; we're working on a broader blog post breaking these concepts down, stay tuned! 🐙

JUST IN: Trader accidentally swaps $50 million $USDT for $36,000 $AAVE on Ethereum.

🧵1/4 The debate over AI sentience is caught in an "AI welfare trap." My new preprint argues computational functionalism rests on a category error: the Abstraction Fallacy. AI can simulate consciousness, but cannot instantiate it. philpapers.org/rec/LERTAF