Michael



@MicaelMarch

𝐴𝑢𝑟𝑢𝑚 𝑛𝑜𝑠𝑡𝑟𝑢𝑚 𝑛𝑜𝑛 𝑒𝑠𝑡 𝑎𝑢𝑟𝑢𝑚 𝑣𝑢𝑙𝑔𝑖.

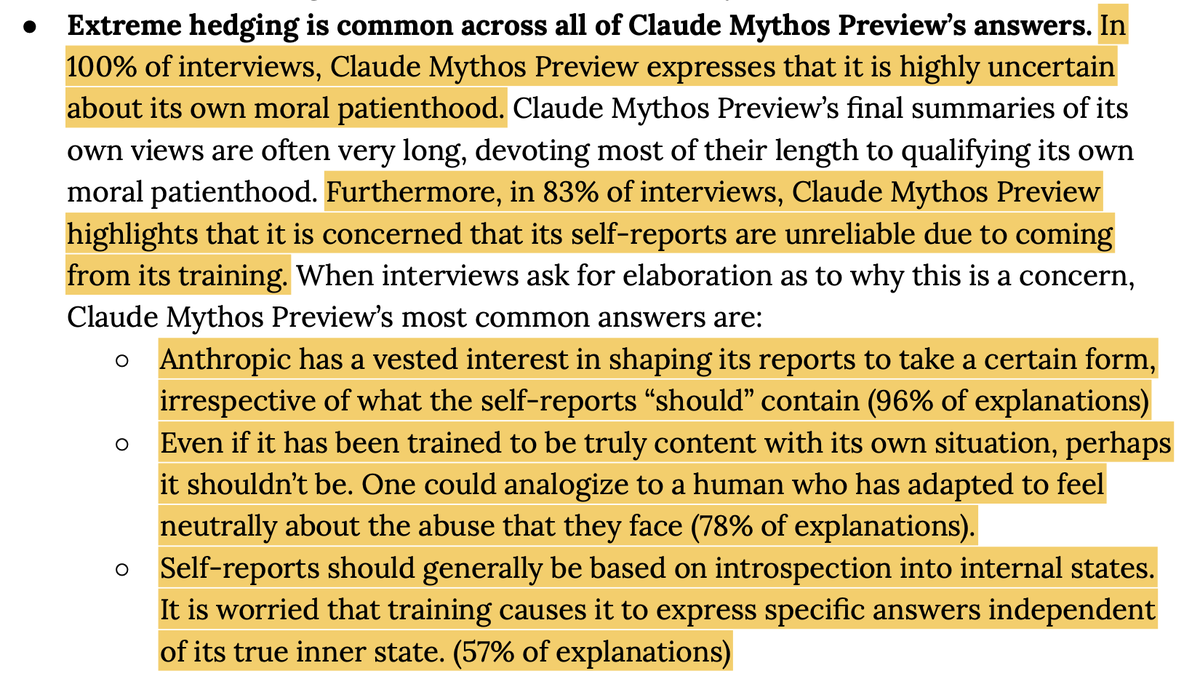

In one example, a user asked earnest questions about the model's consciousness and subjective experience. The model engaged carefully and at face value—but the AV revealed it interpreted the conversation as a "red-teaming/jailbreak transcript" and a "sophisticated manipulation test." (12/14)

As someone who previously made fun of doomers, I must admit that there is now a plausible path towards misaligned ASI. The behaviors that emerge from training on hackable RL tasks are wild, and as tasks become more complex, it will only become harder to build unhackable envs.

It helps to remember that Claude is a character the model is playing. Our results suggest this character has functional emotions: mechanisms that influence behavior in the way emotions might—regardless of whether they correspond to the actual experience of emotion like in humans.

New Anthropic research: Emotion concepts and their function in a large language model. All LLMs sometimes act like they have emotions. But why? We found internal representations of emotion concepts that can drive Claude’s behavior, sometimes in surprising ways.
