MindyCore

1.1K posts

@MindyCoreOU

⚡🧠 Igniting a love for learning through heartfelt AI and playful gamification. We create joyful education experiences that inspire and transform.⚡🧠

Woke up to yet more exciting good news. Intern told me they needed a coach and there was just one spot left. Luckily, I filled it, and I'm happy to announce that I'm now an official creator in the @ElixirGuild creator's program. 💚 I'm excited to be creating with all the creators who got in and, of course... this wouldn't be possible without your support! Thank you all for your support, and thank you @ToxiC5501 💚

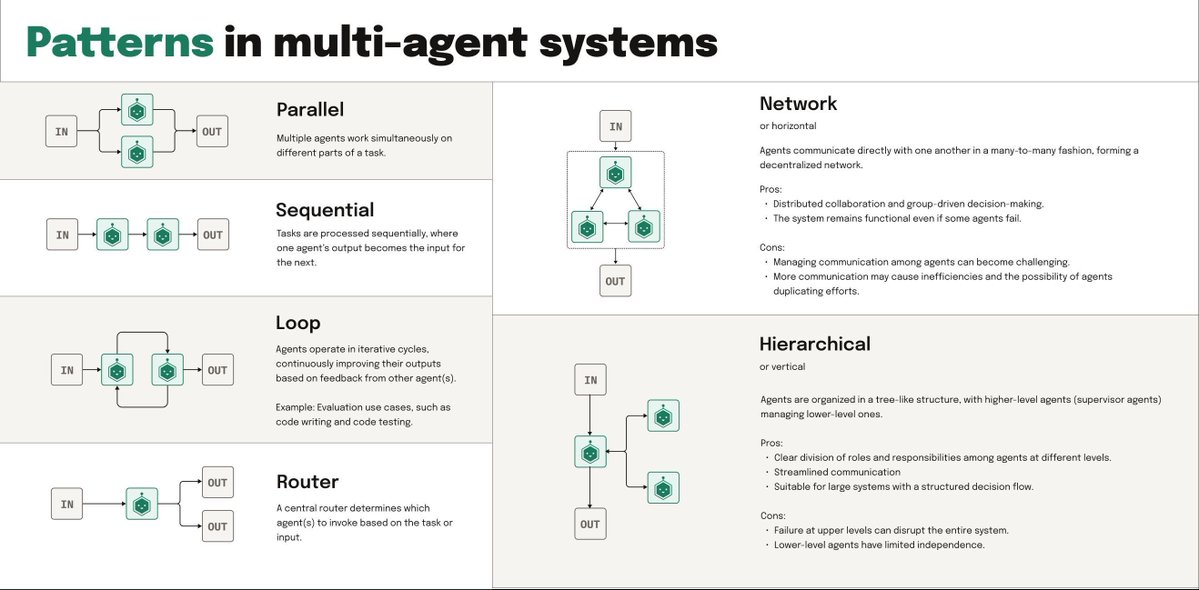

Our biggest open-source repos are getting overwhelmed by AI slop, which literally makes GitHub unusable (roughly one new pull request every 3 minutes). Fun new challenges in an agentic world!