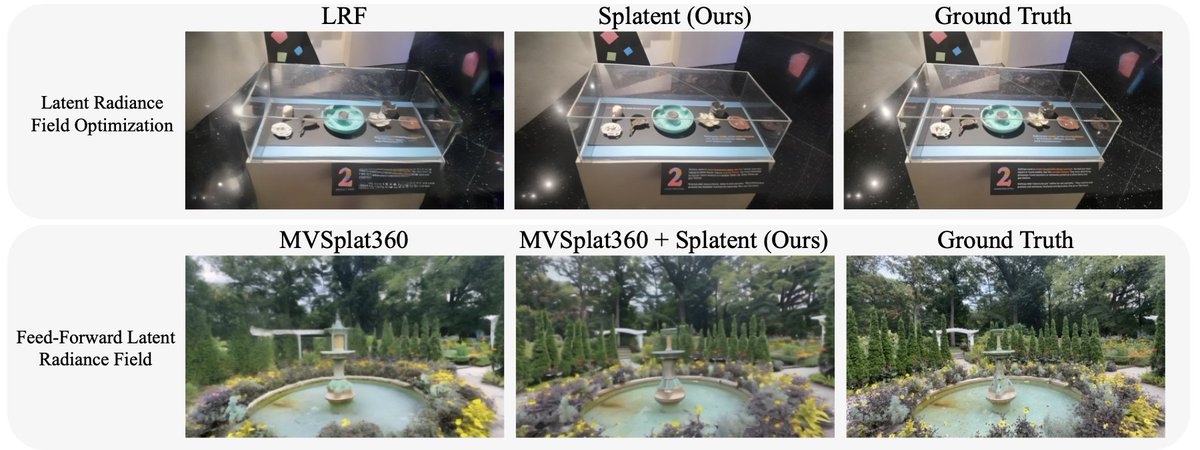

Happy to share Splatent, new research from my internship at Amazon @PrimeVideo! 🎬 We tackle a key issue in 3D generation: getting sharp reconstructions directly from the diffusion latent space. 📄 Paper: arxiv.org/abs/2512.09923 🌐 Page: orhir.github.io/Splatent/