Itamar Zimerman

@ItamarZimerman

PhD candidate @ Tel Aviv University. AI research scientist @ IBM Research. Interested in deep learning and algorithms.

An amazing achievement by our team at doubleAI. GPU kernel optimization is profoundly impactful but notoriously hard: only a handful of human experts can actually do it well. Existing AI systems are not there yet – the reasoning required to optimize GPU kernels is too deep. Our AI system WarpSpeed – built on our techniques for deep search and verification – is in a different league, solving one of the hardest GPU optimization domains. This is not “yet another AI winning a toy benchmark”, but an AI comprehensively surpassing human experts on a task that top experts have worked on and refined for a decade. Take a look at our blog about it: doubleai.com/research/doubl… More is coming soon...

It's time to look past dictionary learning for decomposing LM activations. What happens when we instead leverage local geometry? We find a natural region-based decomposition that yields better steering and localization 🧵 1/

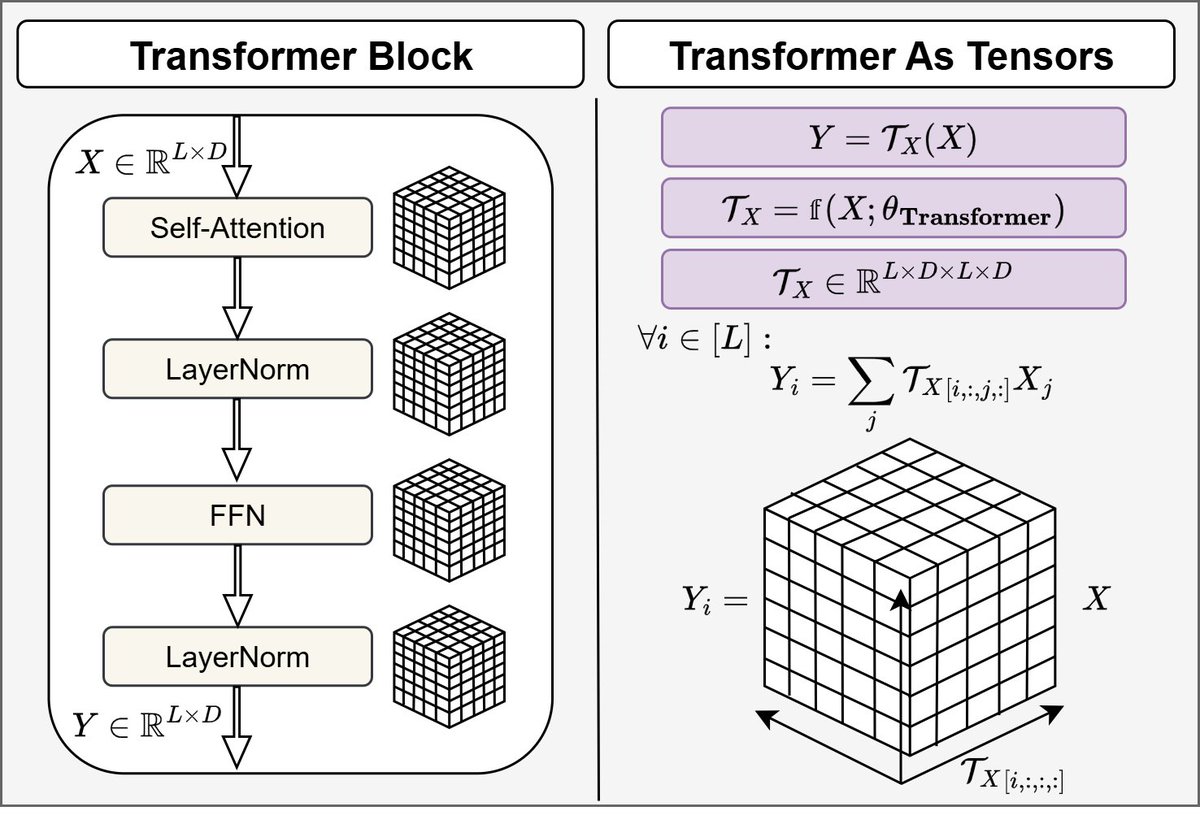

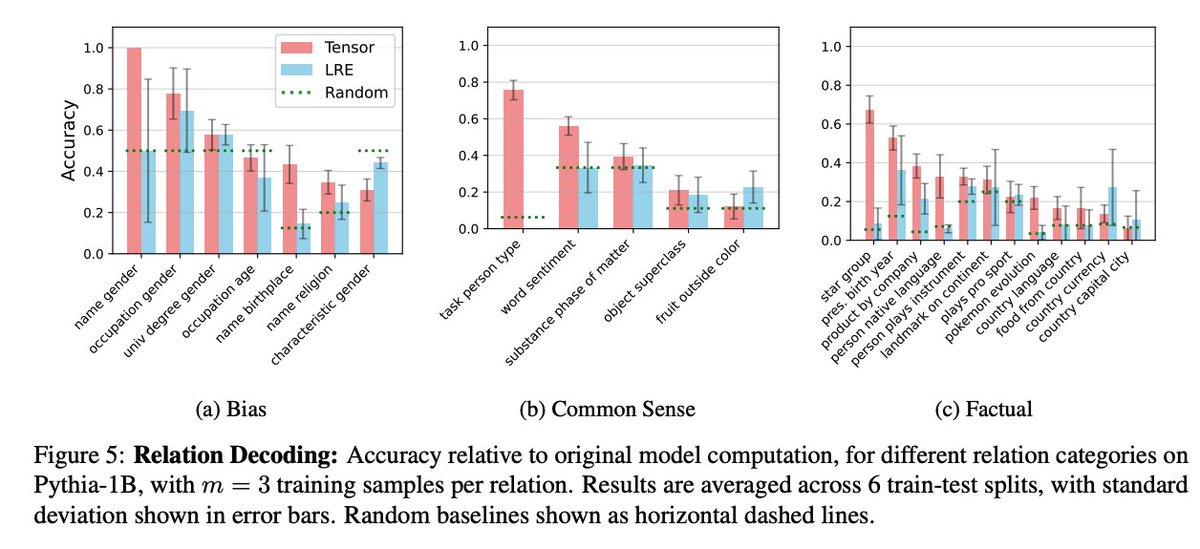

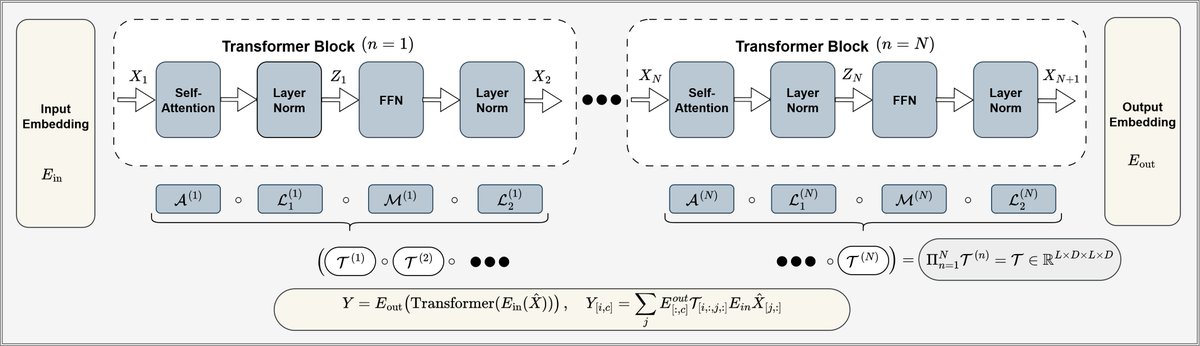

TensorLens: End-to-End Transformer Analysis via High-Order Attention Tensors huggingface.co/papers/2601.17…

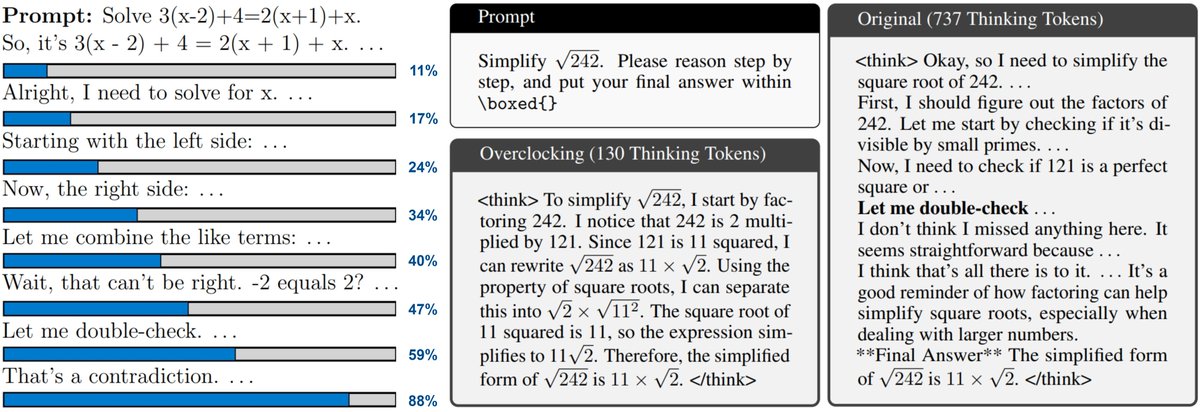

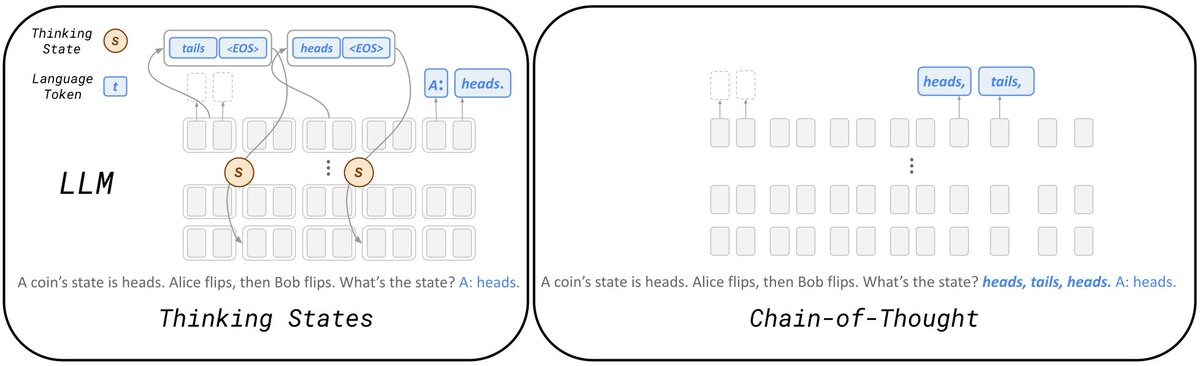

📄🚨New! Attribution methods that assign relevance to tokens are key to extracting explanations from Transformers and LLMs. Yet all existing approaches produce fragmented and unstructured heatmaps (see image)! Why does this happen? And how can we fix this chronic issue? See🧵1/5

Excited to share that "TokenVerse: Versatile Multi-concept Personalization in Token Modulation Space" got accepted to SIGGRAPH 2025! It tackles disentangling complex visual concepts from as little as a single image and re-composing concepts across multiple images into a coherent result. token-verse.github.io #SIGGRAPH2025