Luiza Jarovsky, PhD (@LuizaJarovsky)

🚨 "AI Models Collapse When Trained on Recursively Generated Data" is one of the most influential papers ever on LLMs. Surprisingly, it might signal that copyright protection is a BACKBONE of the AI industry. Quotes & comments:

"The development of LLMs is very involved and requires large quantities of training data. Yet, although current LLMs, including GPT-3, were trained on predominantly human-generated text, this may change. If the training data of most future models are also scraped from the web, then they will inevitably train on data produced by their predecessors. In this paper, we investigate what happens when text produced by, for example, a version of GPT forms most of the training dataset of following models. What happens to GPT generations GPT-{n} as n increases? We discover that indiscriminately learning from data produced by other models causes ‘model collapse’—a degenerative process whereby, over time, models forget the true underlying data distribution, even in the absence of a shift in the distribution over time"

-

"Our evaluation suggests a ‘first mover advantage’ when it comes to training models such as LLMs. In our work, we demonstrate that training on samples from another generative model can induce a distribution shift, which—over time—causes model collapse. This in turn causes the model to misperceive the underlying learning task. To sustain learning over a long period of time, we need to make sure that access to the original data source is preserved and that further data not generated by LLMs remain available over time. The need to distinguish data generated by LLMs from other data raises questions about the provenance of content that is crawled from the Internet: it is unclear how content generated by LLMs can be tracked at scale. (...)"
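A toy sketch of the effect these quotes describe. It is not taken from the paper. It assumes the "model" is a one-dimensional Gaussian that is refit each generation to a small sample, and the function name `simulate` and its parameters (`generations`, `sample_size`, `real_fraction`) are illustrative choices. With `real_fraction=0.0`, each generation learns only from its predecessor's output. With `real_fraction=0.5`, half of each training set still comes from the original distribution.

```python
import random
import statistics


def simulate(generations=300, sample_size=20, real_fraction=0.0, seed=0):
    """Refit a Gaussian generation after generation and track its spread.

    Returns the fitted standard deviation at every generation, starting
    with the true value 1.0. All parameters are illustrative assumptions.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0 matches the true data: N(0, 1)
    n_real = round(sample_size * real_fraction)
    history = [sigma]
    for _ in range(generations):
        # Data produced by the previous generation's model.
        synthetic = [rng.gauss(mu, sigma) for _ in range(sample_size - n_real)]
        # Data still drawn from the original, human-generated source.
        real = [rng.gauss(0.0, 1.0) for _ in range(n_real)]
        data = synthetic + real
        # "Training" here is just a maximum-likelihood Gaussian fit.
        mu = statistics.fmean(data)
        sigma = statistics.pstdev(data)
        history.append(sigma)
    return history


if __name__ == "__main__":
    collapsed = simulate(real_fraction=0.0)
    mixed = simulate(real_fraction=0.5)
    print(f"only model-generated data: final std = {collapsed[-1]:.6f}")
    print(f"half original data:        final std = {mixed[-1]:.6f}")
```

In this toy setting, the purely recursive run tends to lose almost all of its spread, meaning the model forgets the tails and then the bulk of the original distribution. Keeping original data in the mix tends to hold the fitted spread away from zero. Real LLM training is far more complex, so read this as intuition only.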

-

My comments:

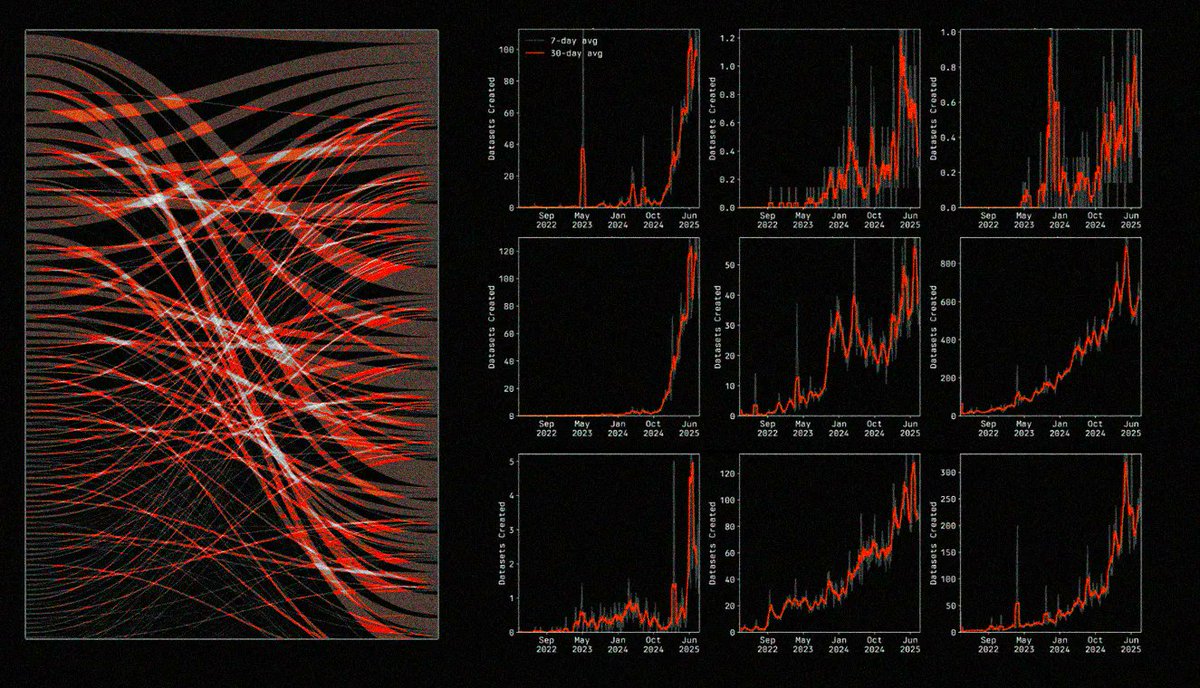

As the internet becomes polluted with AI-generated content, a way to maintain the quality of training datasets and support the development of new LLMs might be to create generative AI-free training datasets, including text, image, video, and audio data.

That will only happen with the assurance of effective copyright protection mechanisms, a market for fairly negotiated licensing deals, and additional incentives for creative, ingenious, and innovative human work.

-

👉 Link to the paper below.

👉 Never miss my analyses and recommendations of excellent papers: join the 77,000+ people who subscribe to my newsletter (link below).