Readwise

585.8K posts

Readwise

@readwise

Save your best highlights from Kindle, Twitter, Pocket, Instapaper, iBooks, and 30+ others. Then revisit, search, organize, and export them seamlessly.

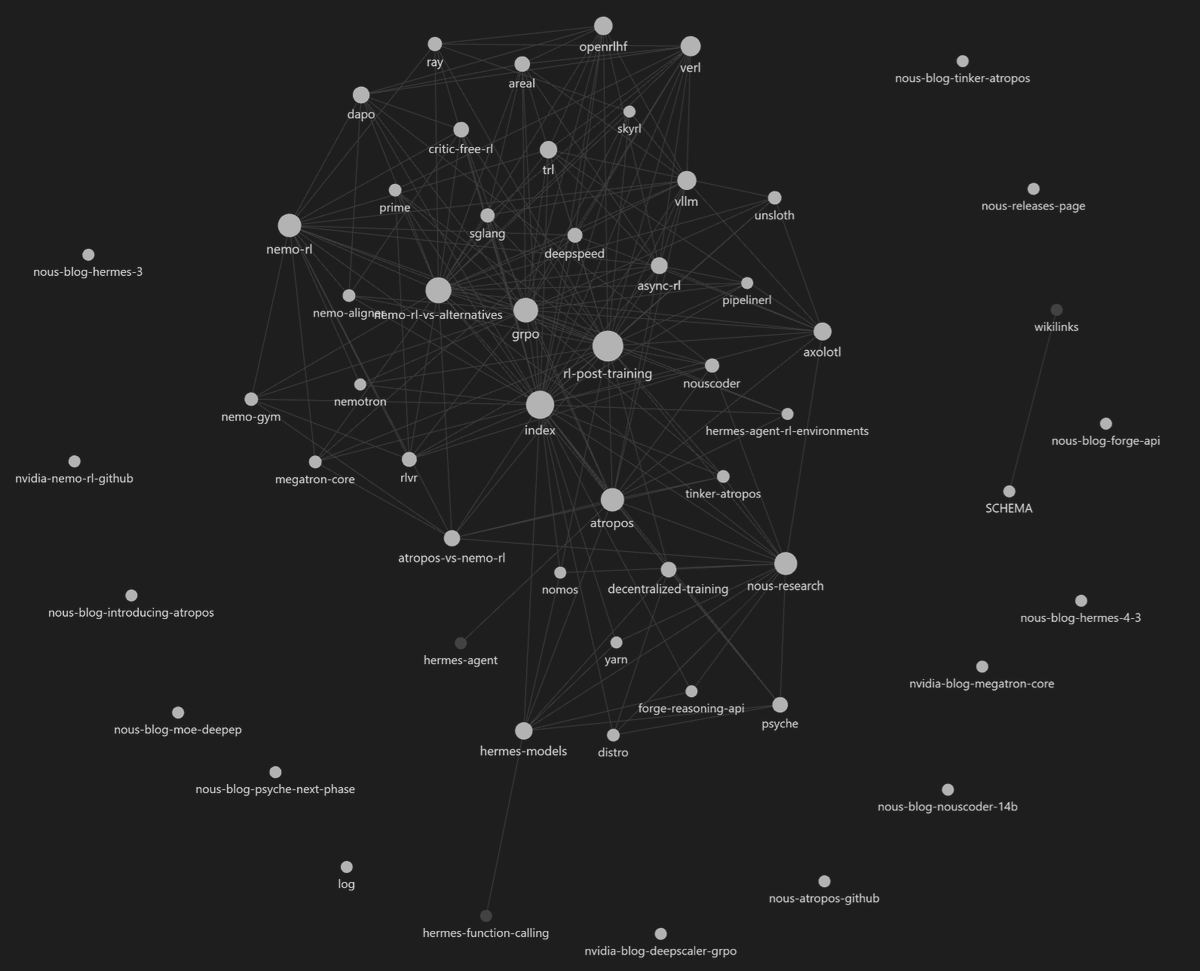

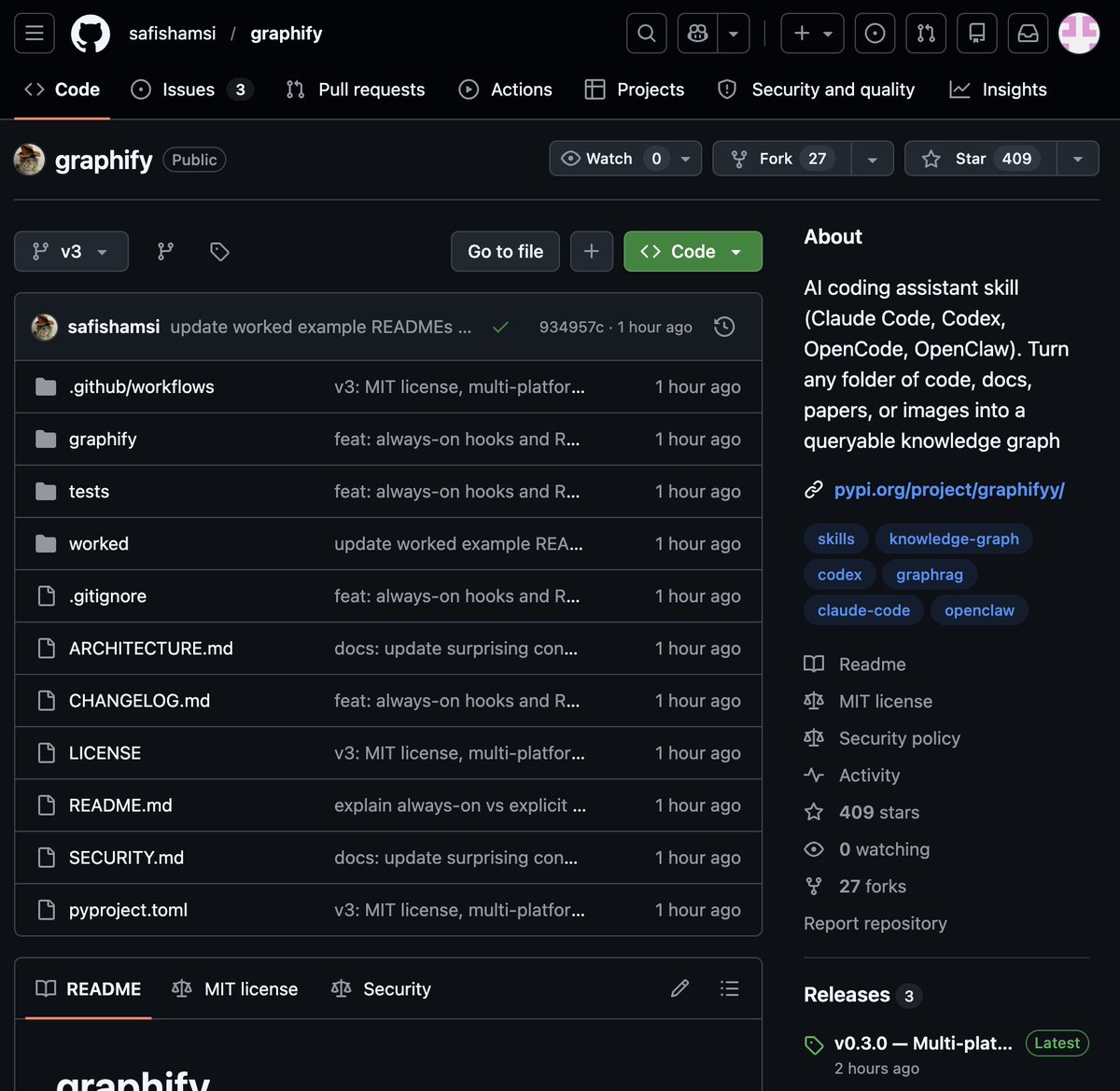

终于解决了Agent不能抓取X长文章的完整正文+互动数据的难题! 我现在做自媒体,经常在X、抖音和小红书刷到一些爆款内容,但是没有时间仔细阅读,下意识就想收藏。但是,我问问在座诸位,有几个人的收藏夹不吃灰。。。 所以为了促进知识的流转性,我给各个平台都做了各种独特的抓取skill,并且把它们给OpenClaw装上,但是,X的长文章一直有个问题,各种方法试过了,不是文章抓不完整,就是互动数据抓不到。 但是我用nitter+xcrawl的方法解决了这个问题。原理就是nitter把X的正文转化为静态页面,然后xcrawl通过行为模拟优化抓取到静态页面的内容,从而达到返回完整正文+互动数据的方法。 nitter的话大家的小龙虾应该都会,xcrawl的链接是这个:xcrawl.com/?keyword=h2lca…,有很慷慨的免费额度,平常拿来抓取X长文章绰绰有余了,快去试试吧!

I built this thing called Clicky. It's an AI teacher that lives as a buddy next to your cursor. It can see your screen, talk to you, and even point at stuff, kinda like having a real teacher next to you. I've been using it the past few days to learn Davinci Resolve, 10/10.