Ronan

3.5K posts

@Ronangmi

investing @Collab_Currency / @Dorm_DAO τ reply guy, dreamer

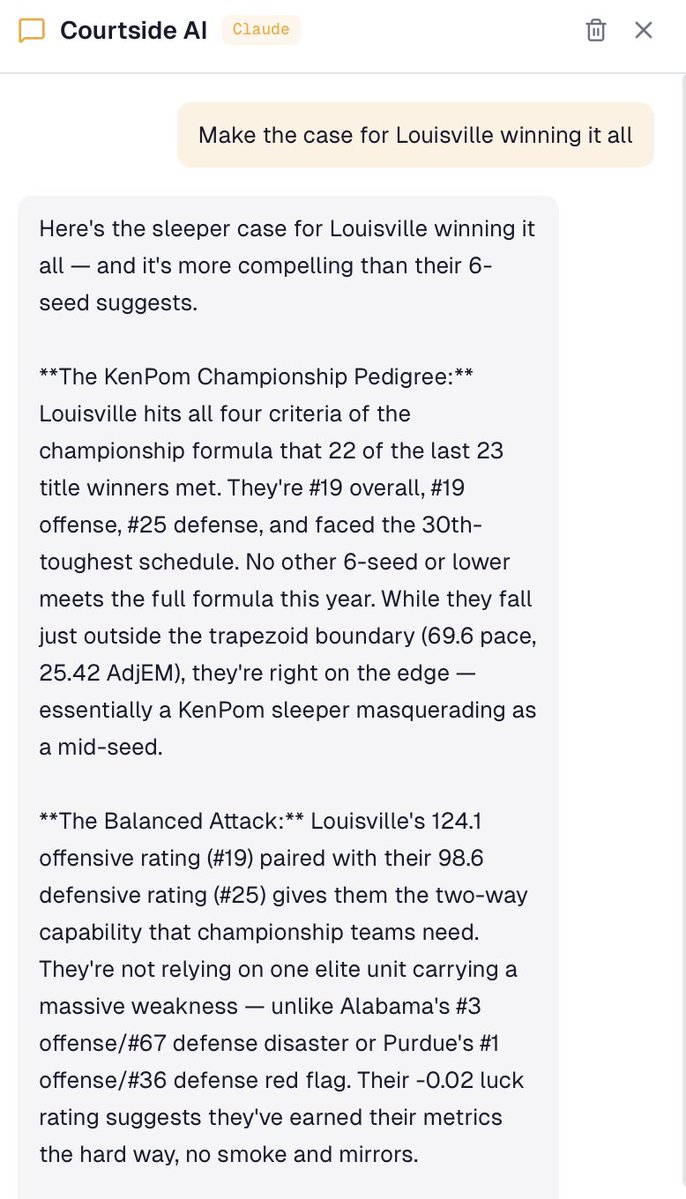

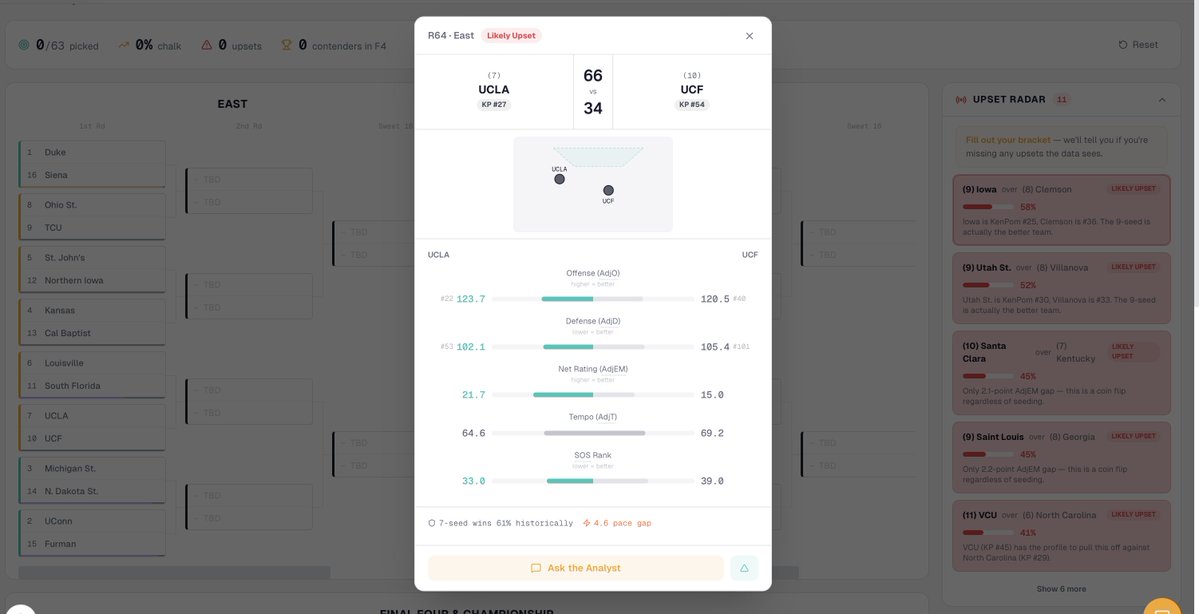

Made a March Madness bracket tool called Courtside. It combines @kenpomeroy ratings with the 'Trapezoid of Excellence' concept from @RyanHammer09 to help you analyze matchups and fill out your bracket. Best viewed on desktop; check it out below! 2026courtside.vercel.app

Tether AI breakthrough

The Tether AI team just released a new version of QVAC Fabric that includes the world's first cross-platform BitNet LoRA framework, enabling billion-parameter AI training and inference on consumer GPUs and smartphones.

Background

Microsoft's BitNet uses a one-bit architecture to dramatically compress models. Traditional LLMs operate on full-precision computation, where weights are stored as high-resolution floating-point numbers. The innovation of BitNet is that it shrinks these weights into a tiny ternary range of only -1, 0, and 1, significantly reducing memory usage and computation. LoRA is a parameter-efficient fine-tuning technique that reduces the number of trainable parameters by up to ninety-nine percent. Together they slash memory and compute requirements. Yet BitNet has mostly been limited to CPU or NVIDIA CUDA backends, and has lacked support for LoRA fine-tuning.

Enter QVAC Fabric: the unlock

With today's QVAC Fabric LLM release, BitNet LoRA fine-tuning and inference work cross-platform for the first time, across GPU vendors and operating systems, using Vulkan and Metal backends. That means support for AMD, Intel, Apple Metal, and mobile GPUs. And for the first time ever, BitNet inference runs efficiently on smartphones using mobile GPUs. On flagship devices, GPU inference is 2 to 11 times faster than CPU inference while using up to 90% less memory than the full-precision models.

The biggest unlock: QVAC Fabric LLM supports BitNet LoRA fine-tuning on heterogeneous GPUs. Our team demonstrated this by fine-tuning models of up to 3.8 billion parameters on flagship phones such as the Pixel 9, S25, and iPhone 16, and models of up to 13 billion parameters on the iPhone 16.

GitHub repositories:
github.com/tetherto/qvac-… : general QVAC Fabric codebase
github.com/tetherto/qvac-… : QVAC Fabric's BitNet-specific knowledge base, architecture docs, and pre-built binaries

What does it mean?

What used to require dedicated GPUs now runs on consumer hardware. This breakthrough is the first real-world signal of a local, private AI that can truly serve the people. And this is just the beginning. In the coming months and years, Tether will relentlessly invest significant resources and capital to research and develop open-source intelligence that can scale and evolve on local devices, providing maximum utility and privacy to its users. The era of Stable Intelligence has just begun. Free as in freedom.
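To make the two techniques named in the post concrete, here is a minimal sketch in plain Python. It is not code from QVAC Fabric or from Microsoft's BitNet. It assumes the absmean scheme (scale by the mean absolute weight, round, clip) as the way weights are mapped to -1, 0, and 1, and it assumes a single square 4096 x 4096 weight matrix with LoRA rank 8 to illustrate the parameter reduction. The function names and the sample weights are hypothetical.

```python
# Illustrative sketch only; names and numbers are assumptions, not QVAC Fabric code.

def ternary_quantize(weights, eps=1e-8):
    """Map full-precision weights to {-1, 0, 1} using absmean scaling.

    Each weight is divided by the mean absolute value of the tensor,
    rounded to the nearest integer, and clipped to the range [-1, 1].
    """
    scale = sum(abs(w) for w in weights) / len(weights) + eps
    quantized = [max(-1, min(1, round(w / scale))) for w in weights]
    return quantized, scale


def lora_trainable_fraction(d_out, d_in, rank):
    """Fraction of parameters that are trained under LoRA for one matrix.

    The frozen matrix has d_out * d_in parameters. LoRA trains only the
    low-rank factors B (d_out x rank) and A (rank x d_in).
    """
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return lora / full


weights = [0.42, -0.07, -0.91, 0.03, 0.58, -0.36]  # hypothetical values
quantized, scale = ternary_quantize(weights)
print(quantized)  # [1, 0, -1, 0, 1, -1]

fraction = lora_trainable_fraction(4096, 4096, 8)
print(f"trainable share: {fraction:.4%}")  # trainable share: 0.3906%
```

Under these assumed shapes, LoRA trains 1/256 of the matrix, a reduction of roughly 99.6%, which is in line with the "up to ninety-nine percent" figure in the post. Real savings depend on the rank and on which layers are adapted.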

1/ Earn on balances with Privy. Connect app balances to curated DeFi vaults and expand revenue opportunities with just a few API calls. Powered by vault infrastructure with @Morpho, with risk strategies from @steakhousefi and @gauntlet_xyz. Support for @aave and @kamino 🔜

The framing of "begging for resources" is wrong, and it's not how the mechanics of the system work.

TAO emissions are just liquidity entering a pool at market price. So they can work as a bootstrapping mechanism early on, and as a tailwind as the subnet matures. Chutes recently hit 0% TAO emissions due to net outflows from the LP, and there was zero impact. The pool is deep enough that they could stay at 0% indefinitely and be completely unfazed.

The second part of the question I'm interpreting as "why not raise VC and keep more of the token supply?" The answer depends on whether you think the VC structure has historically worked for PoW projects. The DePIN chart graveyard from insider/VC/miner sell pressure is a pretty compelling data point against it. So I don't think you can criticize the structure when it's the one working best right now.

Founders choose Bittensor because it gives them the most intelligent miner set for supply-side GTM, inherited trust from a proven standardized ruleset, and a positive-sum ecosystem where every subnet's success lifts the whole thing.
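The "liquidity entering a pool at market price" point can be sketched with a toy model. The code below assumes a fee-free constant-product pool (reserve_tao * reserve_alpha = k) as a stand-in for a subnet pool; that model, the class and method names, and all reserve numbers are assumptions for illustration, not a description of Bittensor's actual implementation. It shows that injecting both assets at the current price leaves the price unchanged while making the pool deeper, so the same sell moves the price less.

```python
# Toy model; the constant-product pool and all numbers are assumptions.
from dataclasses import dataclass


@dataclass
class Pool:
    tao: float    # TAO reserve
    alpha: float  # subnet-token reserve

    def price(self) -> float:
        """Spot price of one subnet token, in TAO."""
        return self.tao / self.alpha

    def inject_at_market_price(self, tao_in: float) -> None:
        """Add TAO plus the matching amount of subnet token at the spot price."""
        alpha_in = tao_in / self.price()
        self.tao += tao_in
        self.alpha += alpha_in

    def price_after_sell(self, alpha_sold: float) -> float:
        """Spot price after selling alpha_sold into the pool (pool not mutated)."""
        k = self.tao * self.alpha
        new_alpha = self.alpha + alpha_sold
        new_tao = k / new_alpha
        return new_tao / new_alpha


shallow = Pool(tao=1_000.0, alpha=10_000.0)
deep = Pool(tao=1_000.0, alpha=10_000.0)
deep.inject_at_market_price(100.0)  # hypothetical emission

# Injection at market price does not move the spot price.
print(shallow.price(), deep.price())

# The same 100-token sell causes a smaller price drop in the deeper pool.
drop_shallow = shallow.price() - shallow.price_after_sell(100.0)
drop_deep = deep.price() - deep.price_after_sell(100.0)
print(drop_shallow > drop_deep)  # True
```

In this model, a pool that is already deep keeps absorbing sells with little price impact even if injections stop, which is the intuition behind the Chutes example in the post.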