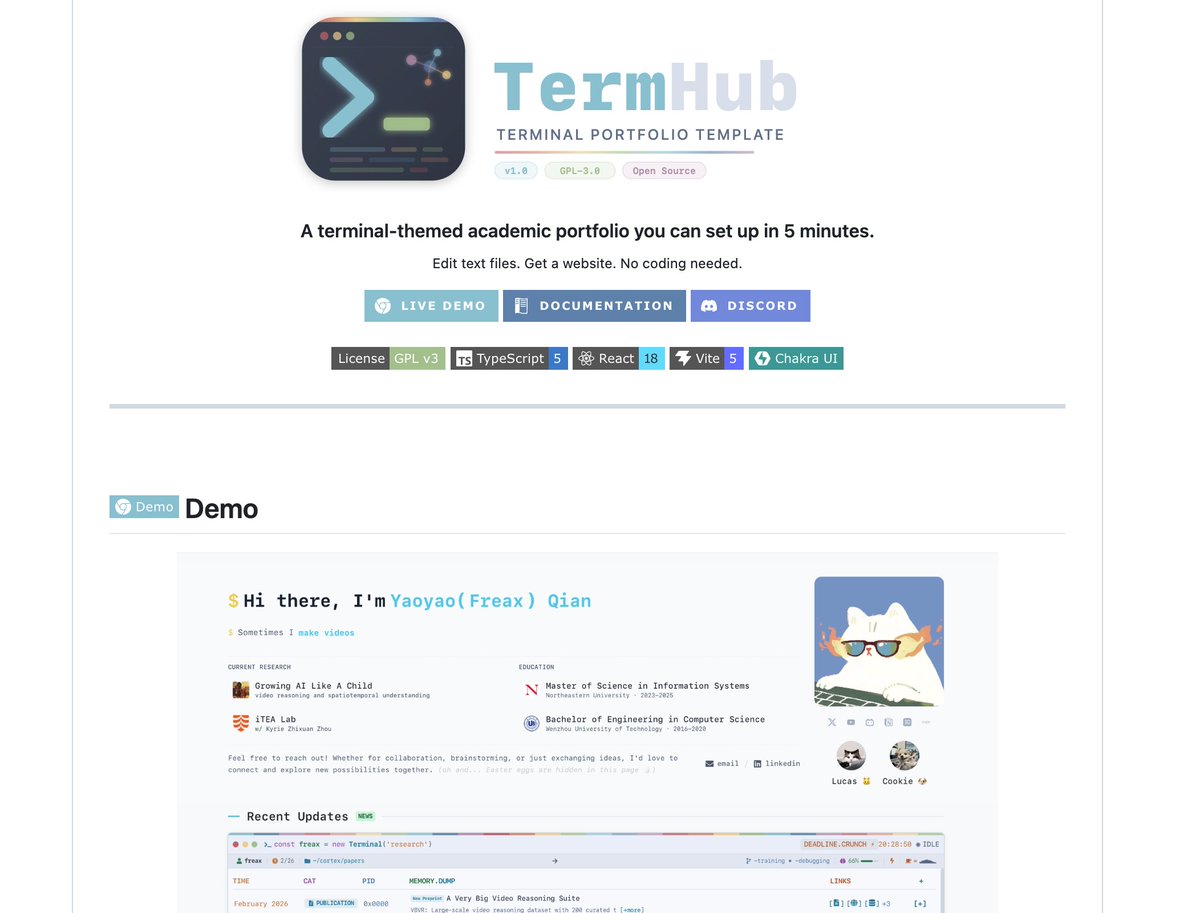

❓ How do you solve grasping problems when your target object is completely out of sight?

🚀 Excited to share our latest research! Check out ThinkGrasp: A Vision-Language System for Strategic Part Grasping in Clutter.

🔗 Site: h-freax.github.io/thinkgrasp_page