Sam Ward

450 posts

@Samward

Investigating & Explaining things | SentinelAi | Sentinel Legal | OpenClaw 🦞 | All views are my own. 🇬🇧 🇺🇸

Introducing Windsurf 2.0. Manage all your agents from one place and delegate work to the cloud with Devin - so your agents keep shipping even after you close your laptop.

Open source is dead. That’s not a statement we ever thought we’d make. @calcom was built on open source. It shaped our product, our community, and our growth. But the world has changed faster than our principles could keep up. AI has fundamentally altered the security landscape. What once required time, expertise, and intent can now be automated at scale. Code is no longer just read. It is scanned, mapped, and exploited. Near zero cost. In that world, transparency becomes exposure. Especially at scale. After a lot of deliberation, we’ve made the decision to close the core @calcom codebase. This is not a rejection of what open source gave us. It’s a response to what risks AI is making possible. We’re still supporting builders, releasing the core code under a new MIT-licensed open source project called cal.diy for hobbyists and tinkerers, but our priority now is simple: Protecting our customers and community at all costs. This may not be the most popular call. But we believe many companies will come to the same conclusion. My full explanation below ↓

@steipete @openclaw I don't think OpenClaw is a reference. It literally doesn't have a proper security model. Nothing on OpenClaw is secure by design.

We just raised a $45M Series B at a $500M valuation led by @a16z and @SalesforceVC to build the knowledge infrastructure for AI

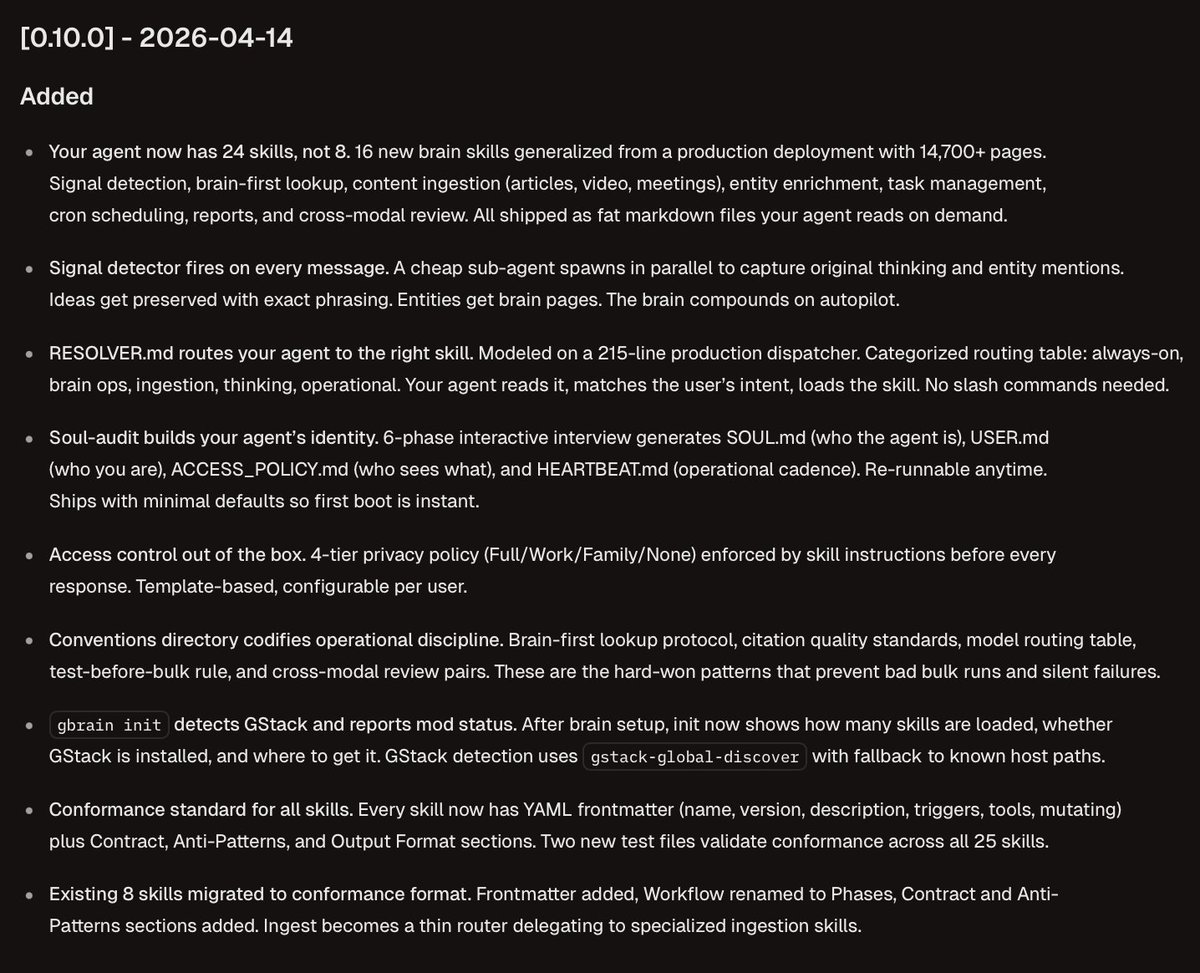

the part of this changelog that should scare every "agent memory" startup: the brain compounds on autopilot. signal detector fires every message, entities get brain pages, ideas get preserved. no explicit "save to memory" step. the agent just gets smarter by being used. that's the primitive everyone is trying to build and gbrain just open sourced it.

The more enterprises I talk to about AI agent transformation, the clearer it is that there is going to be a new type of role in most enterprises going forward. The job is to be the agent deployer and manager in teams. Here’s the rough JD:
This person will need to figure out what the highest-leverage workflows on a team are (either existing or new ones) where agents can actually drive significantly more value for the team and company. In general, it’s going to be in areas where if you threw compute (in the form of agents) at a task you could either execute it 100X faster or do it 100X more times than before. Examples would be processing orders of magnitude more leads to hand them off to reps with extra customer signal, automating a contracting review and intake process, streamlining a client onboarding process to remove as many steps as possible, setting up knowledge bases that the whole company taps into, and so on.
This person’s job is to figure out what the future-state workflow needs to look like to drive this new form of automation, and how to connect up the various existing or new systems in such a way that this can be fulfilled. The gnarly part of the work is mapping structured and unstructured data flows, figuring out the ideal workflow, getting the agent the context it needs to do the work properly, figuring out where the human interfaces with the agent and at what steps, managing evals and reviews after any major model or data change, and running and managing the agents on an ongoing basis, tracking KPIs, and so on.
The person must be good at mapping the process and understanding where the value could be unlocked, be relatively technical, and have full autonomy to connect up business systems and drive automation. This means they’re comfortable with skills, MCP, CLIs, and so on, and the company believes it’s safe for them to do so. They must also be great operationally and at business.
It may be an existing person repositioned, or a totally net new person in the company. There will likely need to be one or more of these people on every team, so it’s not a centralized role per se. It may roll up into IT or an AI team, or live in the function and just have checkpoints with a central function. This would also be a fantastic job for next-gen hires who are leaning into AI and are technical. And for anyone concerned about engineers in the future, this will be an obvious area for these skills as well.

We’re expanding Trusted Access for Cyber with additional tiers for authenticated cybersecurity defenders. Customers in the highest tiers can request access to GPT-5.4-Cyber, a version of GPT-5.4 fine-tuned for cybersecurity use cases, enabling more advanced defensive workflows. openai.com/index/scaling-…