Sematic retweeted

The only playground that lets you query dozens of open-source and proprietary models at once and compare quality, cost, and throughput. Get started for free 👇

Airtrain AI@AirtrainAI

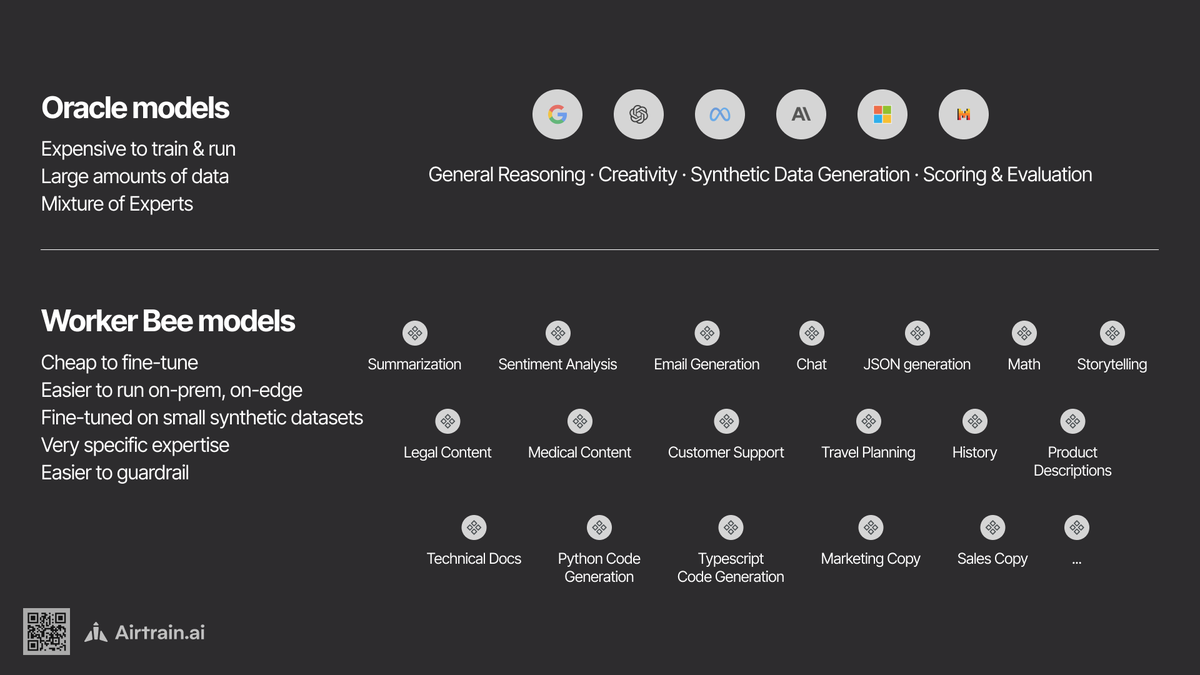

We are excited to announce the Airtrain.ai March 2024 LLM Playground release & Wednesday’s @ProductHunt launch! 🚀🚀🚀

✅ Simultaneously prompt multiple models
✅ 18 supported LLMs
✅ Inference Metrics
✅ Persisted Sessions

👉 Learn More: airtrain.ai/blog/the-llm-p…
