Sergio Martínez

74K posts

@SuperSerch

Java developer with a twist of security. DevOps & OpenStack enthusiast. OWASP member. Opinions my own.

47.743017, -86.934128 · Joined May 2009

550 Following · 1.2K Followers

Sergio Martínez retweeted

NEW POST

Modern hardware is fast, but software often fails to leverage it. Caer Sanders guides his work with mechanical sympathy. He distills this into principles: predictable memory access, awareness of cache lines, single-writer, natural batching.

martinfowler.com/articles/mecha…

English

Sergio Martínez retweeted

🎙️🔴 Now 𝐥𝐢𝐯𝐞: the press conference from the Bernabéu with Vincent Kompany.

👉 youtube.com/live/NeZfrOS0K…

Sergio Martínez retweeted

🚨 Holy shit… Deloitte charged $1.6 million for a healthcare report filled with AI-hallucinated citations.

This is the second time in two months they’ve been caught.

First an Australian government agency. Now a Canadian province’s Department of Health.

And their response? They “stand by the conclusions.”

Let me translate that for you: “The AI made up the sources, but trust us, the advice is still good.”

That’s a $1.6 million report. For a healthcare system. With fake citations that nobody at Deloitte bothered to verify before submitting.

Not an intern’s draft.

The final deliverable.

The Australian incident was supposed to be a wake-up call.

Deloitte even partially refunded that government for the errors.

You’d think after publicly embarrassing themselves once, someone would have implemented a basic fact-checking step before hitting send on the next million-dollar engagement.

They didn’t.

And here’s what makes this story bigger than Deloitte.

Every major consulting firm is racing to integrate AI into their workflows. McKinsey, BCG, Bain, Accenture.

They’re all doing it. Because AI lets them produce reports faster with fewer junior analysts, which means higher margins on the same $500/hour billing rates.

But the entire consulting business model is built on one thing: trust. You’re paying for credibility.

You’re paying so that when you hand the report to your board or your minister, nobody questions the sources. The moment that trust breaks, the math changes completely.

Why pay $1.6 million for AI-generated analysis with fake citations when you could run the same prompts yourself for $20/month and at least know to check the sources?

That’s the real disruption nobody’s talking about. AI isn’t going to replace consulting firms by being smarter than them.

It’s going to replace them by revealing that a huge percentage of consulting work was always just expensive research and formatting.

And now the clients have access to the same tools.

Deloitte’s problem isn’t that they used AI. It’s that they used AI the way most people use AI: paste in a request, take the output at face value, ship it.

No verification layer.

No human review of citations.

No system.

The firms that survive this era won’t be the ones who use AI the fastest. They’ll be the ones who build actual verification systems around AI output. The ones who treat AI as a first draft, not a final product.

$1.6 million. Fake citations. Twice in two months. And they stand by the conclusions.

The consulting industry’s biggest threat isn’t AI.

It’s clients realizing they don’t need to pay someone else to hallucinate.

Sergio Martínez retweeted

We're living in an era where you open Twitter and an astronaut tweets a photo from space on his way to the Moon.

What a moment to be alive ✨

Reid Wiseman @astro_reid

There are no words.

Sergio Martínez retweeted

Or better yet, use your work computer just for work!

SwiftOnSecurity @SwiftOnSecurity

Or recommendations

Sergio Martínez retweeted

Germany now requires all men aged 17-45 who want to leave the country for longer than 3 months to obtain a permit.

"Drastic change to conscription: Men who want to leave Germany for longer periods will need approval"

"All men over 17 and under 45 years old who want to leave Germany for longer than three months must obtain a permit from the Bundeswehr (German Armed Forces). It doesn't matter whether someone has planned a semester abroad, wants to take up a job abroad, or is planning a backpacking trip around the world: above all, there is a mandatory visit to the Bundeswehr's career center."

Sascha 🍉|🇻🇦✝️🌹🕊️ @Pasolinis_Asche

"Freiheit" ("Freedom")

Sergio Martínez retweeted

Hahaha, Outlook inbox not working on Earth OR in space.

Marcus House @MarcusHouse

Yes... In case anyone was wondering, Microsoft still sucks in space.

Sergio Martínez retweeted

They always have "other data"; they never take responsibility.

And still, there are many who keep applauding and justifying them 🤦🏻‍♀️🤦🏻‍♀️🤦🏻‍♀️🤦🏻‍♀️🤦🏻‍♀️🤦🏻‍♀️🤦🏻‍♀️🤦🏻‍♀️🤦🏻‍♀️🤦🏻‍♀️

Latinus @latinus_us

Sheinbaum government lashes out at UN committee: calls the report urging an investigation into the disappearances "biased." #Latinus #InformaciónParaTi latinus.us/mexico/2026/4/…

Sergio Martínez retweeted

Q: Why does the US need refugees?

A: Because America, unlike most countries, is a country of "principles". It says so in the Declaration of Independence and the Constitution.

"Principles" are the things you defend even when they don't serve your immediate best interest. For example, you defend "free speech" not for yourself, but for speech you hate.

America doesn't "need" refugees, but we accept refugees because we have principles. We help those suffering from oppression.

It's the same with all the other western democracies. We do so because we are the good guys with principles.

Now, we can have a legitimate conversation about whether immigrants were gaming the system, the impact of letting in too many refugees, or even the problem when refugees can't fit in with our country.

But we still let in some refugees because we are principled.

Tain Wailller @taylo54034

@jaddy789987 @robertgraham Why does the US need refugees? White people, as a percentage of the population, have been steadily decreasing and will become a minority group. You might think that is a good thing, while others might not.

Sergio Martínez retweeted

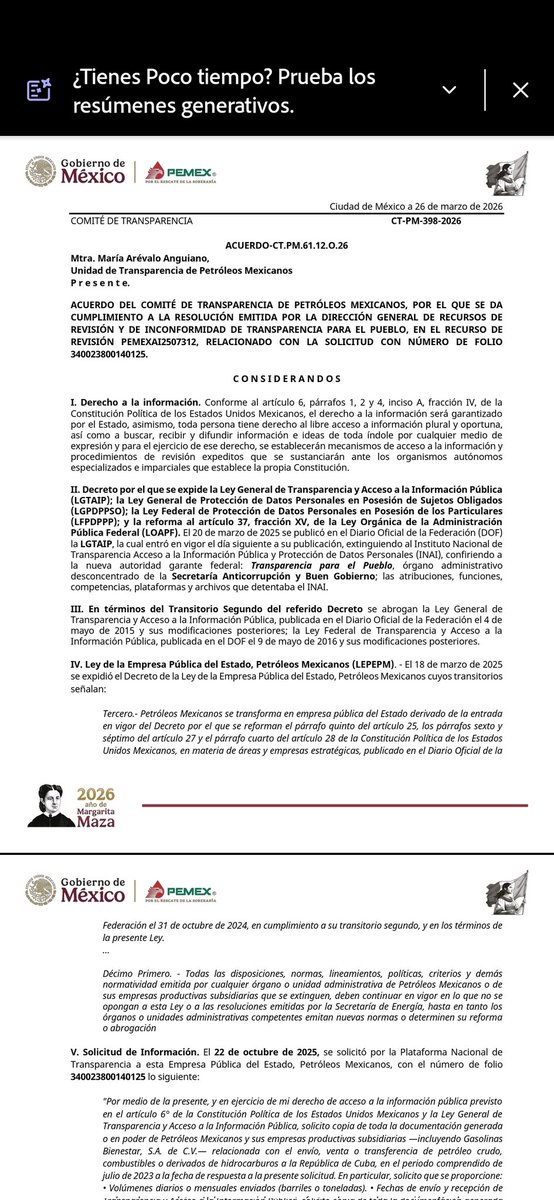

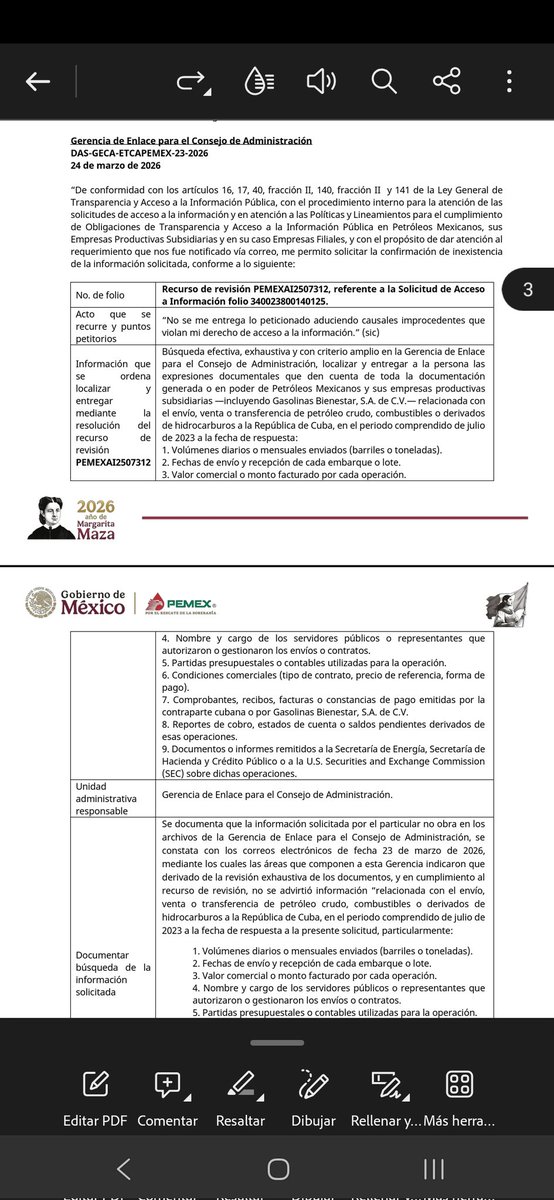

PEMEX refused to disclose all documentation on shipments of crude oil and fuels to Cuba from July 2023 to date, including Gasolinas Bienestar. It says it "cannot find the information" (volumes, dates, values, authorizations, contracts, payments, and reports).

@drrocefi