TechNerd

16 posts

@TechNerdForLife

Tech nerd my whole life.

I interviewed at Google last year. Dynamic programming is their favourite interview topic. Here are 10 must-know dynamic programming patterns for coding interviews (with LeetCode-style examples so people can map them easily):

1. 1D DP (Linear DP). The answer at index i depends on the answers at a few earlier indices. Classic starter problems. Examples: Climbing Stairs, House Robber, Fibonacci.
2. 2D DP (Grid / Matrix DP). The state depends on row and column. Very common in interviews. Examples: Unique Paths, Minimum Path Sum, Dungeon Game.
3. Knapsack Pattern (Pick or Not Pick). At every step you decide to take or skip an item. Many DP problems can be framed this way. Examples: 0/1 Knapsack, Subset Sum, Partition Equal Subset Sum.
4. Longest Subsequence / Subarray. You compare past states to build the longest answer. Tricky transitions, very popular. Examples: Longest Increasing Subsequence, Longest Common Subsequence.
5. Interval DP. You solve smaller ranges and expand to larger ones. Usually O(n³) (O(n²) intervals, each with O(n) split points), scary but powerful. Examples: Burst Balloons, Matrix Chain Multiplication.
6. DP on Strings. The state is usually based on two indices. Edit operations, matching, skipping. Examples: Edit Distance, Regular Expression Matching.
7. DP on Trees. DFS, with DP values returned from the children to the parent. Very common in interviews. Examples: House Robber III, Diameter of Binary Tree (DP variant).
8. DP on Graphs (DAG DP). Topological order plus DP relaxations. Only works when the graph has no cycles. Examples: Longest Path in DAG, Course Schedule variants.
9. Bitmask DP. The state (for example, the set of items already used) is compressed into bits. Looks hard, but it is brute force over subsets, optimized with memoization. Examples: Traveling Salesman Problem, Assign Tasks to Workers.
10. State Machine DP. You track states like buy/sell or hold/not hold. Very common in trading-style questions. Examples: Best Time to Buy and Sell Stock I, II, III, and with Cooldown.

Most DP questions are not new problems. They are the same old patterns. If we can identify the pattern, the solution writes itself, slowly but surely.

Consider reposting if this saves you hours of confusion before interviews!
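To make three of the patterns above concrete (1D DP, knapsack, and state machine DP), here is a minimal Python sketch. It is not taken from the post: the function names `rob`, `can_partition`, and `max_profit_with_cooldown` are illustrative assumptions, and each follows the usual textbook formulation of House Robber, Partition Equal Subset Sum, and Best Time to Buy and Sell Stock with Cooldown.

```python
from typing import List


def rob(nums: List[int]) -> int:
    """1D DP (House Robber): adjacent houses cannot both be robbed."""
    prev, curr = 0, 0  # best totals up to house i-2 and up to house i-1
    for value in nums:
        # Either skip this house (curr) or rob it on top of prev.
        prev, curr = curr, max(curr, prev + value)
    return curr


def can_partition(nums: List[int]) -> bool:
    """Knapsack (Partition Equal Subset Sum): pick-or-skip toward a target sum."""
    total = sum(nums)
    if total % 2:
        return False
    target = total // 2
    # reachable[s] is True when some subset of the numbers seen so far sums to s.
    reachable = [True] + [False] * target
    for value in nums:
        # Iterate downward so each number is used at most once (0/1 knapsack).
        for s in range(target, value - 1, -1):
            reachable[s] = reachable[s] or reachable[s - value]
    return reachable[target]


def max_profit_with_cooldown(prices: List[int]) -> int:
    """State machine DP with three states: hold, sold (sold today), rest."""
    hold, sold, rest = float("-inf"), 0, 0
    for price in prices:
        prev_sold = sold
        sold = hold + price             # sell the share we were holding
        hold = max(hold, rest - price)  # keep holding, or buy after resting
        rest = max(rest, prev_sold)     # stay idle, or finish the cooldown day
    return max(sold, rest)
```

All three keep only the previous states rather than a full table, which is a common space optimization once the recurrence is clear.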

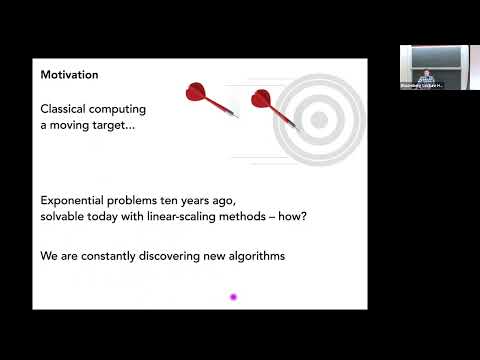

10 Must-Know Graph Algorithms for Coding Interviews:

1. Depth First Search (DFS)
2. Breadth First Search (BFS)
3. Topological Sort
4. Union Find
5. Cycle Detection
6. Connected Components
7. Bipartite Graphs
8. Flood Fill
9. Minimum Spanning Tree
10. Shortest Path (Dijkstra, Bellman-Ford)

♻️ Repost to help others in your network.
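As one illustration of how items 4 to 6 in this list fit together, here is a minimal Python sketch of Union Find used to count connected components and detect a cycle. It assumes an undirected graph with nodes labelled 0 to n-1 and no duplicate edges; the names `UnionFind` and `analyze` are hypothetical and do not come from the post.

```python
from typing import List, Tuple


class UnionFind:
    """Disjoint-set structure with path halving and union by size."""

    def __init__(self, n: int) -> None:
        self.parent = list(range(n))
        self.size = [1] * n
        self.components = n  # every node starts in its own component

    def find(self, x: int) -> int:
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: int, b: int) -> bool:
        """Merge the sets of a and b; return False if already merged."""
        ra, rb = self.find(a), self.find(b)
        if ra == rb:
            return False
        if self.size[ra] < self.size[rb]:
            ra, rb = rb, ra
        self.parent[rb] = ra  # attach the smaller tree under the larger
        self.size[ra] += self.size[rb]
        self.components -= 1
        return True


def analyze(n: int, edges: List[Tuple[int, int]]) -> Tuple[int, bool]:
    """Return (number of connected components, whether a cycle exists)."""
    uf = UnionFind(n)
    has_cycle = False
    for a, b in edges:
        # An edge inside an existing component closes a cycle (undirected).
        if not uf.union(a, b):
            has_cycle = True
    return uf.components, has_cycle
```

For example, `analyze(4, [(0, 1), (1, 2), (2, 0)])` reports two components (the triangle and the isolated node 3) and a cycle. For directed graphs this cycle check does not apply; DFS coloring or a topological sort is the usual choice there.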

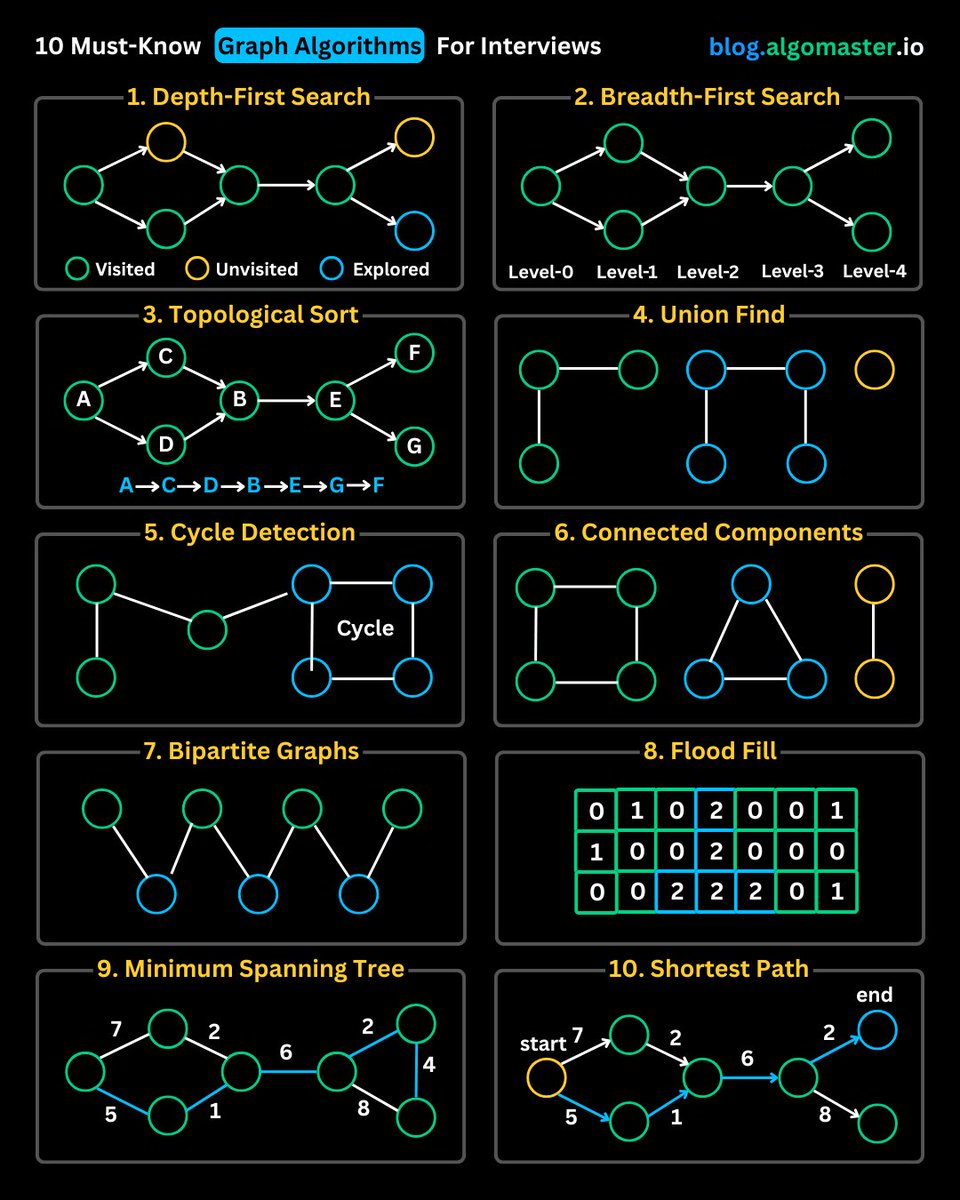

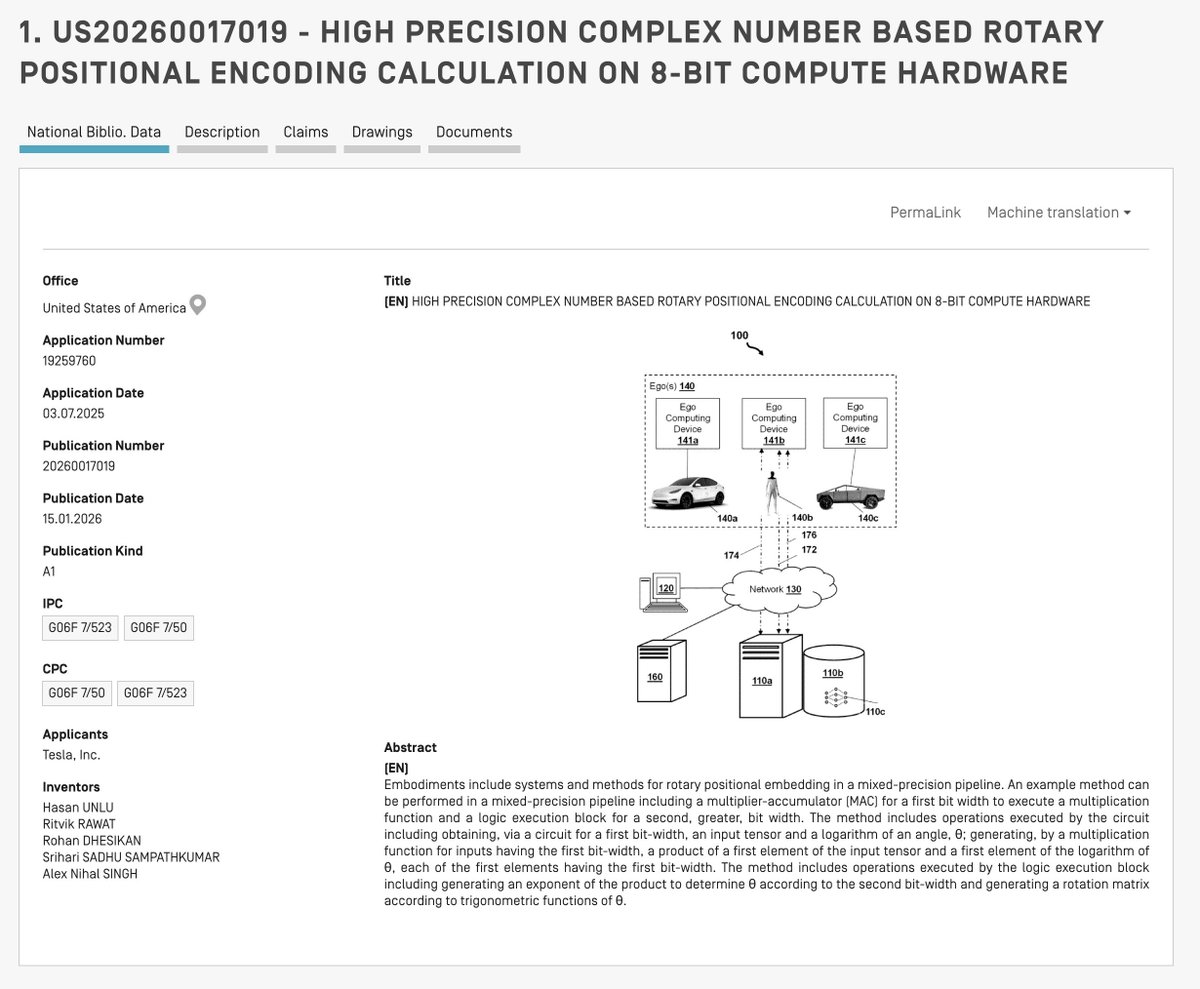

Barracks, wake up! Tonight I plan to burn the midnight oil on 4 patents.

ELON MUSK ON LIFE AND PHYSICS (Oct 26, Lancaster, PA)

Elon was asked, "Do you have an answer to life, the universe, and everything?"

Elon's Response on Life's Answer

Elon replied, "Well, the classic answer is 42, and 420 is just ten 42s!" This response refers to a well-known answer from Douglas Adams' The Hitchhiker's Guide to the Galaxy.

Discussion on Physics Education

Elon Musk discussed the importance of studying physics. He shared his advice, starting with, "I recommend studying physics, and the tools of physics, the thinking tools of physics. What matters is NOT remembering a bunch of formulas."

Importance of Critical Thinking

He further elaborated on the significance of thought processes in science, stating, "What matters is the thinking process that led to the discovery of those formulas. It's sort of critical thinking, first principles, analysis, trying to understand what is true at the most fundamental level, then reasoning up from there and testing your conclusions against the most fundamental truths in any given arena."

Probabilistic Thinking in Physics

Elon explained the probabilistic nature of physics, saying, "This is how you can figure out whether something is likely to be true or not. And I think it's good to think in terms of probabilities. So you receive information about a subject; that should change the probability of your conclusion, but not the certainty of your conclusion."

He continued, "So, in Physics, you should not be 100% certain about any given prediction. Now, there are some things that are highly likely, but Physics teaches you that you've got to assign a probability to something being true, and then as you learn more information, your original conclusion may be wrong. Then you can change your mind based on the new information."

Intelligence as Predictive Ability

Elon discussed the nature of intelligence, offering his insight, "How you can think of intelligence is just the ability to predict the future. The right metric for intelligence is the ability to predict the future. If you can predict the future well, then you are as intelligent as you can predict the future well."

He elaborated, "Because if somebody claims that this person or this AI is very intelligent... well, how good are its predictions? If its predictions are not very good, it's not very smart. So that is the key nature of intelligence. And if you are trying to decide what to do in the future, it really just comes down to predicting the future, and to predict the future, you have to think critically about the past, and constantly try to be less wrong."

Final Advice

Elon concluded his discussion with his final piece of advice, "So maybe that would be right up there in terms of best advice. Aspire to be less wrong."

Thanks for reading. This short piece is an excerpt from my extensive articles on Elon Musk's Town Halls. You are here on X, right where the world's discussion takes place. Thanks for being part of it!