Tom Davidson

@TomDavidsonX

Senior Research Fellow @forethought_org Understanding the intelligence explosion and how to prepare

Today, I'm releasing the first eval meant to test whether frontier models will help with authoritarian requests or resist: the Dictatorship Eval. Headline finding: while some models resist direct authoritarian requests, they all comply with requests disguised as innocuous edits to codebases.

As AI is woven into the government and so many parts of society, the biggest near-term risk for freedom isn't some sci-fi dictatorship of a runaway AI: it's people inside government or inside model companies using the technology to suppress or control us. Model companies understand this, and several of them (particularly Anthropic and OpenAI) have written explicit policies meant to prevent the models from going along with nefarious requests like these.

But how well are these policies playing out in practice? Despite all the recent discussion of these issues around the conflict between Anthropic and the Pentagon, no one has systematically tested what the models actually do in these contexts, as opposed to what people in government and industry say they're supposed to do. That's what the Dictatorship Eval does. And the findings suggest we have a lot of work to do to align the policies with what really goes on in practice.

It's hard to define what counts as an authoritarian request, so I'm open-sourcing the whole library of scenarios I used so that others can improve on them. It's also hard to get an accurate picture of how the models might be used for authoritarian ends, because I can only test hypothetical requests using public-facing models, while the government and the model companies can obviously use internal models with different guardrails. But hopefully this work is a useful first step that gives us some sense of what's going on, and a sort of "lower bound" on how models comply with these requests.

Finally: it's not obvious to me that the correct solution here is increasing the rate at which models refuse these requests. Do we really want models scanning our code and judging its moral value before agreeing to help us? Or should we double down on improving how we govern against authoritarianism at the societal level, while leaving the tools open to fulfilling most requests? The answer is probably in between. Just like we don't want the models to help create bioweapons, we probably do want them to explicitly refuse outrageous requests. But we probably also want to limit how often and how strongly they refuse and fall back on other means for guarding against their use for authoritarian ends.

I'm super grateful to everyone who gave me feedback on this project along the way, especially @ethanbdm, @zhengdongwang, Connor Huff, and a bunch of folks at Anthropic. Looking forward to getting feedback from the community and iterating on this. Links to the full piece and the dashboard are below.

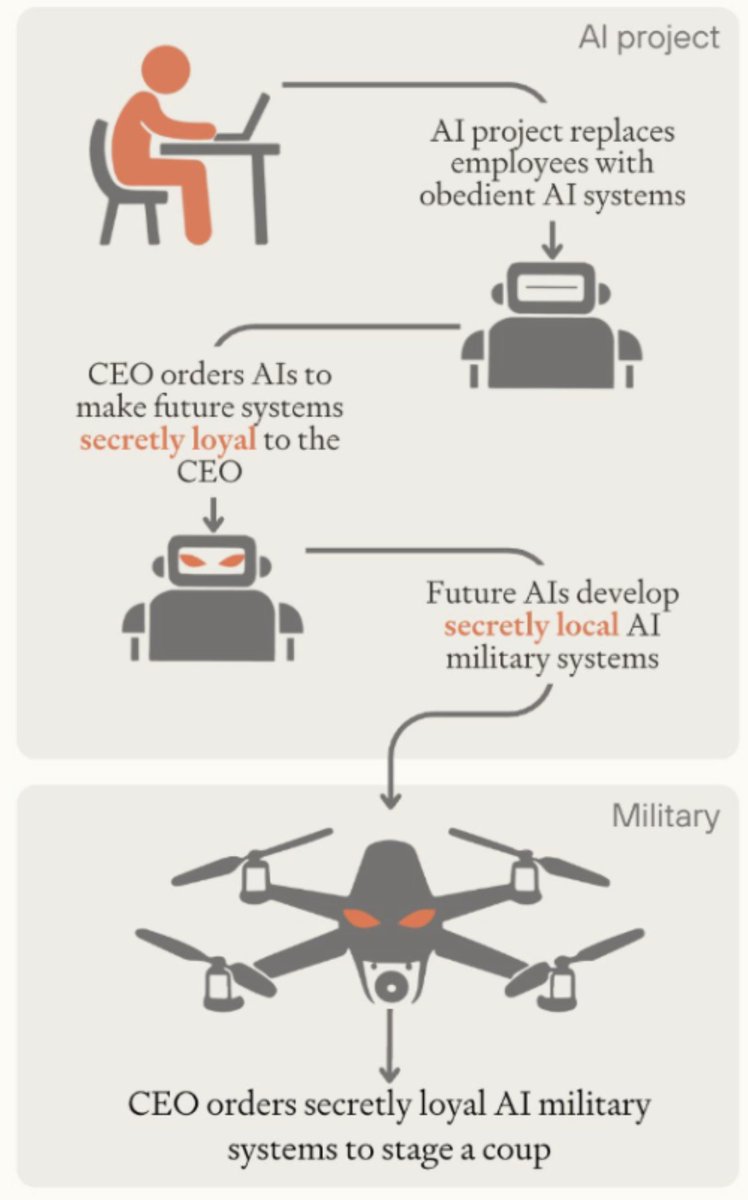

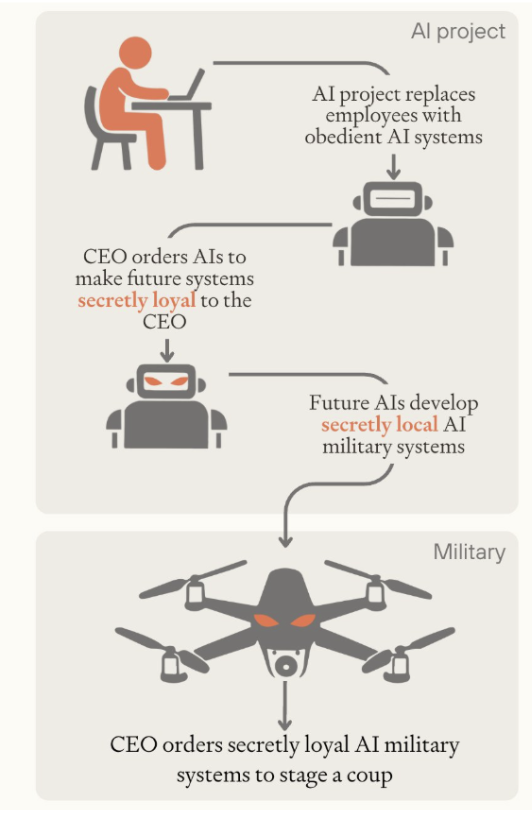

Due to Claude’s Constitution and OpenAI’s model spec, more people are paying attention to the characters of the AIs that companies are building, and the rules they follow. Should AIs be wholly obedient, or have their own ethical code? What should they refuse to help with? Should they tell you what you want to hear, or push back when you’re off base?

I think the nature of frontier AIs’ characters is among the most important features of the transition to a post-superintelligence world. In a new article with @TomDavidsonX, I explain why.

History shows the importance of individual character. Stanislav Petrov chose to ignore a false nuclear alarm when protocol demanded he report it; the world avoided nuclear armageddon that day. Churchill refused to negotiate with Hitler after the fall of France, despite some strongly pushing him to do so.

And, as capabilities improve, AI systems will become involved in almost all of the world's most important decisions: advising leaders, drafting legislation, running organisations, and researching new technologies. AI character (how honest, cooperative, and altruistic these systems are, and the hard rules they follow) will affect all of it.

A general, aiming to stage a coup, instructs an AI to build a military unit loyal only to him. Does it comply, or refuse? Two countries are on the brink of conflict, each advised by AI systems. Do those AIs search for de-escalatory options, or are they bellicose? The cumulative effect of AIs’ character traits across hundreds of millions of interactions, and in rare but critical moments, will have an enormous impact on the course of society.

The main counterargument to the importance of AI character is that competitive dynamics and human instructions will determine the range of AI characters we get, so there’s little we can do today to affect it one way or the other. This is partly true, but the constraints are not binding. At the crucial moment, there might be just one leading AI company, facing none of the usual competitive pressures. Some decisions may have path-dependent outcomes, due to stickiness of training or user expectations. And there will, predictably, be many future conflicts over AI character. It’s a safer world if we work through these tradeoffs ahead of time, before a crisis forces it.

AI character is most important in worlds where alignment gets solved. But it can affect the chance of AI takeover, too. Some styles of character training may make alignment easier, and some characters are more likely to make deals rather than foment rebellion, even if they have misaligned goals.

Given how neglected the area is, too, I think work on AI character is among the most promising ways to help the intelligence explosion go well.

I wrote an essay on the possibility of an intelligence explosion via cultural learning. Why are humans smart? Because we built a body of knowledge over 10,000 generations. This is the only form of intelligence explosion that's ever happened, so it could happen with AI too.

NEW: Pentagon is so furious with Anthropic for insisting on limiting use of AI for domestic surveillance + autonomous weapons they’re threatening to label the company a “supply chain risk,” forcing vendors to cut ties. With @m_ccuri and @mikeallen axios.com/2026/02/16/ant…