Zichen Wang

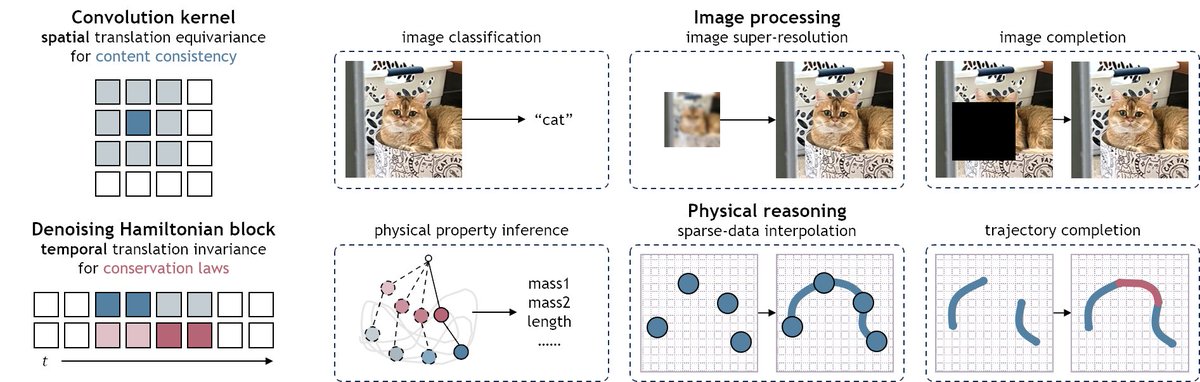

Most multi-view reconstruction models need full supervision. We show they can self-improve without any ground truth labels. Introducing SelfEvo: Self-Improving 4D Perception via Self-Distillation. Up to +36.5% in video depth, +20.1% in camera estimation, zero annotation.

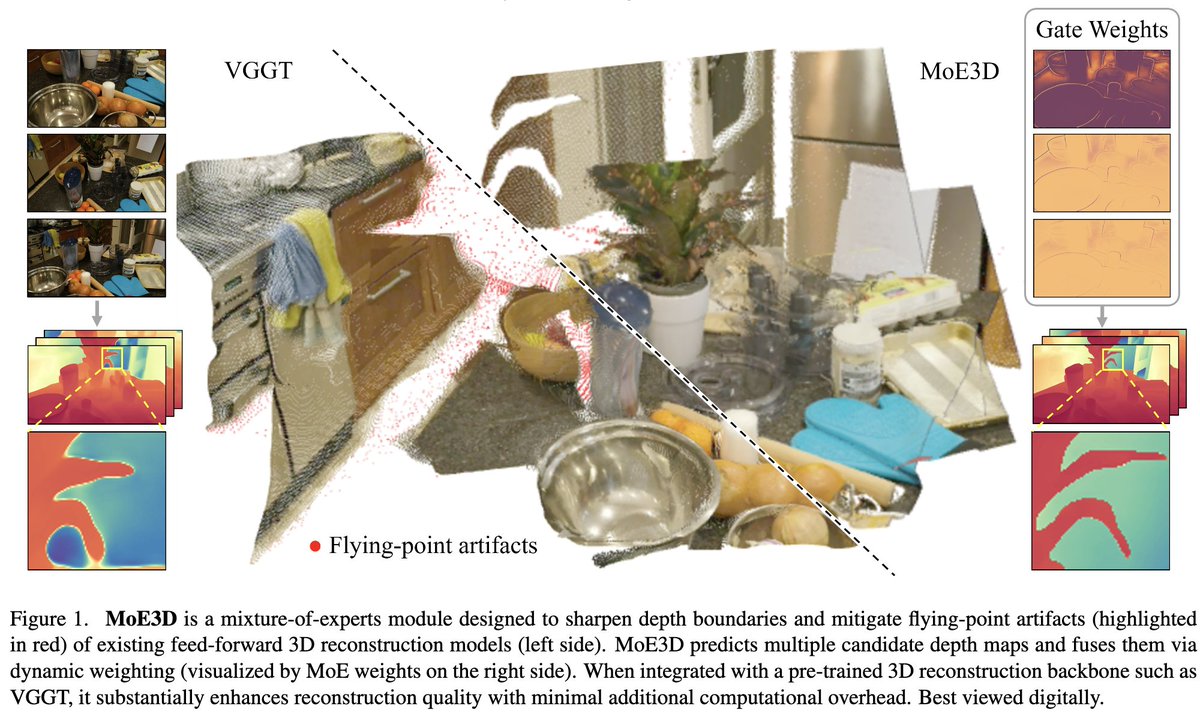

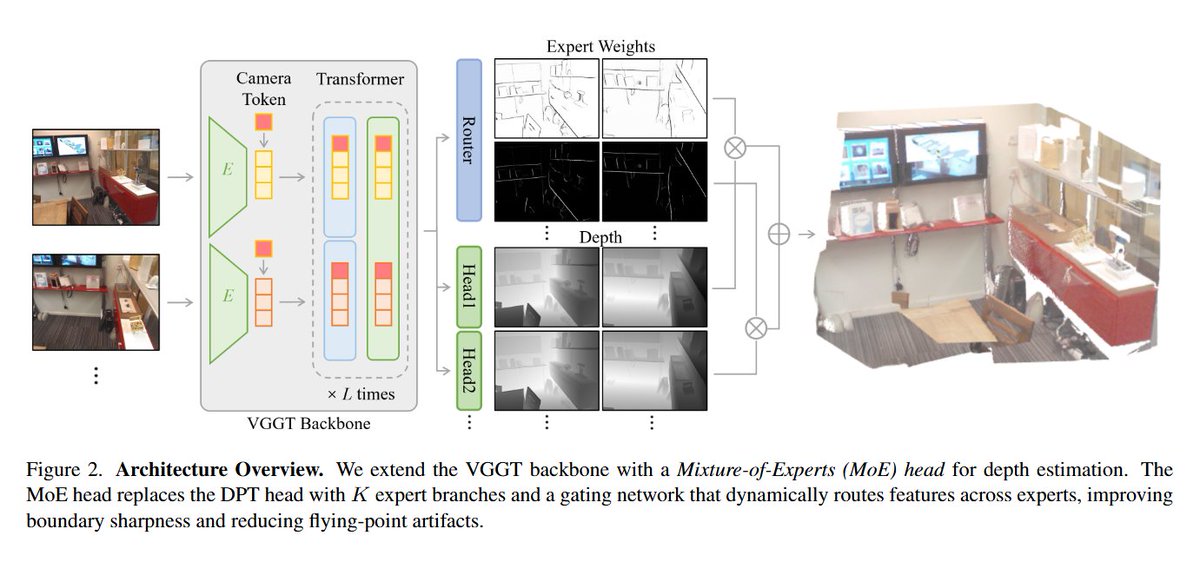

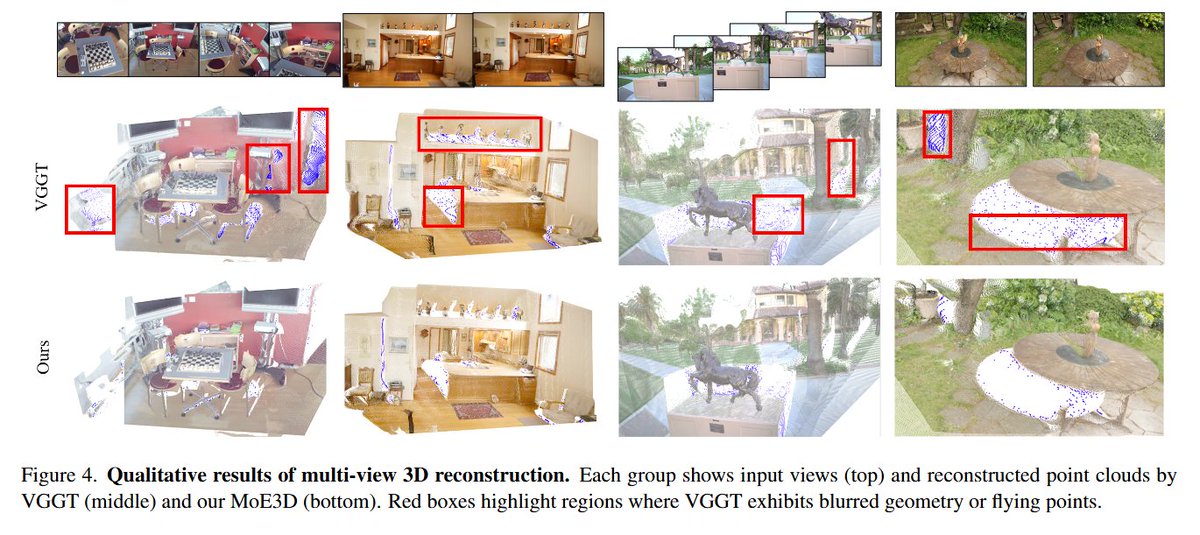

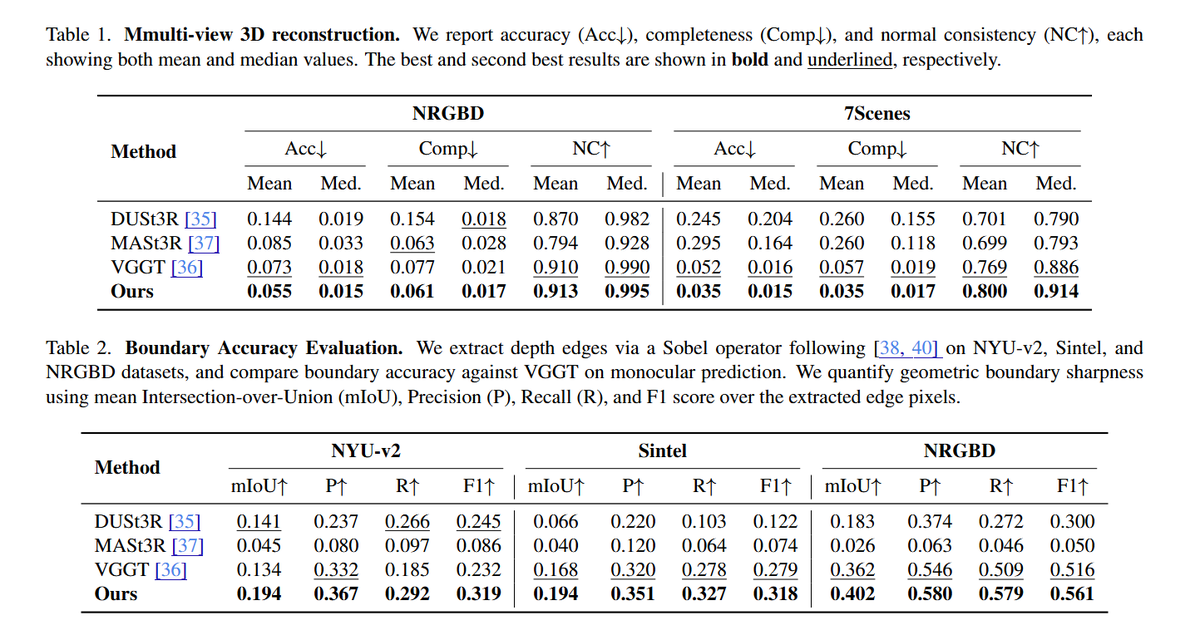

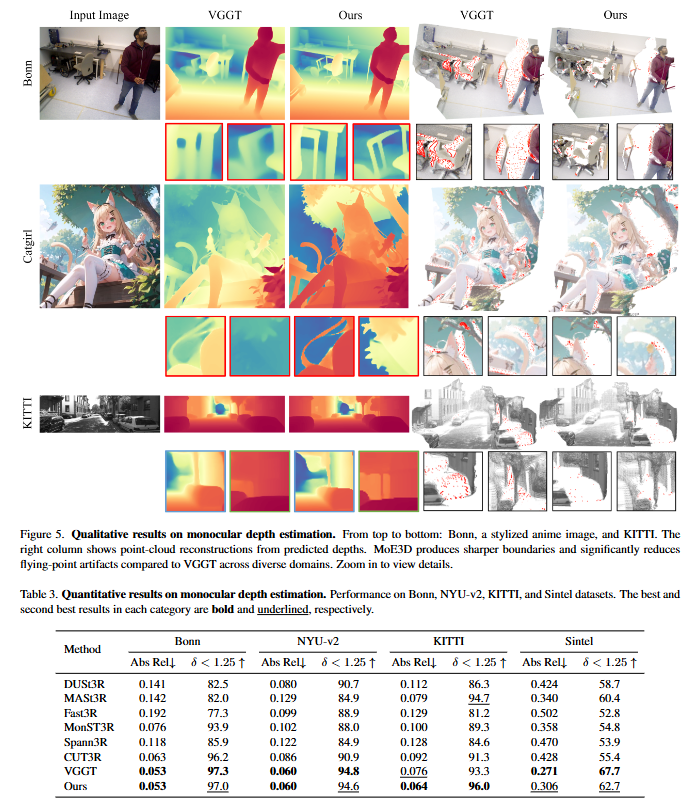

MoE3D: A Mixture-of-Experts Module for 3D Reconstruction. @Zichen2501, @AngCao3, Liam J. Wang, @jjpark3D. tl;dr: multiple depth predictions and weights -> softmax-weighted fusion -> depth estimation. arxiv.org/abs/2601.05208
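The tl;dr pipeline can be sketched in a few lines. This is only an illustration of softmax-weighted fusion in general, not the paper's implementation: the function names `softmax` and `fuse_depth`, the flat per-pixel list layout, and the toy numbers are all assumptions made for the example.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a short list of scores."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def fuse_depth(expert_depths, expert_logits):
    """Fuse K candidate depth maps into one (hypothetical helper).

    expert_depths[k][i]: depth predicted by expert k at pixel i.
    expert_logits[k][i]: unnormalized weight for expert k at pixel i.
    """
    num_pixels = len(expert_depths[0])
    fused = []
    for i in range(num_pixels):
        # Per-pixel weights over the K experts sum to 1.
        weights = softmax([logits[i] for logits in expert_logits])
        fused.append(sum(w * d[i] for w, d in zip(weights, expert_depths)))
    return fused

# Toy example: two experts, two pixels, equal weights at every pixel.
depths = [[1.0, 2.0], [3.0, 4.0]]
logits = [[0.0, 0.0], [0.0, 0.0]]
fused = fuse_depth(depths, logits)  # per-pixel average of the two experts
```

With equal logits the fusion reduces to a plain average; a large logit for one expert makes the fused depth follow that expert almost exactly.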

🎉 We're excited to announce the 2025 Google PhD Fellows! @GoogleOrg is providing over $10 million to support 255 PhD students across 35 countries, fostering the next generation of research talent to strengthen the global scientific landscape. Read more: goo.gle/43wJWw8

We've released our paper "Generating 3D-Consistent Videos from Unposed Internet Photos"! Video models like Luma generate pretty videos, but sometimes struggle with 3D consistency. We can do better by scaling them with 3D-aware objectives. 1/N page: genechou.com/kfcw

The latest development version of Dr.Jit now provides built-in support for evaluating and training MLPs (including fusing them into rendering workloads). They compile to efficient Tensor Core operations via NVIDIA's Cooperative Vector extension. Details: drjit.readthedocs.io/en/latest/nn.h…

Volumetric Surfaces: Representing Fuzzy Geometries with Layered Meshes. Stefano Esposito, Anpei Chen, Christian Reiser, Samuel Rota Bulò, Lorenzo Porzi, Katja Schwarz, Christian Richardt, Michael Zollhöfer, Peter Kontschieder, Andreas Geiger (University of Tübingen, Meta Reality Labs). Paper: arxiv.org/abs/2409.02482 Project: autonomousvision.github.io/volsurfs/ Code (CC BY 4.0): github.com/autonomousvisi… Abstract: High-quality view synthesis relies on volume rendering, splatting, or surface rendering. While surface rendering is typically the fastest, it struggles to accurately model fuzzy geometry like hair. In turn, alpha-blending techniques excel at representing fuzzy materials but require an unbounded number of samples per ray (P1). Further overheads are induced by empty space skipping in volume rendering (P2) and sorting input primitives in splatting (P3). We present a novel representation for real-time view synthesis where the (P1) number of sampling locations is small and bounded, (P2) sampling locations are efficiently found via rasterization, and (P3) rendering is sorting-free. We achieve this by representing objects as semi-transparent multi-layer meshes rendered in a fixed order. First, we model surface layers as signed distance function (SDF) shells with optimal spacing learned during training. Then, we bake them as meshes and fit UV textures. Unlike single-surface methods, our multi-layer representation effectively models fuzzy objects. In contrast to volume and splatting-based methods, our approach enables real-time rendering on low-power laptops and smartphones.

I ran this experiment to show that duality-based optimizers like Muon are not only *fast* but also *numerically different* to vanilla gradient descent. In particular, the weights move a qualitatively different amount in the same number of training steps. (1/4)
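A rough way to see why the step size differs, assuming (as Muon is generally described) that the update is an approximately orthogonalized gradient matrix: a plain gradient step scales with the gradient's singular values, while an orthogonalized step sets them all to about 1. The sketch below is not the experiment from the thread. It uses a simple cubic Newton-Schulz iteration rather than Muon's tuned variant, omits momentum, and the helper names and toy gradient are illustrative.

```python
import math

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(a):
    return [list(r) for r in zip(*a)]

def frob(a):
    """Frobenius norm, used here as 'how far the weights move'."""
    return math.sqrt(sum(x * x for row in a for x in row))

def orthogonalize(g, steps=30):
    """Cubic Newton-Schulz iteration X <- 1.5 X - 0.5 X X^T X.

    After scaling by the Frobenius norm, all singular values lie in (0, 1]
    and the iteration pushes each of them toward 1 (full-rank g assumed).
    """
    n = frob(g)
    x = [[v / n for v in row] for row in g]
    for _ in range(steps):
        xxtx = matmul(matmul(x, transpose(x)), x)
        x = [[1.5 * a - 0.5 * b for a, b in zip(r1, r2)]
             for r1, r2 in zip(x, xxtx)]
    return x

# Toy gradient with very unequal singular values (3.0 and 0.1).
grad = [[3.0, 0.0], [0.0, 0.1]]
gd_step_size = frob(grad)                   # dominated by the large singular value
ortho_step_size = frob(orthogonalize(grad)) # close to sqrt(2) for a full-rank 2x2
```

At the same learning rate, the two updates move the weights by different amounts: the gradient step inherits the scale of the gradient, whereas the orthogonalized step has a size set by the matrix shape.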