Andy Coenen

@_coenen

AI controllability @GoogleDeepMind | ex The M Machine | opinions my own

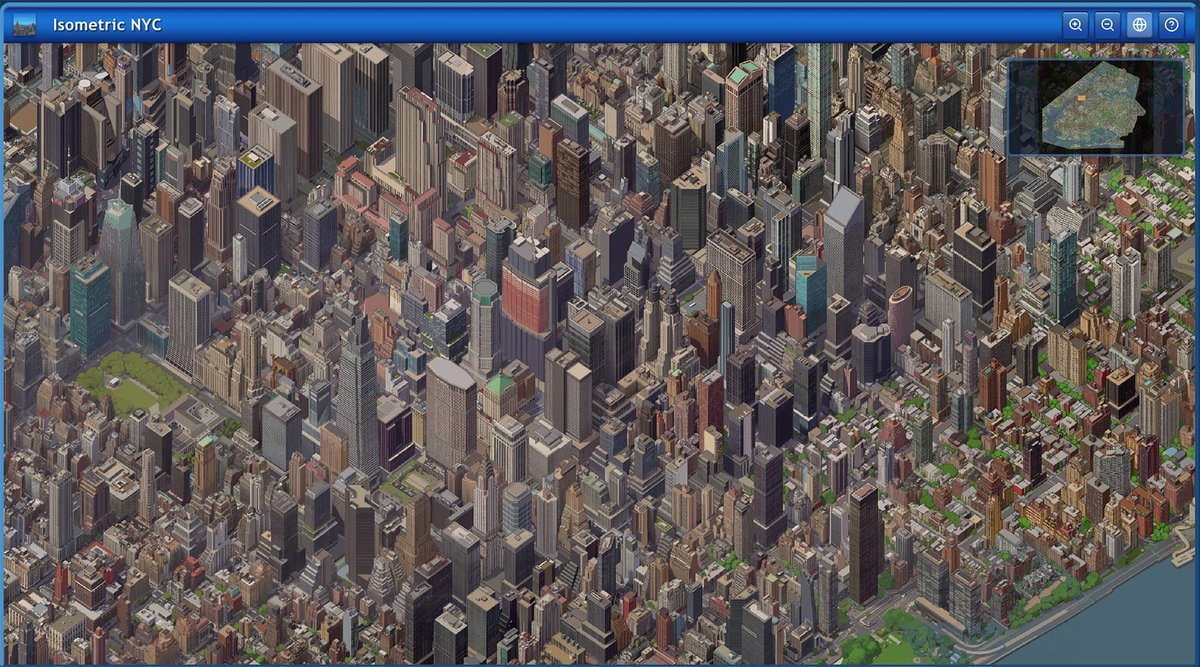

On a NOT Unbound note, here is a spare-time experiment I always wanted to try out: Making physically plausible and interesting worlds *without* using Perlin noise and *without* using regular grids. Here is how it works:

@gregwedow beautiful. now make it more minimal.

Every software engineer I know using coding agents is busier than they’ve ever been. Code is cheaper, but there’s no shortage of jobs to be done.

Introducing TigerFS - a filesystem backed by PostgreSQL, and a filesystem interface to PostgreSQL.

Idea is simple: Agents don't need fancy APIs or SDKs, they love the file system. ls, cat, find, grep. Pipelined UNIX tools. So let’s make files transactional and concurrent by backing them with a real database.

There are two ways to use it:

File-first: Write markdown, organize into directories. Writes are atomic, everything is auto-versioned. Any tool that works with files -- Claude Code, Cursor, grep, emacs -- just works. Multi-agent task coordination is just mv'ing files between todo/doing/done directories.

Data-first: Mount any Postgres database and explore it with Unix tools. For large databases, chain filters into paths that push down to SQL: .by/customer_id/123/.order/created_at/.last/10/.export/json. Bulk import/export, no SQL needed, and ships with Claude Code skills.

Every file is a real PostgreSQL row. Multiple agents and humans read and write concurrently with full ACID guarantees. The filesystem /is/ the API.

Mounts via FUSE on Linux and NFS on macOS, no extra dependencies. Point it at an existing Postgres database, or spin up a free one on Tiger Cloud or Ghost.

I built this mostly for agent workflows, but curious what else people would use it for. It's early but the core is solid. Feedback welcome. tigerfs.io
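To make the todo/doing/done idea from the TigerFS post concrete, here is a minimal sketch of how a worker might claim a task by renaming a file. It uses only the Python standard library against an ordinary directory tree; the function names (`claim_task`, `finish_task`), the `worker--filename` naming scheme, and the directory root are assumptions for illustration, not part of TigerFS. It relies on rename being atomic, which holds on a local POSIX filesystem and which the post says holds for TigerFS writes.

```python
import os
from pathlib import Path
from typing import Optional


def claim_task(root: Path, worker: str) -> Optional[Path]:
    """Try to claim one task by moving it from todo/ to doing/.

    Returns the claimed path, or None if no task is available.
    The rename is the claim: only one worker can move a given file.
    """
    for task in sorted((root / "todo").iterdir()):
        # Hypothetical naming scheme: prefix the file with the worker id.
        target = root / "doing" / f"{worker}--{task.name}"
        try:
            os.rename(task, target)
            return target
        except FileNotFoundError:
            # Another worker moved this file first; try the next one.
            continue
    return None


def finish_task(root: Path, claimed: Path) -> Path:
    """Mark a claimed task as finished by moving it to done/."""
    done = root / "done" / claimed.name
    os.rename(claimed, done)
    return done
```

A worker loop would call `claim_task` until it returns `None`, do the work, and then call `finish_task`. No lock file is needed because losing the race just surfaces as a `FileNotFoundError` on the rename.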

Marcus Aurelius, Meditations, X.16: “To stop talking about what the good man is like, and just be one.”

We architected the plugin using JUCE/C++ and React/TypeScript - a clever idea presented by @spencersalazar at ADC 2020. This approach ensures latency-sensitive operations (audio streaming and processing) happen in a compiled and unmanaged language with a tight clock, while leveraging web technologies for rapid prototyping of the UI (@reactjs, @shadcn, and @tailwindcss). State is synced between TypeScript and C++ using Zustand’s state management and nlohmann json. The web-based frontend also makes it easier for developers to open the codebase in Antigravity (or a similar LLM coding tool) and vibecode new interfaces and functionality.

🏎️ gemma-webgpu: a zero-dependency, blazing fast Gemma 1B running entirely in your browser. Fully vibe-coded from my cell phone.

🔥 136.8 tok/s on M4 Mac (3.3x faster than transformers.js)
📱 101 tok/s on iPhone 17 (270M), 34 tok/s (1B)

What we built from scratch:
• 18 hand-written WGSL compute shaders with fused ops (fusedNormAdd saves 36 GPU dispatches per forward pass)
• Q8_0 dequantization directly on GPU: higher quality than q4 AND faster
• Range request streaming loads weights layer-by-layer (~44MB chunks), uploads to GPU, frees JS memory immediately. Peak heap: ~50MB even for the 1GB model
• That streaming trick is what makes 1B run on iPhone. It never holds the full model in RAM

12KB gzipped. Zero dependencies. npm install gemma-webgpu
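For readers unfamiliar with Q8_0, here is a small CPU reference sketch of what dequantizing that format involves. It assumes the GGML-style Q8_0 block layout (one little-endian float16 scale followed by 32 signed 8-bit values, 34 bytes per block); the post does not show its shaders, so this is not the project's WGSL code, and the function name `dequantize_q8_0` is made up for illustration.

```python
import struct

QK8_0 = 32               # quantized values per block (GGML Q8_0 layout, assumed)
BLOCK_BYTES = 2 + QK8_0  # one float16 scale + 32 int8 values


def dequantize_q8_0(buf: bytes) -> list:
    """Expand Q8_0 blocks into floats: value = scale * int8 quant."""
    if len(buf) % BLOCK_BYTES != 0:
        raise ValueError("buffer length is not a whole number of Q8_0 blocks")
    out = []
    for offset in range(0, len(buf), BLOCK_BYTES):
        # "<e" reads a little-endian IEEE 754 half-precision float.
        (scale,) = struct.unpack_from("<e", buf, offset)
        quants = struct.unpack_from(f"<{QK8_0}b", buf, offset + 2)
        out.extend(scale * q for q in quants)
    return out
```

Each output value depends only on its own block, which is why this step maps naturally onto a GPU compute shader: one invocation per value (or per block) with no cross-block communication.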