Bethge Lab

324 posts

@bethgelab

Perceiving Neural Networks

🚨Current data curation results in the creation of static datasets and the use of model-based filters that induce many biases. Can we fix this? We propose ✨CABS✨, a flexible concept-aware online batch curation method that improves CLIP pretraining! arxiv.org/abs/2511.20643 🧵👇

🚨 New paper at #NeurIPS2025! A systematic fixation-level comparison of a performance-optimized DNN scanpath model and a mechanistic cognitive model reveals behaviourally relevant mechanisms that can be added to the mechanistic model to substantially improve performance. 🧵👇

🚀 New paper! arxiv.org/abs/2511.16655 Recently, Cambrian-S released models & two benchmarks (VSR & VSC) for “spatial supersensing” in video! We found: 1️⃣ Simple no-frame baseline (NoSense) ~perfectly solves VSR! 2️⃣ Tiny sanity check collapses Cambrian-S perf to 0% on VSC! 🧵👇

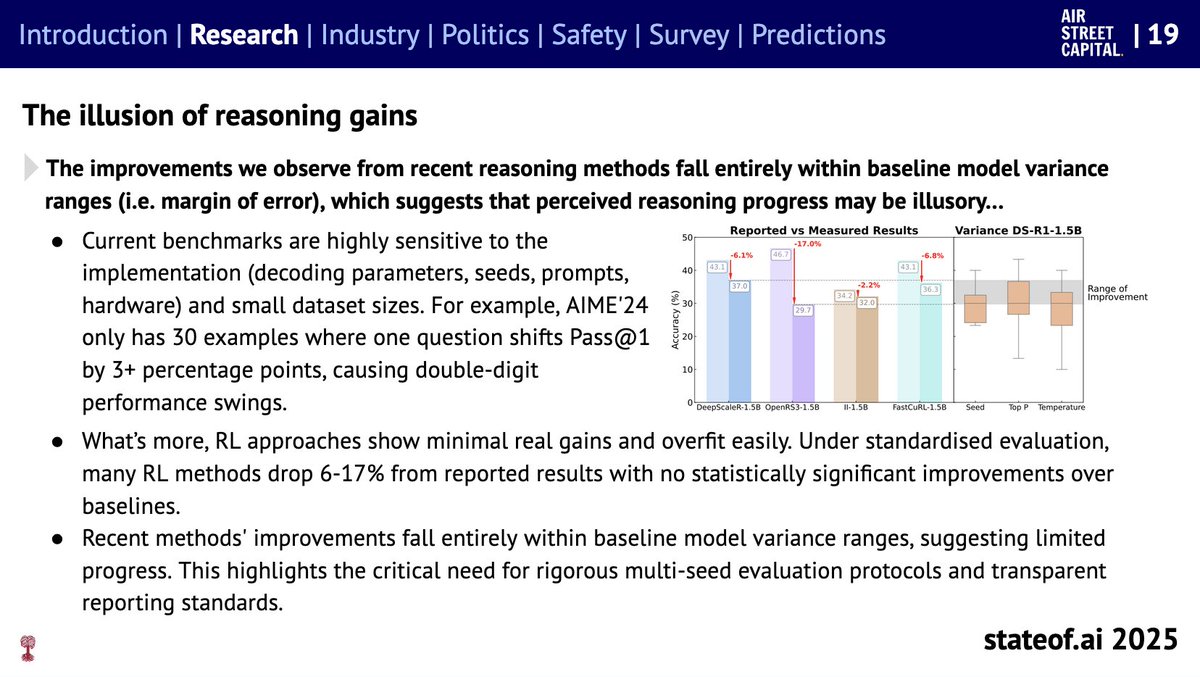

🪩The one and only @stateofai 2025 is live! 🪩 It’s been a monumental 12 months for AI. Our 8th annual report is the most comprehensive it's ever been, covering what you *need* to know about research, industry, politics, safety and our new usage data. My highlight reel:

🚀New Paper! arxiv.org/abs/2504.07086 Everyone’s celebrating rapid progress in math reasoning with RL/SFT. But how real is this progress? We re-evaluated recently released popular reasoning models—and found reported gains often vanish under rigorous testing!! 👀 🧵👇