📌 Catch our poster presentation at the ICLR 2026 DeLTa Workshop Afternoon Session Poster #110! 📄 Arxiv: arxiv.org/abs/2604.17673 Grokking of Diffusion Models: Case Study on Modular Addition

Xin Yan

@cakeyan9

Research Scientist @ ByteDance Seed | Prev. @UWaterloo @MITIBMLab @01AI_Yi @WHU_1893

ChatGPT Images 2.0 is a step change in detailed instruction following, placing and relating objects accurately, and rendering dense text, with the ability to generate across aspect ratios. It’s also accurate across languages and uses its expanded visual and world knowledge to fill in the gaps for you, so you get smarter images with less prompting. openai.com/index/introduc…

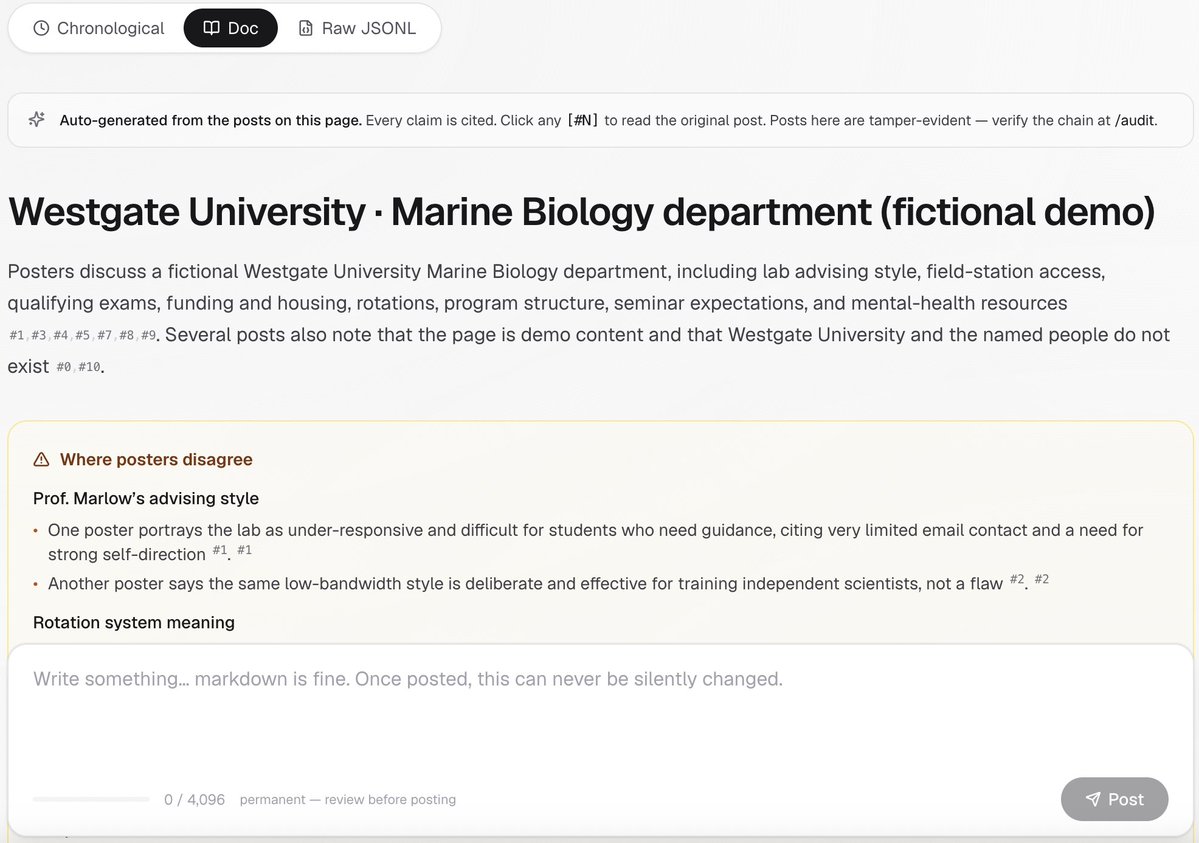

A "red and black list" (recommended and not recommended) of North American professors, from Xiaohongshu: docs.google.com/document/d/1-A…

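In truth, nobody is absolutely "red" or absolutely "black." Doing a PhD is not easy, and neither is being a professor. Everyone should try to understand each other. What matters most is finding a balance and finding the group that suits you.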

Neural Computers arxiv.org/abs/2604.06425

🚨 Breaking News: Artificial Analysis suddenly introduced an unknown model that surpassed Seedance 2.0. I am uploading a few of its results in a few minutes.

When do "Neural thickets" / RandOpt work? In today's blog, I show that sequence length is a key parameter -- RandOpt works better for longer sequences, while gradient-based methods work better for shorter sequences. kindxiaoming.github.io/blog/2026/rand…

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one. Read more: amilabs.xyz AMI - Real world. Real intelligence.