Milan

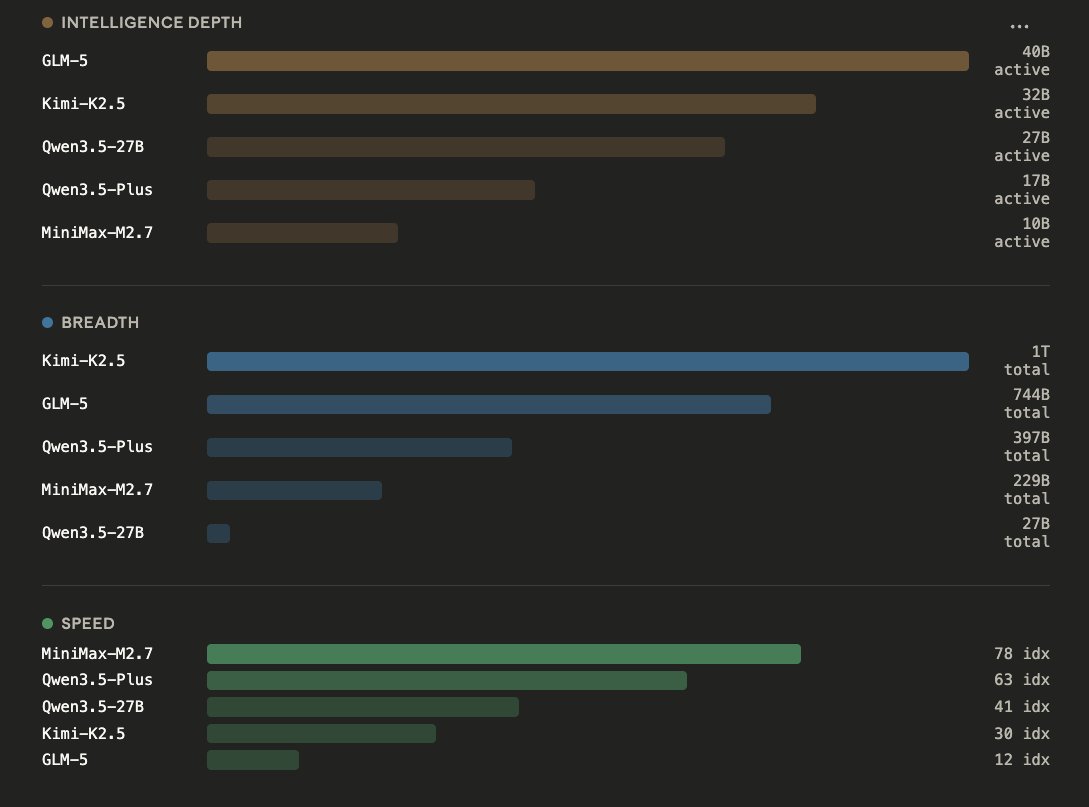

People are not lying when they say Qwen3.5-27B is incredibly capable.

1. Bubble size = total params (world knowledge, languages, skills)
2. X axis = active params (raw intelligence per token)
3. Y axis = tokens/s (speed of prefill and generation, i.e. decode)

- GLM-5 | 744B params | 40B active
- Kimi-K2.5 | 1T params | 32B active
- Qwen3.5-27B | 27B active params
- Qwen3.5-Plus | 397B params | 17B active
- MiniMax-M2.7 | 229B params | 10B active

MoEs can store much more world knowledge and a greater breadth of information. A Mixture-of-Experts model can be stacked up to 1T params, so you can give it 20 trillion tokens or more of training data and it learns more. But at runtime, only a small portion of those params gets activated.

Taking MiniMax-M2.5 as an example: only 10B params are active at a time, so while you use it you get speed and intelligence closer to nemotron-8B. It's just that MiniMax-M2.5 can know much more, and thus perform better.
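To make the total-vs-active gap concrete, here is a rough sketch that computes what share of each model's parameters is active per token, using only the figures quoted above. The helper names (`active_fraction`, `summarize`) are made up for illustration, and treating Qwen3.5-27B as dense (total equal to active) is an assumption based on the post listing only its active count.

```python
# Figures copied from the post, in billions of parameters: (total, active).
# Assumption: Qwen3.5-27B is dense, so total == active == 27B.
MODELS = {
    "GLM-5": (744, 40),
    "Kimi-K2.5": (1000, 32),   # 1T written as 1000B
    "Qwen3.5-27B": (27, 27),
    "Qwen3.5-Plus": (397, 17),
    "MiniMax-M2.7": (229, 10),
}


def active_fraction(total_b, active_b):
    """Share of parameters used per token (hypothetical helper)."""
    return active_b / total_b


def summarize(models):
    """Return (name, total, active, active %) rows, sparsest model first."""
    rows = []
    for name, (total, active) in models.items():
        pct = round(100 * active_fraction(total, active), 1)
        rows.append((name, total, active, pct))
    return sorted(rows, key=lambda row: row[3])


if __name__ == "__main__":
    for name, total, active, pct in summarize(MODELS):
        print(f"{name:<14} total={total:>5}B  active={active:>3}B  share={pct:>5}%")
```

By these numbers, every MoE on the list runs on roughly 3 to 5 percent of its weights per token, while the dense 27B model uses all of them on every token.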

How can a 3B-parameter model reach this quality? 👀