Seung Joon Choi

8.8K posts

.@RichardSSutton, father of reinforcement learning, doesn’t think LLMs are bitter-lesson-pilled. My steel man of Richard’s position: we need some new architecture to enable continual (on-the-job) learning. And if we have continual learning, we don't need a special training phase - the agent just learns on-the-fly - like all humans, and indeed, like all animals. This new paradigm will render our current approach with LLMs obsolete. I did my best to represent the view that LLMs will function as the foundation on which this experiential learning can happen. Some sparks flew. 0:00:00 – Are LLMs a dead-end? 0:13:51 – Do humans do imitation learning? 0:23:57 – The Era of Experience 0:34:25 – Current architectures generalize poorly out of distribution 0:42:17 – Surprises in the AI field 0:47:28 – Will The Bitter Lesson still apply after AGI? 0:54:35 – Succession to AI

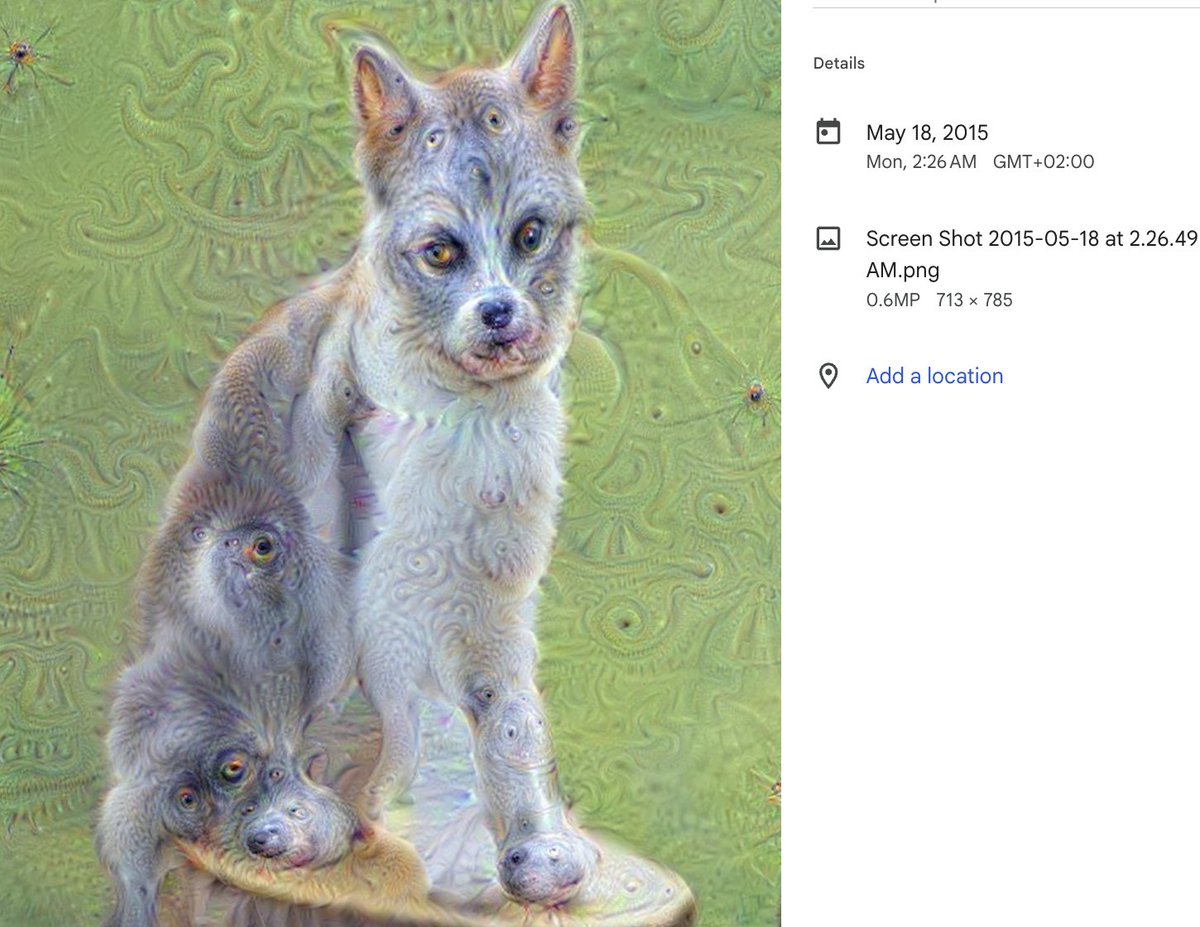

COLLECTOR’S CHOICE 1/ This month celebrates the 10th anniversary of DeepDream, an important development in the history of AI-generated art. Introduced in May 2015 by Alexander Mordvintsev @zzznah, a researcher and artist based in Zurich, DeepDream was one of the first widely recognized applications of neural networks for image generation. It played a major role in popularizing AI art, inspiring a wave of experimentation that continues among many artists today. Image: Just before DeepDream: 1000 classes #3, 2015/01 by Alexander Mordvintsev

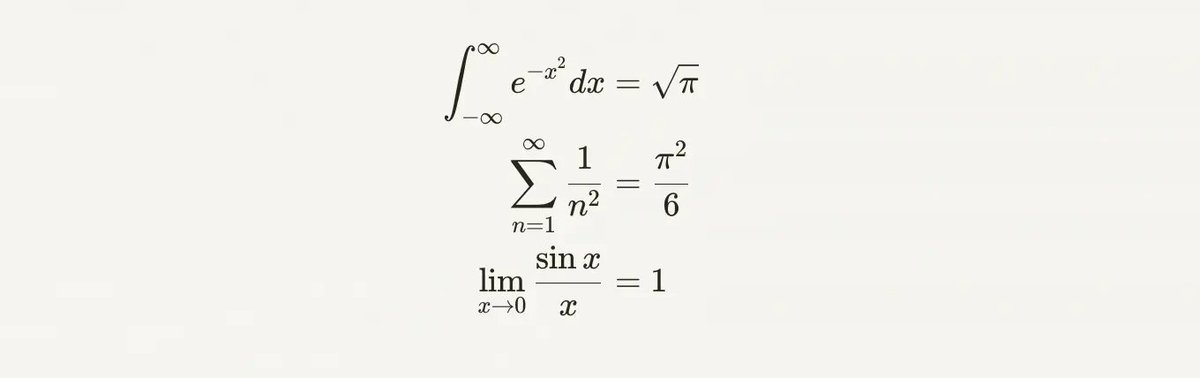

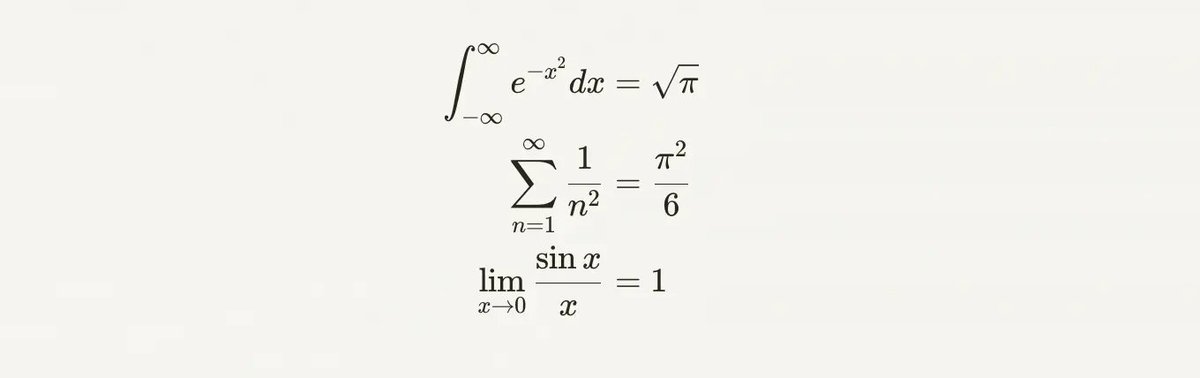

Anthropic should add LaTex rendering to Claude artifacts doesn't currently work, but that's like a one day change to the web app

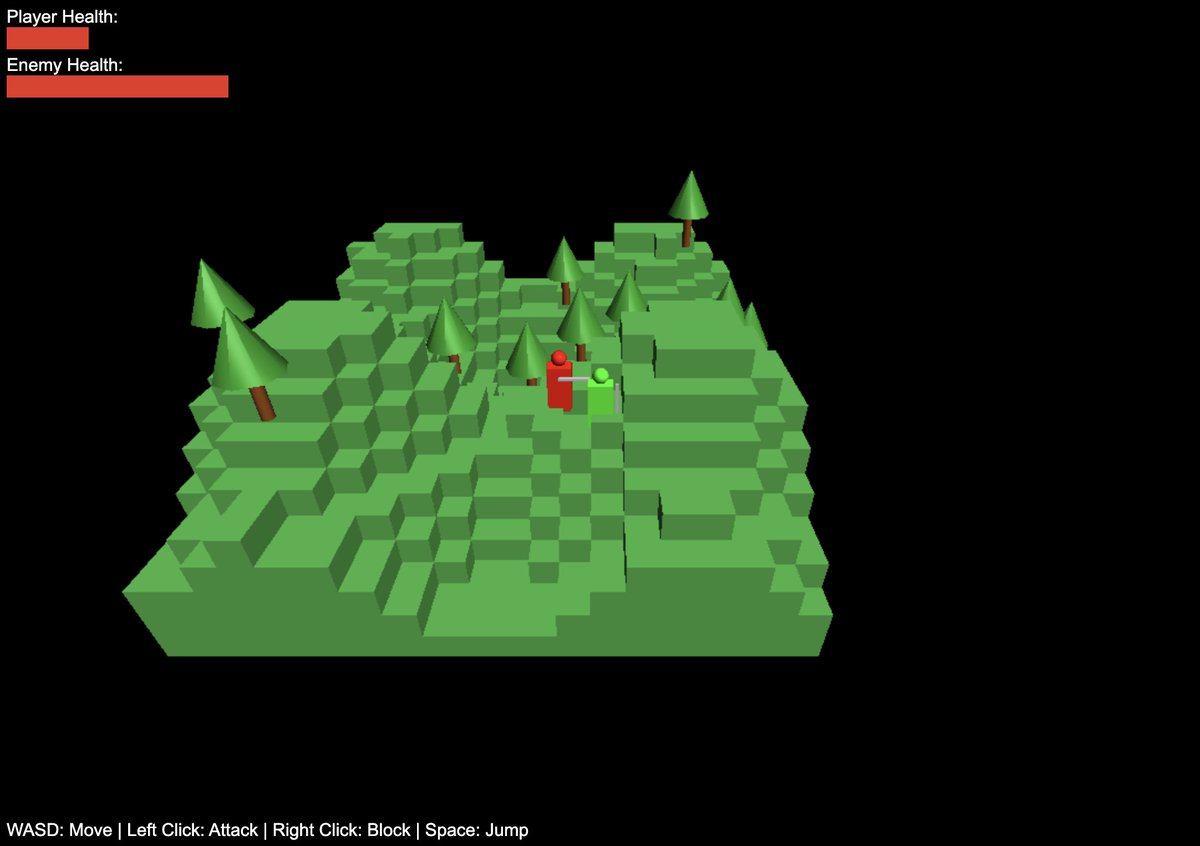

Maybe my most delightful LLM interaction so far? > Make a threejs runescape MVP > Let me use arrow keys to control myself > Add an enemy > Give me a sword > Give them a health bar Magic - in all of 3 minutes of iteration? The artifacts are a great example of unhobbling.