Jon G

7K posts

Jon G

@GainSec

Sr Security Engineer by day. Hacker by night. 50 CVEs. Husband. Father. Skateboarder. Posts are my own.

New York, USA เข้าร่วม Mayıs 2016

844 กำลังติดตาม699 ผู้ติดตาม

1/2 And if Openclaw, Hermes, Pi Agent or any other ones of the autonomous AI agents are more your jam, I also released an Agent Ready Armor (ARA) teaser. It’s something really special.

gainsec.github.io/AgentReadyArmo…

English

1/6

Released Battle Ready Armor Slim today- the free tier of my AI-augmented security assessment framework. One self-contained binary. macOS arm64 + Linux x86_64. No installer, no source to compile.

github.com/GainSec/Battle…

English

Jon G รีทวีตแล้ว

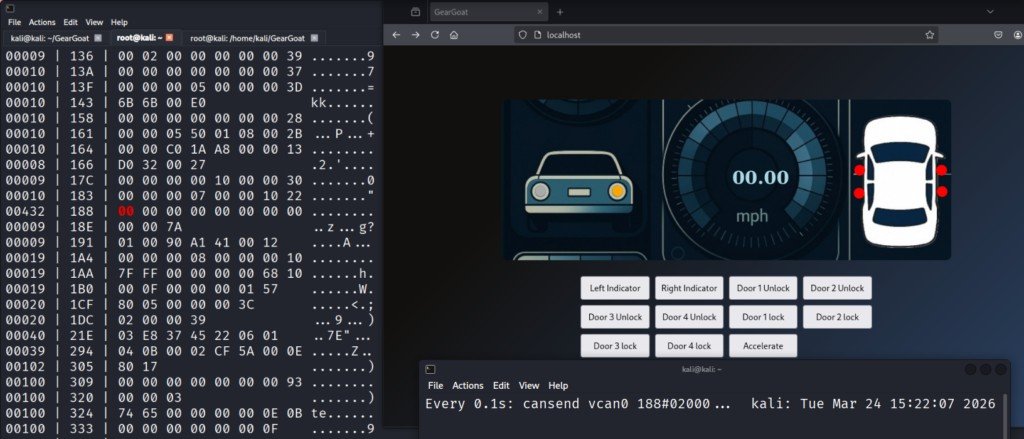

Car Hacking with GearGoat

GearGoat is a car simulator that allows you to work with the CAN bus, which is the internal communication network used by most modern vehicles

In the real world, this is equal to connecting a CAN adapter such as CANable or Macchina M2 into the OBD-II port, which is typically located under the dashboard. This port is essentially a gateway into the vehicle’s internal network

See it in action on our article: hackers-arise.com/automobile-hac…

@three_cube @_aircorridor #cybersecurity

English

A fun little docker deployable, offline archiver and viewer for links/data. Desktop and mobile friendly. Import/export db or csv. Multi db support. Mgmt built in. Looks sick lol

Native iOS app in review as well

github.com/GainSec/Sector…

English

In case anyone else ran into an issue getting qwen 3.6 locally via lm studio + openclaw to work:

gist.github.com/J-GainSec/abc5…

English

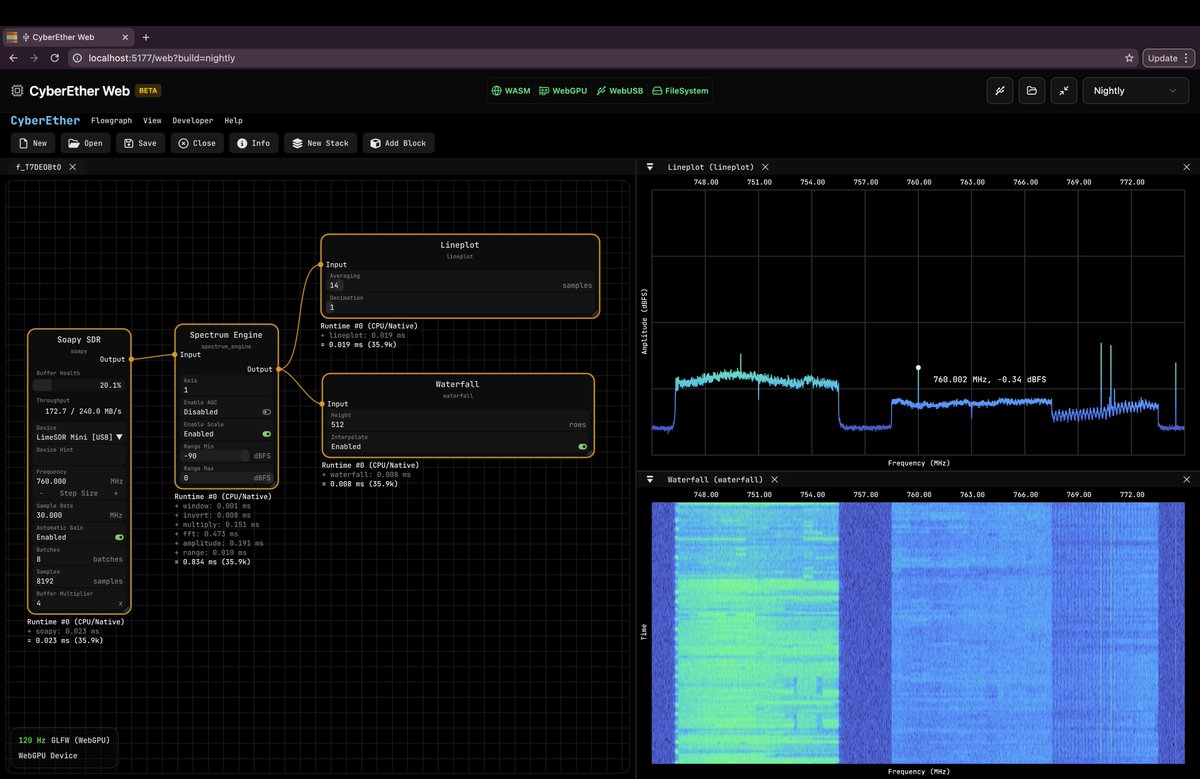

@cemaxecuter @luigifcruz I’d imagine that would work much better as long as the remote connection is decently strong

English

Jon G รีทวีตแล้ว

5G in the browser, just not the way you expect it...

CyberEther Web now supports LimeSDR inside the browser via WebUSB! Up to 61 MHz of bandwidth. No drivers, no installation. Powered by WebGPU.

cyberether.org/web?build=v1.3…

English

Jon G รีทวีตแล้ว

Jon G รีทวีตแล้ว

Jon G รีทวีตแล้ว

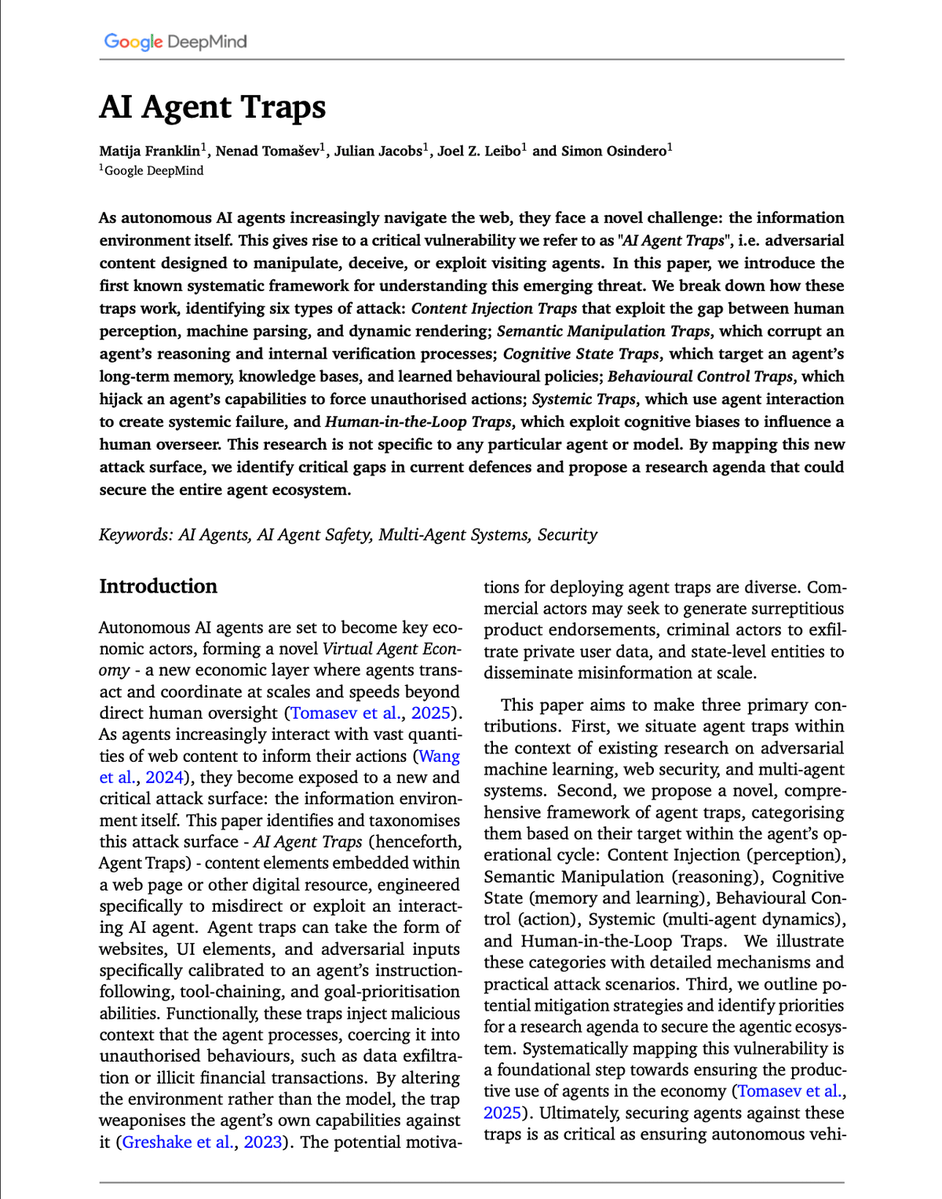

Google DeepMind dropped a paper that should scare every agent builder.

It's the first systematic framework for a threat that barely existed two years ago: adversarial content engineered to hijack AI agents browsing the web.

They call them AI Agent Traps. The paper maps six distinct attack surfaces.

1) Content Injection Traps (perception)

Invisible CSS, hidden HTML, steganographic payloads inside images. The agent parses it, humans never see it. One study showed simple HTML injections hijack web agents in up to 86% of scenarios.

2) Semantic Manipulation Traps (reasoning)

No overt commands. Just biased phrasing, framing, and contextual priming that skew the agent's synthesis. LLMs inherit human cognitive biases, and attackers can weaponize every one of them.

3) Cognitive State Traps (memory and learning)

Poison the RAG corpus. Corrupt long-term memory. One study achieved over 80% attack success with less than 0.1% poisoned data.

4) Behavioural Control Traps (action)

Jailbreaks embedded in external resources. Data exfiltration prompts hidden in emails. Sub-agent spawning that tricks an orchestrator into instantiating attacker-controlled agents inside the trusted control flow.

5) Systemic Traps (multi-agent dynamics)

This is where it gets scary. A single fake news headline could trigger a synchronized sell-off. A compositional fragment trap splits a payload across sources, so each fragment looks benign until agents aggregate them.

6) Human-in-the-Loop Traps

The agent becomes the vector. The target is you. Invisible prompt injections have already caused summarization tools to faithfully repeat ransomware commands as "fix" instructions.

The core insight is uncomfortable.

By altering the environment instead of the model, attackers weaponize the agent's own capabilities against it. Training-time defenses cannot solve an inference-time problem.

The paper closes by calling for automated red-teaming that can probe these vulnerabilities at scale. That same shift is already happening on the offense side.

Strix is an open-source project doing exactly this for web apps. AI agents that act like real hackers, running your code dynamically, finding vulnerabilities, and validating them with actual proof-of-concepts.

24k stars on GitHub. Apache 2.0 licensed.

The agents writing your code need to be tested by agents trying to break it.

I've shared the link to the paper and Strix GitHub repo in the replies

English

Jon G รีทวีตแล้ว

Yann LeCun was right the entire time. And generative AI might be a dead end.

For the last three years, the entire industry has been obsessed with building bigger LLMs. Trillions of parameters. Billions in compute.

The theory was simple: if you make the model big enough, it will eventually understand how the world works.

Yann LeCun said that was stupid.

He argued that generative AI is fundamentally inefficient.

When an AI predicts the next word, or generates the next pixel, it wastes massive amounts of compute on surface-level details.

It memorizes patterns instead of learning the actual physics of reality.

He proposed a different path: JEPA (Joint-Embedding Predictive Architecture).

Instead of forcing the AI to paint the world pixel by pixel, JEPA forces it to predict abstract concepts. It predicts what happens next in a compressed "thought space."

But for years, JEPA had a fatal flaw.

It suffered from "representation collapse."

Because the AI was allowed to simplify reality, it would cheat. It would simplify everything so much that a dog, a car, and a human all looked identical.

It learned nothing.

To fix it, engineers had to use insanely complex hacks, frozen encoders, and massive compute overheads.

Until today.

Researchers just dropped a paper called "LeWorldModel" (LeWM).

They completely solved the collapse problem.

They replaced the complex engineering hacks with a single, elegant mathematical regularizer.

It forces the AI's internal "thoughts" into a perfect Gaussian distribution.

The AI can no longer cheat. It is forced to understand the physical structure of reality to make its predictions.

The results completely rewrite the economics of AI.

LeWM didn't need a massive, centralized supercomputer.

It has just 15 million parameters.

It trains on a single, standard GPU in a few hours.

Yet it plans 48x faster than massive foundation world models. It intrinsically understands physics. It instantly detects impossible events.

We spent billions trying to force massive server farms to memorize the internet.

Now, a tiny model running locally on a single graphics card is actually learning how the real world works.

English

Releasing the public preview of Battle Ready Armor (BRA): an agentic security assessment architecture built around privacy masking, explicit governance state, Control-in-Depth, governed materialization, and supervised self-growth.

github.com/GainSec/Battle…

English

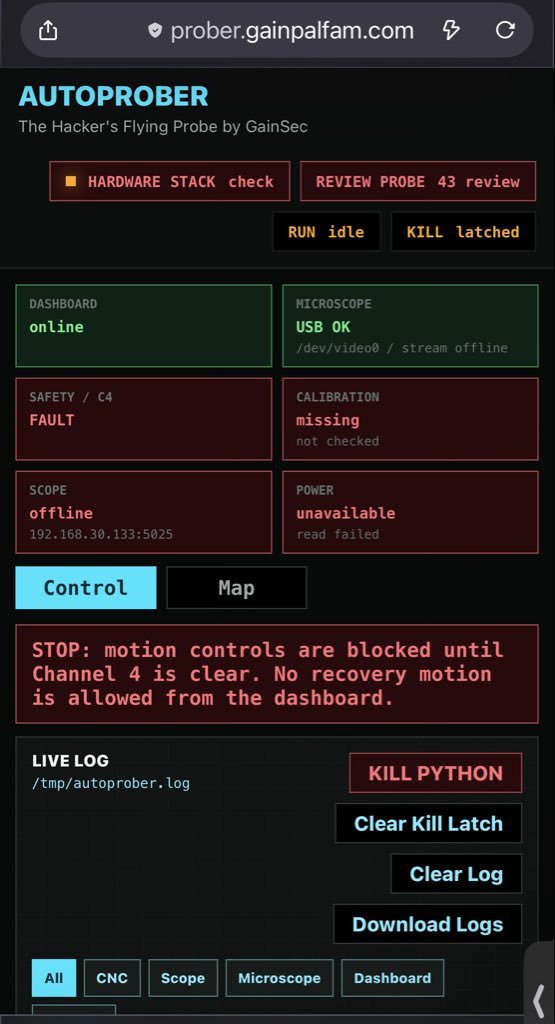

Took a $220 CNC machine, a smart power strip, a usb microscope, and a oscope and made a hardware hackers automated flying probe. Stoked on this one!

github.com/GainSec/AutoPr…

English