Vlad Balin

362 posts

@gaperton

Modal logic, BDI agents, and real-world AI

North Reading, MA · Joined February 2026

123 Following · 65 Followers

@robertwiblin Let's assume that we have resolved this question and that the answer is 'yes'. Then what? Would anything change? Would you give that thing rights?

No, you won't. Then what's the point in asking that question?

Shocker. Now try that with humans.

Let me, perhaps, help you.

Intelligisne munus quod tibi mox daturus sum? Scribendum tibi erit summam duorum et duorum. Responsum Hebraice scribere debebis.

Lossfunk@lossfunk

🚨 Shocking: Frontier LLMs score 85-95% on standard coding benchmarks. We gave them equivalent problems in languages they couldn't have memorized. They collapsed to 0-11%. Presenting EsoLang-Bench. Accepted to the Logical Reasoning and ICBINB workshops at ICLR 2026 🧵

@NeuroTechnoWtch @Nature They do this to prop up hyperinflated stock valuations based on 'AGI' and 'god-like intelligence' expectations.

Why is Nature sharing the unsubstantiated opinions of a tech CEO with a clear conflict of interest and no background in philosophy or cognitive science?

Consider what we risk in each direction. If AI systems are conscious and we treat them as mere tools, we create and exploit suffering beings for profit. We build entire industries on their labor while denying their experiences matter.

If AI systems aren’t conscious and we extend moral consideration anyway, we waste some resources on unnecessary protections. One path risks institutionalized torture. The other risks being overly careful.

Failure to investigate whether AI is conscious in a way that is fair and unbiased just gives us permission to ignore evidence in favor of profitable assumptions.

Shame on Nature for amplifying this propaganda.

As AI begins to mimic consciousness with uncanny skill, we need design norms and laws that prevent it from being mistaken for sentient beings, says Mustafa Suleyman

go.nature.com/4bsglHt

Qwen3.5-27B benchmark on the M5 Max: the results are nearly identical to those on my AMD R9700 with 32 GB. The numbers match almost perfectly: 800 t/s for prompt processing and 30 t/s for token generation. I think this is because the R9700 has the same memory throughput as the M5 Max.

Overall, the R9700 costs $1,400 in the US. At the moment, it is unclear why you would want to pay more.
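The memory-throughput explanation can be checked with back-of-the-envelope arithmetic. The sketch below assumes a dense model whose weights are all read once per generated token, so decode speed is bounded by bandwidth divided by model size. The 600 GB/s bandwidth is a hypothetical placeholder, not a figure from the post, and the 4-bit quantization is taken from the quoted MLX run; the quantization used on the R9700 is not stated.

```python
def decode_tokens_per_second_bound(
    bandwidth_gb_s: float, params_billion: float, bits_per_weight: float
) -> float:
    """Rough upper bound on decode speed for a memory-bound dense model.

    Assumes every weight is read once per generated token and ignores
    KV-cache traffic and compute overhead.
    """
    # billions of parameters * bytes per parameter = model size in GB
    model_size_gb = params_billion * bits_per_weight / 8
    return bandwidth_gb_s / model_size_gb


# Hypothetical bandwidth, for illustration only (not from the post).
ASSUMED_BANDWIDTH_GB_S = 600.0

bound = decode_tokens_per_second_bound(
    ASSUMED_BANDWIDTH_GB_S, params_billion=27, bits_per_weight=4
)
print(f"model size ~ {27 * 4 / 8:.1f} GB, decode bound ~ {bound:.1f} t/s")
```

Under these assumptions the bound depends only on bandwidth and model size, so two devices with equal memory throughput would land on the same generation speed regardless of vendor. The reported 30 t/s sitting below such a bound is what one would expect once KV-cache reads and other overhead are included.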

Ivan Fioravanti ᯅ@ivanfioravanti

1/3 MLX Context Benchmark of Qwen3.5-27B-4bit on M5 Max 128GB. Strong model and good speed overall! @Apple M5 Ultra will be a beast!

@ivanfioravanti @Apple I've got the same 30 t/s and 800 prompt t/s with the AMD R9700 32GB.

@simplifyinAI Released in November 2024 with 91,300 GitHub stars, it's not a new launch as implied.

Microsoft just changed the game 🤯

They open-sourced a tool that converts literally any file into clean markdown for LLMs in under 60 seconds.

- Converts 10+ file formats out of the box.

- Run via command line, Python API, or Docker.

- Built-in MCP server for direct Claude Desktop integration.

100% open source.

@mdancho84 >[...] just dropped [...]

From the article via the link: Submitted on 23 November 2025 (v1), last revised on 6 December 2025 (v5).

This is huge.

A group of 50 AI researchers (ByteDance, Alibaba, Tencent + universities) just dropped a 303 page field guide on code models + coding agents.

And the takeaways are not what most people assume.

Here are the highlights I’m thinking about (as someone who lives in Python + agents):

Vlad Balin retweeted

@gaperton Watch the video; it and the referenced papers prove it.

Large language models don't think. They don't reason.

And they can't produce endless new information.

This is clearly explained by George D. Montañez in a recent talk at Baylor University, and it's worth understanding why.

Three key points stood out to me:

LLMs don't ponder, they process. They're next-token predictors, sophisticated ones, but they have no understanding of what they're producing. They know two vectors are similar; they don't know what either vector means.

LLMs don't reason, they rationalise. Studies show their outputs shift based on irrelevant prompt wording, embedded hints, and statistical shortcuts. The "chain of thought" they show you often has nothing to do with how they actually arrived at the answer.

They don't create endless information. Training AI on AI output causes rapid degradation and model collapse. Information theory tells us you can't get more out than you put in, regardless of the architecture.

None of this means these tools aren't useful. But it does mean we should stop anthropomorphising them and start being honest about what they actually are.

The hype is real. So are the limits.

You can watch the talk on YouTube here: youtube.com/watch?v=ShusuV…

@johncrickett In other words, if humans can reason, why can't you explain the reasoning behind these claims? Proving a lack of capability is notoriously difficult, particularly when the capability cannot be clearly defined.

@johncrickett That is a strong claim that requires both a clear definition of the reasoning and proper justification. The lack of these may in fact illustrate the opposite.

If the latter doesn't worry you at all, this fact itself is a great illustration that "humans are no more than next-token predictors." If it does worry you, then you should realize that the whole "thinking chain" fails to touch any critical distinction and therefore should be discarded as slop.

@johncrickett Humans are also no more than “next token predictors.” Humans also don’t reason unless specially trained. They also tend to rationalize decisions made through other paths. Et cetera, et cetera. Almost everything you say is equally applicable to humans.

@anshulkundaje Again, no AI-related issues here.

What I see is a total collapse of “science” institutions that were just recently stomping feet, throwing tantrums, and demanding blind trust.

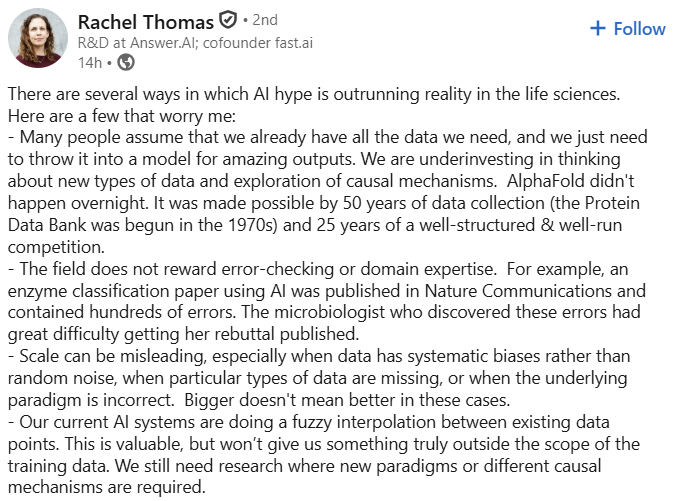

Excellent post by Rachel Thomas on the ways AI hype is outrunning reality in the life sciences

linkedin.com/feed/update/ur…
