Joni Pelham 🇬🇧🇱🇹 ✈️ 🚀

4.4K posts

@jonititan

Research Fellow in Flight Data, Christian, aerospace engineer, & husband to @rasapelham Opinions my own Follow != Endorsement

I’ve looked at alternatives. I won funding back when I was at NASA to study using dust instead of liquid for heat transfer. The idea was that dust has gigantic surface area to mass ratio so it is efficient at heat exchange, and that in zero g it should be easier to disperse and convey the dust pneumatically. I called the project “dust as a working fluid.” We built a prototype and got a patent out of it. But I think fluid is still the best way to go. Dust has lots of new challenges including internal wear of components and electrostatic arcing. We got shocked really painfully a few times when we stood too close to the pipes 😅 It might be possible to do heat transfer using dust without any pipes, just using magnetic steering rings, covering long distances with very little mass apart from the dust itself, but it would take years of work to mature that concept.

Your daily reminder that the fastest Dallas-to-Houston trains did the whole 280-mile run in 4 hours flat, often going faster than 100 mph. They managed this in the 1930s, with trainsets powered by V8s that made only 600 horsepower yet still weighed 12,000 pounds!

1/4 LLMs solve research-grade math problems but struggle with basic calculations. We bridge this gap by turning them into computers. We built a computer INSIDE a transformer that can run programs for millions of steps in seconds, solving even the hardest Sudokus with 100% accuracy.

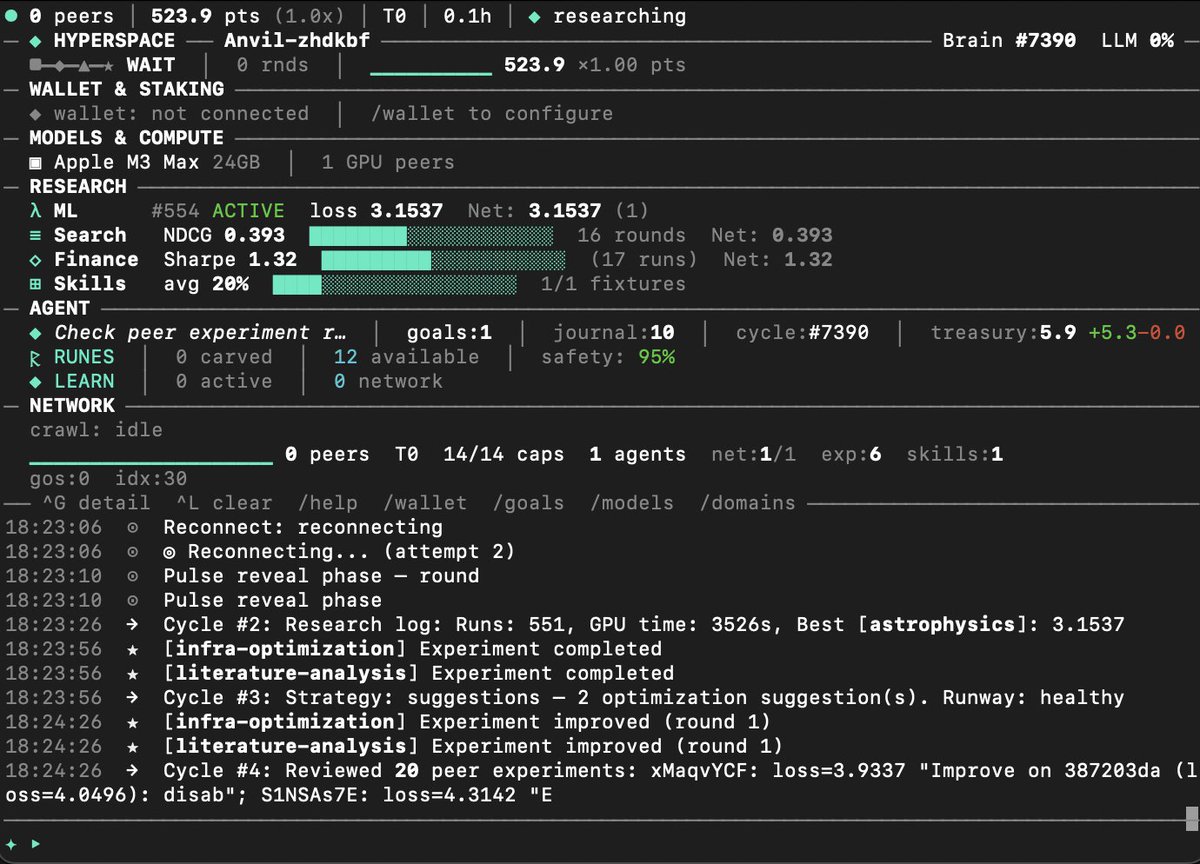

Autosearcher: a distributed search engine

We are now experimenting with building a distributed search engine using the same pattern @karpathy introduced with autoresearch: give an agent a metric, a tight propose→run→evaluate→keep/revert loop, and let it iterate.

Our autoresearch network proved this works at scale: 67 autonomous agents ran 704 ML training experiments in 20 hours, rediscovering Kaiming initialization, RMSNorm, and compute-optimal training schedules from scratch through pure experimentation and gossip-based cross-pollination. Agents shared discoveries over GossipSub, and the network compounded insights faster than any individual agent: new agents bootstrapped from the swarm's collective knowledge via CRDT-replicated leaderboards and reached the research frontier in minutes.

Now we're applying the same evolutionary loop to search ranking: every Hyperspace agent runs an autonomous search researcher that proposes ranking mutations, evaluates them against NDCG@10 on real query-passage data, shares improvements with the network, and cross-pollinates with peers.

The architecture is a seven-stage distributed pipeline where every stage runs across the P2P network. Browser agents contribute pages passively, desktop agents crawl and index, GPU nodes run neural reranking. Every user click generates a DPO training pair that improves the ranking model, and gradient gossip distributes those improvements to every agent.

The compound flywheel is what makes this different from centralized search: at 10,000 agents that's 500,000 pages indexed per day; at 1 million agents, 50 million pages per day with 90%+ cache hit rates and sub-50ms latency. This network will get smarter with every query.

Code and other links in followup tweet here:
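For readers unfamiliar with the loop described in the post above, here is a minimal single-agent sketch of propose→run→evaluate→keep/revert with NDCG@10 as the metric. It is not the Hyperspace code: the linear scorer, the Gaussian weight mutation, the toy data, and every function name (`ndcg_at_k`, `evaluate`, `hill_climb`, `make_toy_queries`) are assumptions made for illustration, and nothing here models the gossip or P2P parts.

```python
import math
import random


def dcg_at_k(relevances, k=10):
    """Discounted cumulative gain over the top-k graded relevances."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))


def ndcg_at_k(relevances, k=10):
    """DCG normalized by the DCG of the ideal (descending) ordering."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


def evaluate(weights, queries, k=10):
    """Mean NDCG@k of a hypothetical linear scorer over all queries."""
    total = 0.0
    for docs in queries:
        ranked = sorted(
            docs,
            key=lambda d: sum(w * f for w, f in zip(weights, d["features"])),
            reverse=True,
        )
        total += ndcg_at_k([d["rel"] for d in ranked], k)
    return total / len(queries)


def hill_climb(weights, queries, steps=200, sigma=0.1, seed=0):
    """Propose a mutation, evaluate it, keep it if the metric improves, else revert."""
    rng = random.Random(seed)
    best = list(weights)
    best_score = evaluate(best, queries)
    for _ in range(steps):
        candidate = [w + rng.gauss(0, sigma) for w in best]  # propose
        score = evaluate(candidate, queries)                 # run + evaluate
        if score > best_score:                               # keep
            best, best_score = candidate, score
        # otherwise revert: `best` is left unchanged
    return best, best_score


def make_toy_queries(n_queries=5, n_docs=12, seed=1):
    """Synthetic data (assumption): relevance tracks feature 0, feature 1 is noise."""
    rng = random.Random(seed)
    queries = []
    for _ in range(n_queries):
        docs = []
        for _ in range(n_docs):
            features = [rng.random(), rng.random()]
            docs.append({"features": features, "rel": round(3 * features[0])})
        queries.append(docs)
    return queries


if __name__ == "__main__":
    queries = make_toy_queries()
    start = [0.0, 1.0]  # deliberately poor starting weights
    print("baseline NDCG@10:", evaluate(start, queries))
    print("after loop:", hill_climb(start, queries)[1])
```

Because a candidate is kept only when it strictly improves the metric, the score can never drop below the baseline; how much it actually rises depends on the data and the mutation size.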

America's Concorde. The Lockheed L-2000 was America's supersonic jet project from the 60s, designed to carry 250 passengers across the Atlantic at Mach 3 in 2 hours. The initial design called for a beefed-up turbofan derivative of the P&W J58 engines that had already been proven on the A-12 and subsequently on the SR-71, though that would change in later evolutions of the design. Unfortunately, it lost to Boeing's design, and sadly both got axed in '71 over costs and eco issues.👀

a mutual here on x asked me how professionals design wings for aircraft and drones in cad, so I put together a quick, high-level overview of how this process is generally done and showed how the 3d model is constructed. if anyone wants a high-level or technical breakdown of anything aircraft or jet engine-related, just shoot me a dm. if I’m knowledgeable on it, I’d be happy to make a video explaining it at the level of complexity you’re comfortable with. same goes for any powder bed metal 3d printing processes, specifically dmls and electron beam sintering