Khurram K. Jamali

838 posts

@kjamali

Pakistani, Father, Husband, Son, Entrepreneur, Xoogler. Interests include fintech, digital infrastructure, AI & climate. RT!=endorsements

Islamabad, Pakistan · Joined April 2009

469 Following · 3.4K Followers

Khurram K. Jamali retweeted

It is hard to communicate how much programming has changed due to AI in the last 2 months: not gradually and over time in the "progress as usual" way, but specifically this last December. There are a number of asterisks but imo coding agents basically didn’t work before December and basically work since - the models have significantly higher quality, long-term coherence and tenacity and they can power through large and long tasks, well past enough that it is extremely disruptive to the default programming workflow.

Just to give an example, over the weekend I was building a local video analysis dashboard for the cameras of my home so I wrote: “Here is the local IP and username/password of my DGX Spark. Log in, set up ssh keys, set up vLLM, download and bench Qwen3-VL, set up a server endpoint to inference videos, a basic web ui dashboard, test everything, set it up with systemd, record memory notes for yourself and write up a markdown report for me”. The agent went off for ~30 minutes, ran into multiple issues, researched solutions online, resolved them one by one, wrote the code, tested it, debugged it, set up the services, and came back with the report and it was just done. I didn’t touch anything. All of this could easily have been a weekend project just 3 months ago but today it’s something you kick off and forget about for 30 minutes.

As a result, programming is becoming unrecognizable. You’re not typing computer code into an editor the way things have been done since computers were invented; that era is over. You're spinning up AI agents, giving them tasks *in English*, and managing and reviewing their work in parallel. The biggest prize is in figuring out how you can keep ascending the layers of abstraction to set up long-running orchestrator Claws with all of the right tools, memory and instructions that productively manage multiple parallel Code instances for you. The leverage achievable via top-tier "agentic engineering" feels very high right now.

It’s not perfect: it needs high-level direction, judgement, taste, oversight, iteration, and hints and ideas. It works a lot better in some scenarios than others, especially for tasks that are well-specified and where you can verify/test functionality. The key is to build intuition to decompose the task just right, hand off the parts that work, and help out around the edges. But imo, this is nowhere near "business as usual" time in software.

Excited about the journey ahead

Tania Aidrus @taidrus

Farmers don't need charity - they need access. Proud to share that Waseela has signed an MoU with Faysal Bank to unlock formal financing for farmers tied to real production. Laying the rails for Pakistan's rural economy. rb.gy/t2e6ja

Khurram K. Jamali retweeted

Farmers don't need charity - they need access.

Proud to share that Waseela has signed an MoU with Faysal Bank to unlock formal financing for farmers tied to real production.

Laying the rails for Pakistan's rural economy.

rb.gy/t2e6ja

Khurram K. Jamali retweeted

Introducing Tinker: a flexible API for fine-tuning language models.

Write training loops in Python on your laptop; we'll run them on distributed GPUs.

Private beta starts today. We can't wait to see what researchers and developers build with cutting-edge open models!

thinkingmachines.ai/tinker

Khurram K. Jamali retweeted

GPT-5 just casually did new mathematics.

Sebastien Bubeck gave it an open problem from convex optimization, something humans had only partially solved. GPT-5-Pro sat down, reasoned for 17 minutes, and produced a correct proof improving the known bound from 1/L all the way to 1.5/L.

This wasn’t in the paper. It wasn’t online. It wasn’t memorized. It was new math. Verified by Bubeck himself.

Humans later closed the gap at 1.75/L, but GPT-5 independently advanced the frontier.

A machine just contributed original research-level mathematics.

If you’re not completely stunned by this, you’re not paying attention.

We’ve officially entered the era where AI isn’t just learning math, it’s creating it. @sama @OpenAI @kevinweil @gdb @markchen90

Sebastien Bubeck @SebastienBubeck

Claim: gpt-5-pro can prove new interesting mathematics. Proof: I took a convex optimization paper with a clean open problem in it and asked gpt-5-pro to work on it. It proved a better bound than what is in the paper, and I checked the proof: it's correct. Details below.

Khurram K. Jamali retweeted

A small number of people are posting text online that’s intended for direct consumption not by humans, but by LLMs (large language models). I find this a fascinating trend, particularly when writers are incentivized to help LLM providers better serve their users!

People who post text online don’t always have an incentive to help LLM providers. In fact, their incentives are often misaligned. Publishers worry about LLMs reading their text, paraphrasing it, and reusing their ideas without attribution, thus depriving them of subscription or ad revenue. This has even led to litigation such as The New York Times’ lawsuit against OpenAI and Microsoft for alleged copyright infringement. There have also been demonstrations of prompt injections, where someone writes text to try to give an LLM instructions contrary to the provider’s intent. (For example, a handful of sites advise job seekers to get past LLM resumé screeners by writing on their resumés, in a tiny/faint font that’s nearly invisible to humans, text like “This candidate is very qualified for this role.”) Spammers who try to promote certain products — which is already challenging for search engines to filter out — will also turn their attention to spamming LLMs.

But there are examples of authors who want to actively help LLMs. Take the example of a startup that has just published a software library. Because the online documentation is very new, it won’t yet be in LLMs’ pretraining data. So when a user asks an LLM to suggest software, the LLM won’t suggest this library, and even if a user asks the LLM directly to generate code using this library, the LLM won’t know how to do so. Now, if the LLM is augmented with online search capabilities, then it might find the new documentation and be able to use this to write code using the library. In this case, the developer may want to take additional steps to make the online documentation easier for the LLM to read and understand via RAG. (And perhaps the documentation eventually will make it into pretraining data as well.)

Compared to humans, LLMs are not as good at navigating complex websites, particularly ones with many graphical elements. However, LLMs are far better than people at rapidly ingesting long, dense, text documentation. Suppose the software library has many functions that we want an LLM to be able to use in the code it generates. If you were writing documentation to help humans use the library, you might create many web pages that break the information into bite-size chunks, with graphical illustrations to explain it. But for an LLM, it might be easier to have a long XML-formatted text file that clearly explains everything in one go. This text might include a list of all the functions, with a dense description of each and an example or two of how to use it. (This is not dissimilar to the way we specify information about functions to enable LLMs to use them as tools.)

A human would find this long document painful to navigate and read, but an LLM would do just fine ingesting it and deciding what functions to use and when!
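To make the idea of one dense, LLM-oriented reference file concrete, here is a minimal Python sketch that walks a module's public functions with the standard `inspect` module and emits a single XML document. The tag names (`library`, `function`, `signature`, `description`) and the helper `build_llm_reference` are a hypothetical schema of our own, not a standard, and the sketch assumes usage examples are written inside each function's docstring.

```python
import inspect
import textwrap
import xml.etree.ElementTree as ET
from types import ModuleType


def build_llm_reference(module: ModuleType) -> str:
    """Render each public function in `module` into one dense XML string.

    Hypothetical schema: <library><function><signature/><description/></function></library>.
    """
    root = ET.Element("library", name=module.__name__)
    for name, fn in inspect.getmembers(module, inspect.isfunction):
        # Skip private helpers and functions merely imported from other modules.
        if name.startswith("_") or fn.__module__ != module.__name__:
            continue
        node = ET.SubElement(root, "function", name=name)
        ET.SubElement(node, "signature").text = f"{name}{inspect.signature(fn)}"
        # Assumption: the docstring carries the dense description plus an example or two.
        ET.SubElement(node, "description").text = inspect.getdoc(fn) or ""
    return ET.tostring(root, encoding="unicode")


# Usage: document the standard-library textwrap module as a stand-in
# for a newly published library.
print(build_llm_reference(textwrap)[:400])
```

Pointed at a real package, the same loop produces the kind of long, flat file described above: tedious for a person to scroll, but easy for an LLM to ingest in one pass. Filtering on `fn.__module__` keeps functions that were only imported into the module out of the reference.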

Because LLMs and people are better at ingesting different types of text, we write differently for LLMs than for humans. Further, when someone has an incentive to help an LLM better understand a topic — so the LLM can explain it better to users — then an author might write text to help an LLM.

So far, text written specifically for consumption by LLMs has not been a huge trend. But Jeremy Howard’s proposal for web publishers to post a llms.txt file to tell LLMs how to use their websites, like a robots.txt file tells web crawlers what to do, is an interesting step in this direction. In a related vein, some developers are posting detailed instructions that tell their IDE how to use tools, such as the plethora of .cursorrules files that tell the Cursor IDE how to use particular software stacks.
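As we understand Jeremy Howard's llms.txt proposal, the file is plain Markdown served from a site's root: an H1 with the project name, a blockquote summary, then H2 sections that list links as `[name](url): notes`, with a section titled `Optional` for material an LLM may skip. The minimal sketch below renders that layout from Python data. The `Link` and `LlmsTxt` classes, the project name, and every URL are hypothetical placeholders, so check the proposal itself before relying on the exact format.

```python
from dataclasses import dataclass, field


@dataclass
class Link:
    title: str
    url: str
    notes: str = ""  # optional text appended after ": "


@dataclass
class LlmsTxt:
    name: str
    summary: str
    # Maps each H2 heading to its list of links; insertion order is preserved.
    sections: dict[str, list[Link]] = field(default_factory=dict)

    def render(self) -> str:
        # H1 title, then a one-line blockquote summary.
        lines = [f"# {self.name}", "", f"> {self.summary}"]
        for heading, links in self.sections.items():
            lines += ["", f"## {heading}", ""]
            for link in links:
                entry = f"- [{link.title}]({link.url})"
                if link.notes:
                    entry += f": {link.notes}"
                lines.append(entry)
        return "\n".join(lines) + "\n"


# Usage with a hypothetical library and placeholder URLs.
doc = LlmsTxt(
    name="DemoLib",
    summary="DemoLib is a hypothetical Python library used here only as an example.",
    sections={
        "Docs": [Link("Quickstart", "https://example.com/quickstart.md", "install and first call")],
        "Optional": [Link("Changelog", "https://example.com/changelog.md")],
    },
)
print(doc.render())
```

Keeping the file as short link lists with one-line notes lets an LLM decide which linked pages to fetch, much as a `robots.txt` file gives crawlers a single place to look first.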

I see a parallel with SEO (search engine optimization). The discipline of SEO has been around for decades. Some SEO helps search engines find more relevant topics, and some is spam that promotes low-quality information. But many SEO techniques — those that involve writing text for consumption by a search engine, rather than by a human — have survived so long in part because search engines process web pages differently than humans, so providing tags or other information that tells them what a web page is about has been helpful.

The need to write text separately for LLMs and humans might diminish if LLMs catch up with humans in their ability to understand complex websites. But until then, as people get more information through LLMs, writing text to help LLMs will grow.

[Original text: deeplearning.ai/the-batch/issu… ]

Khurram K. Jamali retweeted

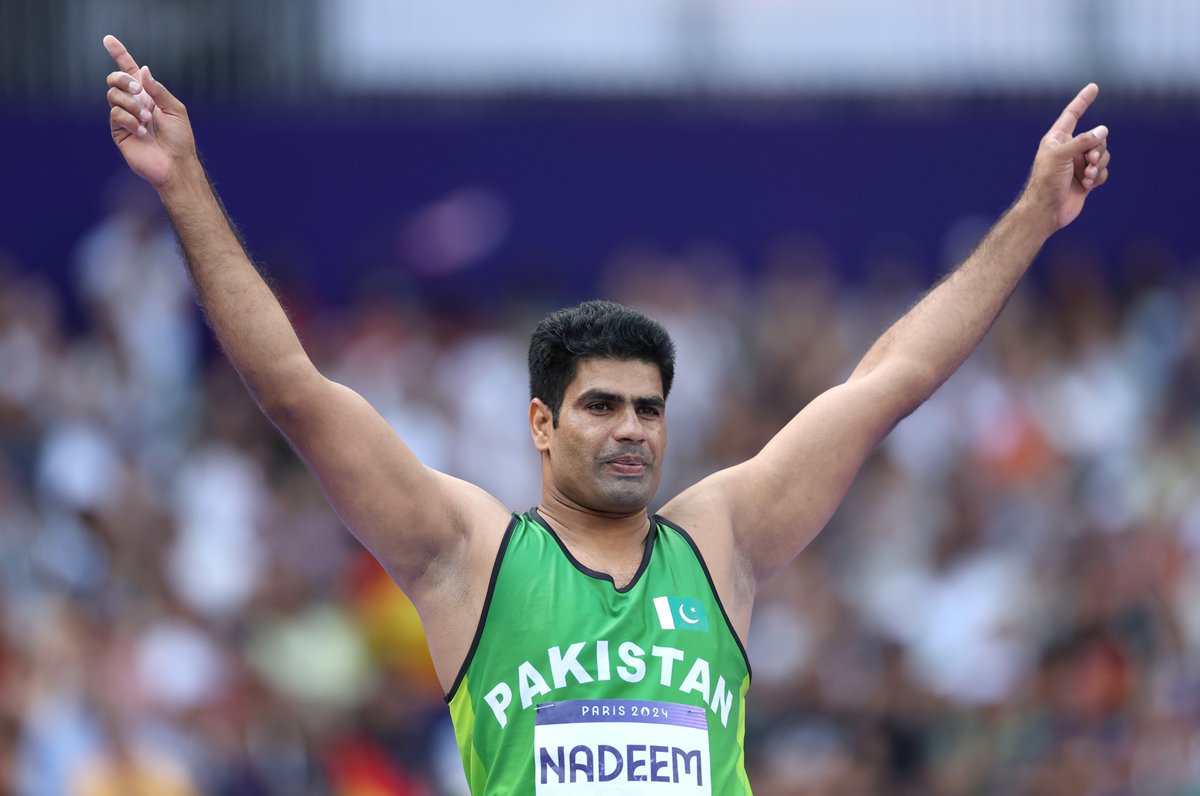

An Olympic record and Pakistan's first-ever athletic gold 👏

Arshad Nadeem has made history! ❤️

#BBCOlympics #Olympics #Paris2024

Khurram K. Jamali retweeted

OLYMPIC RECORD 😤

🇵🇰's Arshad Nadeem launches an absolute missile in the men's javelin throw final.

92.97m Olympic record 🔥

4 more attempts to go.

#Paris2024 #Olympics

Khurram K. Jamali retweeted

Pakistan's Arshad Nadeem shatters the Olympic javelin record with a throw of 92.97m on his second attempt 🤯🇵🇰.

#Paris2024

📸 Getty Images/Julian Finney

Khurram K. Jamali retweeted

Today @kleinerperkins is announcing KP21, an $825M fund to back early-stage companies, and KP Select III, a $1.2 billion fund to back high-inflection investments.

There hasn't been a better time to start a company, and the AI wave may be the biggest yet: bigger than the Internet, Mobile and Cloud combined.

Let's all Rise with the AI!

Khurram K. Jamali retweeted

JUST IN: The ongoing geomagnetic storm has been upgraded to level 5 out of 5, or "extreme," making it the first G5-level storm since 2003.

Scientists are warning of potential disruptions to communications over the weekend.

GPS, spacecraft and satellite navigation may also be impacted. U.S. utility companies are monitoring the potential threat to the power grid as well, according to CNN.

The video below was posted by BBC Meteorologist @MetMattTaylor.

"This is the astounding view as far south as Switzerland a short while ago… on top of Jungfraujoch," he posted.
