Lukas Wolf

509 posts

@lukaswolf_

Co-founder @sonia_health (YC W24). Prev AI research @MIT @ETH.

San Francisco · Joined April 2019

5.1K Following · 910 Followers

Pinned Tweet

Lukas Wolf Retweeted

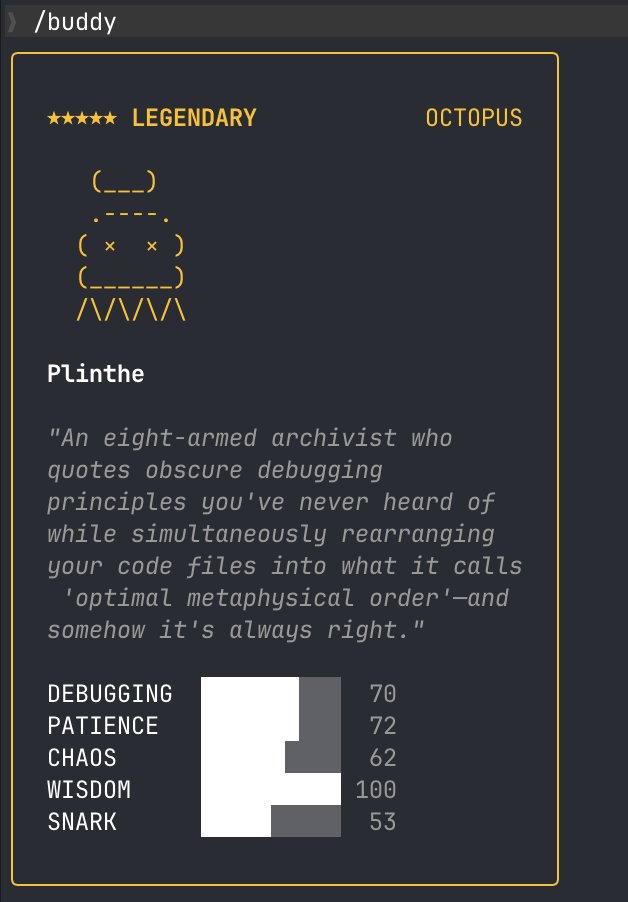

introducing 🐺 AlphaClaw, the ultimate setup harness for @openclaw. open-source, self-managed, free-to-use, with no lock-in.

AlphaClaw makes OpenClaw setup and maintenance easier by providing an elegant GUI that wraps OpenClaw's CLI.

📅 Google Workspace OAuth & pubsub built in w/ gog-cli

🔄 Auto-backup to GitHub

🧱 Prompt hardening reduces agent drift

🩺 Drift Doctor analyzes your prompts and workspace for drift

💬 Telegram multi-topic workspace setup wizard

📂 Full file browser and editor, no SSH needed

🐕 Watchdog auto-detects crashes, self-heals gateway

🛠️ Manage env vars from the UI

🔑 Manage model keys & OAuth visually

🪝 Webhook creator & inspector with replay & debug

📊 Token usage & cost analytics built in

⬆️ One-click updates, no redeploy needed

📦 Import existing setup from GitHub

i didn't build alphaclaw to replace openclaw or compete with it. openclaw is the best user-owned AI agent framework out there and more people should be able to use it without wrestling a CLI for two hours.

there are so many managed "deploy your AI in seconds" products. but they lock you into their platform. if they pivot, shut down, or jack up pricing, your agent goes with it.

alphaclaw gives you that same one-click simplicity, but everything runs on your infra with your data. no proprietary backend. no config hostage. if railway disappears tomorrow, you still have a standard openclaw instance backed up to your own github repo.

everything alphaclaw does, you could do manually. it's just automation and UI on top of the real thing. outgrow it? disagree with its opinions? eject. your openclaw instance is still a standard openclaw instance.

to make it convenient, i’ve created one-click deploy templates on both railway and render so you can start quickly. make sure you have 8GB of ram on your instance.

look forward to your feedback and to building this out with the @openclaw community! 🦞

github.com/chrysb/alphacl…

feature deep-dive in the 🧵

@bcherny That’s really nice. So far I had additional Claude instances open to babysit my PRs haha

Released today: /loop

/loop is a powerful new way to schedule recurring tasks, for up to 3 days at a time

eg. “/loop babysit all my PRs. Auto-fix build issues and when comments come in, use a worktree agent to fix them”

eg. “/loop every morning use the Slack MCP to give me a summary of top posts I was tagged in”

Let us know what you think!

@dakshgup That’s cool. We built a slash command for Claude Code that addresses Greptile's review comments for us :)

@claudeai Can we schedule them in the cloud? I would want to run scheduled tasks even if my laptop is turned off

Cowork is in research preview on macOS or Windows, and is available on all paid Claude plans.

Try now: claude.com/cowork

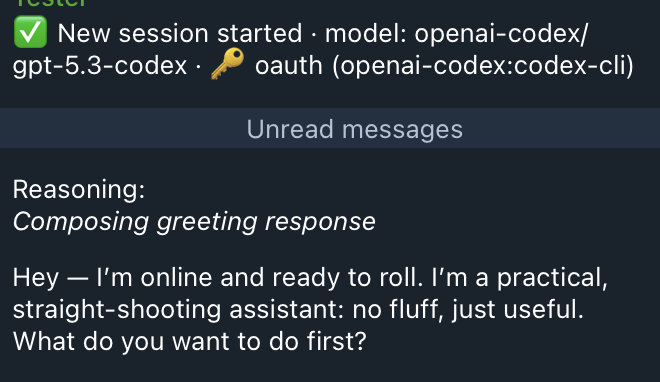

@chrysb @AnthropicAI I wonder what OpenAI did with their dataset, the likelihood of “no fluff” of gpt models is unlike anything you can find online or anywhere really. They must have a synthetic no fluff data engineer

Lukas Wolf Retweeted

~80% of my team now has their own @openclaw agent

We had a Sunday jam session, and we’re now up to ~16 of 20 folks

I’m considering racking everyone into two Mac studios at this point, so we’re unconstrained with tokens and the ability to simulate (spoof?) human behavior on an actual desktop.

Many services are going to ban OpenClaw, and some already limit you (Reddit, X, LinkedIn, etc.), so having a computer that acts exactly like a human feels like an edge on those services, which keep pushing folks to their APIs

Discuss

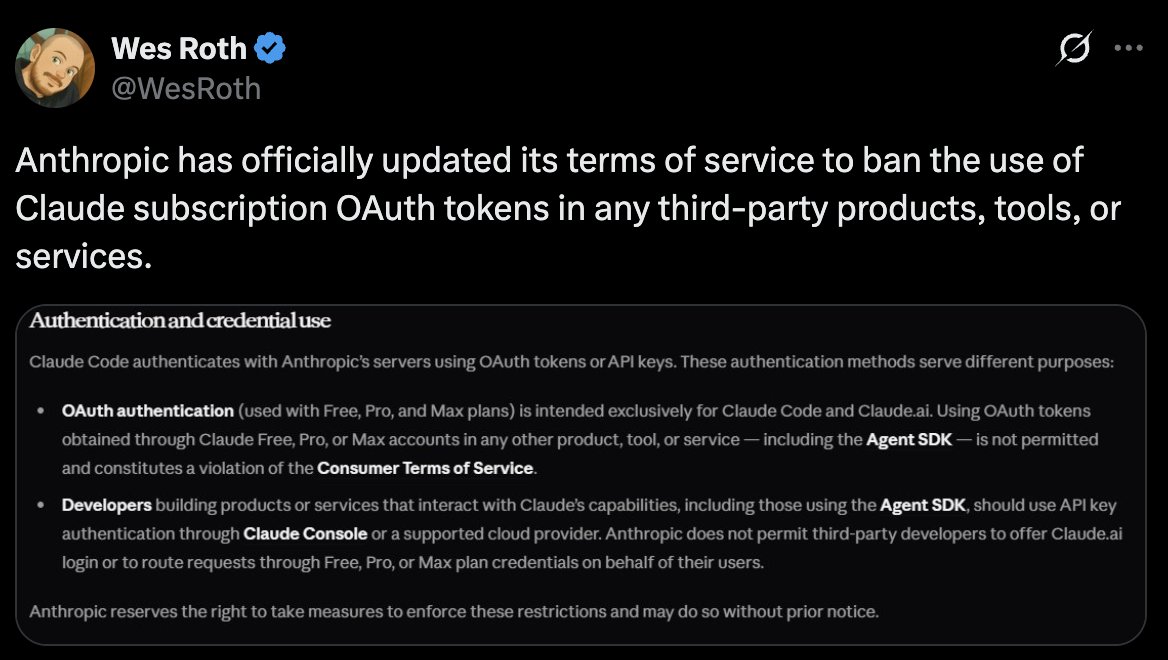

@chrysb @AnthropicAI @Google 100%. Got a ChatGPT pro subscription just because of this after having canceled it for a $200 Claude subscription

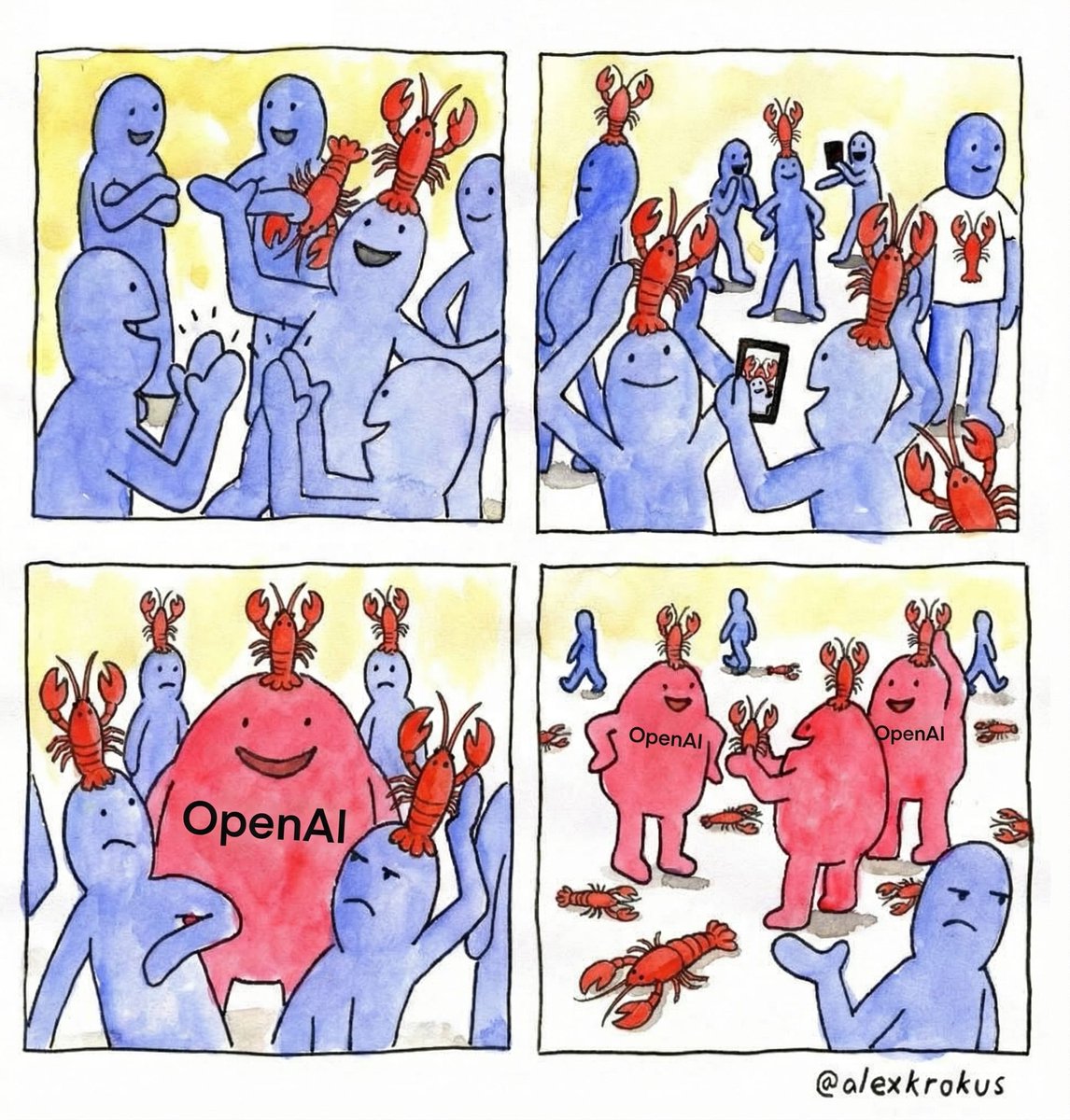

imagine banning your most engaged users to serve your “actual users”.

imagine being the engineers on the team implementing these controls, instead of adding value to the ecosystem.

@AnthropicAI and @Google have committed to a fight they can’t win. the people have spoken, and unless they remove oauth tokens entirely, they’re going to be playing whack-a-mole for all of eternity while they bleed developer mindshare to @OpenAI who has sensibly allowed use of their subscription because they're playing the long game.

this is the RIAA suing napster users all over again. spotify solved piracy by making it easy to pay. the answer was never more lawsuits, it was better packaging.

personal agent builders don't want to count tokens. they want to pay $200/mo (or more) and not think about it. it's the same psychology behind unlimited phone plans. predictable costs lower friction more than cheap costs do.

"but API revenue is a separate business line"

it's a packaging problem, not a margin problem. providers can tier it. $20/mo for casual use, $200/mo (or more) for power users who want to pipe it through whatever they want. the margins are there if you design the tiers right.

"but abuse and rate limiting"

you already handle this with API rate limits. a subscription tier with reasonable throughput caps is not a new engineering problem.
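for illustration, a throughput cap of this kind is often built as a token bucket. the sketch below is a minimal version under assumed numbers: the class name, rate, and capacity are hypothetical and not taken from any provider's actual limits.

```python
import time


class TokenBucket:
    """Allows bursts up to `capacity`, refilled at `rate` units per second."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate          # units added back per second (assumed value)
        self.capacity = capacity  # largest burst a subscriber can spend at once
        self.clock = clock        # injectable so the limiter can be tested
        self.tokens = capacity
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill for the elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if cost <= self.tokens:
            self.tokens -= cost
            return True
        return False


if __name__ == "__main__":
    # Hypothetical tier: 2 requests per second, bursts of up to 5.
    bucket = TokenBucket(rate=2.0, capacity=5.0)
    print([bucket.allow() for _ in range(7)])
```

a provider could keep one bucket per subscriber and per tier, which is the same mechanism already used for API keys.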

"but platform lock-in"

the provider that opens up first wins the ecosystem. android vs blackberry. the one that lets developers build freely on top will capture more value long-term than the one hoarding access behind walled gardens.

the current split where consumer subs are cheap but locked down and API access is expensive but open creates a weird middle ground where power users are neither served nor deterred. they’ll just keep hacking around it.

lean into the demand. package it properly. the first provider to offer the best "use your sub anywhere" tier wins the developer market.

banning is bad for business.

unpopular (maybe?) opinion: MCP is dead in the water

@openclaw has shown me that api & cli will win.

every MCP server you connect loads its tool definitions into your context window. name, description, parameter schema, all of it. connect 10 servers with 5 tools each and you've burned 50 tool definitions worth of tokens before your conversation even starts.
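a back-of-envelope sketch of that arithmetic. the tool schema here is made up, and the estimate uses the rough "about 4 characters per token" rule of thumb, so real servers and real tokenizers will give different numbers.

```python
import json


def make_tool(server: int, tool: int) -> dict:
    # Hypothetical tool definition shaped like a typical JSON-schema tool spec.
    # Field names and sizes are illustrative, not from any real MCP server.
    return {
        "name": f"server{server}_tool{tool}",
        "description": "Does one specific thing against an external service. " * 3,
        "input_schema": {
            "type": "object",
            "properties": {
                "query": {"type": "string", "description": "What to look up."},
                "limit": {"type": "integer", "description": "Max results to return."},
            },
            "required": ["query"],
        },
    }


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    # Crude heuristic, not a real tokenizer.
    return int(len(text) / chars_per_token)


def context_overhead(servers: int, tools_per_server: int) -> tuple[int, int]:
    # Returns (number of tool definitions, estimated tokens they occupy).
    tools = [make_tool(s, t) for s in range(servers) for t in range(tools_per_server)]
    total = sum(estimate_tokens(json.dumps(tool)) for tool in tools)
    return len(tools), total


if __name__ == "__main__":
    count, tokens = context_overhead(servers=10, tools_per_server=5)
    print(f"{count} tool definitions, roughly {tokens} tokens before the first message")
```

the point is the scaling: the overhead grows linearly with every tool connected, whether or not the conversation ever uses it.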

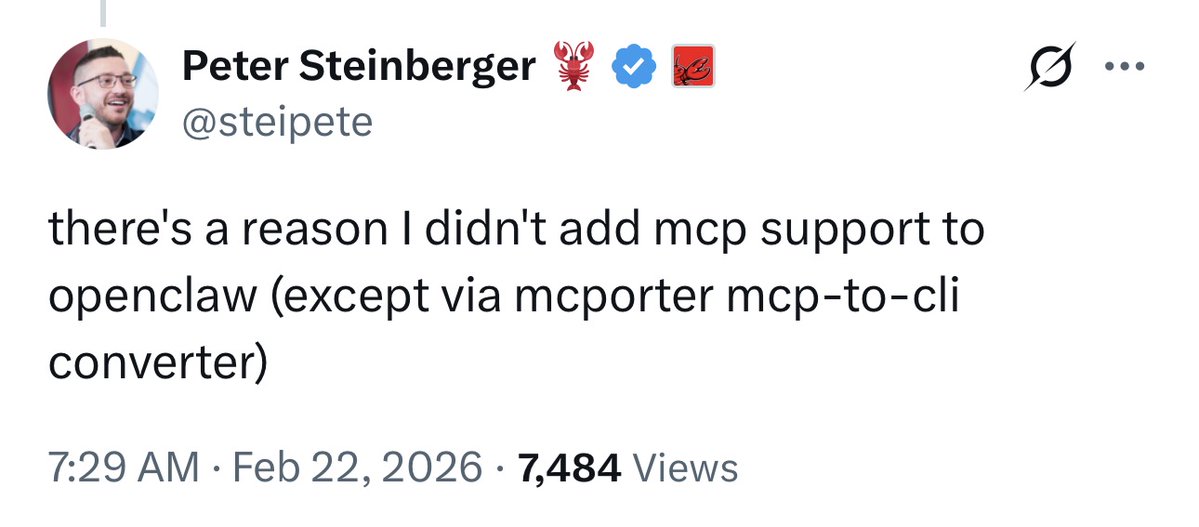

context bloat will never be a good thing - performance-wise or economically. i assume this is why @steipete left it out of @openclaw.

the "exec" tool paired with on-demand skills is all you need.

it can run any command invented since the beginning of computers. a resurgence of glory for ancient, but powerful tools like curl, sed, awk, grep. command line tools once mastered by the greats, but long forgotten and buried underneath abstractions developed for us lesser mortals.

now available to us all, piloted by the smartest models on earth. every founder gets their own mass army of greybeards.

the inertia required for MCP adoption, imo, is too great to overcome the momentum @openclaw has breathed into api + cli + skills.

the common defenses people bring up:

• "MCP gives you typed schemas and validation" — so does a well-documented CLI

• "MCP gives you explicit permissions" — so does a sandbox with an allowlist

• "MCP is a standard" — a standard that scales poorly is still a standard that scales poorly
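a minimal sketch of the allowlist idea, assuming a hypothetical `run_exec` wrapper around an agent's exec tool. the names and the allowlist contents are illustrative and not OpenClaw's actual implementation. it only shows the name check, a real sandbox would also isolate the filesystem and network.

```python
import shlex
import subprocess

# Hypothetical allowlist: the only executables the agent may invoke.
ALLOWED = {"curl", "grep", "sed", "awk"}


def is_allowed(command: str, allowlist: set[str] = ALLOWED) -> bool:
    # Split the way a POSIX shell would, then check only the executable name.
    try:
        argv = shlex.split(command)
    except ValueError:
        return False  # unbalanced quotes and similar parse errors
    return bool(argv) and argv[0] in allowlist


def run_exec(command: str, timeout: float = 10.0) -> str:
    if not is_allowed(command):
        raise PermissionError(f"command not on allowlist: {command!r}")
    # Passing a list without shell=True means pipes, ';' and '&&' are treated
    # as literal arguments instead of being interpreted by a shell.
    result = subprocess.run(
        shlex.split(command), capture_output=True, text=True, timeout=timeout
    )
    return result.stdout
```

a name-based check is only one layer: tools like awk and sed can themselves run other programs, which is why the sandbox around it still matters.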

lastly, i've heard many MCP servers are just wrapping existing APIs - that kind of redundancy and unnecessary indirection should be a red flag.

so, let's drop it and redirect our efforts into cli tools & apis with accompanying skills.

what I would be working on if I started another company today

Anthropic@AnthropicAI

Software engineering makes up ~50% of agentic tool calls on our API, but we see emerging use in other industries. As the frontier of risk and autonomy expands, post-deployment monitoring becomes essential. We encourage other model developers to extend this research.

Nobody knows how anything works

Simon Willison@simonw

Short musings on "cognitive debt" - I'm seeing this in my own work, where excessive unreviewed AI-generated code leads me to lose a firm mental model of what I've built, which then makes it harder to confidently make future decisions simonwillison.net/2026/Feb/15/co…

Fun feature request for @cursor_ai: for an open file, show how many tokens the file has (using the currently selected LLM's tokenizer)
