vitalik.eth (@VitalikButerin)

I was recently at Real World Crypto (that's crypto as in cryptography) and the associated side events, and what struck me was how clarifying the experience was for understanding *what blockchains are for*.

We blockchain people (myself included) often have a tendency to start off from the perspective that we are Ethereum, and therefore we need to go around and find use cases for Ethereum - and generate arguments for why sticking Ethereum into all kinds of places is beneficial.

But recently I have been thinking from a different perspective. For a moment, let us forget that we are "the Ethereum community". Rather, we are maintainers of the Ethereum tool, and members of the {CROPS (censorship-resistant, open-source, private, secure) tech | sanctuary tech | non-corposlop tech | d/acc | ...} community. Going in with zero attachment to Ethereum specifically, and entering a context (like RWC) where there are people with in-principle aligned values but no blockchain baggage, can we re-derive from zero in what places Ethereum adds the most value?

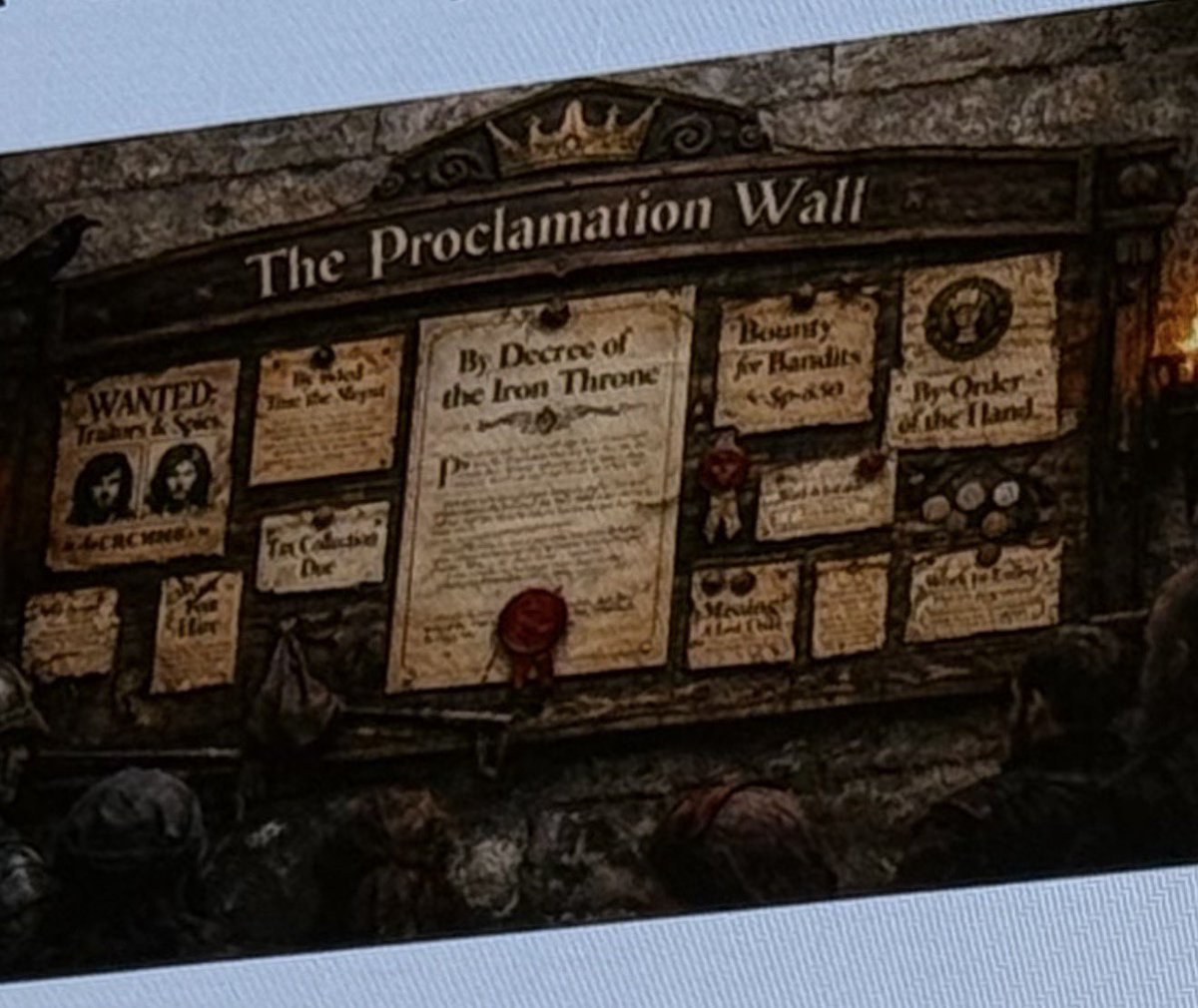

From attending the events, the first answer that comes up is actually not what you think. It's not smart contracts, it's not even payments. It's what cryptographers call a "public bulletin board".

See, lots of cryptographic protocols - including secure online voting, secure software and website version control, certificate revocation... - all require some publicly writable and readable place where people can post blobs of data. This does not require any computation functionality. In fact, it does not directly require money - though it does _indirectly_ require money, because if you want permissionless anti-spam it has to be economic. The only thing it _fundamentally_ requires is data availability.
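To make the bulletin board idea concrete, here is a minimal in-memory sketch. It is not how Ethereum blobs work; it only illustrates the two properties named above: anyone can post and read, and posting costs money in proportion to size. The class name, `fee_per_byte`, and the hash-chaining of entries are all assumptions made for illustration.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class BulletinBoard:
    """Toy append-only board: anyone may post a blob if they pay a per-byte fee."""
    fee_per_byte: int                      # hypothetical anti-spam price, arbitrary units
    entries: list = field(default_factory=list)

    def post(self, blob: bytes, payment: int) -> str:
        cost = self.fee_per_byte * len(blob)
        if payment < cost:
            raise ValueError(f"payment {payment} below required fee {cost}")
        # Chain each entry to the previous head so readers can detect
        # reordering or modification of earlier posts.
        prev = self.entries[-1][0] if self.entries else "0" * 64
        head = hashlib.sha256(bytes.fromhex(prev) + blob).hexdigest()
        self.entries.append((head, blob))
        return head

    def read_all(self) -> list:
        return [blob for _, blob in self.entries]

    def verify(self) -> bool:
        """Recompute the hash chain from scratch and compare with stored heads."""
        prev = "0" * 64
        for head, blob in self.entries:
            if hashlib.sha256(bytes.fromhex(prev) + blob).hexdigest() != head:
                return False
            prev = head
        return True
```

Note that nothing here computes over the blobs. The board only stores them, orders them, and charges for space, which is the point of the paragraph above.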

And it just so happened that Ethereum recently did an upgrade (PeerDAS) to increase the amount of data availability it provides by 2.3x, with a path to going another 10-100x higher!

Next, payments. Many protocols require payments for many reasons. Some things need to be charged for to reduce spam. Other things need to be charged for because they are services provided by someone who expends resources and needs to be compensated. If you want a permissionless API that does not get spammed to death, you need payments. And Ethereum + ZK payment channels (eg. ethresear.ch/t/zk-api-usage… ) is one of the best payment systems for APIs you can come up with.
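As a rough illustration of why payment channels suit APIs, here is a sketch of a plain (non-ZK) unidirectional channel: the client locks a deposit once, then attaches a voucher for a growing cumulative amount to each request. This is not the scheme from the linked post, and it has none of its privacy properties. `ApiServer`, `sign_voucher`, and the use of HMAC as a stand-in for a real digital signature are assumptions for the sake of a runnable example.

```python
import hashlib
import hmac


def sign_voucher(key: bytes, channel_id: str, cumulative: int) -> bytes:
    # HMAC is a stand-in for a real signature. With a shared key the server
    # could forge vouchers, so a real channel would use public-key signatures.
    msg = f"{channel_id}:{cumulative}".encode()
    return hmac.new(key, msg, hashlib.sha256).digest()


class ApiServer:
    """Serves a request only if the voucher pays for one more call."""

    def __init__(self, key: bytes, channel_id: str, deposit: int, price_per_call: int):
        self.key = key
        self.channel_id = channel_id
        self.deposit = deposit            # amount the client locked up front
        self.price_per_call = price_per_call
        self.best_paid = 0                # highest cumulative amount seen so far
        self.best_voucher = None          # what the server would settle with

    def handle_request(self, cumulative: int, voucher: bytes) -> str:
        expected = sign_voucher(self.key, self.channel_id, cumulative)
        if not hmac.compare_digest(expected, voucher):
            raise PermissionError("bad voucher")
        if cumulative > self.deposit:
            raise PermissionError("voucher exceeds deposit")
        if cumulative - self.best_paid < self.price_per_call:
            raise PermissionError("voucher does not pay for a new call")
        self.best_paid = cumulative
        self.best_voucher = voucher
        return "ok"
```

Only the opening deposit and the final settlement would need to touch the chain. Every request in between is paid for off-chain, which is what keeps per-call costs low.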

If you are making a private and secure application (eg. a messenger, or many other things), and you do not want to let people spam the system by creating a million accounts and then uploading a gigabyte-sized video on each one, you need sybil resistance. And if you care about security and privacy, you really should care about permissionless participation (ie. don't have mandatory phone number dependency). ETH payment as an anti-sybil tool is a natural backstop in such use cases.
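The economics can be sketched in a few lines. Assume, hypothetically, that each account costs a small fee to open and comes with a fixed upload quota. An attacker who wants to push a given volume of data through the system then has to pay for enough accounts to cover it. The names and numbers below are illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class Registry:
    account_fee: int          # hypothetical price to open an account, arbitrary units
    quota_bytes: int          # hypothetical upload allowance per paid account
    used: dict = field(default_factory=dict)

    def register(self, account_id: str, payment: int) -> None:
        # No phone number or identity check: payment is the only gate.
        if payment < self.account_fee:
            raise ValueError("insufficient payment")
        if account_id in self.used:
            raise ValueError("account exists")
        self.used[account_id] = 0

    def upload(self, account_id: str, size: int) -> None:
        if account_id not in self.used:
            raise KeyError("unknown account")
        if self.used[account_id] + size > self.quota_bytes:
            raise ValueError("quota exceeded")
        self.used[account_id] += size


def attack_cost(registry: Registry, total_bytes: int) -> int:
    """Minimum total fees needed to upload total_bytes across fresh accounts."""
    accounts_needed = -(-total_bytes // registry.quota_bytes)  # ceiling division
    return accounts_needed * registry.account_fee
```

The cost to an honest user is one small fee, while the cost of spam grows linearly with the volume of spam.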

Finally, smart contracts. One major use case is _security deposits_: ETH put into lockboxes that provably get destroyed if a proof is submitted that the owner violated some protocol rule. Another is actually implementing things like ZK payment channels. A third is making it easy to have pointers to "digital objects" that represent some socially defined external entity (not necessarily an RWA!), and for those pointers to interact with each other.
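The security deposit pattern can be sketched as follows. The protocol rule used here, "never sign two different messages for the same slot", is an assumption chosen because a violation is easy to prove; the source does not name a specific rule. HMAC again stands in for a real signature scheme, and `Lockbox` and `toy_sign` are hypothetical names.

```python
import hashlib
import hmac


def toy_sign(key: bytes, slot: int, message: bytes) -> bytes:
    # Stand-in for a public-key signature over (slot, message).
    return hmac.new(key, f"{slot}|".encode() + message, hashlib.sha256).digest()


class Lockbox:
    """Holds a deposit that is destroyed if the owner is proven to have equivocated."""

    def __init__(self, owner_key: bytes, deposit: int):
        self.owner_key = owner_key   # in a real contract: the owner's public key
        self.deposit = deposit
        self.burned = 0

    def _valid(self, slot: int, message: bytes, sig: bytes) -> bool:
        return hmac.compare_digest(toy_sign(self.owner_key, slot, message), sig)

    def submit_violation_proof(self, slot: int, msg_a: bytes, sig_a: bytes,
                               msg_b: bytes, sig_b: bytes) -> bool:
        # A valid proof is two *different* messages, both validly signed
        # by the owner for the same slot.
        if msg_a == msg_b:
            return False
        if not (self._valid(slot, msg_a, sig_a) and self._valid(slot, msg_b, sig_b)):
            return False
        self.burned += self.deposit   # destroyed, not paid out to anyone
        self.deposit = 0
        return True

    def withdraw(self) -> int:
        amount, self.deposit = self.deposit, 0
        return amount
```

The check is purely mechanical: anyone holding the two conflicting signatures can trigger the burn, and nobody has to be trusted to judge whether a violation happened.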

*Technically*, for every use case other than use cases handling ETH itself, the smart contracts are "just a convenience": you could just use the chain as a bulletin board, and use ZK-SNARKs to provide the results of any computations over it. But in practice, standardizing such things is hard, and you get the most interoperability if you just take the same mechanism that enables programs to control ETH, and let other digital objects use it too.

And from here, we start getting into a huge number of potential applications, including all of the things happening in defi.

---

So yes, Ethereum has a lot of value that you can see from first principles if you take a step back and see it purely as a technical tool: global shared memory.

I suspect that a big bottleneck to seeing more of this kind of usage is that the world has not yet updated to the fact that we are no longer in 2020-22, fees are now extremely low, and we have a much stronger scaling roadmap to make sure that they will continue to stay low, even if much higher levels of usage return. Infrastructure for not exposing fee volatility to users is much more mature (eg. one way to do this for many use cases is to just operate a blob publisher).

Ethereum blobs as a bulletin board, ETH as an asset and universal-backup means of payment, and Ethereum smart contracts as a shared programming layer, all make total sense as part of a decentralized, private and secure open source software stack. But we should continue to improve the Ethereum protocol and infrastructure so that it's actually effective in all of these situations.