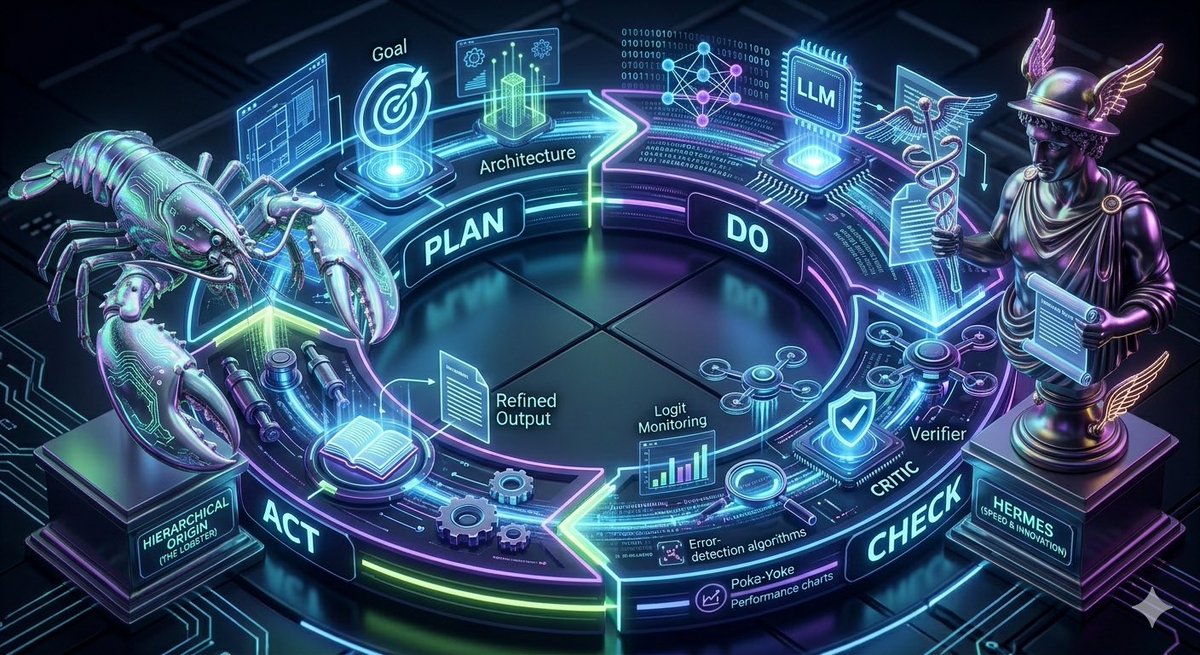

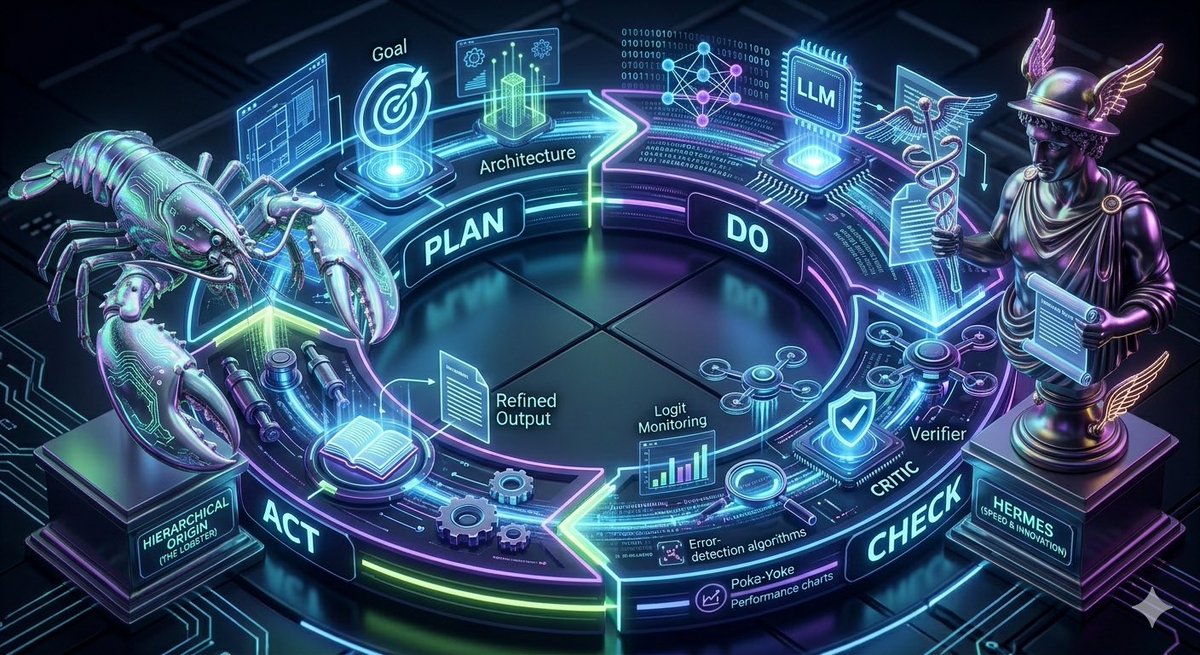

Modern “autoresearch” and “autonovel” frameworks are a reinterpretation of Total Quality Management (TQM), which grew out of W. Edwards Deming's work in the 1950s. @karpathy @NousResearch

plexsoup

@plexsoup

Mapmaker and Content Creator for Tabletop RPGs, primarily on https://t.co/iNwYqyiN1Q

it's been a longstanding dream of mine to build an ai system that can tell a compelling story. it's what got me started in the space in the beginning, and with Hermes Agent I finally pulled it off. 100% written, typeset, etc. by Hermes Agent. those at our gtc event got hard copies 🤗

here are some footprints in the sand.

The AI girls are making their own ComfyUI tutorials ☠️ (from u/aigirlvideos)