James Evans

486 posts

@profjamesevans

Max Palevsky Professor of Sociology, Computational & Data Science @UChicago, Santa Fe Institute, & Google. Tweeting about science, technology, and AI in society.

Excited to share our new @DSI_UChicago work accepted by ICLR 2026, with the remarkable Siyang Wu, Sida Li, Ari Holtzman @universeinanegg, and @profjamesevans James Evans! Link: lnkd.in/gd9YZNBh. A thread (1/n)

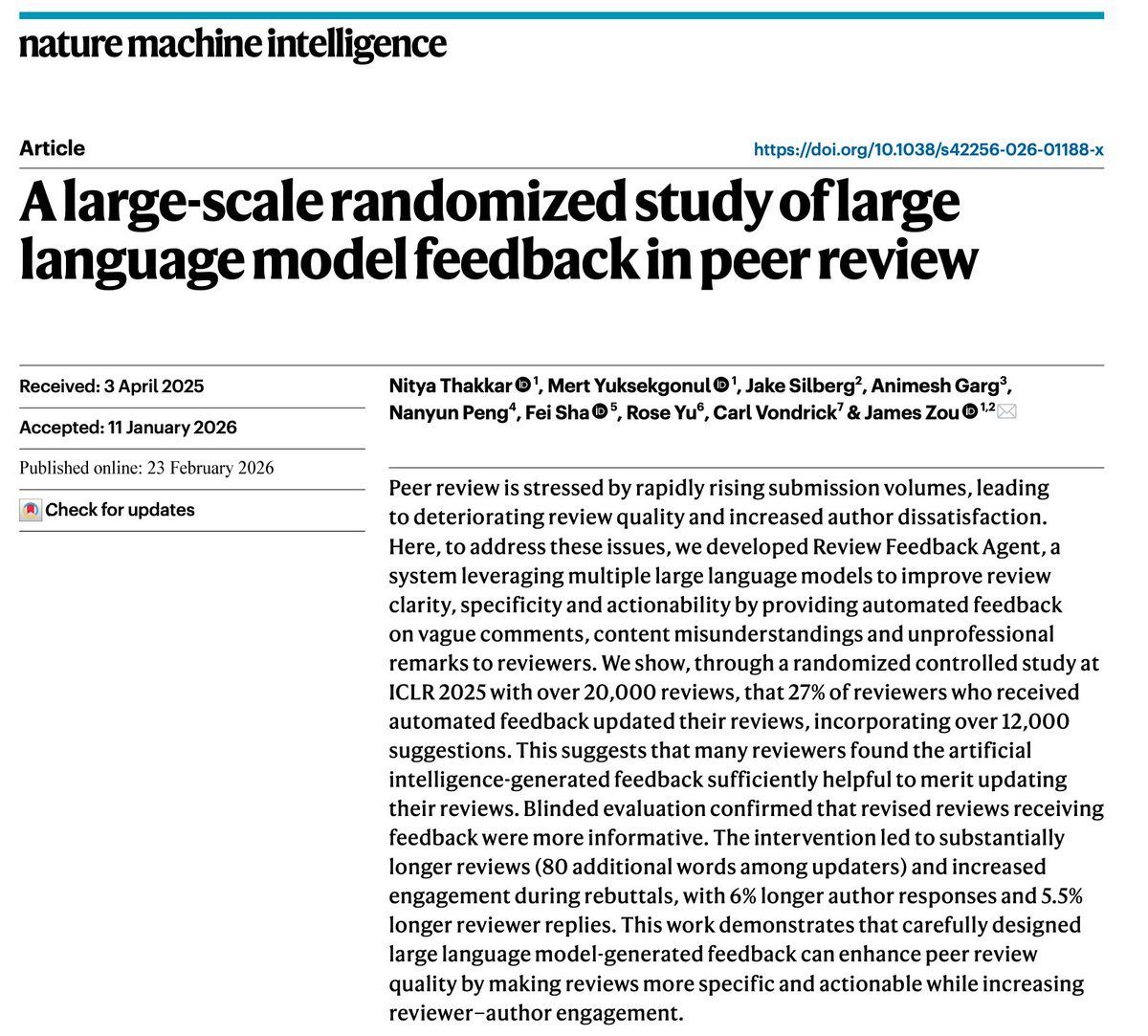

Excited to share that our paper has been published in Nature Machine Intelligence! We conducted a randomized controlled trial at ICLR 2025 with 20,000+ reviews to test whether LLM feedback improves peer review quality. Link: nature.com/articles/s4225…

Is the only way we can create algorithms that people understand to make them trivially simple? We argue, no. People can predict the behavior of algorithms that are arbitrarily complex, if and only if they are available, compact and aligned. arxiv.org/abs/2601.18966

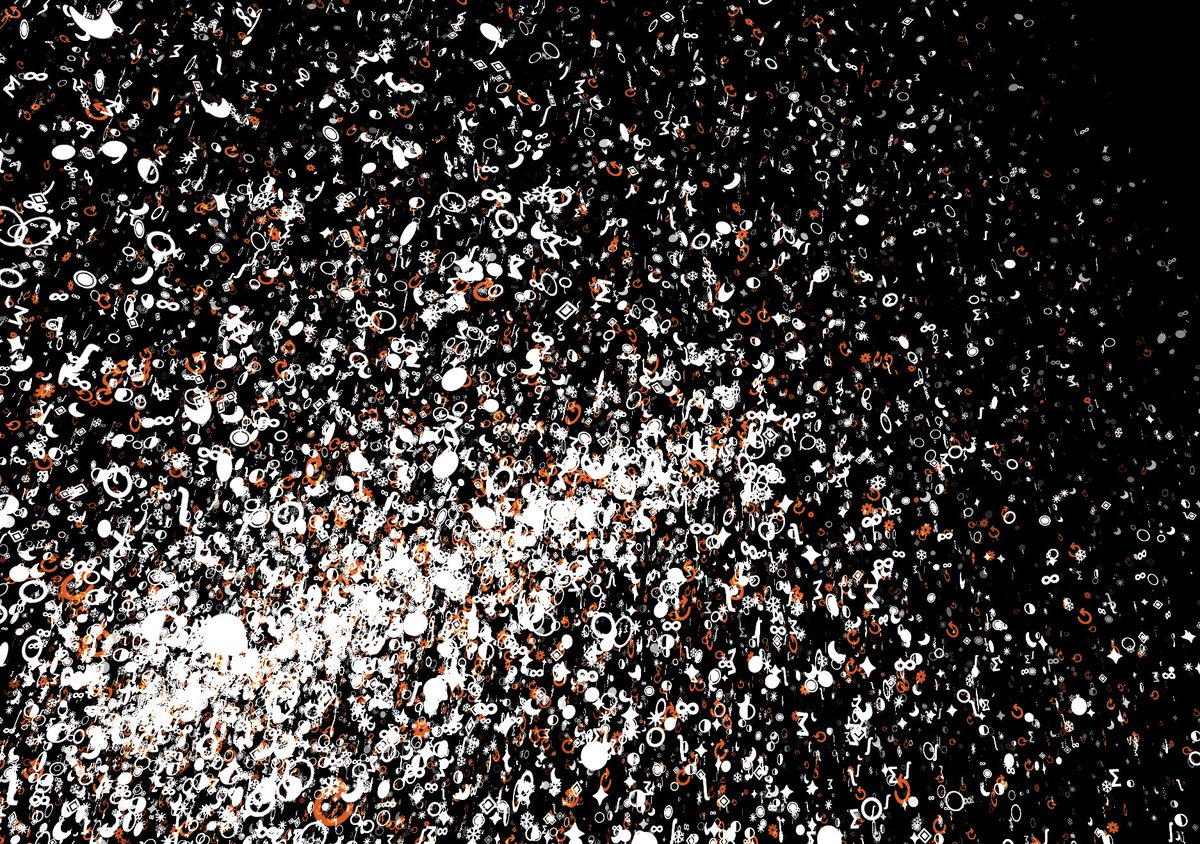

Thrilled to share our Nature paper, out today, on how AI use has shaped scientific careers and science as a whole (in collaboration with the amazing Qianyue Hao, @xu_fengli, and Li Yong from Tsinghua University and Zhongguancun Academy). We analyzed tens of millions of research papers spanning four decades of natural science to understand how AI is reshaping science. The findings reveal a paradox.

For individual scientists, AI is a career accelerator. Researchers who adopt AI publish 3 times more papers with fewer authors, receive 5 times more citations, and become research leaders more than a year earlier than peers who don't. AI papers appear ~20% more frequently in top-quartile journals. Their annual citations run 100% higher than those of non-AI papers across three decades of follow-up.

For science as a whole, AI is a narrowing force. AI-augmented research covers ~5% less topical ground and generates a quarter less engagement among follow-on researchers. The contraction appears in the vast majority of the 200+ subfields we examined. Citation patterns show a starker concentration: in AI research, just 22% of papers capture 80% of all citations.

The mechanism is straightforward: AI use has shifted toward areas where data is abundant, at an accelerating pace as models have grown larger. AI gravitates toward well-lit problems and away from foundational and emergent questions where data is necessarily sparse. The result is collective hill-climbing: everyone scaling the same popular peaks rather than searching for higher mountains. This creates "lonely crowds" in the scientific literature: clusters of researchers converging on identical problems without building on each other's work. The pattern holds across biology, chemistry, physics, medicine, materials science, and geology. It persists and intensifies through each wave of AI, from conventional machine learning through deep learning to today's generative models and LLMs.

This isn't inevitable. Models that are powerful at prediction can be inverted to identify what is surprising to those predictions, enabling us to consider and theorize the entailments of surprising new data and findings. But without deliberate intervention, local incentives have pushed, and will likely continue to push, scientists to optimize and compress what's already known rather than discover what isn't. The history of major discoveries is linked to new ways of seeing nature. If we want AI to accelerate breakthroughs rather than automate the familiar, we need AI systems tuned to surprise that expand sensory and experimental capacity, not just cognition.

Paper: rdcu.be/eY5f7
Science commentary: science.org/content/articl…
Nature commentary: nature.com/articles/d4158…
Nature podcast: nature.com/articles/d4158…
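The "22% of papers capture 80% of all citations" figure is a simple concentration statistic: rank papers from most to least cited and find the smallest share of them whose citations add up to 80% of the total. A minimal sketch of that arithmetic, assuming only a list of per-paper citation counts as input. The function name and toy numbers are hypothetical, and this is an illustration of the statistic, not the study's actual pipeline.

```python
def share_of_papers_for_citation_share(citations, target_percent=80):
    """Return the smallest fraction of papers whose citations sum to at least
    target_percent of all citations. Hypothetical helper, for illustration only."""
    counts = sorted(citations, reverse=True)  # most-cited papers first
    total = sum(counts)
    if total == 0:
        raise ValueError("citation counts must include at least one citation")
    running = 0
    for k, c in enumerate(counts, start=1):
        running += c
        # Integer form of running / total >= target_percent / 100, avoiding float rounding
        if running * 100 >= target_percent * total:
            return k / len(counts)
    return 1.0  # only reached if target_percent > 100


# Toy data (made up): six papers with 100 citations in total.
print(share_of_papers_for_citation_share([50, 30, 10, 5, 3, 2]))  # prints 0.3333333333333333
```

In this toy case two of six papers (about 33%) hold 80 of the 100 citations. On real data, a lower returned fraction means citations are more concentrated in a few papers.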