R. Alessio @ ETH | RL, Bandits, Exploration

@rssalessio

Postdoc at @BU_CDS with @aldopacchiano (https://t.co/Ekl0jGFIbd Lab). Interested in RL, Bandit problems and Adaptive Control.

Analysis of self-distillation. It works by increasing the model's confidence, and it does not generalize well. We can't assume that the distribution conditioned on the solution behaves well, and it could be similar to unsupervised model-based verification.

Assembling a team at DeepMind in London. Scaling up RL for post-training is working, but right now it's still mostly hacks and dark arts (pretraining circa 2019). Pre-training wasn't always scaling laws and log-log plots; someone had to find the simplicity. We aim to do the same. If you're interested in doing things right in a research-first environment that scales all the way, please apply: job-boards.greenhouse.io/deepmind/jobs/…

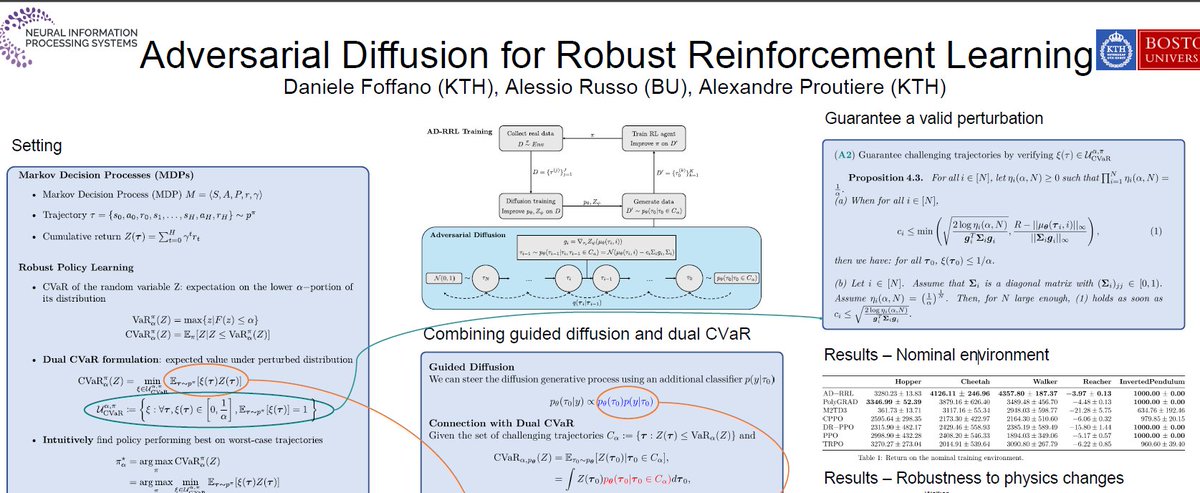

Excited to be in San Diego next week for #NeurIPS2025 🎉! Will present Adversarial Diffusion for Robust RL together with @DanieleFoffano. Poster session on Fri 5 Dec 7:30 p.m. EST, Exhibit Hall C,D,E. AD-RRL uses diffusion models to train Robust RL policies. #RL #Diffusion

Just a heads-up: this year @NeurIPSConf is not using the Whova app. You can find the new mobile app on the NeurIPS website: neurips.cc/mobile/support/. Literally the worst communication by the organizers on this one. What was wrong with Whova? #NeurIPS2025 #whova

@iclr_conf reverted all reviews to pre-discussion state after the OpenReview bug. Result: one paper I’m reviewing has the authors' rebuttal responding point-by-point to concerns that were edited out and no longer exist in the system. New ACs: good luck making sense of this.

Initial Analysis of OpenReview API Security Incident

ICLR has placed OpenReview in a difficult position, so I want to offer a few words about the OpenReview team working behind the scenes. OpenReview has long been operated at UMass Amherst as a non-profit organization founded by Andrew McCallum. Each year, Andrew must raise more than $2 million to support a 20-person team that provides essential infrastructure for most major conferences. I once asked Andrew what might have been a naïve question: whether he had considered developing a business model for OpenReview, given its prominence and the seemingly obvious opportunities. He pushed back, explaining that everything he has done for OpenReview is driven by a commitment to serve and strengthen the academic community. He is willing to devote significant personal effort to ensure the platform remains freely accessible to all. We should not blame such a brilliant and dedicated team for an accidental issue. Otherwise, fewer people would be willing to shoulder this kind of responsibility in the future. Deep respect to the OpenReview team! I’m grateful for their work and happy to support in any way!