TonioR

In Claude Code, the new /ultrareview command runs a dedicated review session that reads through your changes and flags what a careful reviewer would catch. We've also extended auto mode to Max users, so longer tasks run with fewer interruptions.

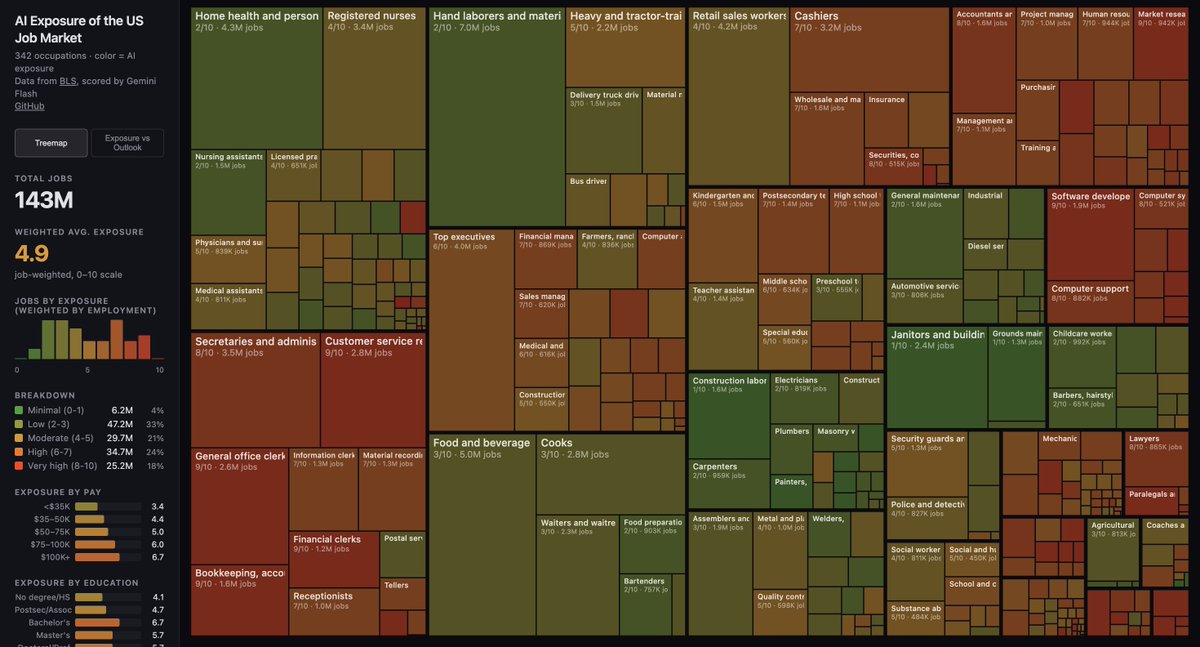

AI employment doomerism is rooted in the socialist lump-of-labor fallacy. It is wrong now for the same reason it has always been wrong. More people really should try to learn about this. The AI will teach you about it if you ask! (Hinton is a socialist. youtube.com/shorts/R-b8RR6…)

Your work tools in Claude are now available on mobile. Explore Figma designs, create Canva slides, check Amplitude dashboards, all from your phone. Give it a try: claude.com/download

Announcing Copilot Cowork, a new way to complete tasks and get work done in M365. When you hand off a task to Cowork, it turns your request into a plan and executes it across your apps and files, grounded in your work data and operating within M365’s security and governance boundaries.