Yali Du

@yalidux

Researcher in reinforcement learning/multi-agent cooperation. @turinginst @KingsCollegeLon Ex at @AI_UCL @UCLCS

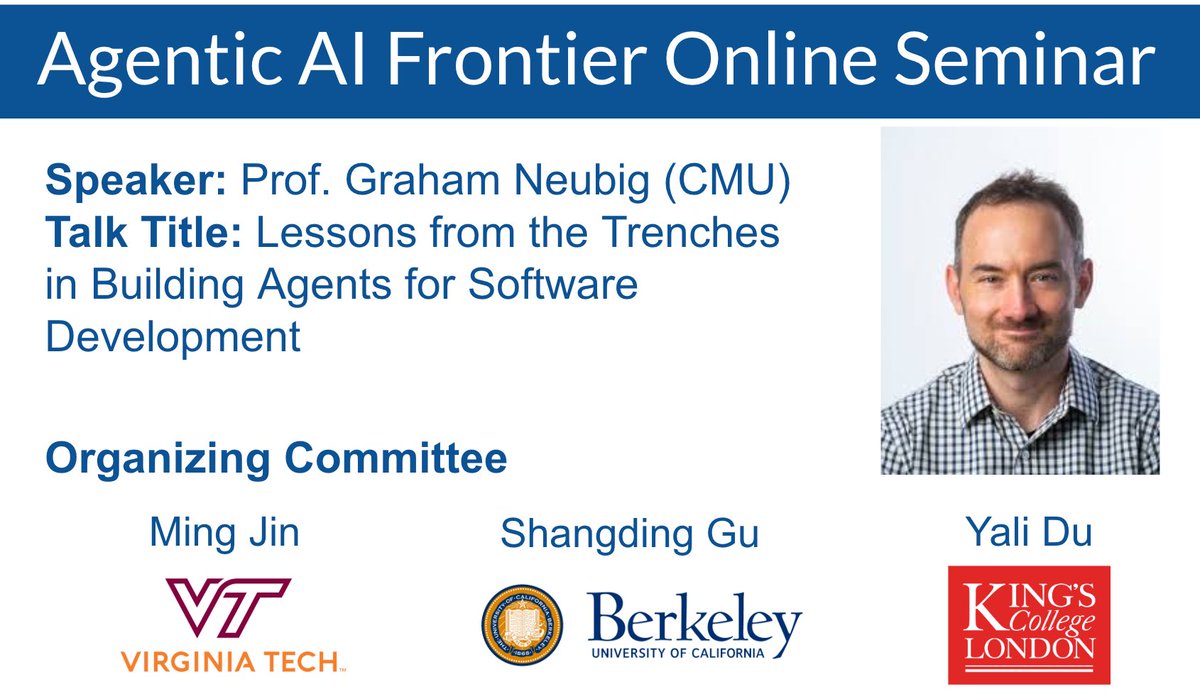

Vision-Language-Action (VLA) models are evolving fast. How do we move robots from following basic instructions to executing complex, multi-stage tasks with sophisticated test-time reasoning? 🤖🧠

We are incredibly honored to host Sergey Levine @svlevine for the next AI Agent Frontier Seminar, where he will present "Robotic Foundation Models." Sergey will discuss the leap from first-generation VLAs to models that handle diverse data modalities and advanced reasoning, outlining the true frontiers of the field.

Date: This Friday 3/27, 12pm ET / 9am PT
🔗 agentic-ai-frontier-seminar.github.io
📍 Join: virginiatech.zoom.us/j/87872134251
🔑 Passcode: 309194

Organizers: @yalidux @ShangdingG95714 @MingJin_AIl
#Robotics #AIAgents #VLA #FoundationModels

Half-baked thought: In discussions about AI, claims are often made about both capabilities and societal effects, and in practice the boundary between the two is pretty blurry. Different personality types and professions see things through different lenses. At the risk of over-caricaturising, two common lenses seem to be the following.

The comp sci prior tends to be: sufficiently capable ASI can in principle solve any problem. Local knowledge is just data to be ingested. If you're smart enough and have enough compute, you can centralize (and solve) everything. So once you pass the human 'intelligence' threshold, what 'utility' could a human ever have?

The economist prior (especially the Hayekian strain) is: knowledge isn't just facts to be collected; it's contextual, tacit, and often generated in the moment through interaction. It doesn't exist prior to the process that uses it. No amount of optimization power lets you skip the process, because the knowledge isn't sitting there waiting to be found; it's constituted by the interaction.

In the former view, human agency becomes epiphenomenal: you're just watching the optimizer do its thing. In the latter, if knowledge is partly constituted through interaction, then agency is a core component. In fact, it's ineliminable from the epistemic process itself.

This may explain why the two camps talk past each other. The comp sci view sees the economist objection as "humans want to feel useful" or "current AI isn't capable enough yet": contingent limitations that will be overcome. The economist view sees the comp sci position as a category error about what knowledge is: not a claim about future capability levels but about the structure of the problem.

The econ view seems inherently less deterministic and suggests some benefits. First, time: if deployment and adaptation are real work that can't be skipped, the transition isn't instantaneous. There's no "foom" where one system suddenly does everything. But more importantly, leverage points: if value creation requires context-specific integration, there are many points where governance, institutions, and choices can shape outcomes. Outcomes aren't determined solely by whoever has the biggest training cluster or by how capable their system is.

The other crux is basically how you think about alignment. Gillian Hadfield explains that "norms and values are not just features of an exogenous environment... instead, they are the equilibrium outputs of dynamic behavioral systems." (youtube.com/watch?v=MPb93a…) With the comp sci prior, alignment is a technical problem of extracting the right objective function. If you hold the economist prior, alignment *is* the integration into the dynamic social processes that constitute normative judgment: products, votes, norms, conventions, choices, etc. Shaping this is a continuous process, not something to be solved ex ante.

This doesn't necessarily mean the comp sci view is wrong: principal-agent problems and instruction-following problems are real. But the solution space is far larger than the model itself and includes the entire institutional stack through which AI systems are deployed, governed, and held accountable.

Join us tomorrow at #runconference for #NeurIPS2015, same time, same place (see the quoted thread for details)! There were three of us this morning, but I forgot to take a picture before we split up, so here's a selfie to prove we actually went out 🤭 Hope to see more of you tomorrow!