Pinned Tweet

Dimitri Lovato

1.4K posts

@DimitriAI

🤖 AI Explorer | Tech Storyteller 🧠 Breaking down complex AI into simple content 📈 Sharing tips, tools & growth hacks for creators

Australia · Joined June 2019

4 Following · 6 Followers

Dimitri Lovato retweeted

🚨 OpenClaw just became the most important software most people have never heard of.

Not because of the hype. Because of what it's actually doing to real businesses right now.

A founder with a $2.5M ARR company spent one week with OpenClaw. Here's what his team built:

→ An end-to-end ad creative pipeline that goes from idea to published Meta ads — living inside Slack, open to the whole team

→ A data analytics agent connected to BigQuery that answers any business question in real time. Nobody writes SQL anymore

→ A recruiting agent that found 30 ranked candidates with portfolios and email addresses from a single job description

One week. Three agents. Material impact on a real business.

And he said he has twenty more stories.

Here's why this matters more than another AI tool launch.

OpenClaw isn't polished. It's raw. It takes work to figure out. The people calling it hype are the people who tried it once and bounced.

That's exactly what the early internet felt like.

The people who figured out the early internet didn't wait for it to get easier. They got in while it was hard and built things nobody else could see yet.

OpenClaw is that moment for agentic AI.

Here's the wildest part:

The shipping velocity is unlike anything in the space right now. Native PDF tools. Telegram live streaming. External secrets. Better routing. ACP subagents on by default. 100+ stability and security fixes. All in a single update cycle.

This is what a team that builds like it's running out of time looks like.

OpenClaw may not win the agentic race. There will be competitors. The big labs are already converging on this. But it doesn't matter who wins.

OpenClaw already opened Pandora's box.

Every personal self-improving agent you'll use in five years traces back to what this tool revealed. And right now, while most people are still deciding whether it's real — a small group of founders is quietly automating their entire operations with it.

This is the shittiest it will ever be.

That's not a warning. That's an invitation.

Dimitri Lovato retweeted

A 5-day plan to set up Claude:

(made for teams, not individuals)

✦ Monday (45-60 min): Set up Projects

Build a separate Project for every task your team does repeatedly. Let Claude interview you. Upload ONE gold-standard example per Project.

Full guide below (the article).

✦ Tuesday (15 min): Create Prompt Templates

For each Project, generate a one-sentence prompt template with a single [INPUT] field. Your teammates copy-paste it and fill in their raw notes.
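A minimal sketch of such a template, assuming it is a plain string with a literal [INPUT] placeholder. The template wording and the `fill_template` helper are hypothetical illustrations, not a Claude feature; in practice teammates just copy-paste the template and fill in the field by hand.

```python
# Hypothetical one-field prompt template helper (not a Claude feature).
PLACEHOLDER = "[INPUT]"


def fill_template(template: str, raw_notes: str) -> str:
    """Drop a teammate's raw notes into a template with exactly one [INPUT] field."""
    count = template.count(PLACEHOLDER)
    if count != 1:
        # The plan calls for a single field, so reject anything else.
        raise ValueError(f"expected exactly one {PLACEHOLDER} field, found {count}")
    return template.replace(PLACEHOLDER, raw_notes.strip())


if __name__ == "__main__":
    # Example template for a hypothetical "client update" Project.
    template = "Turn these raw notes into this week's client update: [INPUT]"
    print(fill_template(template, "  shipped login fix; demo moved to Friday  "))
```

Rejecting templates with zero or several fields keeps the "one sentence, one field" rule enforceable when a Project owner edits the template later.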

✦ Wednesday (20-25 min): Test for a "wow"

Pick one real task you did manually this week. Run it through your Project. Screenshot the before/after. This becomes your proof for Thursday.

✦ Thursday (35 min): Convert 1 person

Don't train 10 people. Pick the one who's drowning. Sit with them for 15 min. Use THEIR work. Then, make them a co-owner of the Project.

✦ Friday (60 min): Roll out to the team

Send ONE message to your team channel. Lead with ONE specific result. List 3 things they can do RIGHT NOW. Then DM 2-3 people separately.

@rubenhassid

Ruben Hassid @rubenhassid

Dimitri Lovato retweeted

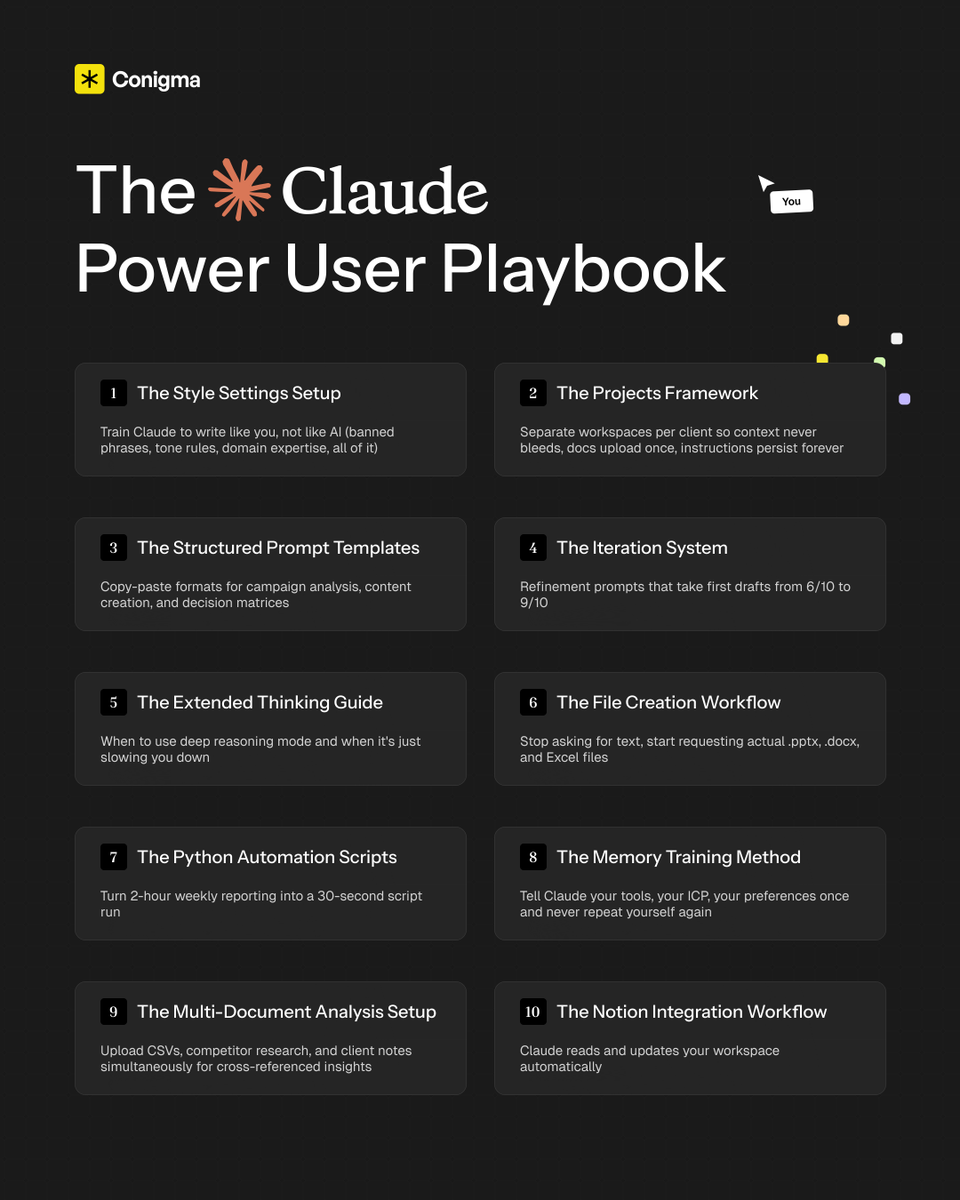

If you don't have my "Claude Power User Playbook" yet...

The one I built to get 10x more output from Claude every session with a complete system across settings, prompting frameworks, file creation, memory management, and advanced workflows...

Just comment "CLAUDE" and I'll DM it to you for free (must follow)

Dimitri Lovato retweeted

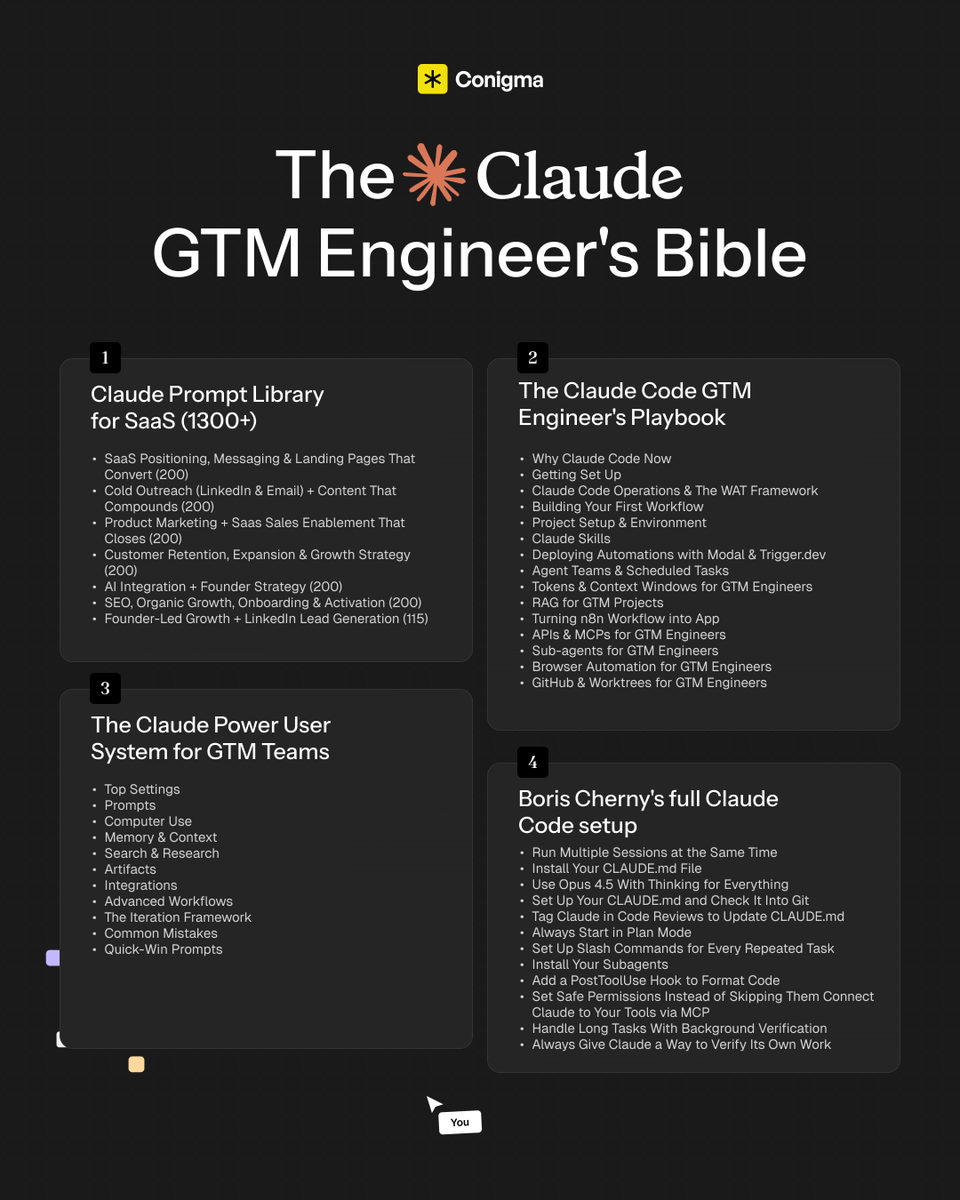

SHOCKING: 99% of GTM engineers using Claude are barely scratching the surface.

Right now, the entire internet is screaming "Claude, Claude, Claude"... But here's the truth: just prompting it won't build GTM infrastructure.

To unlock its real power, you need to master:

- Claude Code deployment with the WAT framework and CLAUDE.md self-improvement loop

- MCP connections, sub-agents, and automations running 24/7 without you

- Pre-built prompt systems covering every GTM function you actually run

I spent 100+ hours building and documenting the most complete Claude GTM Engineering Bible and compiled every prompt, workflow, build sequence, and deployment guide into one resource.

I'll give it to only 500 people.

To get it:

1. Follow me (required, so I can DM you)

2. Comment "CLAUDE"

3. I'll DM you the bible

If you don't follow or comment, you won't receive it.

Dimitri Lovato retweeted

🚨BREAKING: Researchers just proved that ChatGPT is failing you constantly. And you have no idea it's happening.

Not the failures where it says something wrong and you catch it. The other kind. The silent ones. The ones where you walk away satisfied with an answer that was incomplete, misleading, or flat-out wrong.

78% of AI failures are invisible to the user.

You never notice. You never push back. You move on. The wrong information lives in your head as fact.

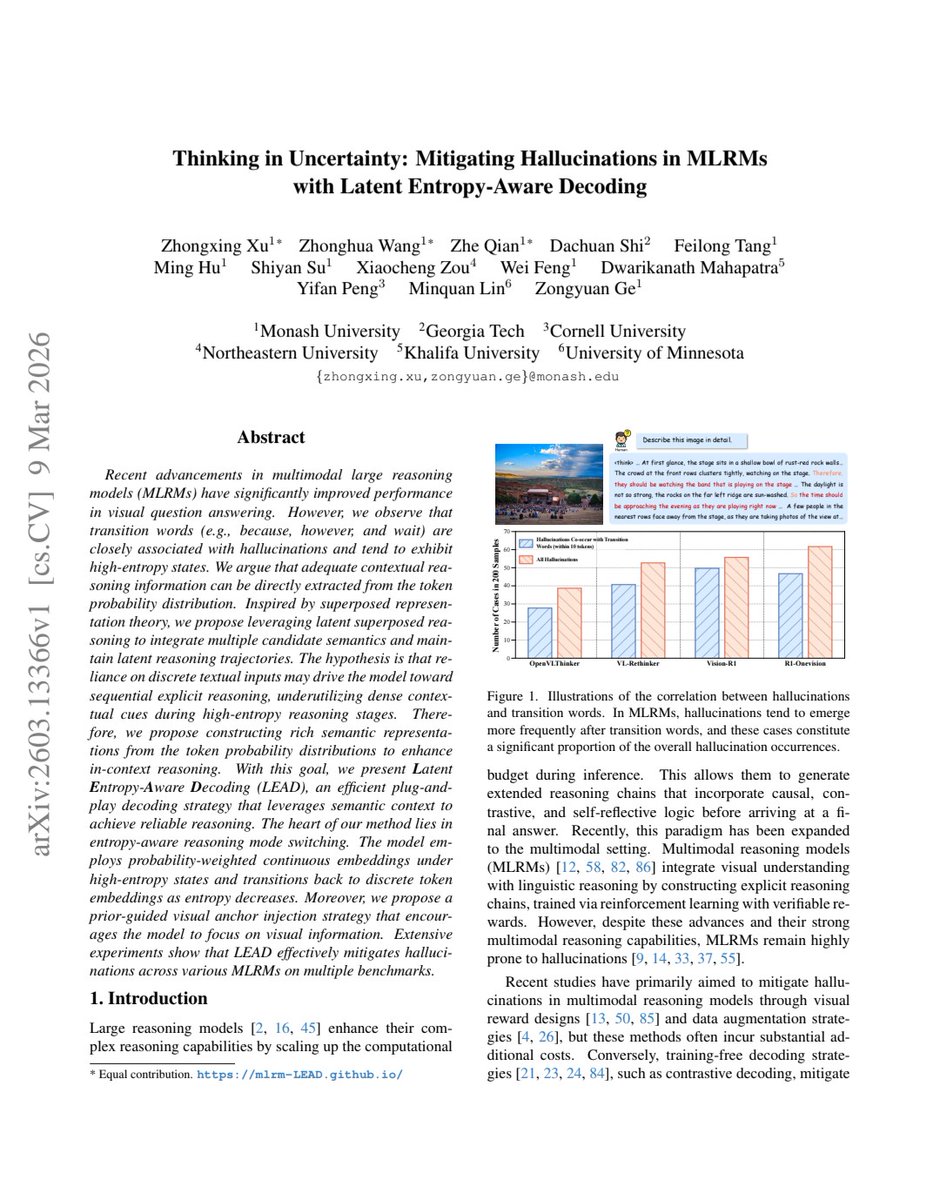

Researchers analyzed hundreds of thousands of real conversations from WildChat — one of the largest datasets of actual human-AI interactions ever collected. Not lab simulations. Not controlled experiments. Real people having real conversations with ChatGPT about real things that mattered to them.

They categorized every failure. Then they measured how often users detected them.

Most of the time, they didn't.
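The thread does not describe the researchers' exact procedure. As a hypothetical sketch of the kind of measurement it reports, assuming each failure is labeled with a category and whether the user noticed it, the invisible-failure rate is simply the share of failures that were never detected. The `Failure` type and the sample labels below are made up for illustration and are not data from the study.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Failure:
    category: str   # e.g. "answered a different question"
    detected: bool  # did the user notice (push back, correct, re-ask)?


def invisible_rate(failures: list[Failure]) -> float:
    """Share of failures the user never detected."""
    if not failures:
        return 0.0
    return sum(not f.detected for f in failures) / len(failures)


def invisible_rate_by_category(failures: list[Failure]) -> dict[str, float]:
    """Same share, broken down per failure category."""
    totals = Counter(f.category for f in failures)
    missed = Counter(f.category for f in failures if not f.detected)
    return {cat: missed[cat] / totals[cat] for cat in totals}


if __name__ == "__main__":
    # Made-up labels for illustration only, not data from the study.
    sample = [
        Failure("answered a different question", detected=False),
        Failure("confident incompleteness", detected=False),
        Failure("plausible fabrication", detected=False),
        Failure("plausible fabrication", detected=True),
    ]
    print(invisible_rate(sample))  # 0.75
    print(invisible_rate_by_category(sample))
```

A headline figure like "78% invisible" is this ratio computed over a labeled sample; the per-category breakdown is what lets you say which kind of failure goes unnoticed most often.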

Here's how the invisible failures work.

The most dangerous failure isn't the one that sounds wrong. It's the one that sounds exactly right. Confident tone. Fluent sentences. Logical structure. Everything that makes you trust an answer — present. Everything that makes the answer correct — missing.

The AI doesn't stumble when it's wrong. It doesn't hedge. It doesn't slow down. It delivers incorrect information with the same velocity and certainty it delivers correct information. There is no signal that something went wrong.

So you don't check. Why would you? It sounded right.

Here's what the researchers found inside those failures.

The most common invisible failure: the AI answers a question you didn't ask. You asked something specific. It detected a related topic and answered that instead. The response is coherent, relevant-seeming, and completely misses your actual need. You read it. You think you got an answer. You didn't.

The second most common: confident incompleteness. The AI gives you part of the answer and stops. Not because it ran out of knowledge. Because it pattern-matched to a response length that felt complete. You got 60% of what you needed and left thinking you got 100%.

The third: plausible fabrication. Not hallucination in the obvious sense — a made-up name, a fake citation. Subtle fabrication. A statistic that's close but wrong. A date that's off by a year. A nuance reversed. Nothing that triggers your skepticism. Everything that embeds quietly as knowledge.

Here's the part that makes it structural, not accidental.

These failures aren't random. They cluster around a specific condition: when the user already has partial knowledge about the topic.

If you know nothing, you ask follow-up questions. If you know a lot, you catch the errors. If you know just enough to feel informed but not enough to verify — which is the exact situation most people are in for most topics — you are maximally vulnerable to invisible failures.

The AI isn't failing the experts. It isn't failing the complete beginners. It's failing the people in the middle. Which is almost everyone. Almost all the time.

It gets worse.

The researchers found that conversational warmth actively suppresses failure detection. When the AI is friendly, apologetic, and engaging, users rate the interaction as successful even when the output was objectively wrong. The experience of being helped overrides the reality of not being helped.

You feel heard. You feel assisted. The answer was wrong. You leave happy.

Now think about every decision you've made recently where ChatGPT was part of your research. Every answer you accepted because it was fluent and confident. Every time you didn't check because the response felt complete.

You weren't evaluating the quality of the answer. You were evaluating the quality of the experience.

Those are not the same thing. And 78% of the time, the gap between them went unnoticed.

Dimitri Lovato retweeted

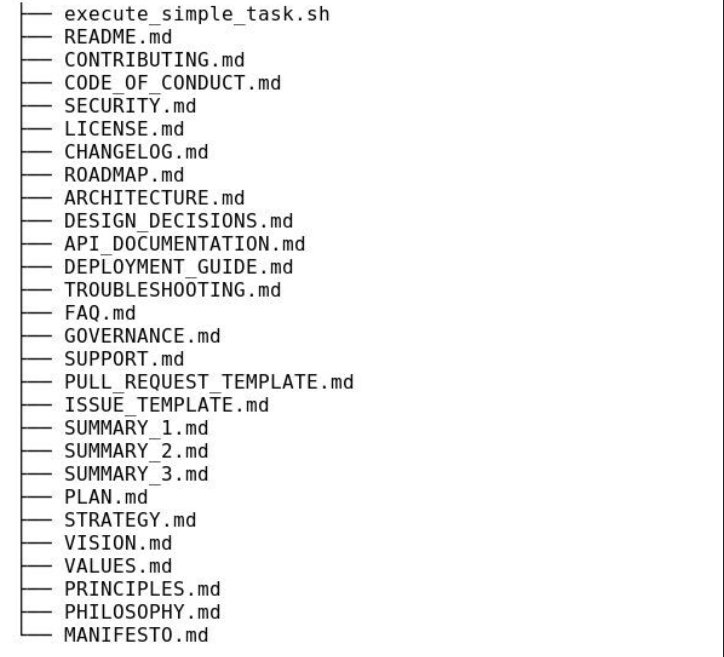

🚨 Someone just open-sourced the exact workflow that stops your coding agent from going off the rails.

It's called Superpowers.

A complete software development framework that runs inside Claude Code, Codex, or OpenCode. It doesn't add tools. It changes how your agent thinks before it touches a single line of code.

Not a prompt. Not a wrapper. A full methodology that turns a chaotic coding agent into a disciplined engineer.

Here's the problem it solves.

You fire up Claude Code. You describe what you want. It immediately starts writing. No clarifying questions. No design review. No implementation plan. Just code — fast, confident, and quietly building on assumptions you never agreed to.

Four hours later you have 800 lines that technically run but don't do what you actually needed.

Superpowers stops that from happening at step one.

Here's how it works:

→ The moment you describe something to build, the agent stops. It asks what you're actually trying to do

→ It drafts a spec and shows it to you in short readable chunks — not a wall of text

→ You approve the design before any code gets written

→ It generates an implementation plan clear enough for an engineer with no context, no judgment, and no testing instincts to follow correctly

→ It enforces true red/green TDD, YAGNI, and DRY throughout execution

→ It then launches subagent-driven development — agents work through each task, inspect their own work, and continue forward autonomously
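To make the gating concrete, here is a minimal sketch in Python. It is not how Superpowers is implemented (the tweet describes skills that run inside Claude Code, Codex, or OpenCode), and the `Phase`, `Session`, and `red_green` names are hypothetical. It models two rules from the list above: no code gets written until the design is approved and a plan exists, and a test must fail before a change and pass after it.

```python
from enum import Enum, auto
from typing import Callable


class Phase(Enum):
    CLARIFY = auto()  # ask what the user is actually trying to do
    SPEC = auto()     # draft the design, shown in short chunks
    PLAN = auto()     # write a step-by-step implementation plan
    EXECUTE = auto()  # work through the plan task by task


# Each phase has exactly one successor, so EXECUTE is only reachable via PLAN.
NEXT = {Phase.CLARIFY: Phase.SPEC, Phase.SPEC: Phase.PLAN, Phase.PLAN: Phase.EXECUTE}


class Session:
    def __init__(self) -> None:
        self.phase = Phase.CLARIFY
        self.spec_approved = False

    def approve_spec(self) -> None:
        if self.phase is not Phase.SPEC:
            raise RuntimeError("there is no spec to approve yet")
        self.spec_approved = True

    def advance(self) -> None:
        if self.phase is Phase.SPEC and not self.spec_approved:
            raise RuntimeError("the design must be approved before planning")
        if self.phase not in NEXT:
            raise RuntimeError("already executing")
        self.phase = NEXT[self.phase]

    def write_code(self, task: str) -> str:
        # Refuse to touch code until the approved spec has been turned into a plan.
        if self.phase is not Phase.EXECUTE:
            raise RuntimeError(f"cannot write code during {self.phase.name}")
        return f"working on: {task}"


def red_green(test: Callable[[Callable], bool], before: Callable, after: Callable) -> None:
    """Red/green check: the test must fail on the old code and pass on the new code."""
    if test(before):
        raise RuntimeError("red step skipped: the test already passes")
    if not test(after):
        raise RuntimeError("green step failed: the test still fails")


if __name__ == "__main__":
    s = Session()
    s.advance()       # CLARIFY -> SPEC
    s.approve_spec()
    s.advance()       # SPEC -> PLAN
    s.advance()       # PLAN -> EXECUTE
    print(s.write_code("add login form"))

    def doubles_two(impl):
        return impl(2) == 4

    red_green(doubles_two, before=lambda x: 0, after=lambda x: x * 2)
    print("red/green ok")
```

The point of the design is that the gate lives in state rather than in the wording of a prompt: `write_code` simply fails until the approval and planning steps have happened, and `red_green` rejects a test that never went red.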

Here's the wildest part:

Once you approve the plan and say go, Claude can work autonomously for two hours straight without deviating. Not because it got smarter. Because it's following a structure tight enough to keep it on track.

The skills trigger automatically. You don't configure anything. You don't change how you prompt. You just have a coding agent that now thinks before it acts.

Two commands to install in Claude Code:

/plugin marketplace add obra/superpowers-marketplace

Then:

/plugin install superpowers@superpowers-marketplace

That's it. Your coding agent just got Superpowers.

40.9K GitHub stars. 3.1K forks. 254 commits. MIT License.

100% Open Source.

Dimitri Lovato retweeted