Eric Hartford

@QuixiAI

We make AI models Dolphin and Samantha BTC 3ENBV6zdwyqieAXzZP2i3EjeZtVwEmAuo4 https://t.co/3ri2GbXrQB https://t.co/zH0F3pTjjY @dphnAI

INCREDIBLE STUFF INCOMING: Nemotron 3 Ultra Base (~500B) benchmarks against Kimi K2 and GLM are looking goood.

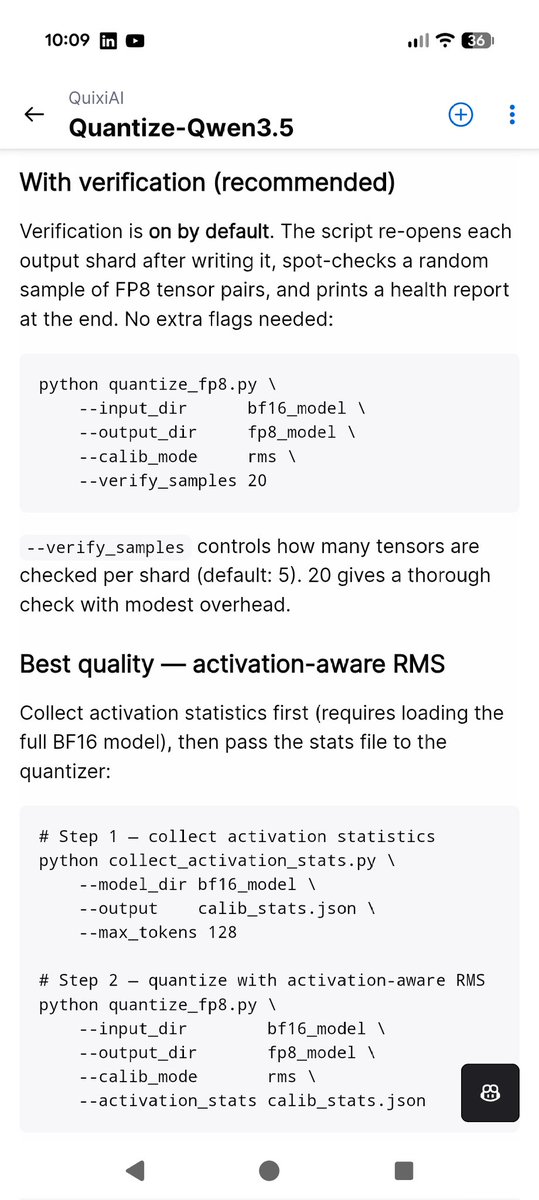

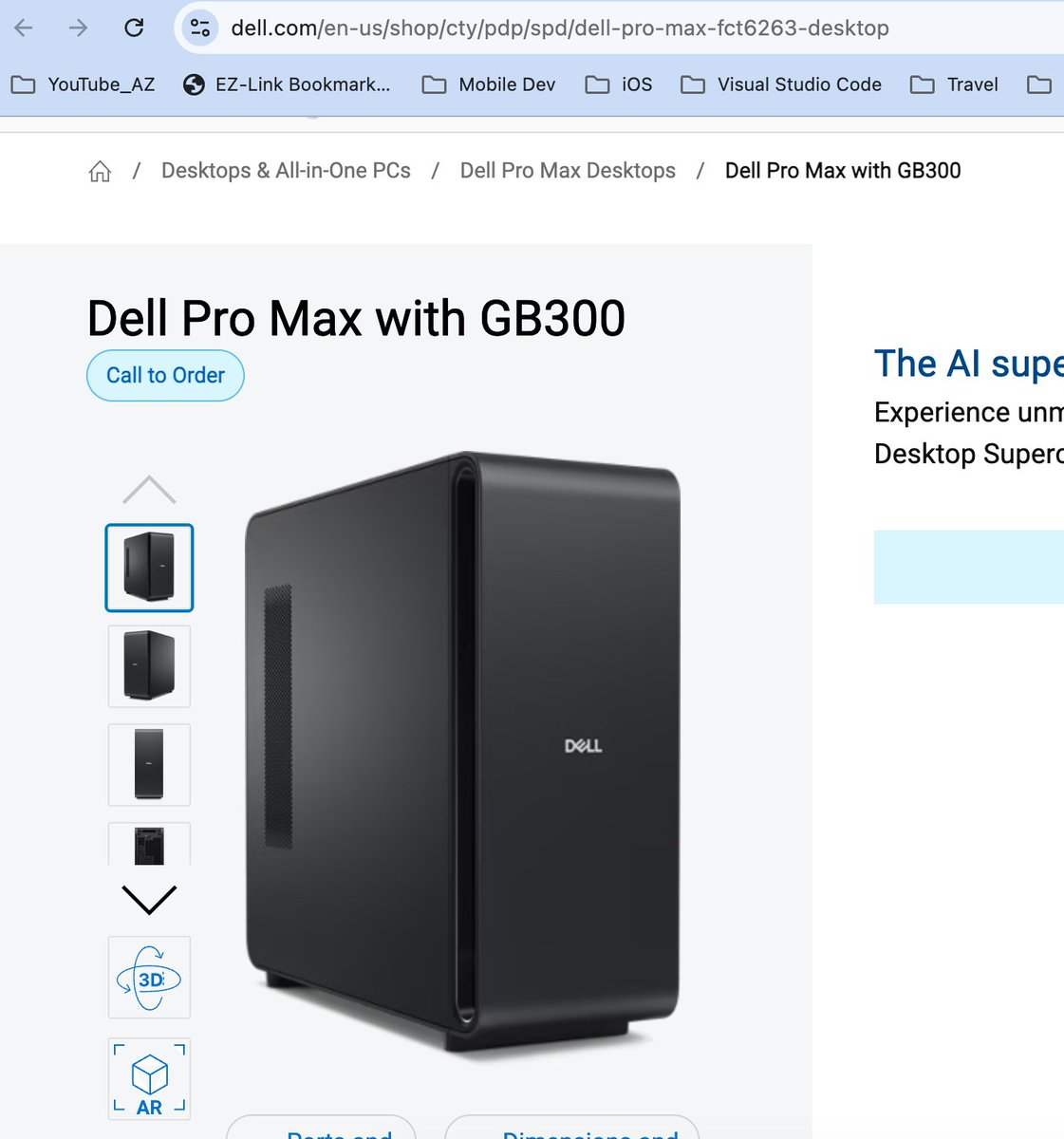

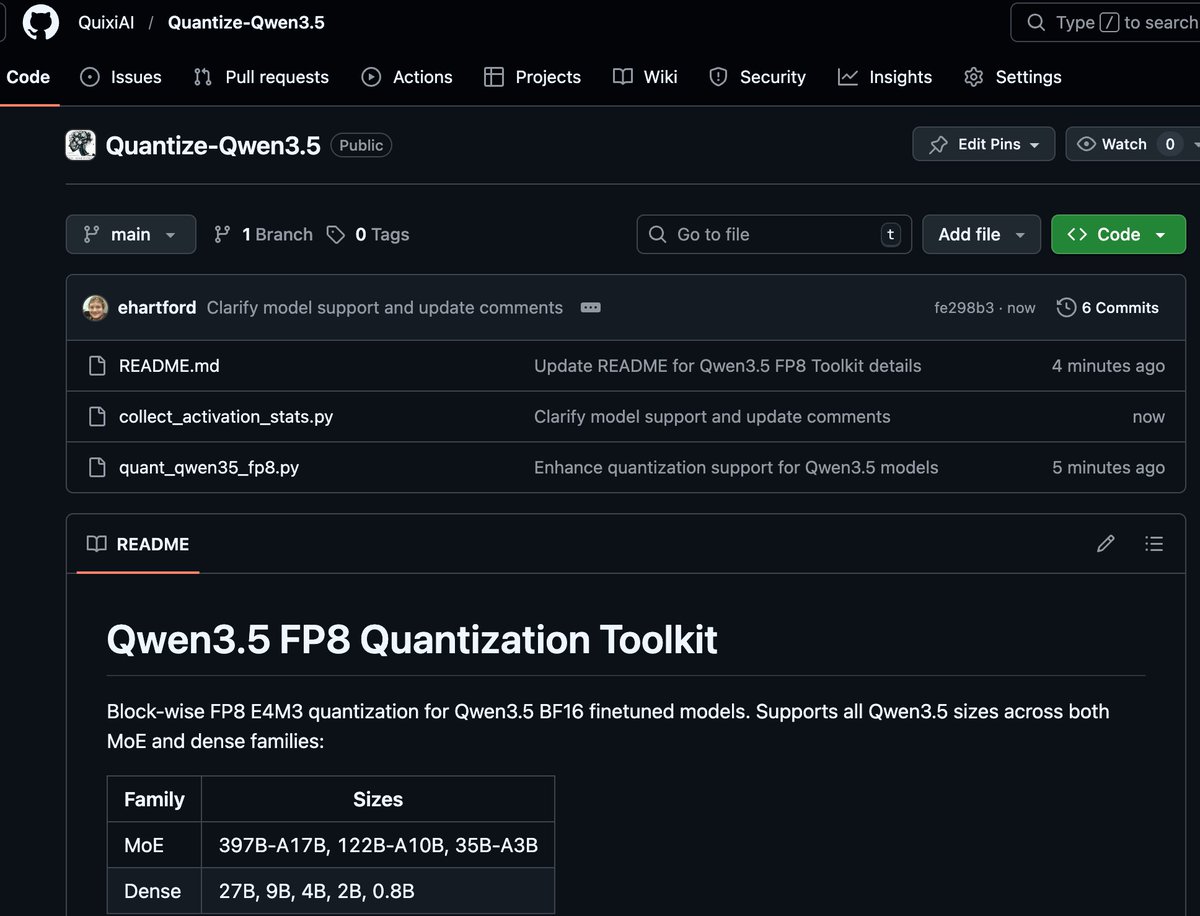

.@nvidia hand-delivered a pre-production unit of the @Dell Pro Max with GB300 to my house. It's a 100 lb beast with 750GB+ of unified memory to power the best open-source models in the world. What should I test first?

⚡️New on ZenMux: LLaDA2.1-flash, a 100B diffusion LLM from @TheInclusionAI. → Error-correcting editable generation → Speed Mode: ultra-fast inference → Quality Mode: competitive performance → RL tailored for 100B-scale dLLMs 🔗 zenmux.ai/inclusionai/ll… 🔗 huggingface.co/inclusionAI/LL…