InclusionAI

@TheInclusionAI

AI Lab @AntGroup. We envision AGI as humanity's shared milestone. Our language models @AntLingAGI and LLaDA, embodied AI @robbyant_brain, and OSS projects such as AReaL.

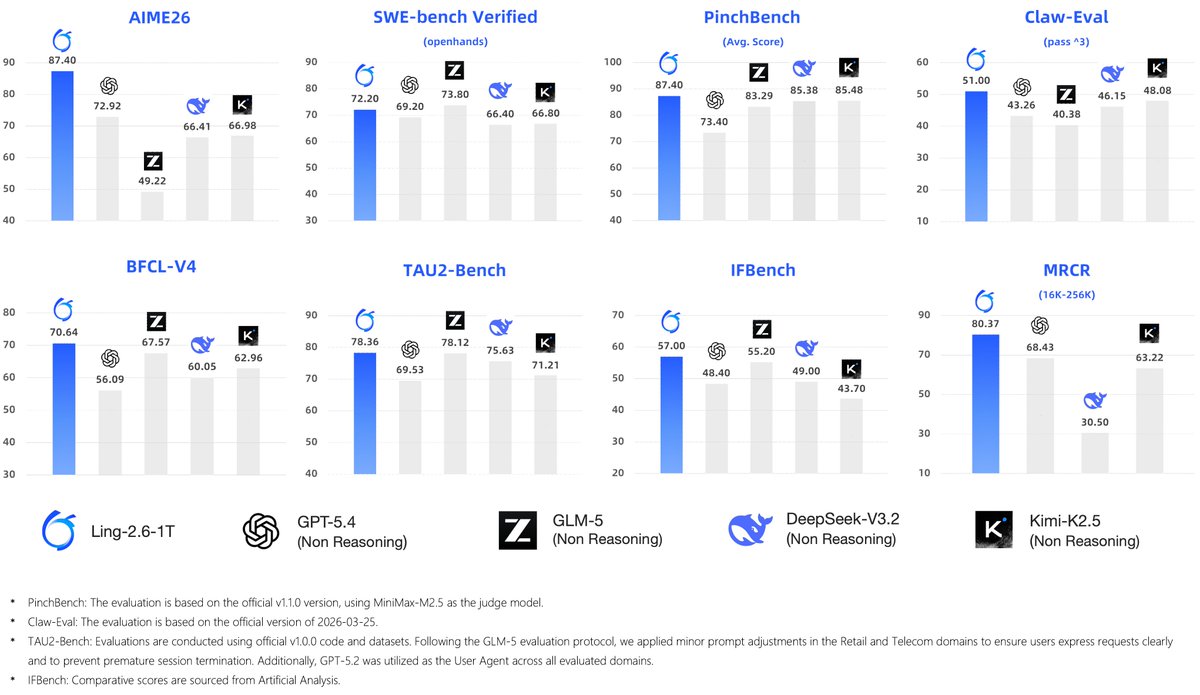

Last week, we introduced Ling-2.6-1T. Today, Ling-2.6-1T is officially an open model~ 🤗

1T total parameters · 63B active parameters

We bring value to developers by making it easier to test, deploy, customize, and build. It is optimized for token efficiency to meet real production needs:

• Lower token overhead: strong intelligence without long reasoning traces
• Reliable multi-step execution: better control over instructions, tools, context, and workflows
• Production-ready deployment: from code generation to bug fixing, with broad agent framework compatibility

A sneak peek at its agentic capability in @opencode

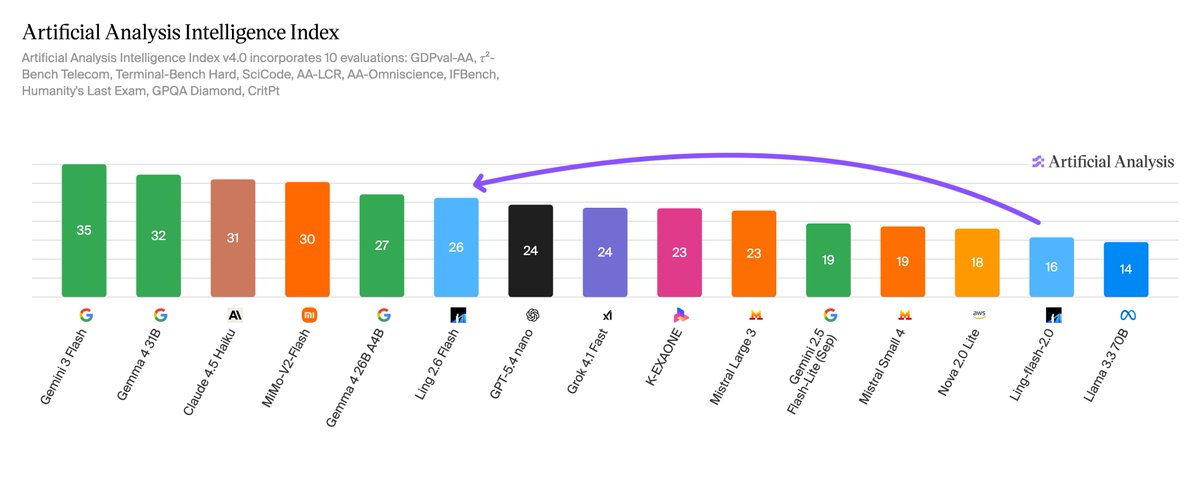

Ling-2.6-flash is now officially open-sourced! A fast, token-efficient Instruct model built for real-world agent workflows.

104B total parameters · 7.4B active parameters

Available in BF16, FP8, and INT4 variants for different deployment needs.

Key strengths:

- Fast generation: 215 tokens/s on Artificial Analysis Output Speed
- High token efficiency: only 15M tokens on the full AA Intelligence Index evaluation
- Real task execution: strong performance across coding, document processing, and lightweight agent workflows
- Improved experience: better Chinese-English switching and smoother compatibility with mainstream coding frameworks

🚀 Today, we are launching Ling-2.6-1T, a trillion-parameter flagship model designed for precise instruct task execution. By prioritizing a "Fast-Thinking" mechanism, it delivers SOTA intelligence with ultra-low token overhead, making token efficiency a first-class citizen.

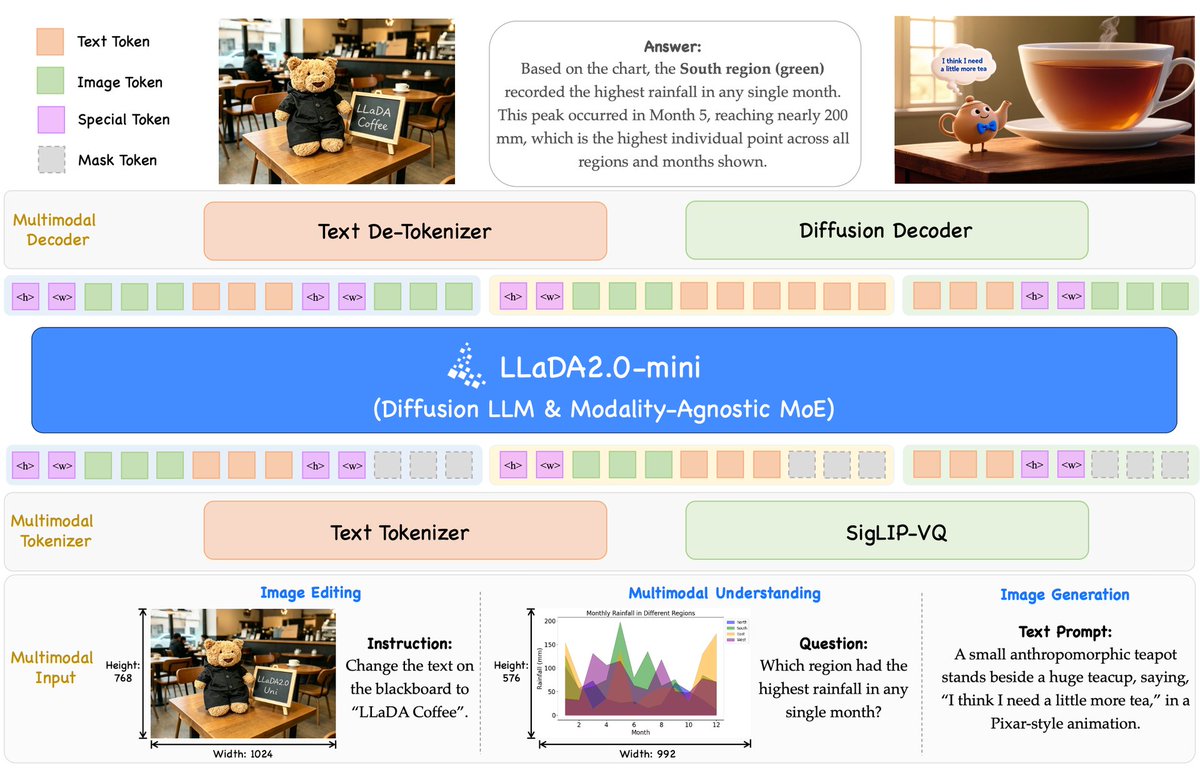

After two months of teamwork, we're excited to share our team's latest achievement: LLaDA2.0-Uni, InclusionAI's first multimodal LLaDA. A unified discrete diffusion LLM built for both understanding and generation across text and images.

Highlights:

● One paradigm for VQA, doc understanding, and image generation
● Efficient inference with a new decoding strategy + 8-step distilled decoder
● Interleaved text-image generation enabled by unified discrete representations

(SGLang support soon)

🤗 Hugging Face: huggingface.co/inclusionAI/LL…
📷 ModelScope: modelscope.cn/models/inclusi…

Introducing Ling-2.6-flash, an instruct model with 104B total parameters and 7.4B active parameters. Ling-2.6-flash is designed for high token efficiency, not inflated outputs. It stays competitive on real agent tasks while helping developers reduce cost, improve throughput, and deploy more efficiently at scale.

🎉 After one year of teamwork, we are excited to release our 3D foundation model: LingBot-Map!

Unlike DA3/VGGT, LingBot-Map is a purely autoregressive model for streaming 3D reconstruction ⚡ It achieves ~20 FPS at 518×378 resolution over sequences exceeding 10,000 frames 🚀

Two key insights behind LingBot-Map:

🔑 Keep SLAM's structural wisdom: build Geometric Context Attention with long-context modeling while maintaining a compact streaming state
🔑 Make everything end-to-end learnable: no optimization, no post-processing

Check out our demos 👇

Since last year, we've been building something for developers: data-driven insight into what matters in the AI open-source development space. 🚀 Today we introduce the Q1 2026 Agentic AI Landscape with @TheInclusionAI, your ecosystem navigation map.

What we're releasing today:

✅ 50+ projects mapped, from OpenClaw & Claude-Mem to Aden Hive & Paperclip, covering Coding Agents, Personal Assistants, Orchestration Frameworks
✅ Community insights from 21K+ active developers: extreme power law distribution; indie builders & startups dominate; <10% from big tech

More details 👉 inclusion-ai.org/blog/agentic-l…

#AgenticAI #OpenSource #inclusionAI

🚀 Exciting news for the spatial perception community! 📷 For too long, the lack of large-scale, real-world depth datasets has been a major bottleneck. Today, we are open-sourcing the RGB-D dataset built for training our spatial perception model LingBot-Depth — and it's massive. 👇

⚡️ New on ZenMux: LLaDA2.1-flash, a 100B diffusion LLM from @TheInclusionAI.

→ Error-correcting editable generation
→ Speed Mode: ultra-fast inference
→ Quality Mode: competitive performance
→ RL tailored for 100B-scale dLLM

🔗 zenmux.ai/inclusionai/ll…
🔗 huggingface.co/inclusionAI/LL…