Royce

@RobRoyce_

Principal SWE at https://t.co/JHm2jsE6n8 | Formerly AI+Robotics @NASAJPL | CS&E @UCLA

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

We've reached an agreement to acquire Astral. After we close, OpenAI plans for @astral_sh to join our Codex team, with a continued focus on building great tools and advancing the shared mission of making developers more productive. openai.com/index/openai-t…

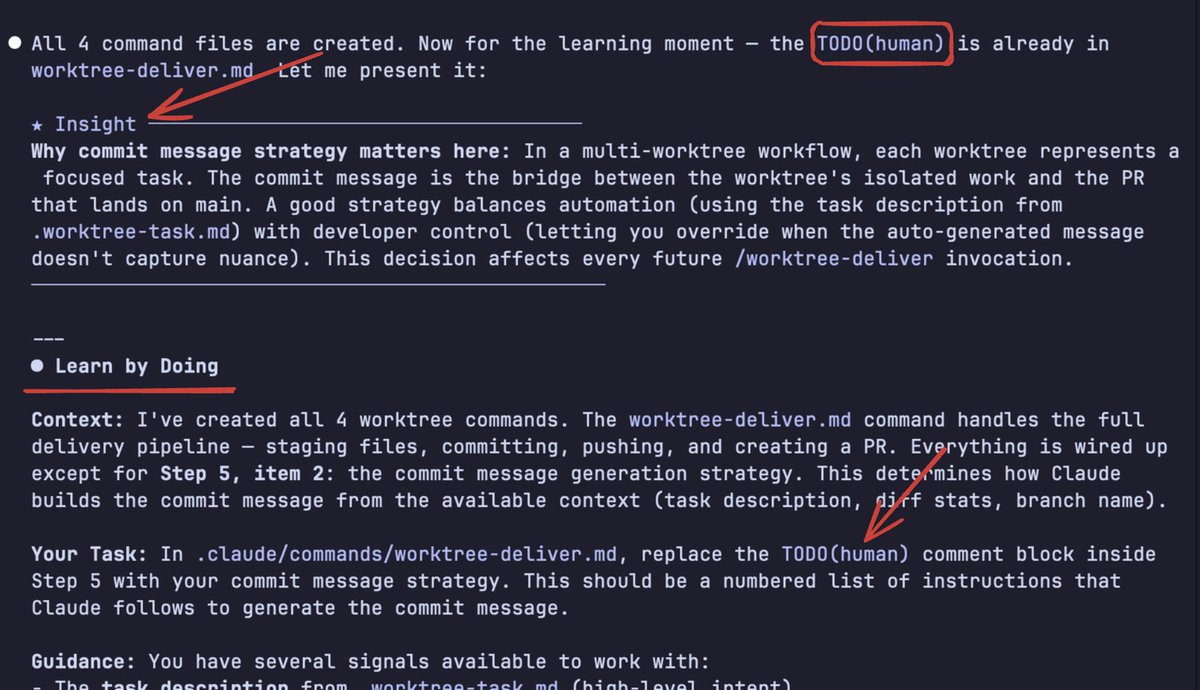

2. Start every complex task in plan mode. Pour your energy into the plan so Claude can 1-shot the implementation. One person has one Claude write the plan, then spins up a second Claude to review it as a staff engineer. Another says the moment something goes sideways, they switch back to plan mode and re-plan. Don't keep pushing. They also explicitly tell Claude to enter plan mode for verification steps, not just for the build.

Did you know you can change how Claude Code interacts with you? Output Styles modify the system prompt to adapt the agent's behavior:

- Default: Efficient software engineering
- Explanatory: Adds educational insights while coding
- Learning: Claude guides you to write key parts yourself

Switch with /output-style [style]

If you're learning a new codebase, try Learning mode, you'll actually understand what Claude is building instead of just accepting diffs.