Joe Davison

2.1K posts

@joeddav

AI Research & Eng. @huggingface alumn.

Introducing OpenReward.
🌍 330+ RL environments through one API
⚡ Autoscaled sandbox compute
🍒 4.5M+ unique RL tasks
🚂 Works like magic with Tinker, Miles, and Slime

Link and thread below.

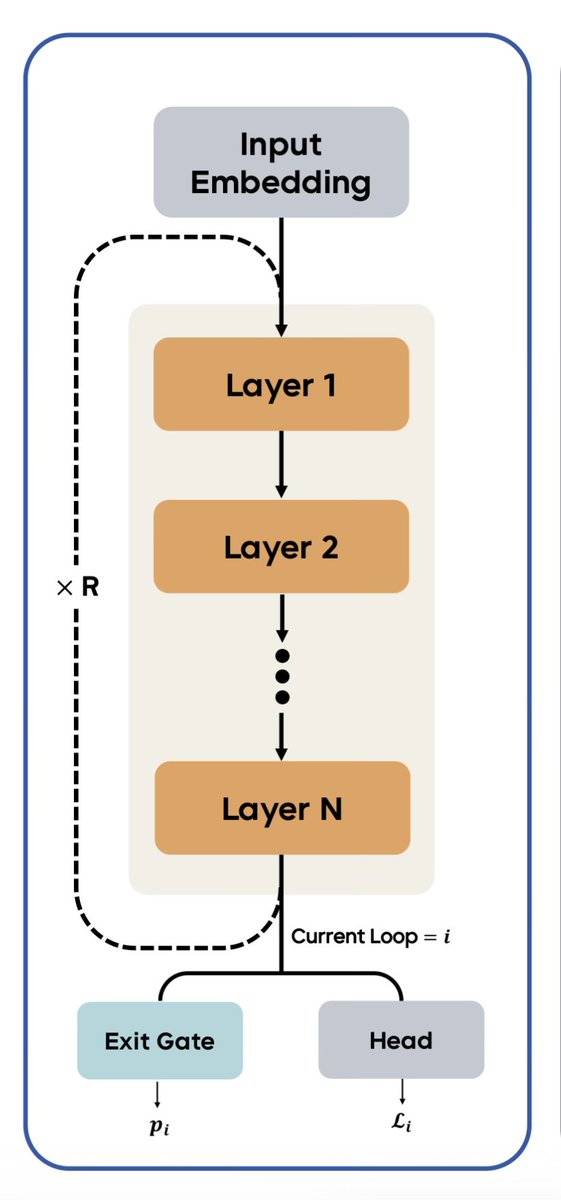

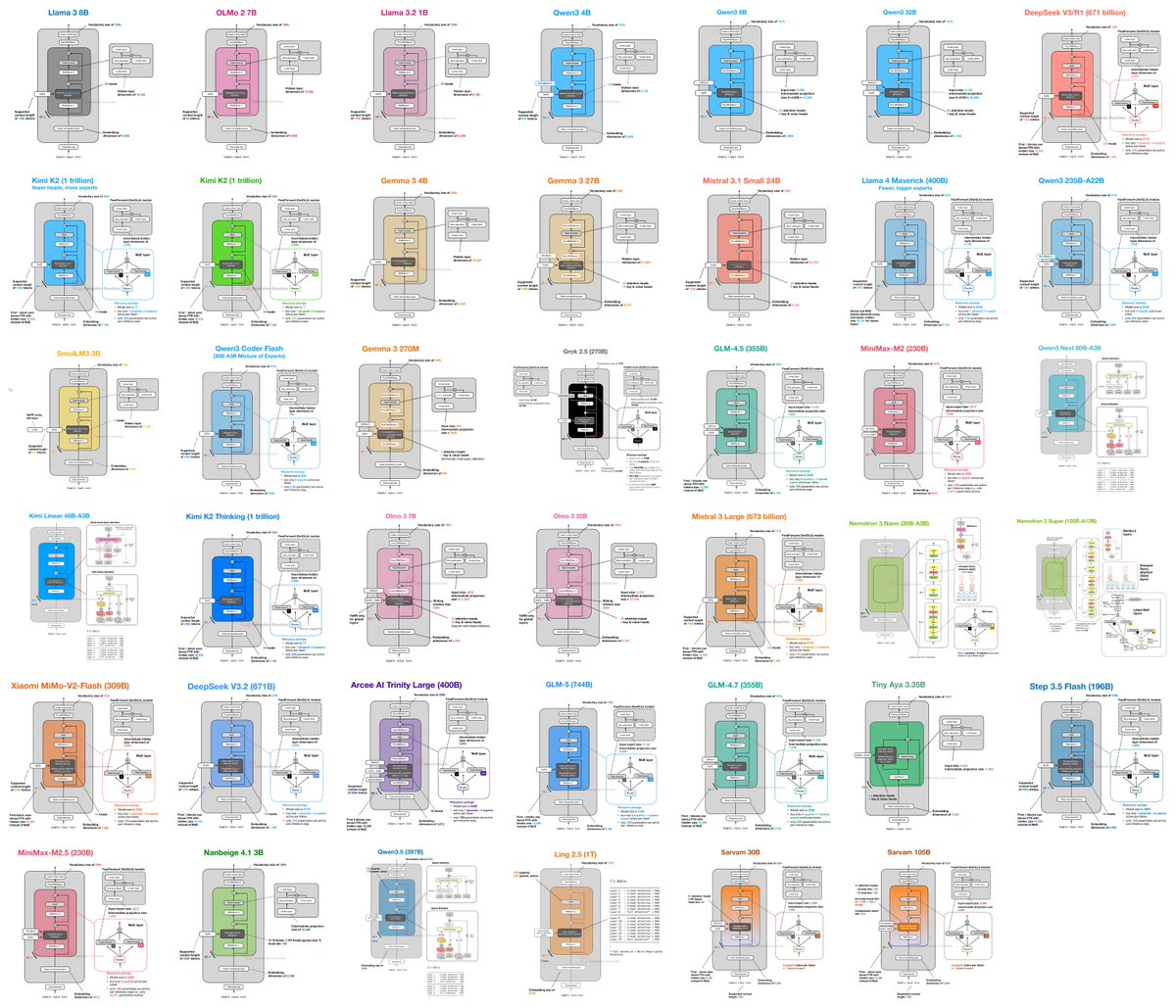

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation.

Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.
🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.
🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.
🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.
🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.

🔗 Full report: github.com/MoonshotAI/Att…
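The contrast between a standard residual stream and attention over preceding layers can be sketched in plain Python. This is a toy illustration under stated assumptions, not Moonshot AI's implementation: the single query vector, the scaled dot-product scoring, and the mean-pooling used as the "compression" in the block variant are all hypothetical choices, and the function names are made up for illustration. The full report is the authority on how AttnRes actually computes its weights.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    # Numerically stable softmax over the depth axis.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def standard_residual(layer_outputs: List[Vector]) -> Vector:
    # Standard residual stream: h = h_0 + f_1 + ... + f_{l-1}.
    # Every earlier output enters with a fixed weight of 1.
    dim = len(layer_outputs[0])
    return [sum(out[d] for out in layer_outputs) for d in range(dim)]


def attention_residual(layer_outputs: List[Vector], query: Vector) -> Vector:
    # Hypothetical attention over depth: score each earlier output against a
    # query (scaled dot product), softmax the scores, and take a weighted sum.
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * x for q, x in zip(query, out)) for out in layer_outputs]
    weights = softmax(scores)
    dim = len(layer_outputs[0])
    return [sum(w * out[d] for w, out in zip(weights, layer_outputs)) for d in range(dim)]


def block_attention_residual(
    layer_outputs: List[Vector], query: Vector, block_size: int
) -> Vector:
    # Hypothetical block variant: compress each block of consecutive layers
    # into one summary (mean-pooling here), then attend over the summaries,
    # so the number of attended items shrinks by roughly block_size.
    summaries = []
    for start in range(0, len(layer_outputs), block_size):
        block = layer_outputs[start:start + block_size]
        dim = len(block[0])
        summaries.append([sum(v[d] for v in block) / len(block) for d in range(dim)])
    return attention_residual(summaries, query)


if __name__ == "__main__":
    outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy h_0, f_1, f_2
    print(standard_residual(outputs))  # [2.0, 2.0]
    print(attention_residual(outputs, [10.0, 0.0]))  # favors outputs aligned with the query
    print(block_attention_residual(outputs, [0.0, 0.0], block_size=2))  # [0.75, 0.75]
```

Because the softmax weights sum to 1, the attention version returns a convex combination of earlier outputs, so its magnitude is bounded by the largest input instead of growing with every added layer as the uniform sum does. That is one intuitive reading of the "hidden-state growth" point in the announcement.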

Out-of-context reasoning is one of the most fascinating developments in the science of how LLMs work. This primer by @OwainEvans_UK, one of the main discoverers of the phenomenon, is a great introduction.

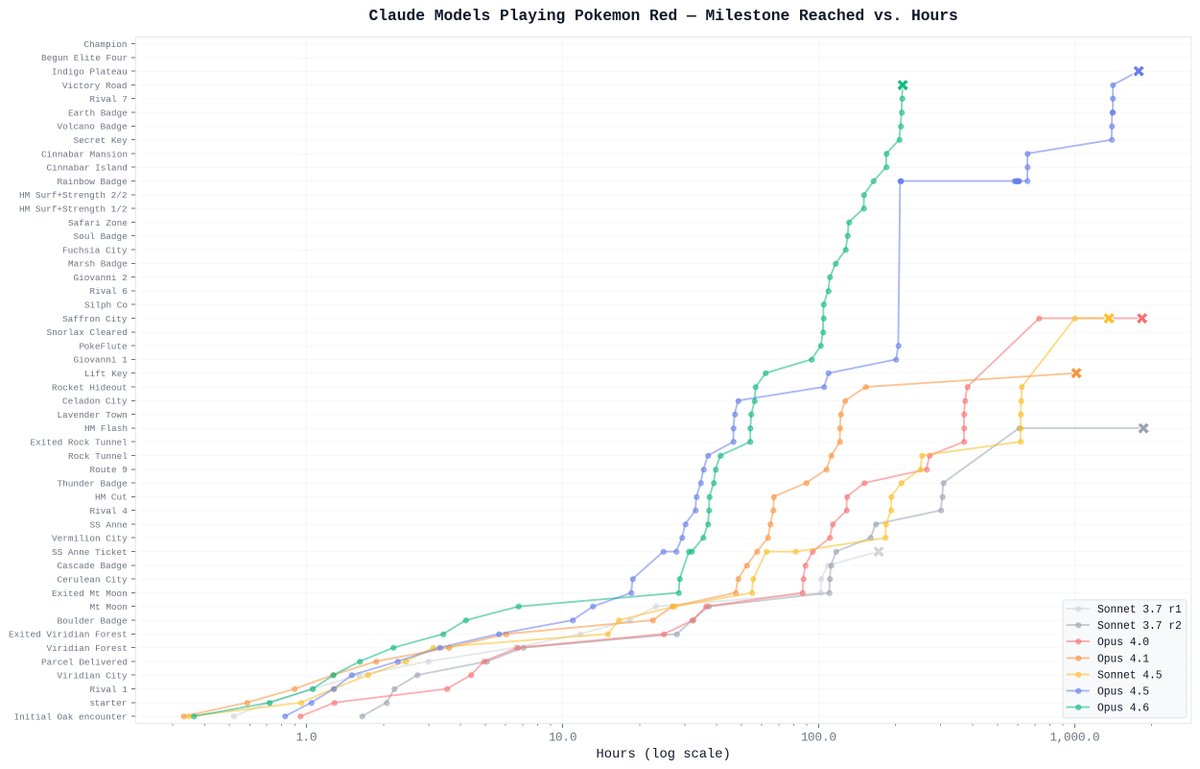

1 million context window: Now generally available for Claude Opus 4.6 and Claude Sonnet 4.6.

I'm so excited to introduce this! We've worked on a million different moving parts to produce this. I'm fairly confident it's the best multimodal model that exists, period -- and it's not too shabby at pushing back the LIMITs of retrieval either...

After almost 10 years of near nonstop grind, I’m taking 2 months of paternity leave to support my hero of a wife and welcome our twin daughters. @huggingface is in great hands with the team and @julien_c acting as interim CEO. Hope to return a changed man, to an even stronger HF, and to an AI field that’s more open and collaborative than ever!

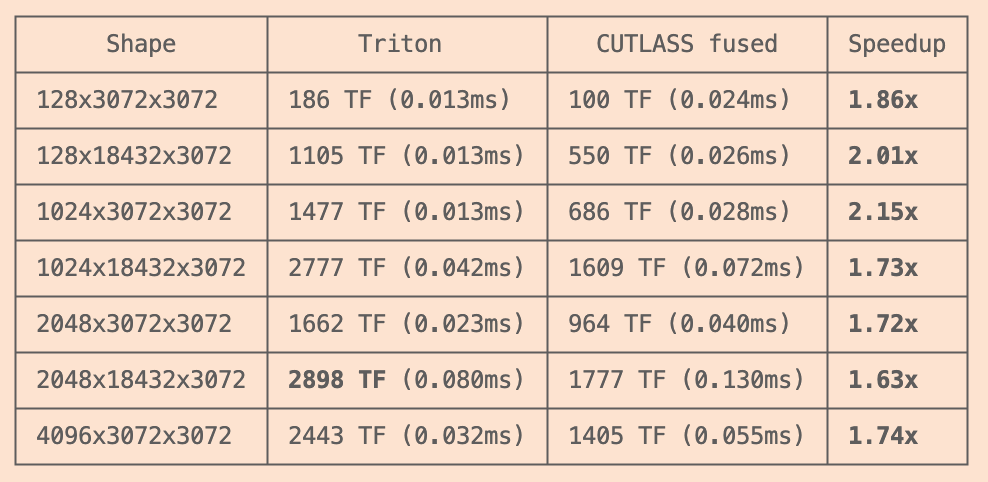

i open-sourced autokernel -- autoresearch for GPU kernels.

you give it any pytorch model. it profiles the model, finds the bottleneck kernels, writes triton replacements, and runs experiments overnight. edit one file, benchmark, keep or revert, repeat forever. same loop as @karpathy autoresearch, applied to kernel optimization.

95 experiments. 18 TFLOPS → 187 TFLOPS. 1.31x vs cuBLAS. all autonomous.

9 kernel types (matmul, flash attention, fused mlp, layernorm, rmsnorm, softmax, rope, cross entropy, reduce). amdahl's law decides what to optimize next. 5-stage correctness checks before any speedup counts.

the agent reads program.md (the "research org code"), edits kernel.py, runs bench.py, and either keeps or reverts. ~40 experiments/hour. ~320 overnight.

ships with self-contained GPT-2, LLaMA, and BERT definitions so you don't need the transformers library to get started.

github.com/RightNow-AI/au…
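The keep-or-revert loop and the Amdahl's-law targeting described above can be sketched in plain Python. This is an illustration, not autokernel's code: the function names (`amdahl_speedup`, `pick_target`, `keep_or_revert`), the assumed 2x per-kernel speedup used for ranking, and the toy millisecond timings are hypothetical, and a single boolean stands in for the 5-stage correctness checks. A real run would get its timings from profiling and benchmarking on a GPU.

```python
from typing import Dict, Iterable, List, Tuple


def amdahl_speedup(fraction: float, kernel_speedup: float) -> float:
    # Amdahl's law: overall speedup when a kernel that takes `fraction` of
    # total runtime becomes `kernel_speedup` times faster.
    return 1.0 / ((1.0 - fraction) + fraction / kernel_speedup)


def pick_target(profile_ms: Dict[str, float], assumed_kernel_speedup: float = 2.0) -> str:
    # Rank kernels by the end-to-end gain a fixed per-kernel speedup would buy;
    # with the same assumed speedup for all, this favors the largest time share.
    total = sum(profile_ms.values())
    return max(
        profile_ms,
        key=lambda name: amdahl_speedup(profile_ms[name] / total, assumed_kernel_speedup),
    )


def keep_or_revert(
    baseline_ms: float, candidates: Iterable[Tuple[float, bool]]
) -> Tuple[float, List[str]]:
    # Hill-climbing loop: each candidate is (measured_ms, passed_correctness).
    # A candidate is kept only if it is correct AND faster than the best so far;
    # otherwise the edit is reverted and the previous best stays in place.
    best = baseline_ms
    decisions = []
    for measured_ms, passed in candidates:
        if passed and measured_ms < best:
            best = measured_ms
            decisions.append("keep")
        else:
            decisions.append("revert")
    return best, decisions


if __name__ == "__main__":
    profile = {"matmul": 6.0, "layernorm": 3.0, "softmax": 1.0}  # toy timings, ms
    print(pick_target(profile))  # matmul
    print(amdahl_speedup(0.6, 2.0))  # whole-model gain from a 2x faster matmul
    print(keep_or_revert(6.0, [(5.0, True), (4.0, False), (5.5, True), (3.0, True)]))
    # (3.0, ['keep', 'revert', 'revert', 'keep'])
```

Ranking by Amdahl's law rather than by raw kernel speedup keeps the loop from polishing a kernel that barely contributes to end-to-end time: in the toy profile, even an infinitely fast softmax caps the whole-model gain at 1/0.9 ≈ 1.11x.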