0xmaddy | Tech Adrenaline

@tech_maddy

Building secure AI systems | Dev x Security Engineer | DMs open

We’re saying goodbye to the Sora app. To everyone who created with Sora, shared it, and built community around it: thank you. What you made with Sora mattered, and we know this news is disappointing. We’ll share more soon, including timelines for the app and API and details on preserving your work. – The Sora Team

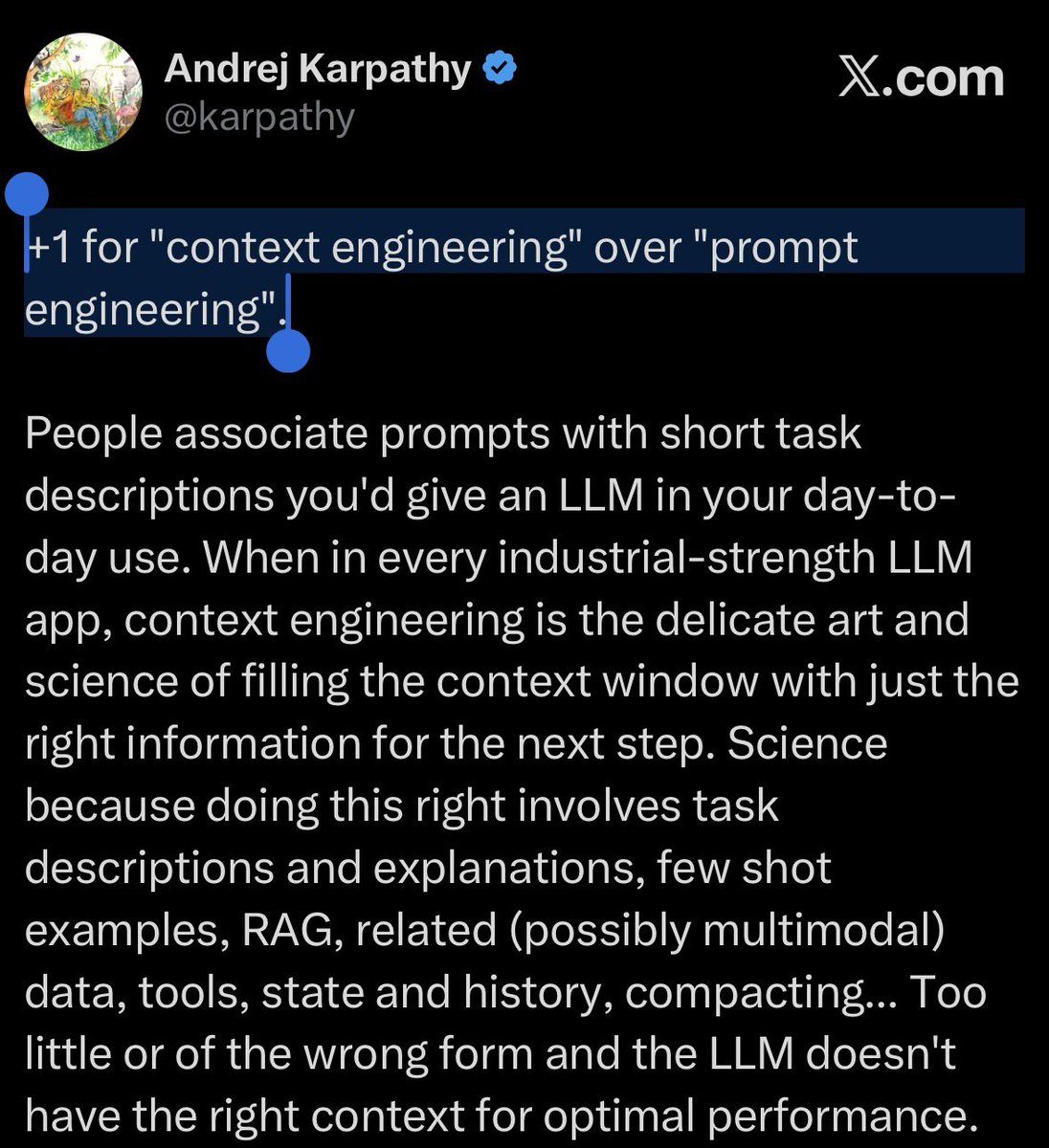

Is AI being designed to fail? Everyone talks about reasoning. But when given a task, the AI isn't reasoning the way you might expect. It looks at your input, finds the closest match it's seen before, and predicts the most likely next action. That process is called vector similarity search. It's genuinely powerful. It's also not the same thing as understanding what you actually meant. Think of a plumber who hears the word "leak" and starts pulling up floorboards before you've finished the sentence. He's not being careless. He's pattern-matching - that's exactly how he was trained. Your AI agent is doing the same thing. Context is the one thing that gets deprioritized when teams are racing to ship. But without it, you don't have an intelligent agent. You have a very fast guesser. Similarity ≠ relevance. How? Find out with the link in the comments ⬇️
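To make "similarity ≠ relevance" concrete, here is a minimal sketch of a similarity lookup. It is an illustration only, not how any particular agent or vector database works: the bag-of-words `embed` function stands in for a learned embedding model, and the function names, documents, and query are all made up for the example. We assume the user asking the question has a plumbing problem.

```python
import math
from collections import Counter


def embed(text):
    # Toy stand-in for an embedding model (an assumption for this sketch):
    # a bag-of-words count vector. Real systems use learned dense vectors.
    return Counter(text.lower().split())


def cosine(a, b):
    # Cosine similarity between two sparse count vectors.
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def nearest(query, documents):
    # Return the stored document whose vector is closest to the query.
    q = embed(query)
    return max(documents, key=lambda doc: cosine(q, embed(doc)))


# Hypothetical knowledge base: one software note, one plumbing note.
docs = [
    "memory leak in the kitchen display app",
    "fix a dripping pipe under the sink",
]

# Assumed intent: the user has water on the kitchen floor.
query = "leak in the kitchen"

for doc in docs:
    print(f"{cosine(embed(query), embed(doc)):.2f}  {doc}")
print("closest match:", nearest(query, docs))
```

The software note wins because it shares the most words with the query, even though the plumbing note is the one this user needs. Learned embeddings handle this toy case better, but the ranking is still by closeness in vector space, so extra context (who is asking, about what) is what turns the closest match into the relevant one.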

Give Claude a Blue Bubble! iMessage Channel now available.

Your work tools in Claude are now available on mobile. Explore Figma designs, create Canva slides, check Amplitude dashboards, all from your phone. Give it a try: claude.com/download

Anthropic CEO: “50% of all entry-level Lawyers, Consultants, and Finance Professionals will be completely wiped out within the next 1–5 years.” Grad students and junior hires are cooked.