bryan

@BryzonX

Buy risk assets & chill // Fintwit’s top honesty broker // Trading journal

@ParadisLabs How does one buy when it's miles away from the 200ema and 50ema?

Samsung Foundry's 4nm "Fully Booked" Through Next Year… Set for H2 Profitability Turnaround
Samsung Electronics' foundry flagship 4nm line has entered "fully booked" status through next year. The result reflects the convergence of HBM4 volume ramp and orders from global Big Tech. Industry observers expect the chronically loss-making foundry division to fire its first signal of recovery as early as the second half of this year.
According to the semiconductor industry on the 3rd, Samsung Electronics' 4nm foundry process has recently secured order volumes extending into next year's production. A semiconductor industry source who requested anonymity said, "The 4nm process has recently demonstrated better-than-expected stability among global customers, and demand is exploding," adding, "The line is running so tightly that it is effectively impossible to take additional orders through next year."
The core driver of these orders is HBM4. Samsung Electronics produces the base die mounted in its HBM4 on the 4nm foundry process. As the company begins full-scale HBM4 supply to AI accelerator vendors such as NVIDIA and AMD, the utilization rate of the supporting 4nm foundry line has likewise reached its ceiling.
Demand for the 4nm process is not confined to memory. Global fabless companies that previously relied on TSMC are now knocking on Samsung's door, factoring in supply-chain diversification and cost-effectiveness. NVIDIA and Google currently appear on the customer roster for Samsung's 4nm node. With improved yields and verified power efficiency (performance per watt), the "love calls" from Big Tech continue to come in.
With high-value-added HBM4 and global Big Tech volumes filling the 4nm line, expectations for an earnings turnaround are also rising. The 4nm process has already completed its large-scale investment phase, easing the depreciation burden. The structure is one in which profitability rises sharply as utilization is maximized.
A source at a Samsung Foundry partner company assessed, "Thanks to the stabilization of the 4nm process and the strong demand anchor of HBM4, Samsung Foundry is expected to swing to profit as early as the second half of this year, or at the latest in the first half of next year," adding, "After a prolonged slump, it has clearly entered a recovery phase."
That said, the industry points to the new fab under construction in Taylor, Texas, as the biggest variable for future earnings. The substantial initial operating costs and labor expenses incurred during fab completion and ramp-up preparation could swing reported earnings depending on how they are accounted for on the books. Another semiconductor industry source said, "Since the Taylor fab currently being built sits on U.S. soil, whether the related performance is booked under the U.S. subsidiary (DSA) or consolidated into the domestic foundry results remains to be seen."

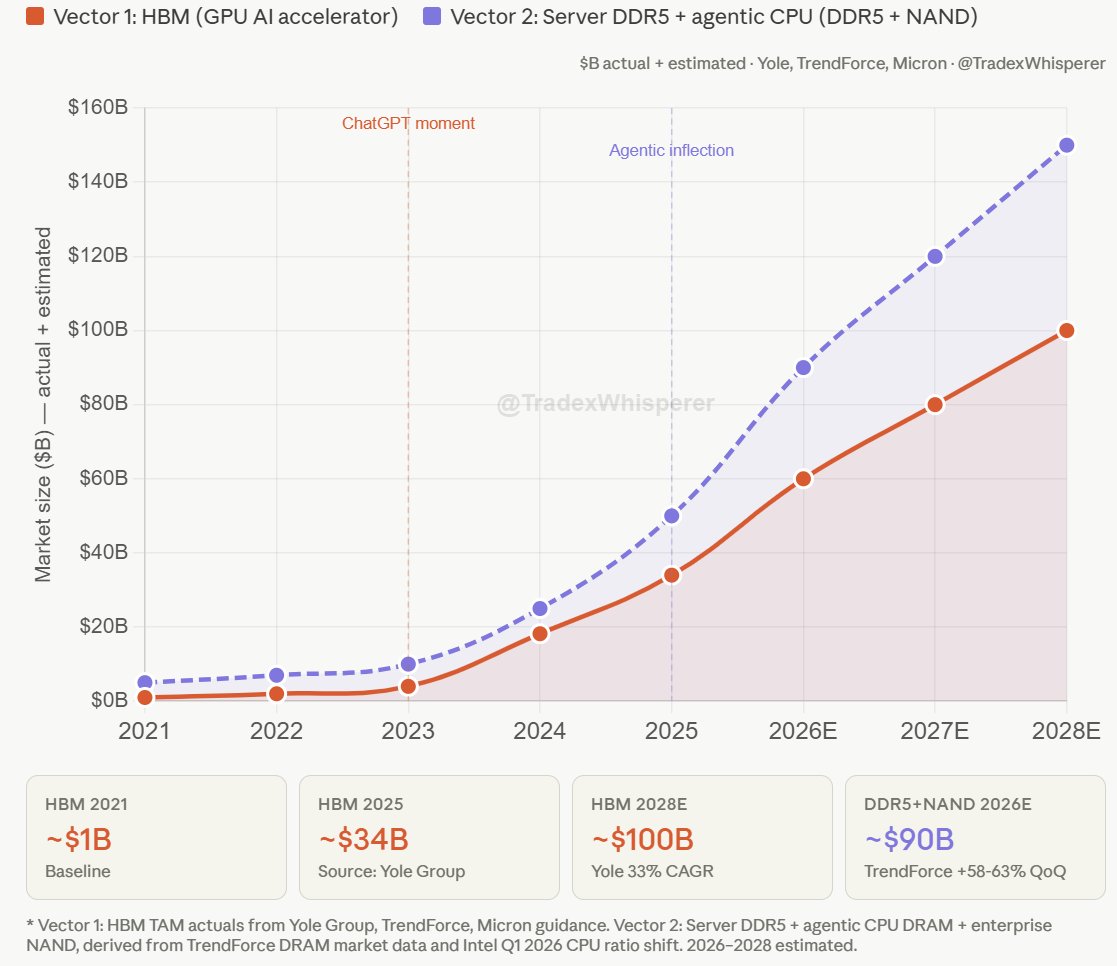

$NVDA $MU $EWY Key Comment from Jensen Huang on Revenue Generation, Agentic AIs: "We have now seen the inflection of agentic AI and the usefulness of agents across the world, in enterprises everywhere. You're seeing incredible compute demand because of it. In this new world of AI, compute is revenues. Without compute, there's no way to generate tokens. Without tokens, there's no way to grow revenues. So in this new world of AI, compute equals revenues. And I am certain that at this point, with the productive use of Codex and Claude Code and the excitement around Claude Cowork and, you know, just the incredible enthusiasm about OpenClaw and the enterprise versions of them, all of the enterprise ISVs who are now working on agentic systems on top of their tools, platforms. I am certain at this point that we are at the inflection point."

$AMD CEO LISA SU SEES COMPUTE DEMAND GROWING BY 100X OVER THE NEXT COUPLE YEARS. AMD has long been positioning itself as the leader in AI compute. The demand is finally here.

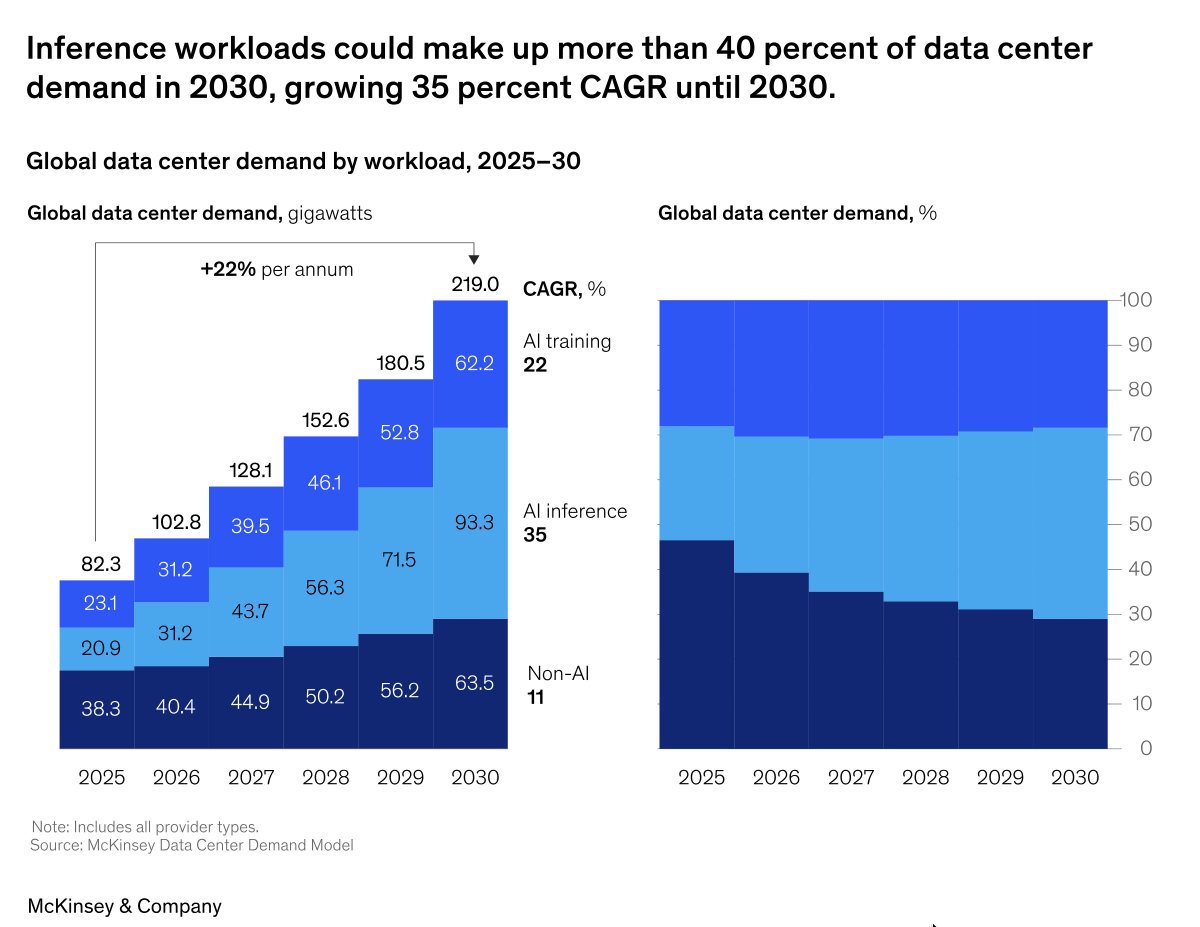

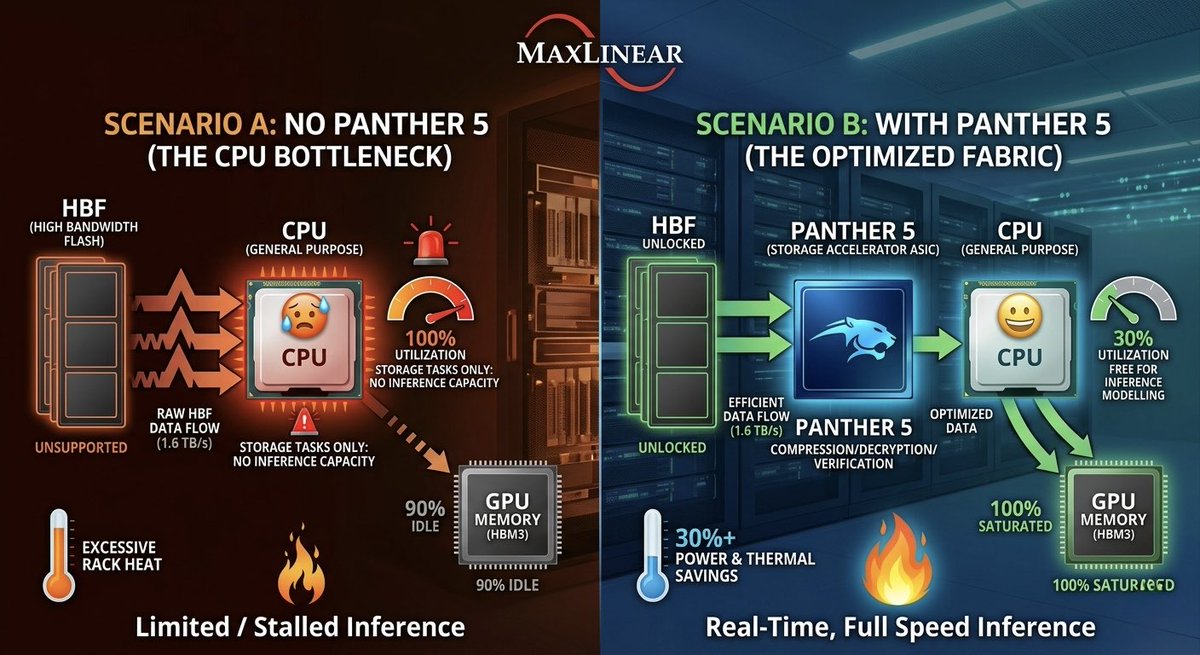

"The Next Bottleneck After HBM Is HBF"... A Computing Pioneer's Prediction
"I have been consistently paying close attention to High Bandwidth Flash (HBF). I'm also collaborating with semiconductor companies on this. HBF is highly likely to stand at the center of the next bottleneck, a surge in demand."
David Patterson, professor at UC Berkeley, Turing Award laureate, and widely recognized as the architect of RISC (Reduced Instruction Set Computing, an approach that simplifies instructions to improve processing efficiency), made these remarks on April 30 (local time) when he met with reporters in San Francisco immediately after delivering a keynote at the Dreamy Next event. Asked about what comes after HBM (High Bandwidth Memory), which is currently in a supply-constrained bottleneck, Professor Patterson answered that HBF will emerge as the next focus.
Specifically, he said, "Although a number of technical challenges still remain, the HBF being developed by companies such as SK hynix and SanDisk is a meaningful alternative in that it can deliver large capacity with low power consumption," adding, "Going forward, how efficiently data can be stored and delivered will become the critical variable."
This past March, SK hynix announced that it had joined hands with U.S. flash memory company SanDisk to drive the global standardization of HBF. Unlike HBM, which stacks DRAM, HBF is built by stacking NAND flash, a non-volatile memory. Their roles are also distinct. While HBM serves as a fast computation aid, HBF is focused on high-capacity storage of the vast amounts of data that AI processes.
HBF is drawing attention as the AI inference market grows. The AI market is broadly divided into learning (training) and inference. Training is the process of feeding massive amounts of data to teach an AI model. Inference is the stage in which results are derived based on the trained data.
In inference AI, the ability to continuously store and retrieve vast amounts of intermediate data (such as prior conversations, judgment outcomes, and task context) is crucial. This is because AI carries out reasoning by remembering context and building upon it. The problem is that all of this data is difficult to fit into HBM. Since HBM is optimized for handling data used immediately, its capacity itself is inherently limited. Moreover, given its high price, processing the enormous amounts of context data generated during inference using HBM alone would impose significant cost burdens. As a result, an environment has formed in which both HBM and HBF are needed simultaneously, a kind of division of labor.
Domestic experts in Korea also anticipate that the importance of HBF will grow going forward. At an HBF research and technology development strategy briefing held this past February, Kim Jung-ho, professor in the School of Electrical and Electronic Engineering at KAIST, stated, "If the central processing unit (CPU) was the core in the PC era and low-power technology was the core in the smartphone era, memory will be the core of the AI era," adding, "What determines speed is HBM, and what determines capacity is HBF." He further predicted, "From 2038 onward, demand for HBF will surpass that of HBM."

$SYNA back above all daily moving averages and cleared local resistance. This could be a big mover in the coming days. I have a position for edge/robotics basket exposure. NFA.

$PENG Retesting the 8-year breakout, looking for continuation. Up.