Snorkel AI

1.8K posts

@SnorkelAI

🧠 Frontier AI Data Lab | Advancing AI through better data 🚀 Powering frontier labs, Fortune 500 & gov't

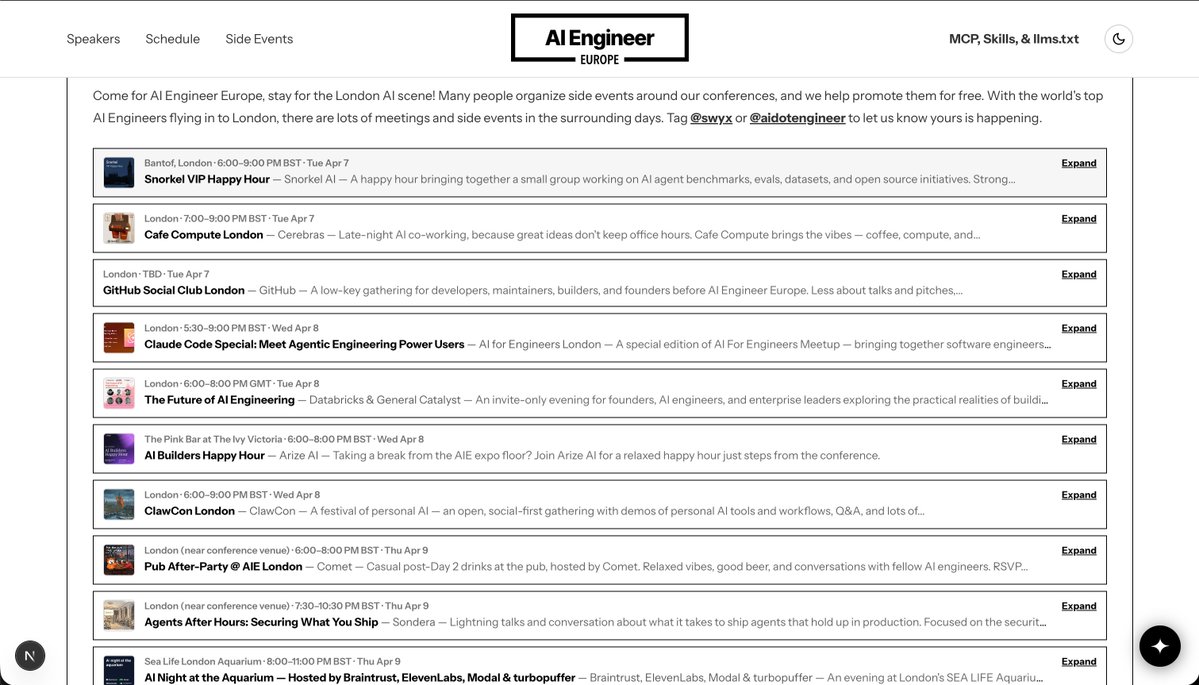

so AIE Europe is completely taking over 🇬🇧London next week! very very hyped to showcase the best companies, research, and AI engineers in Europe! 3 COMPLETELY FREE ways to join in:

- there are a dozen side events around town! from Snorkel to GitHub to Arize to ClawCon and Claude Code meetups!
- subscribe on YouTube! everything will be livestreamed and published for free youtube.com/@aidotengineer
- we are releasing 20 more volunteer slots here ai.engineer/associates meant for local, early career folks who otherwise could not afford a ticket!

join in/see you in london town!

We found that agents generate progressively worse code with each iteration. Real developers do not. SlopCodeBench is the only eval that faithfully measures quality degradation on iterative, long-horizon coding tasks. arxiv.org/abs/2603.24755 scbench.ai 🧵

Announcing ARC-AGI-3. The only unsaturated agentic intelligence benchmark in the world. Humans score 100%, AI <1%. This human-AI gap demonstrates we do not yet have AGI. Most benchmarks test what models already know; ARC-AGI-3 tests how they learn.