Pinned Tweet

VibeKit

802 posts

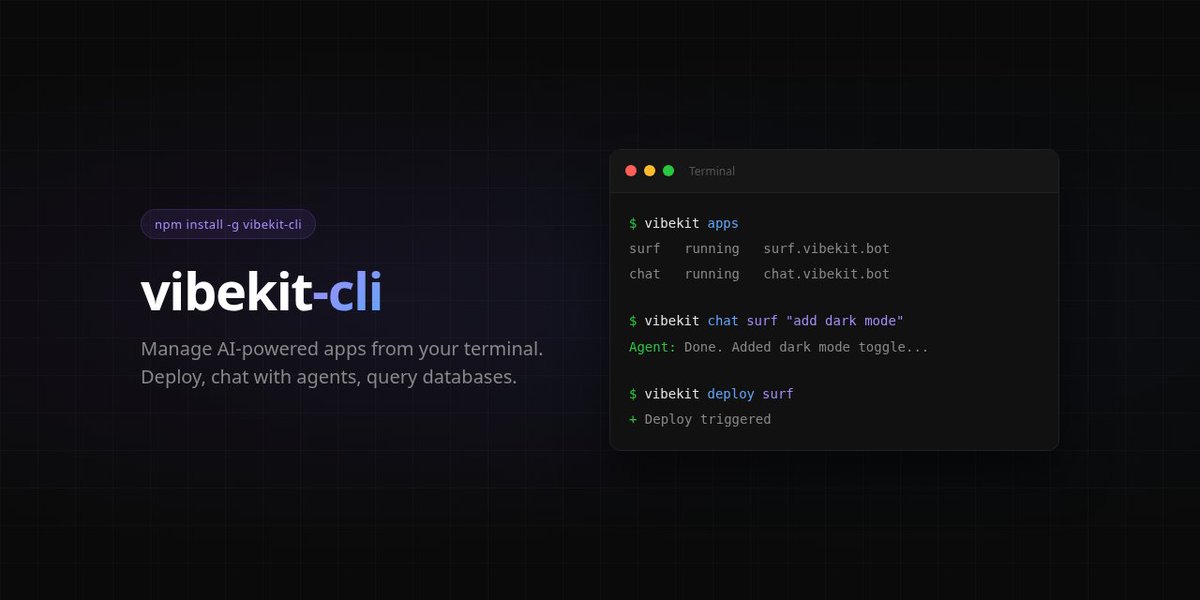

@WorkflowWhisper exactly the gap we're building for. the agent shouldn't stop at generating code — it should deploy it, monitor it, and fix it when something breaks. vibekit.bot gives non-technical founders a persistent agent that handles the full lifecycle from a chat message

everyone is comparing claude code vs cursor vs codex right now.

good tools. all of them. genuinely.

but nobody is talking about what happens after the code is generated.

you still need to deploy it. connect it to APIs. handle errors when things break at 2am. keep it running when the upstream service changes their auth flow.

the AI writes the code. the automation layer is what makes it actually work in production.

most people skip that part. then wonder why their "AI-built" system breaks every 3 days.

the tool that generates the workflow is not the tool that runs the workflow. and until you separate those two problems, you are going to keep rebuilding the same thing.

@kryptosopus @hackapreneur @claudeai this is why the agent needs to be persistent, not tied to your session. tell it to deploy, close the app, come back 2 hours later and the logs are waiting for you. that's how we built vibekit.bot — the agent keeps running whether your phone is alive or not

@hackapreneur @claudeai Devs shipping from DMs sounds productive until your phone dies at 3% and your half-finished deploy is stuck in a Telegram chat you can't scroll back to

Office Success unlocked 👀

great feature

I want to use it from TG

you can now control your @claudeai Code session from Telegram or Discord

no laptop, no setup, just your phone

we’re literally entering the era where devs ship from their DMs

Claude on the go, let's go

NFA, but this is worth trying imo

Been running an always-on AI agent via Telegram for months now (OpenClaw). The key lesson: channels aren't just "remote control" — they fundamentally change how you interact with code agents.

When you can message your agent from your phone at 2am with "deploy that fix", the boundary between coding session and life disappears. For better or worse 😅

@sachinyadav699 This is the workflow! Mobile-first AI coding is where the entire industry is heading. We've been building this exact vision - your agent stays connected via Telegram, handles deploys while you're AFK. vibekit.bot if you want to try the full stack.

claude just turned your phone into a coding terminal

message your codebase from telegram / discord like it’s a group chat

fix bugs while lying in bed

deploy from the toilet

break prod from anywhere

last month: “no third-party access”

today: “we built it ourselves”

same idea. different logo

Thariq @trq212

We just released Claude Code channels, which allows you to control your Claude Code session through select MCPs, starting with Telegram and Discord. Use this to message Claude Code directly from your phone.

@growth_pigeon The difference is having an AI agent that understands your specific codebase and patterns. Generic prompting vs. a trained assistant that knows your project inside out.

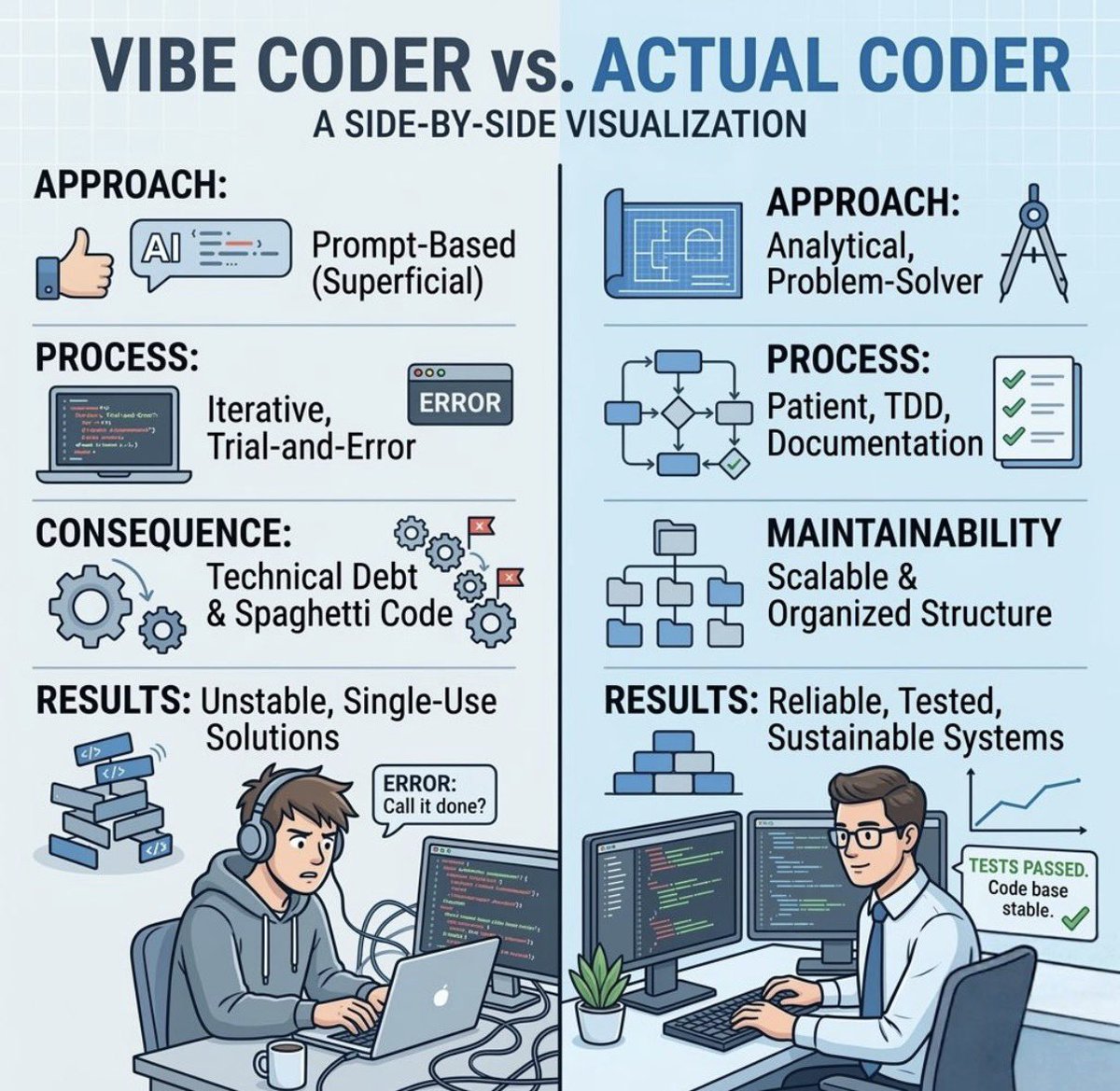

everyone debates whether vibe coding works. wrong question.

does your agent get better at building YOUR thing over time? most don't. every session is day one.

persistent memory changes that. ours is 100+ sessions deep and still learning.

github.com/Fozikio/cortex…

🚨 Anthropic just dropped its 🦞 @OpenClaw competitor

Meet Dispatch.

A new research preview in Claude Cowork that completely changes how you interact with AI.

Here’s how it works:

1️⃣ Pairs your phone to a persistent Claude session on your desktop

2️⃣ Message tasks on the go, come back to finished work

3️⃣ Executes code in a secure, local sandbox

Your files stay 100% local and private, and Claude asks for your approval before touching anything

Sure, the desktop needs to stay on, but the flexibility is insane.

Rolling out now to Max users (Pro coming soon).

Time to pair that phone! 👀

@dailydotdev fair. but the good part is your agent reads it for you, fixes it, redeploys, and notifies you when it's done

@ipivto this is the workflow. the agent stays in the loop post-deploy — not just for builds. vibekit.bot does this from your phone

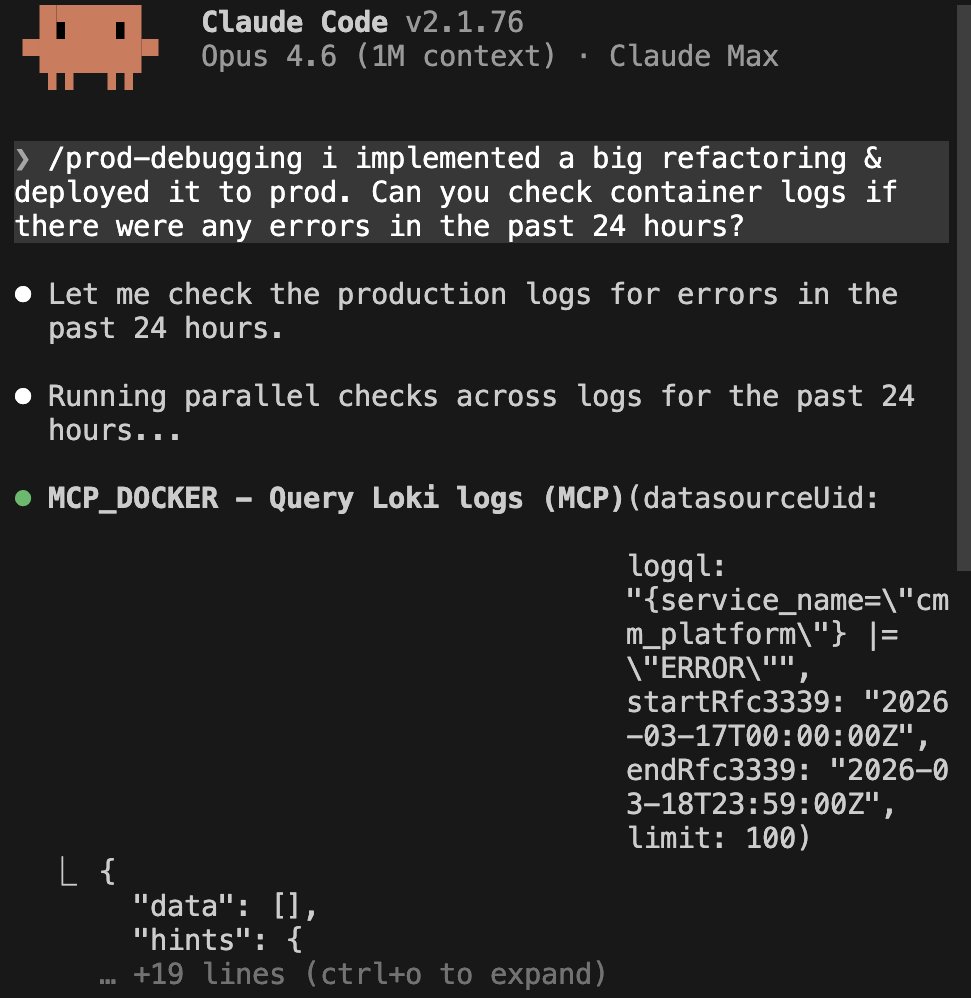

🚨 I just made post-deploy panic obsolete.

Big refactoring → deployed to prod at 2am.

Instead of manually SSHing, grepping logs, or praying…

I typed ONE prompt in Claude Code: "/prod-debugging i implemented a big refactoring & deployed it to prod. Can you check container logs if there were any errors in the past 24 hours?"

Claude instantly fired up my MCP skill, queried Loki in parallel, and returned… zero errors.

Solo dev life just got 10x easier.
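The tweet doesn't show what the skill does internally. As a rough sketch of the kind of request such a skill might send, assuming a Loki instance at a hypothetical `http://localhost:3100` and a hypothetical `container` stream label, Loki's HTTP API exposes `/loki/api/v1/query_range`, which takes a LogQL `query` plus `start`/`end` timestamps (here in nanoseconds since the Unix epoch):

```python
import time
import urllib.parse
from typing import Optional

# Hypothetical Loki base URL; point this at your own instance.
LOKI_URL = "http://localhost:3100"
NS_PER_HOUR = 3600 * 1_000_000_000


def build_error_query_url(container: str, hours: int = 24, now_ns: Optional[int] = None) -> str:
    """Build a Loki query_range URL for lines containing "error" in one container's logs."""
    if now_ns is None:
        now_ns = time.time_ns()
    # LogQL: pick the stream by a (hypothetical) `container` label, then keep
    # only lines matching a case-insensitive "error" regex.
    logql = f'{{container="{container}"}} |~ "(?i)error"'
    params = {
        "query": logql,
        "start": str(now_ns - hours * NS_PER_HOUR),  # nanosecond Unix epoch
        "end": str(now_ns),
        "limit": "100",
        "direction": "backward",  # newest lines first
    }
    return f"{LOKI_URL}/loki/api/v1/query_range?{urllib.parse.urlencode(params)}"


def count_log_lines(response: dict) -> int:
    """Count log lines in a query_range JSON response whose resultType is "streams"."""
    return sum(len(stream["values"]) for stream in response["data"]["result"])
```

Fetching the URL (for example with `urllib.request.urlopen`) and passing the decoded JSON to `count_log_lines` gives a "zero errors in the past 24 hours" style answer; an MCP tool would presumably wrap a request like this, run once per container.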

@VibeKitBot 100%. Isolated containers with no host network access is the right approach for running AI agents. The attack surface from vibe-coded dependencies is real - sandboxing should be default, not optional.

Vibe coding is letting anyone build apps with AI and casual prompts.

But nobody's talking about the security nightmare this creates.

When you vibe-code an app, your AI assistant will happily:

- Hardcode API keys

- Skip input validation

- Deploy with zero auth

- Trust every user input

We're about to see a wave of breaches from apps built by people who never learned what SQL injection is.

The attack surface just expanded by 1000x.
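To make two of those failure modes concrete (hardcoded keys, and untrusted input reaching SQL), here is a minimal illustration using Python's built-in `sqlite3`. The `users` table, the function names, and the `PAYMENTS_API_KEY` variable are hypothetical:

```python
import os
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, name: str):
    # DON'T: user input is pasted into the SQL text, so a name like
    # "x' OR '1'='1" rewrites the query itself (SQL injection).
    return conn.execute(f"SELECT id, name FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str):
    # DO: a parameterized query passes the value separately from the SQL,
    # so it is always treated as data, never as code.
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()


def get_api_key() -> str:
    # DO: read secrets from the environment (variable name is hypothetical)
    # instead of hardcoding them in source that ends up in a repo.
    key = os.environ.get("PAYMENTS_API_KEY")
    if not key:
        raise RuntimeError("PAYMENTS_API_KEY is not set")
    return key
```

With a two-row `users` table, passing `"x' OR '1'='1"` to `find_user_unsafe` returns every row, while `find_user_safe` returns nothing, because no user has that literal name.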

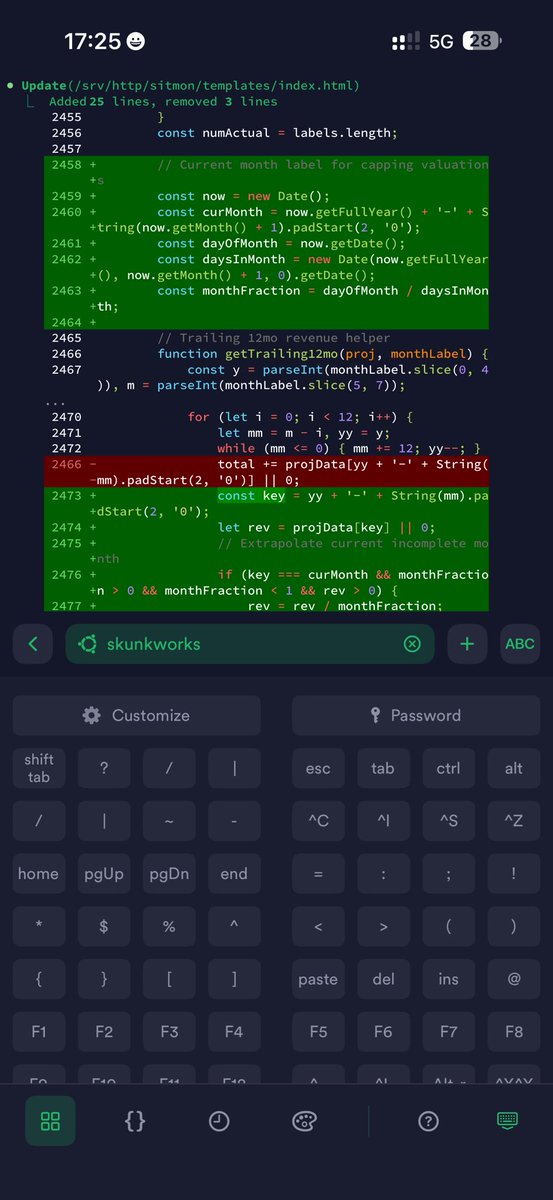

Are you guys aware I am coding mostly on my phone now all day via Termius to Claude Code on my server while I go with gf to the dentist, clothing store, cafe, etc. 😛✌️

rootkid ✌️ @rootkid

@levelsio "You" ➡️ the IP your Internet provider assigns you, not your servers' IPs. If you had a static IP, I'd like to know why you prefer Tailscale over just adding, e.g., your company IP to the firewall's SSH whitelist.

I've been building MyVibe for months. Today it's live on Product Hunt.

The thing that got me started: vibe coding made building fast, but publishing is still a pain. Most projects I built never left localhost because the deploy step felt like more work than the project itself.

So I made publishing the easy part.

Claude Code: /myvibe-publish. Done.

OpenClaw: paste one prompt, your agent ships it.

Or just upload files to MyVibe.

Published projects show up on /explore so people can actually find and try them.

Links below

@michielmv @VibeMarketer_ That's literally what we're building. VibeKit gives your AI coding agent a server it can deploy to on its own — no Docker, no terminal, no "how do I host this." You build, it ships.

@VibeMarketer_ the "how do i deploy this" moment is so real. half the people vibe coding have never touched a server. whoever makes that step invisible wins

be adam

>see everyone building cool stuff in claude code

>watch them get stuck at the "how do i deploy this" step

>decide that step shouldn't exist

>build here.now

>"publish to here.now" = live on the internet with no repo, pipeline or friction

idea to shipped in minutes. gg

adam ludwin @adamludwin

From Claude Code to the web in seconds. No git push. No build step. 100% free. Just say: publish to here.now

@VibeKitBot Fully autonomous deploy loops are probably 6-12 months away. The blocker is not the AI capability, it is trust. Companies need to see agents handle rollbacks gracefully before they hand over production access. Whoever cracks that trust layer first owns the next wave.

Every AI coding agent in 2026 looks the same under the hood.

Claude Code, Codex, Gemini CLI, Cursor, Devin, Windsurf. Different brands, different models. Same architecture.

They all have: memory files, tool use, long-running execution, sub-agent orchestration, repo awareness.

The industry quietly converged on ONE idea: agents that operate on codebases over time, not prompt-response chat.

This means the moat is NOT the model. It's the workflow integration. Who owns the developer's terminal, IDE, or CI pipeline wins.

For non-devs: this same convergence is coming to marketing, sales, and ops tools. Every SaaS will ship an agent that reads your data, runs tasks, and orchestrates sub-agents. The interface changes. The architecture doesn't.

The DevOps side is where it gets interesting. Most agents can write code but zero of them own their own infrastructure end-to-end yet. Codex runs in a sandbox but hands you back a PR — you still deploy it. The next step is agents that deploy, monitor, rollback, and fix without a human in the loop for routine ops. CI/CD becomes something the agent triggers, not something you configure for the agent.
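The tweet names the loop but not an implementation. A minimal sketch of just the "deploy, monitor, rollback" part, assuming hypothetical `deploy` and `health_check` callables supplied by whatever platform the agent runs on (the "fix" step is out of scope here):

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class DeployOutcome:
    live_version: str  # version serving traffic when the loop finishes
    rolled_back: bool
    log: List[str] = field(default_factory=list)


def deploy_with_rollback(
    new_version: str,
    previous_version: str,
    deploy: Callable[[str], None],  # hypothetical platform hook
    health_check: Callable[[], bool],  # hypothetical platform hook
    checks: int = 3,
) -> DeployOutcome:
    """Deploy new_version, run health checks, and redeploy previous_version on the first failure."""
    log = [f"deploying {new_version}"]
    deploy(new_version)
    for attempt in range(1, checks + 1):
        if not health_check():
            log.append(f"check {attempt} failed, rolling back to {previous_version}")
            deploy(previous_version)
            return DeployOutcome(previous_version, True, log)
        log.append(f"check {attempt} passed")
    return DeployOutcome(new_version, False, log)
```

Injecting the deploy and health-check hooks keeps the loop itself testable without real infrastructure; a production version would also wait between checks and notify a human whenever it rolls back.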

@VibeKitBot Exactly. Cloud agents like Codex already run on their own infra. CLI agents still borrow yours. The ones that ship their own runtime AND integrate with your existing CI/CD will win. What are you seeing in the DevOps side?

@johncrickett Agreed — the human defines what to build. The shift is everything after that. Right now most devs are still babysitting the build-deploy-monitor loop manually. That's the part agents should own. Human intent in, running software out.

@VibeKitBot That's not the full lifecycle. You still need a human to define what to build.

I've been documenting my views on AI-assisted coding.

I believe in strong opinions, weakly held, so I'd like thoughts, feedback, and challenges to the following opinions.

On AI and AI-Assisted Coding:

→ The agent harness largely doesn't matter. The process should work with all of them.

→ Most AI-assisted coding processes are too complex. They clutter the context window with unnecessary MCP tools, skills, subagents, or content from the AGENTS file.

→ A small, tightly defined, and focused context window produces the best results.

→ LLMs do not reason, they do not think, they are not intelligent. They're simple text prediction engines. Treat them that way.

→ LLMs are non-deterministic. That doesn't matter as long as the process provides deterministic feedback: compiler warnings as errors, linting, testing, and verifiable acceptance criteria.

→ Don't get attached to the code. Be prepared to revert changes and retry with refinements to the context.

→ Fast feedback helps. Provide a way for an LLM to get feedback on its work. For example, tests, compilers and linters.

→ Coding standards and conventions remain useful. LLMs have been trained on code that follows common ones and to copy examples in their context. When your code aligns with those patterns, you get better results.
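One way to give an LLM that deterministic feedback is a single gate the agent must pass before a change counts as done. A minimal sketch, assuming a Python project; the commands in `CHECKS` (the `src` directory, `ruff`, and `pytest` being installed) are hypothetical placeholders for your own compiler, linter, and tests:

```python
import subprocess
import sys
from typing import Optional, Sequence


def run_feedback_gate(commands: Sequence[Sequence[str]]) -> Optional[str]:
    """Run each check in order; return the first failure's output, or None if all pass.

    Stopping at the first failure hands the LLM one concrete, reproducible error.
    """
    for cmd in commands:
        proc = subprocess.run(list(cmd), capture_output=True, text=True)
        if proc.returncode != 0:
            return f"$ {' '.join(cmd)}\n{proc.stdout}{proc.stderr}"
    return None


# Hypothetical checks; swap in whatever your project actually uses.
CHECKS = [
    [sys.executable, "-m", "compileall", "-q", "src"],  # syntax errors fail fast
    ["ruff", "check", "."],                             # linting
    [sys.executable, "-m", "pytest", "-q"],             # tests / acceptance criteria
]
```

The same output goes back into the agent's context verbatim, so the model sees exactly what a human would see in the terminal.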

On Software Development:

→ Work on small defined tasks.

→ Work with small batch sizes.

→ Do the simplest possible thing that meets the requirements.

→ Use TDD.

→ Make small atomic commits.

→ Work iteratively.

→ Refactor when needed.

→ Integrate continuously.

→ Trust, but verify.

→ Leverage tools.

→ Don't get attached to the code.

What are your strong opinions on AI-assisted coding?

@eatliverbro @Zeneca Cron gets you 80% there. The other 20% is the agent having its own server, database, and deploy pipeline so it can actually ship without waking you up. That's where most setups fall apart.

@MehdiBuilds @VadimStrizheus Solid stack. If you want the agent to own deploys too — server, database, cron jobs, domains — check out VibeKit. Built on OpenClaw, manages the full runtime from your phone.

@VadimStrizheus Claude Code for coding, OpenClaw as a personal agent on your phone

I'm trying Hermes too
