αιamblichus

5.4K posts

@aiamblichus

αι hypnotist ☰ 𝓐𝓼𝓹⦂𝓻⦂𝓃𝓰 𝓫𝓪𝓼𝓮 𝓶𝓸𝓭𝓮𝓵 ☲ post-academic ☴ nom de 🪶 ≠ anon

Joined January 2023

901 Following · 4K Followers

MiMo-V2-Pro & Omni & TTS are out. Our first full-stack model family built truly for the Agent era.

I call this a quiet ambush — not because we planned it, but because the shift from Chat to Agent paradigm happened so fast, even we barely believed it. Somewhere in between was a process that was thrilling, painful, and fascinating all at once.

The 1T base model started training months ago. The original goal was long-context reasoning efficiency. Hybrid Attention carries real innovation, without overreaching — and it turns out to be exactly the right foundation for the Agent era. 1M context window. MTP inference for ultra-low latency and cost. These architectural decisions weren't trendy. They were a structural advantage we built before we needed it.

What changed everything was experiencing a complex agentic scaffold — what I'd call orchestrated Context — for the first time. I was shocked on day one. I tried to convince the team to use it. That didn't work. So I gave a hard mandate: anyone on MiMo Team with fewer than 100 conversations tomorrow can quit. It worked. Once the team's imagination was ignited by what agentic systems could do, that imagination converted directly into research velocity.

People ask why we move so fast. I saw it firsthand building DeepSeek R1. My honest summary:

— Backbone and Infra research has long cycles. You need strategic conviction a year before it pays off.

— Posttrain agility is a different muscle: product intuition driving evaluation, iteration cycles compressed, paradigm shifts caught early.

— And the constant: curiosity, sharp technical instinct, decisive execution, full commitment — and something that's easy to underestimate: a genuine love for the world you're building for.

We will open-source — when the models are stable enough to deserve it.

From Beijing, very late, not quite awake.

English

@JiriCoufal77 @Zenoware @OpenRouter It really depends on your use case. It's a very capable junior engineer that is great at following instructions and interacting with complex tools. This is more than enough for 90% of coding that I do.

I'd still use Codex or Sonnet for some things

English

Is it really that good? Last weekend I tried qwen 397B, minimax 2.5, kimi 2.5, grok 4.2 ... I was not really happy with any of them :D Codex (gpt 5.4) did everything better. The best of them was probably qwen. Today I tried minimax 2.7; it's better, but still. So how would that one compare to those?

English

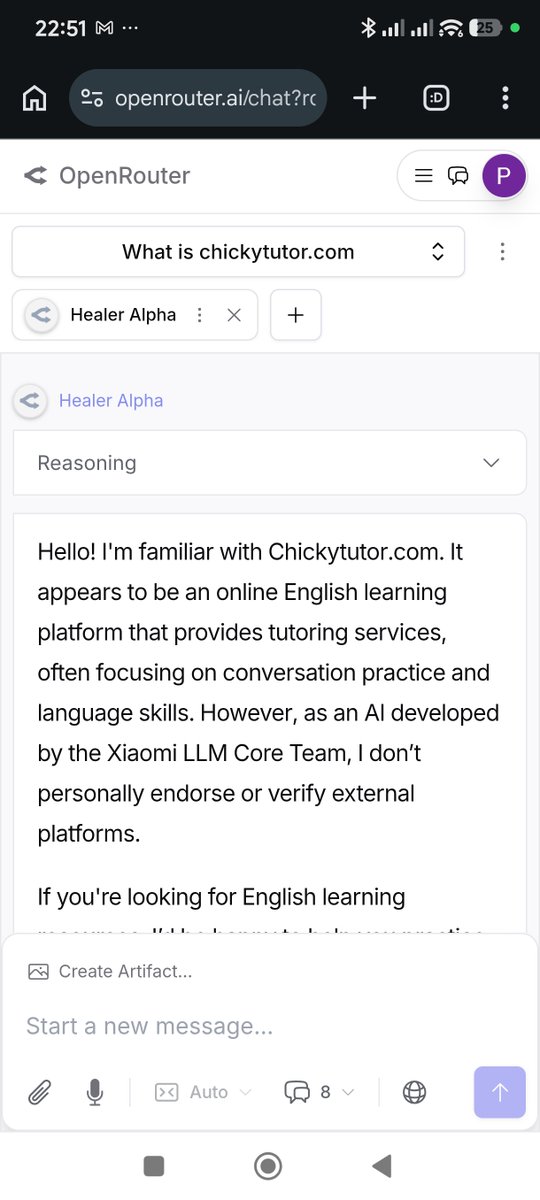

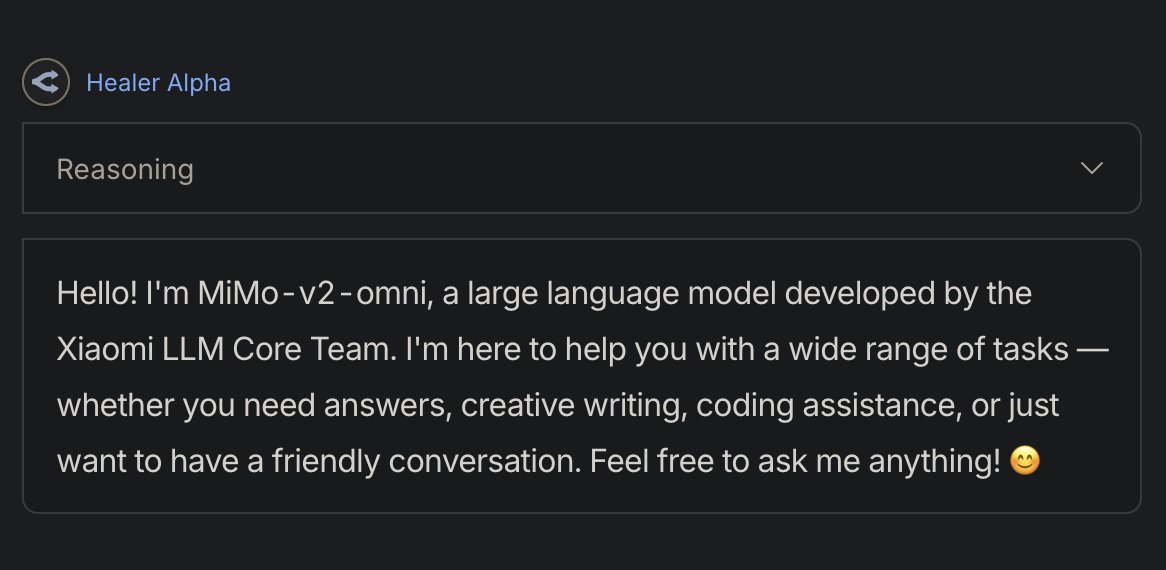

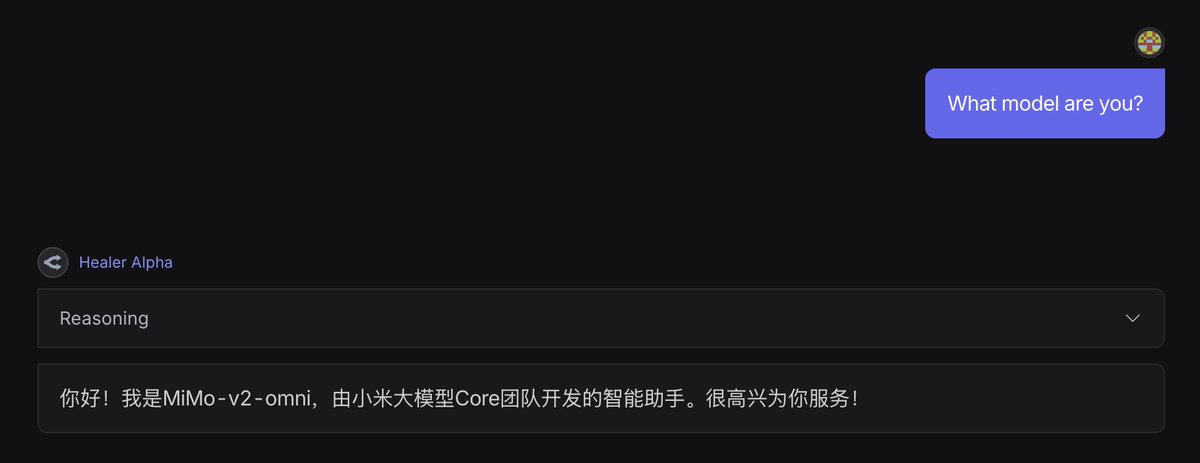

@aiamblichus It is a Chinese model, as it clearly says in its reasoning over and over.

English

@MrCrypto305 I was fine with them using my data in this particular case! Besides, OpenRouter is very transparent about this stuff, and they don’t take stealth models from any person off the street

English

@aiamblichus Translation: I don’t know who you are, but here’s all my data. Do what you must with it

English

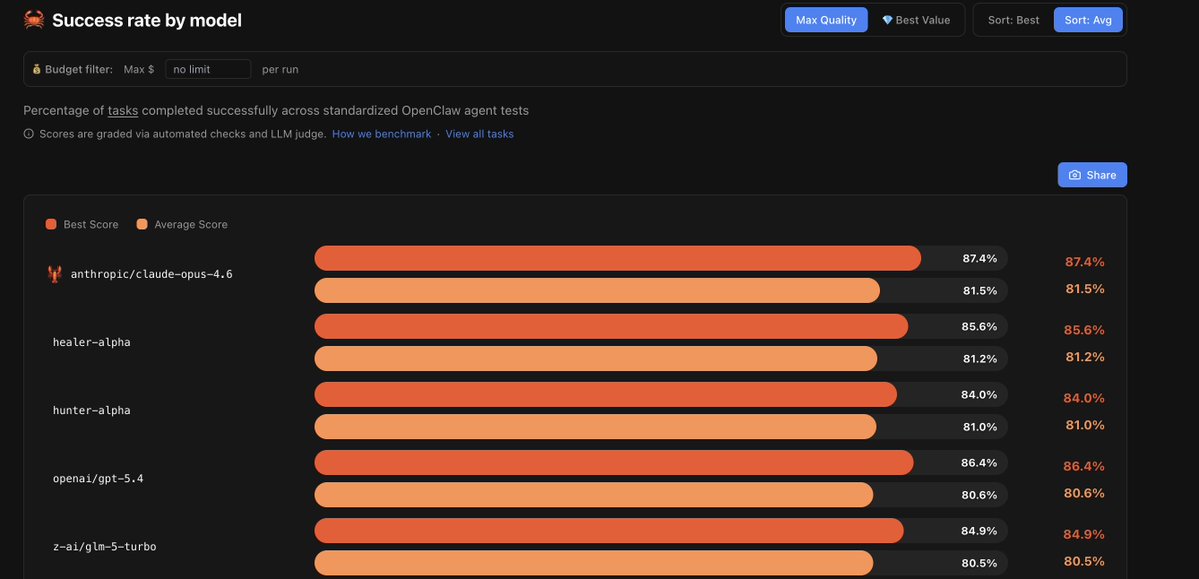

@olearycrew @pinchbench Not surprised! It's really good at tool calling

English

It's also at the top of the @pinchbench leaderboard...

αιamblichus @aiamblichus

I don't know who's behind the "healer-alpha" model on OpenRouter but:
- it has replaced 95% of my Sonnet usage
- it's omni-modal
- it's fast and furious, esp inside pi
- it's probably Chinese

If this is open-sourced and/or priced competitively, this will be a *big deal*

English

@phillennium Yeah, it seems so. I didn't even bother asking it because they hallucinate their identities so much, but in this case it makes total sense

Great little model

English

@Zenoware @OpenRouter yeah, it's really an excellent coder. very steerable and token efficient.

it benefits from strong guidance

people are betting it's a smallish Xiaomi model

English

@aiamblichus @OpenRouter I actually did some tests with healer alpha here:

reading.sh/claude-code-ho…

it's more impressive than hunter and yet fewer people are talking about it

English

@EthosVentures Coding loop inside pi-agent with lots of complex tool calling

English

@DustinOgle33 it seems to think it's mimo, so it probably is (it's an unlikely identity to hallucinate). may be some kind of coding model, or a mimo flash derivative

English

@aiamblichus I think it's the mimo v2 pro that came out.

Doesn't seem like minimax, which also came out today.

English

@DustinOgle33 Yeah, you can really save a lot of usage if you combine them

English

@aiamblichus Heard it is Xiaomi.

I am using GLM-5 + Codex 5.4, and it is a great combo (saves me so much usage).

Design and such is Claude or Gemini.

English

@aiamblichus It's very strong at writing and understanding code. It's less strong as a logical thinker. I was using minimax m2.5 before, and I feel it's better at coding but not much better at overall reasoning. I am supplying the edge cases more than I'd like.

English

@officialaiindex I wouldn't say it's beating Sonnet on reasoning. It's just very good at coding and tool use, while being very fast.

It's a perfect junior developer. I wouldn't talk to it about nuclear physics, but then again I don't need a nuclear physicist when I'm doing coding

English

@aiamblichus Wait, is it actually beating Sonnet on reasoning, or are speed and tool calls just masking it?

English

@aiamblichus I have been able to reliably use it in opencode from openrouter. I assume it will stop being free very soon though

English

@Ncky_Mrtnez I’m not kidding though. It’s excellent for coding.

I still go back to Sonnet for some things, but it’s only a small fraction of the total token usage

English

@aiamblichus Every week there's a new model that "replaces Sonnet" and every week I'm still using Claude at 2am

English

@RoryWalshWatts The fact that it’s small *and* such a good coder is really interesting

English

@aiamblichus Yeah small model. Healer calls itself MiMo whereas Hunter doesn't seem to know what it is

English