Alex Zhurkevich

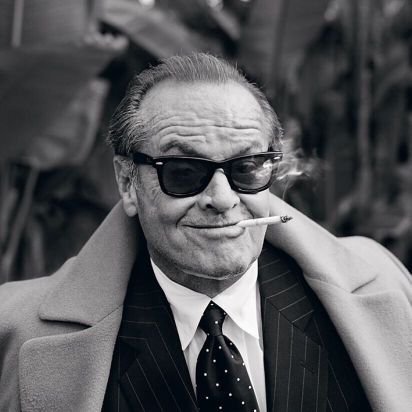

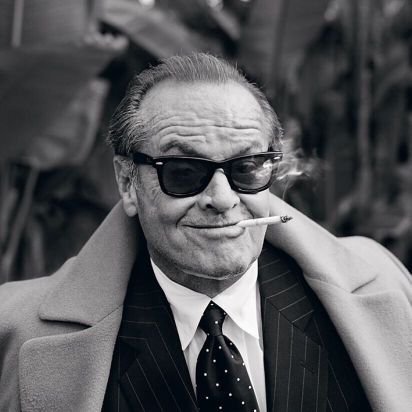

@cudagdb

Select difficulty 👁️🗨️

@marksaroufim You build communities that last, and that’s rare. GPU Mode, PyTorch… they worked because you showed up with honesty and zero ego. That makes you a rare Pokémon. Thanks for your service. Excited for what’s next. I’m sure you’re not done leveling up.

realized the door I run past every day is Anthropic's office. let's see attendance on a saturday

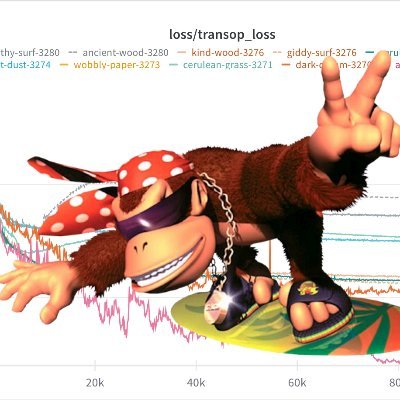

Trtllmgen kernels are now open. Fastest prefill and decode kernels for our target workloads. We wrote these to win InferenceX, MLPerf, other benchmarks. Powering some of today’s top served models. Dive in, learn, use them, or level up your own. Enjoy. github.com/flashinfer-ai/…

impressive, very nice. now let's compare a 31b dense to a 31b active 670b total instead. flop for flop
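
A rough sketch of the "flop for flop" arithmetic. It assumes the common approximation that a forward pass costs about 2 FLOPs per *active* parameter per token (attention, router cost, and KV-cache traffic ignored) and assumes bf16 weights at 2 bytes per parameter. Under those assumptions both models do the same per-token compute, while the MoE has to hold roughly 21.6× more weights.

```python
# Rough per-token forward cost: ~2 FLOPs (one multiply + one add) per active parameter.
# Assumption: attention FLOPs, router cost, and KV-cache traffic are ignored.
BYTES_PER_PARAM = 2  # assumption: bf16 weights


def forward_flops_per_token(active_params: float) -> float:
    """Approximate forward-pass FLOPs per token given the active parameter count."""
    return 2 * active_params


def weight_bytes(total_params: float) -> float:
    """Memory needed just to store all weights (active or not), in bytes."""
    return BYTES_PER_PARAM * total_params


models = {
    "31B dense": {"active": 31e9, "total": 31e9},
    "31B active / 670B total MoE": {"active": 31e9, "total": 670e9},
}

for name, p in models.items():
    gflops = forward_flops_per_token(p["active"]) / 1e9
    gb = weight_bytes(p["total"]) / 1e9
    print(f"{name}: {gflops:.0f} GFLOPs/token, {gb:.0f} GB of weights")
```

Compute per token is set by active parameters, while memory footprint (and how many GPUs you need just to hold the model) is set by total parameters. That is the gap a flop-matched comparison exposes.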

google shutting down a deepmind hedge fund

What do we call a "large scale MoE" nowadays? 100B+ 500B+?

We're releasing a technical report describing how Composer 2 was trained.

If you do this, don’t forget to set CUTLASS_LIBRARY_KERNELS to all True too. Enjoy the dreadful compile times though!

Excited to share @Standard_Kernel's seed round and some reflections on what we’ve learned about kernel generation and what we believe is next. Grateful to our amazing team, supporters, and the broader community pushing this space forward.