Jon Deaton

44 posts

@deaton_jon

Research Scientist at @GoogleDeepMind

San Francisco, CA · Joined November 2012

178 Following · 100 Followers

@mrkocnnll @longstosee I think the lack of violence in the modern world is weirder than the instincts to defend your kin tbh

@kvfrans pallas is the way to get what you want when xla doesn't do it

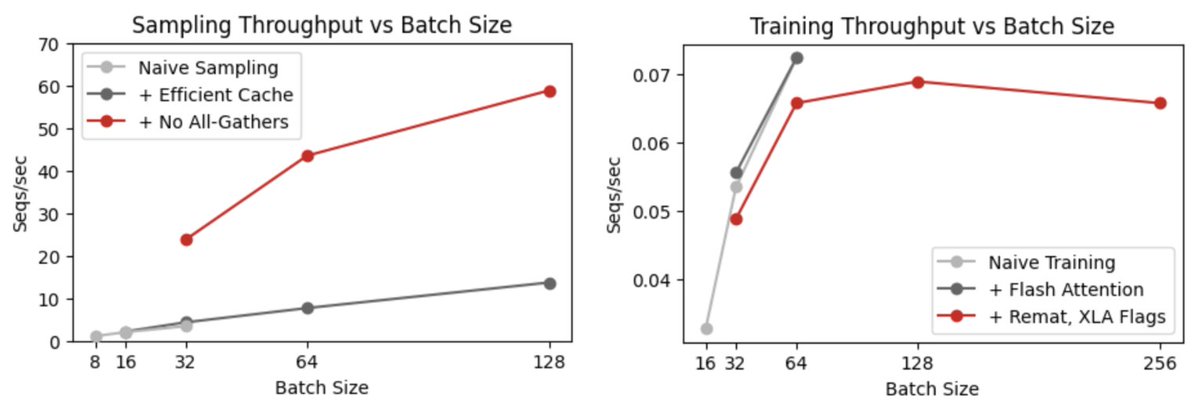

New notes: We've been building a research-friendly LLM-RL repo in JAX, and I recently took the time to optimize the sampling/training pipeline.

We're able to match vLLM sampling and get decent training batch sizes now!

notes.kvfrans.com/7-misc/rl-infr…

@francoisfleuret vae is an adversarial training dynamic, are you expecting different curves?

Weirdest graph ever, but this thing is robust. The recovery on HumanEval+ is spectacular.

Anyway, version +1 already running, we'll see.

François Fleuret @francoisfleuret

just out of vain curiosity: what happens if you increase the complexity of attention? like, has anyone tried cubic attention lol

Lucas Beyer (bl16) @giffmana

> There’s no free lunch.
> When you reduce the complexity of attention, you pay a price.
> The question is, where?

This is *exactly* how I typically end my Transformer tutorial. This slide is already 4 years old, I've never updated it, but it still holds:

@francoisfleuret I thought this was going to be a big problem especially in your sigma gpt but I found it doesn't matter

@thisismadani that's a neat trick you did to preserve the token positions of the span while doing fill-in-the-middle. seems effective

This is an incredibly cool plot - it shows how protein language models form internal representations of physical structure when trained only on amino acid sequences selected by evolution.

The representation fidelity scales with compute.

Alex Rives @alexrives

Information about protein structure in ESM C representations improves predictably with increasing training compute, demonstrating linear scaling across multiple orders of magnitude. (We overtrained the 300M and 600M models past the predicted point of compute optimality).
