Mingyu Ding


@dingmyu

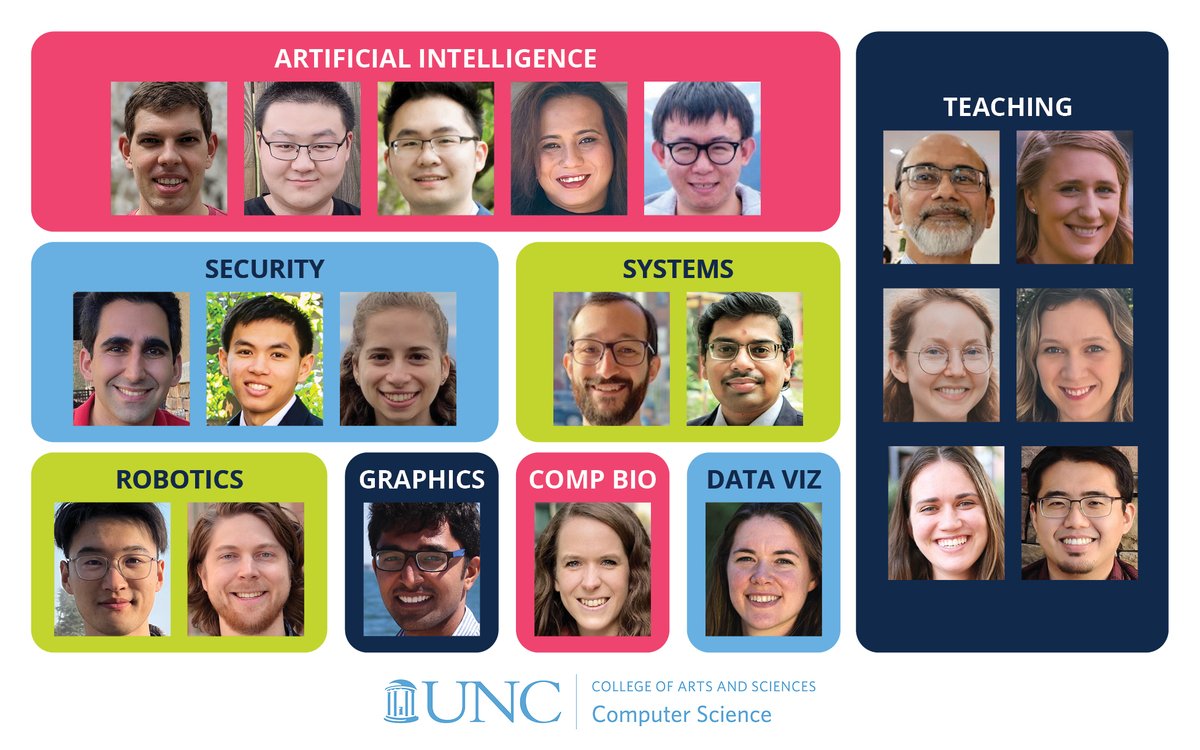

Assistant Professor @UNC @unccs | IDEAL@UNC | Dexterous/Loco-Manipulation | #robotics, #embodiedAI, #3Dvision, #foundationmodels.

Introducing Moto: Latent Motion Token as the Bridging Language for Robot Manipulation. Motion priors learned from videos can be seamlessly transferred to robot manipulation. Code and model released! @ChenYi041699 @tttoaster_ @ge_yixiao @dingmyu @yshan2u chenyi99.github.io/moto/

Human perception is inherently situated: we understand the world relative to our own body, viewpoint, and motion. To deploy multimodal foundation models (MFMs) in embodied settings, we ask: “Can these models reason in the same observer-centric way?”

We study this through SAW-Bench, a novel benchmark for observer-centric situated awareness:
- 786 real-world egocentric videos
- 2,071 human-annotated QA pairs

Across all tasks, we evaluate 24 state-of-the-art MFMs:
📉 Best model: 53.9%
🧑 Humans: 91.6%

Models systematically:
❌ Confuse head rotation with physical movement
❌ Collapse under multi-turn trajectories
❌ Fail to maintain persistent world-state memory

👉 Maintaining a stable observer-centric representation remains challenging. As MFMs are increasingly integrated into embodied agents, situated awareness becomes essential for reliable real-world interaction. We release SAW-Bench and encourage further research toward improving observer-centric reasoning in multimodal foundation models.