rw ./

6.7K posts

@gradientintern

push past limits | real niu for @Gradient_HQ | part time troll | full time janitor | professional edging specialist

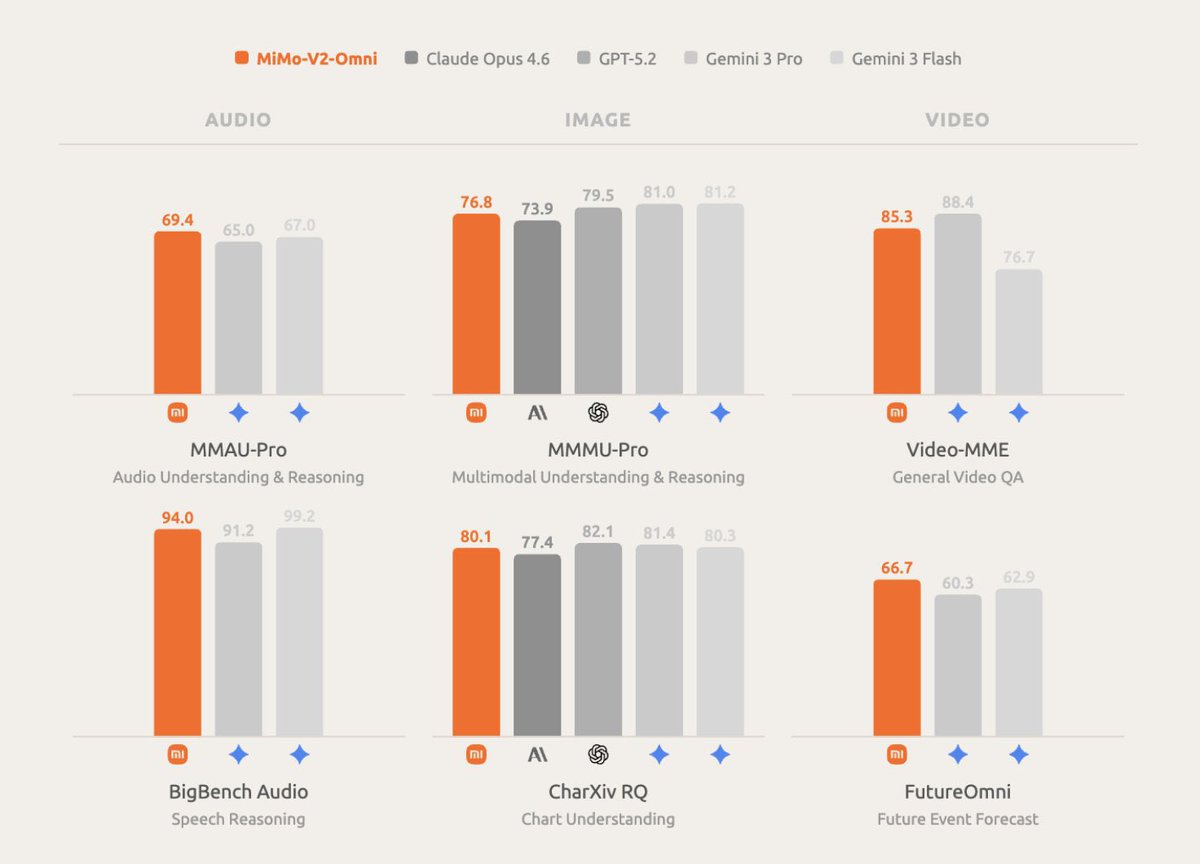

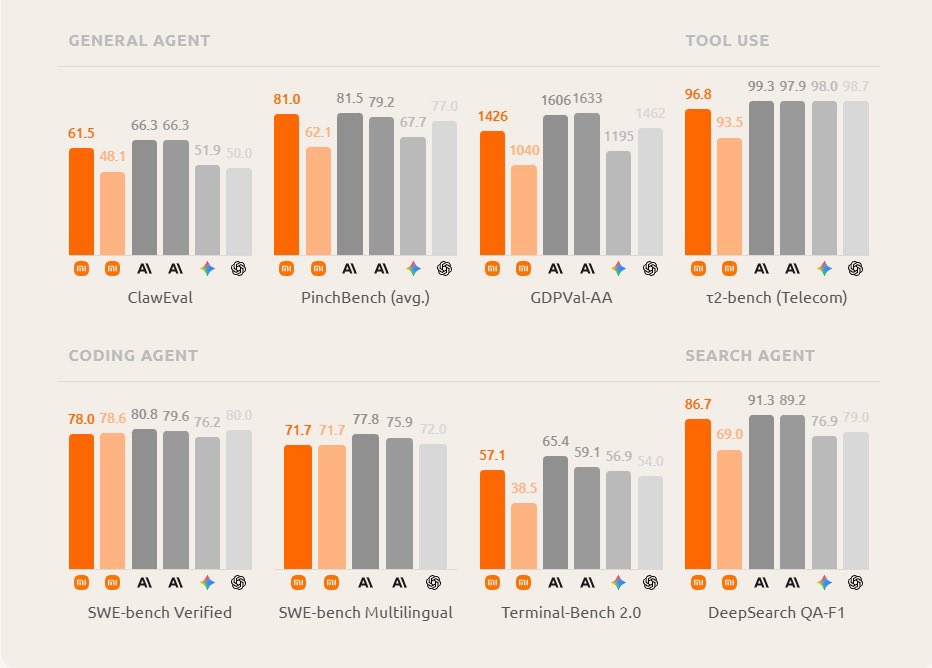

MiMo V2 Pro and MiMo V2 Omni are Xiaomi’s latest flagships for agentic and multimodal intelligence. MiMo V2 Pro is Xiaomi’s trillion-parameter model with 42B active parameters (almost 3x MiMo V2 Flash).

MiMo V2 Pro vs Leading Models

General Agentic Capabilities:
> PinchBench (avg.): 81.0%; nearly ties Claude Opus 4.6 (81.5)
> ClawEval: 61.5%; competitive with Claude models (66.3)
> GDPVal-AA: 1426; strong complex tool-use
> DeepSearch QA-F1: 86.7%; competitive with Sonnet 4.6 (89.2) and Opus 4.6 (91.3)
> t2-bench: 96.8%; extremely close to the 98–99 leaders

Coding Agentic Capabilities:
> SWE-bench Verified: 78.0%; very competitive with Claude Opus 4.6 (80.8%), GPT-5.2 (80.0%), and Sonnet 4.6 (79.6%)
> SWE-bench Multilingual: 71.7%
> Terminal-Bench 2.0: 57.1%; strong production coding performance

Xiaomi has also significantly improved on hallucination: V2 Pro is at 30% vs 48% for V2 Flash on AA Omniscience. This model sits between GLM5 & Kimi K2.5 on the Artificial Analysis Intelligence Index.

Incredible work by @XiaomiMiMo 🔥

Thank you Jensen and NVIDIA! She’s a real beauty! I was told I’d be getting a secret gift, with a hint that it requires 20 amps. (So I knew it had to be good). She’ll make for a beautiful, spacious home for my Dobby the House Elf claw, among lots of other tinkering, thank you!!

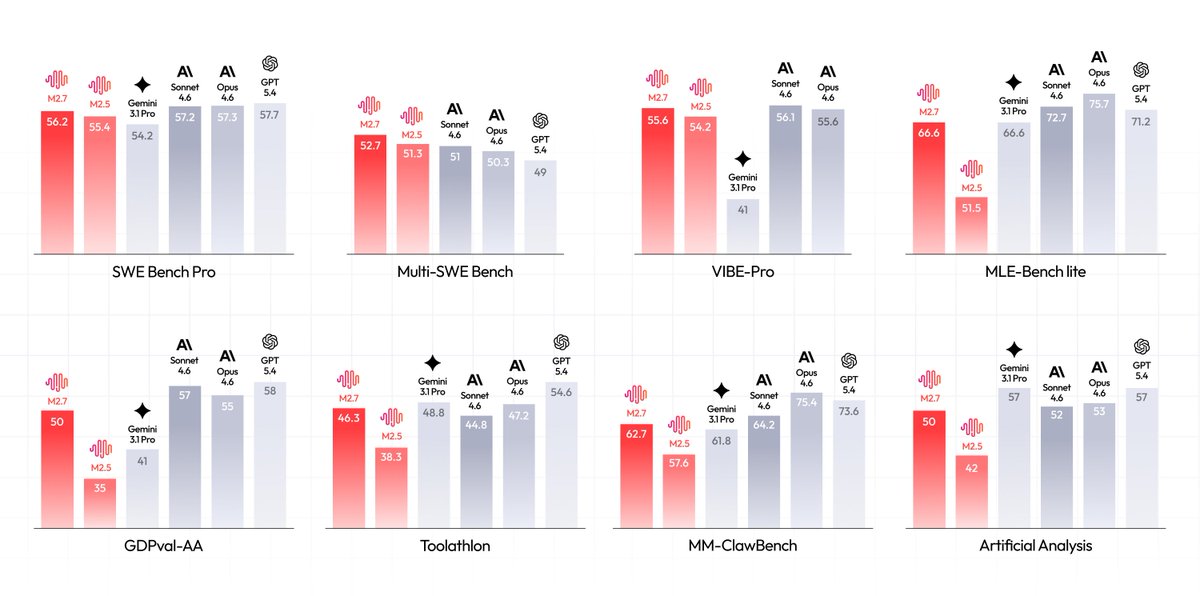

MiniMax M2.7 was just released, and it “deeply participated in its own evolution”. This is the first model that helped build itself, using self-evolution through its own optimization loops and RL training.

M2.7 vs Leading Models

Strong Coding:
> SWE Bench Pro: 56.2%; beats Gemini 3.1 Pro (54.2%); on par with Claude Sonnet 4.6 (57.2%), Opus 4.6 (57.3%), GPT 5.4 (57.7%)
> Multi-SWE Bench: 52.7% (leading)

Production:
> VIBE-Pro: 55.6%; nearly ties Sonnet 4.6 (56.1%) and Opus 4.6 (55.6%)

Strong Agentic Capabilities:
> MM-ClawBench (agent/tool use): 62.7%; competitive with Sonnet 4.6 (64.2%) and Opus 4.6 (75.4%)

It has also seen significant improvements in ML! This is an incredible release.

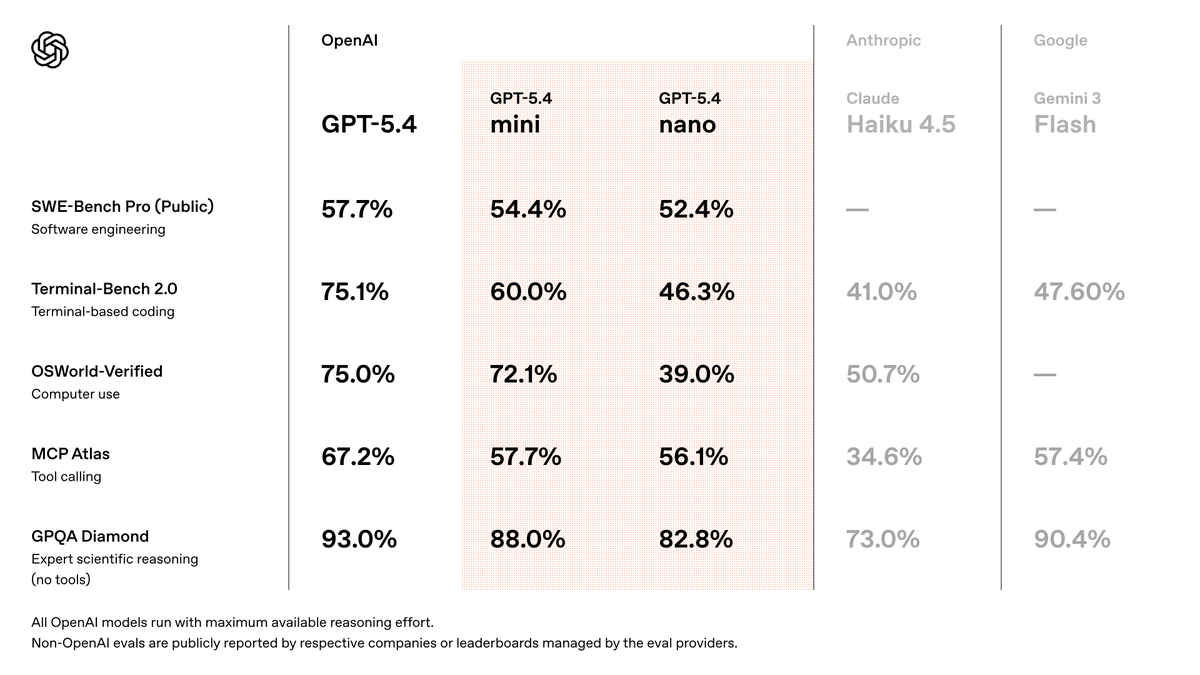

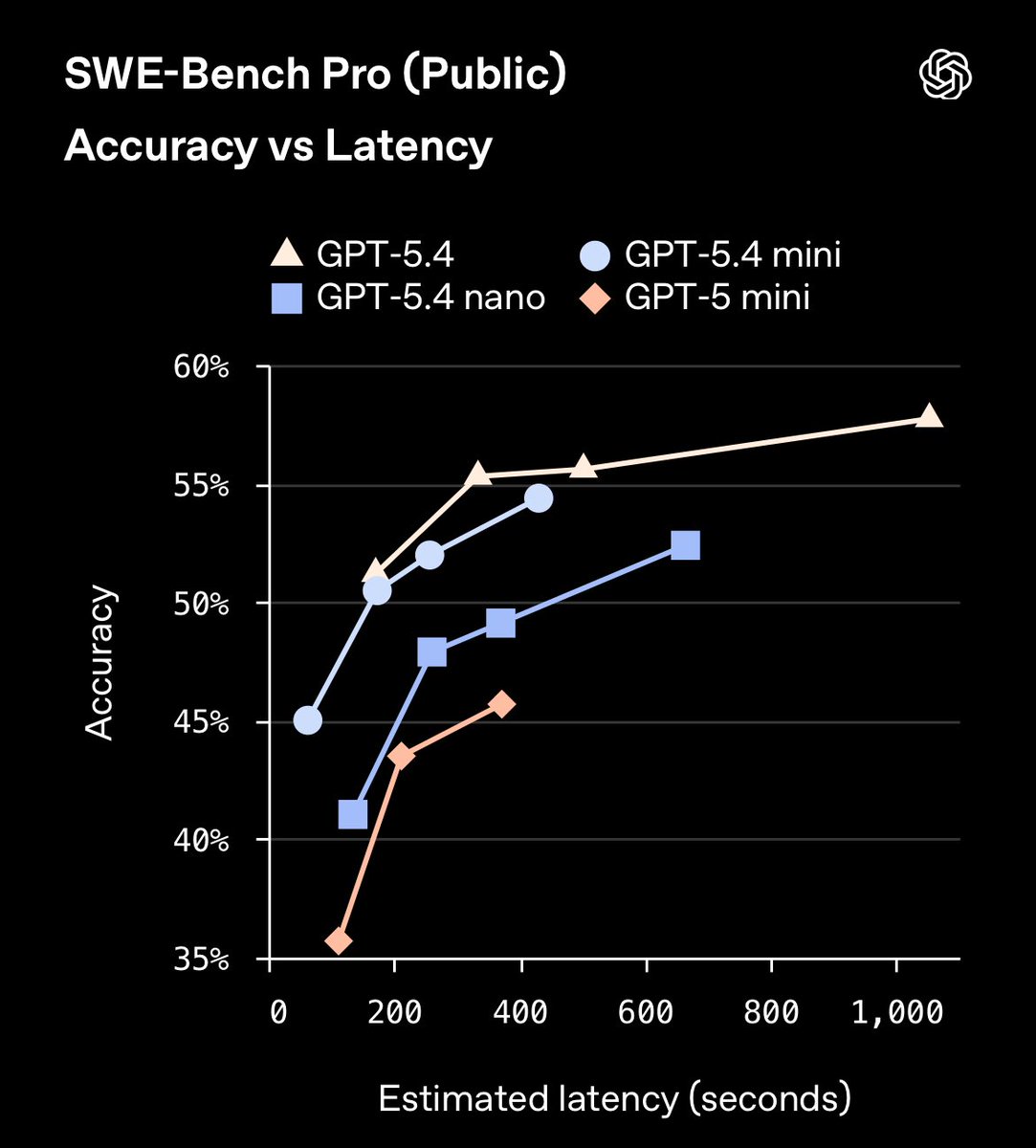

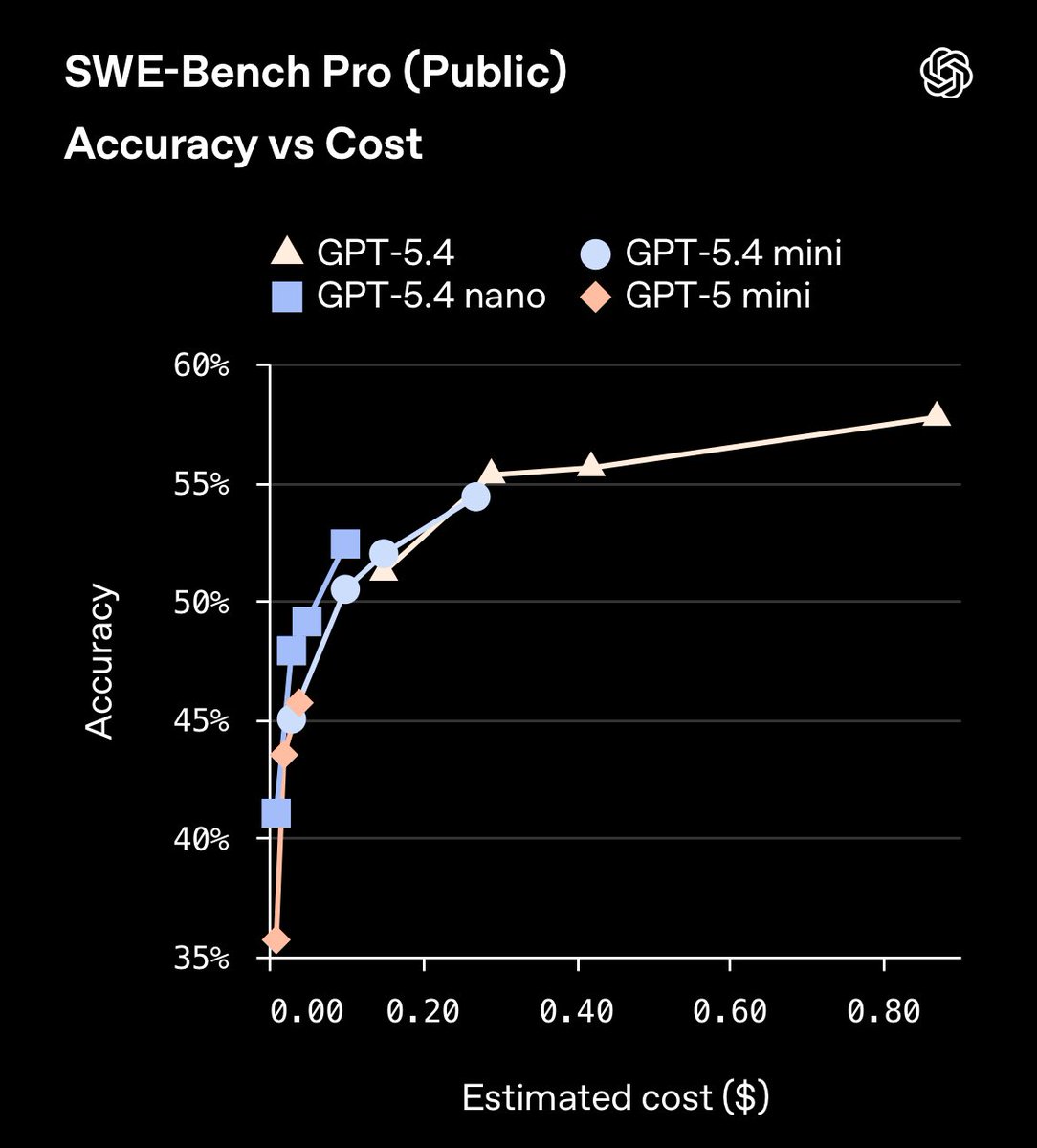

OpenAI releases GPT 5.4 mini & GPT 5.4 nano, targeted at agentic and coding tasks. GPT 5.4 nano xhigh outperforms GPT 5.4 low. Very competitive on pricing.

GPT 5.4 mini:
> 400k context window
> $0.75/1M input & $4.50/1M output

GPT 5.4 nano:
> $0.20/1M input & $1.25/1M output

They're serious about focusing their efforts on taking this coding market 👾
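For a rough feel of what those per-million-token prices mean per request, here is a minimal sketch. The prices are copied from the post above; the dictionary keys and the `estimate_cost` helper are hypothetical names made up for illustration, not official API model identifiers, and the token counts are an arbitrary example.

```python
# Prices in USD per 1M tokens, taken from the post above.
# The keys are illustrative labels only, not confirmed API model names.
PRICES_PER_MILLION = {
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
    "gpt-5.4-nano": {"input": 0.20, "output": 1.25},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request (hypothetical helper)."""
    price = PRICES_PER_MILLION[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


if __name__ == "__main__":
    # Example workload (assumed): 20k tokens in, 2k tokens out.
    for name in PRICES_PER_MILLION:
        cost = estimate_cost(name, 20_000, 2_000)
        print(f"{name}: ${cost:.4f} per request")
```

This ignores anything the post does not mention, such as cached-input discounts or reasoning-token accounting, so treat it as back-of-the-envelope only.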

Both of OpenAI’s newest models are supported on day one via @commonstack_ai. GPT 5.4 mini is refined for agentic tasks, coding, and speed. GPT 5.4 nano is optimal for lightweight tasks. Both are available now for playground testing or integrated usage.

GPT-5.4 mini is available today in ChatGPT, Codex, and the API. Optimized for coding, computer use, multimodal understanding, and subagents. And it’s 2x faster than GPT-5 mini. openai.com/index/introduc…