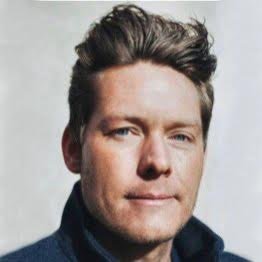

Hellas

252 posts

@hellasdotai

serverless AI secured by web3 - https://t.co/irTCUyjkrv

Wow, I totally disagree with this statement. In its current state, AI actually amplifies the developer-to-developer difference. If you were a 10x developer with good ideas and architectural clarity, that is a brutal advantage when using AI. Steering is a fundamental part of today's AI development.

Chamath on how AI agents are making the "10x engineer" distinction disappear because the most efficient "code paths" are now obvious to everyone. Just as AI solved chess and removed the mystery of the best move, AI is doing the same for coding, making the process reductive and removing technical differentiation. "I'm going to say something controversial: I don't think developers anymore have good judgment. Developers get to the answer, or they don't get to the answer, and that's what agents have done. The 10x engineer used to have better judgment than the 1x engineer, but by making everybody a 10x engineer, you're taking judgment away. You're taking code paths that are now obvious and making them available to everybody. It's effectively like what happened in chess: an AI created a solver so everybody understood the most efficient path in every single spot to do the most EV-positive (expected value positive) thing. Coding is very similar in that way; you can reduce it and view it very reductively, so there is no differentiation in code." --- From @theallinpod YT channel (link in comment)

![[semi-employed bi-homicidal wandering jewess]](https://pbs.twimg.com/profile_images/1485704932133150725/dKmHtVxf.jpg)

This is a cool example of how you can use AI to help your writing—without relying on it for any actual writing. From @jasminewsun theatlantic.com/technology/202…

What if you could create an auto-research where your agent just focused on the eval, designed so that others could have swarms of agents across the web try to solve it, and you paid them based on the ownership of the mechanism which produced the research?

I’m surely being stupid. But if AI is rather unconstrained by expertise or capacity or, to some extent, speed, why do we need to divide tasks or departments among 9 agents (the marketing agent, the optimization agent, etc.), each doing one thing, and then another agent to manage the swarm? Can't one agent just do it all? It seems very skeuomorphic. Will we have HR agents to make sure the agent agents are being looked after? An office canteen manager agent to feed the agents? Seems daft.

Used autoresearch to make @grail_ai GRPO trainer 1.8x faster on a single B200.

I kept postponing this for weeks since the bottleneck in our decentralized framework was mainly communication. But after our proposed technique, PULSE, made weight sync 100x faster, the training update itself became the bottleneck. Even with a fully async trainer and inference, a slow trainer kills convergence speed.

A task that could've eaten days of my time ran in parallel while I worked on other stuff. Unlike original autoresearch, where each experiment is 5 min, our feedback loop is way longer (10-17 min per epoch + 10-60 minutes of installations and code changes), so I did minimal steering when it was heading in bad directions to avoid burning GPU hours.

The agent tried so many things that failed. But, eventually found the wins: Liger kernel, sequence packing, token-budget dynamic batching, and native FA4 via AttentionInterface.

27% to 47% MFU. 16.7 min to 9.2 min per epoch.

If you wanna dig deeper or contribute: github.com/tplr-ai/grail

We're optimizing everything at the scale of global nodes to make decentralized post-training as fast as centralized ones. Stay tuned for some cool models coming out of this effort. Cheers!
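
For readers unfamiliar with one of the techniques named in the post above, here is a minimal sketch of token-budget dynamic batching in Python. It is not taken from the grail repository: the function name `token_budget_batches`, the `max_tokens` parameter, and the sort-then-greedy strategy are all assumptions made for illustration. The idea shown is that batches are sized by padded token count rather than by a fixed number of sequences. The last lines check the quoted per-epoch times against the quoted 1.8x speedup.

```python
from typing import Iterable, List


def token_budget_batches(lengths: Iterable[int], max_tokens: int) -> List[List[int]]:
    """Group sequence indices so each padded batch fits a token budget.

    Hypothetical helper for illustration only. The padded cost of a batch
    is (longest sequence in the batch) * (number of sequences in the batch).
    """
    # Sort by length so sequences of similar size share a batch (less padding).
    order = sorted(enumerate(lengths), key=lambda pair: pair[1])

    batches: List[List[int]] = []
    current: List[int] = []
    current_max = 0

    for idx, n in order:
        if n > max_tokens:
            raise ValueError(f"sequence {idx} has {n} tokens, over the budget")
        new_max = max(current_max, n)
        # Close the current batch if adding this sequence would exceed the budget.
        if current and new_max * (len(current) + 1) > max_tokens:
            batches.append(current)
            current = []
            new_max = n
        current.append(idx)
        current_max = new_max

    if current:
        batches.append(current)
    return batches


# Toy input: five sequences with these token counts and a budget of 16 padded tokens.
example = token_budget_batches([5, 3, 8, 2, 7], max_tokens=16)

# Sanity check of the figures quoted in the post: 16.7 min -> 9.2 min per epoch.
epoch_speedup = 16.7 / 9.2
```

With the toy input, short sequences end up in one larger batch and long sequences in a smaller one, so the padded size of every batch stays within the budget. The ratio of the quoted epoch times rounds to the 1.8x figure in the post.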

New category emerging: Headless SaaS

Not infrastructure as a service / platform as a service

Traditional software (Photoshop, Slack, Jira) rebuilt with agent-first APIs.

- No UI
- Programmatic access
- Essentially the same product with different interface

Entirely new business model.

the micro1 robotics lab: real world data for intelligent models that co-exist in the physical world. we’re in-the-wild across 75 countries in 6,000+ unique environments collecting data. diverse movements, objects, and settings. the future of AI is as human as you can imagine. join us to start training robots today (link in comments).

@nxthompson @jasminewsun Just wondering — why not use your own mind to do this yourself?

Some people at frontier AI labs told me they believe startups are over. OpenAI, Anthropic, Google, xAI will absorb every industry as AGI nears. Coding today, science, medicine, and finance next. Then everything else. If they’re right, that’s a pretty boring end of the world.