A small step for mankind, a massive leap for decentralised training... for agency.

In the space of 9 months, @tplr_ai went from 1.2B -> 72B.

It's never been easy, and has broken everyone on the team multiple times. But I speak for all of us when I say it is the most rewarding thing we have ever done.

We have a fraction of the resources. We don't have the PhDs. But Bittensor shows you it doesn't matter. Innovation happens at the edge. We innovate through scarcity.

The ones who rewrite the rules are never the ones with the most. They're the ones who refuse to accept the limits they were handed.

Bittensor is prophecy. Subnets (@covenant_ai and others) are the tools through which that prophecy is manifested.

Next stop: TRILLIONS.

templar @tplr_ai

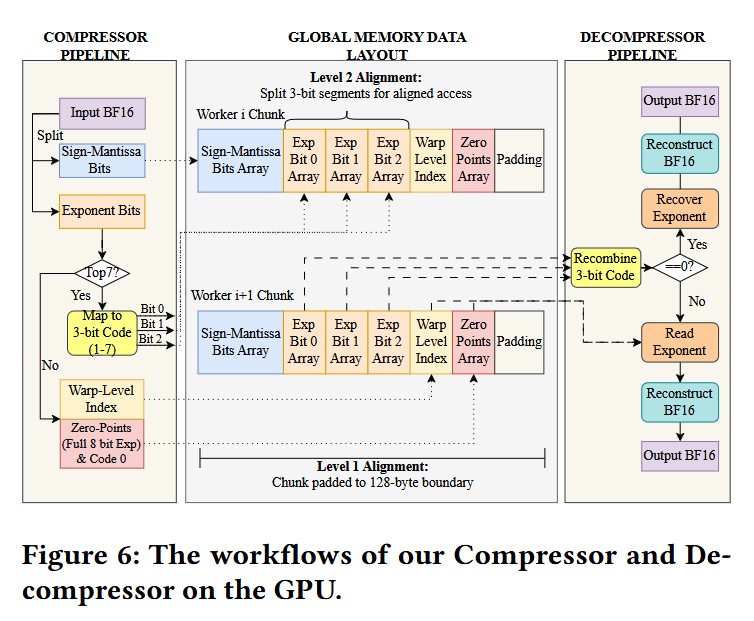

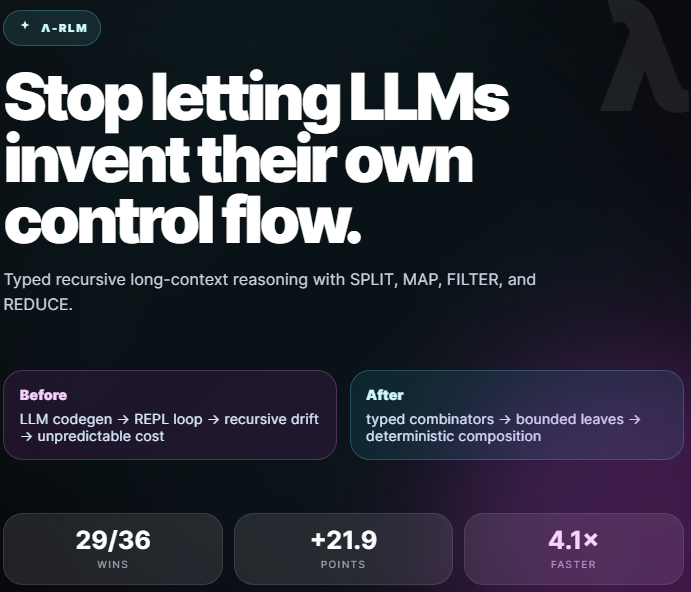

We just completed the largest decentralised LLM pre-training run in history: Covenant-72B. Permissionless, on Bittensor subnet 3. 72B parameters. ~1.1T tokens. Commodity internet. No centralised cluster. No whitelist. Anyone with GPUs could join or leave freely. 1/n