Mohamed

@mohammad2012191

And what would it matter to you if they went off with the world, while you went off with the Quran? | AI Researcher @ KAUST - Kaggle Competitions Master (Highest Rank: 236th in the World)

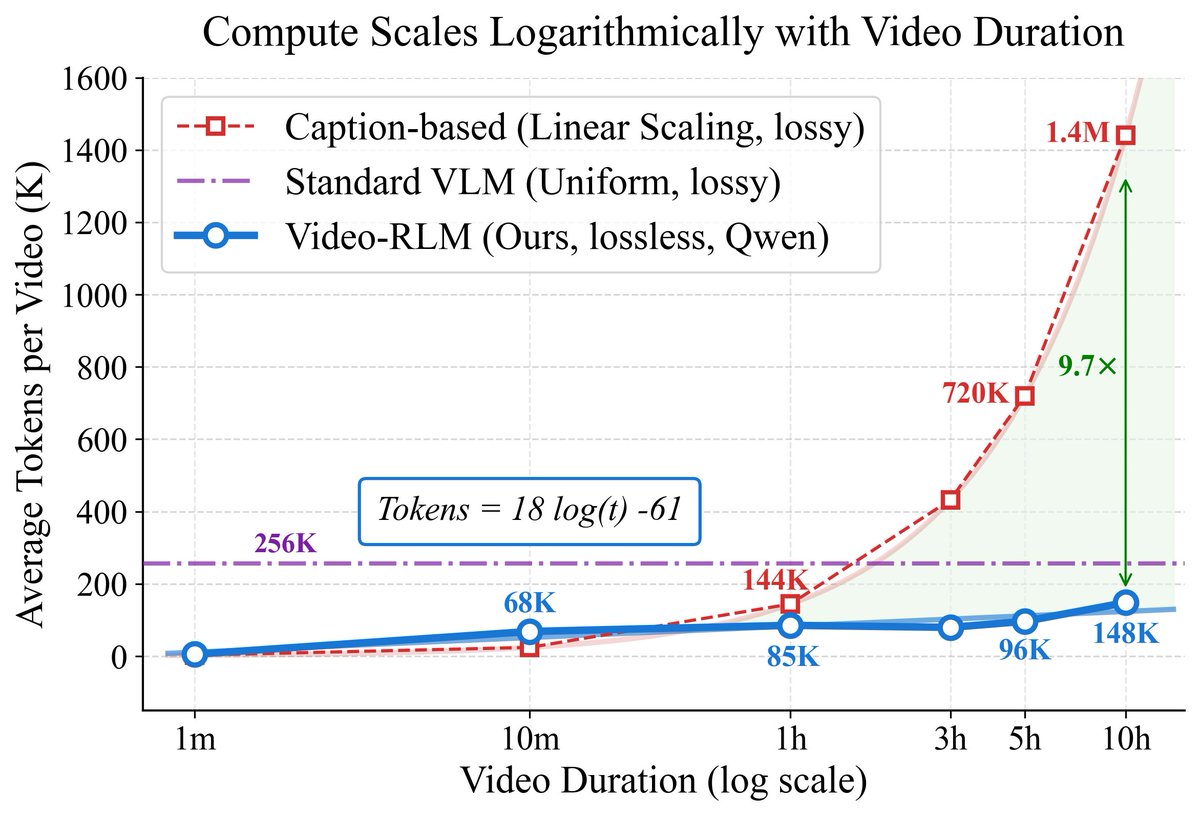

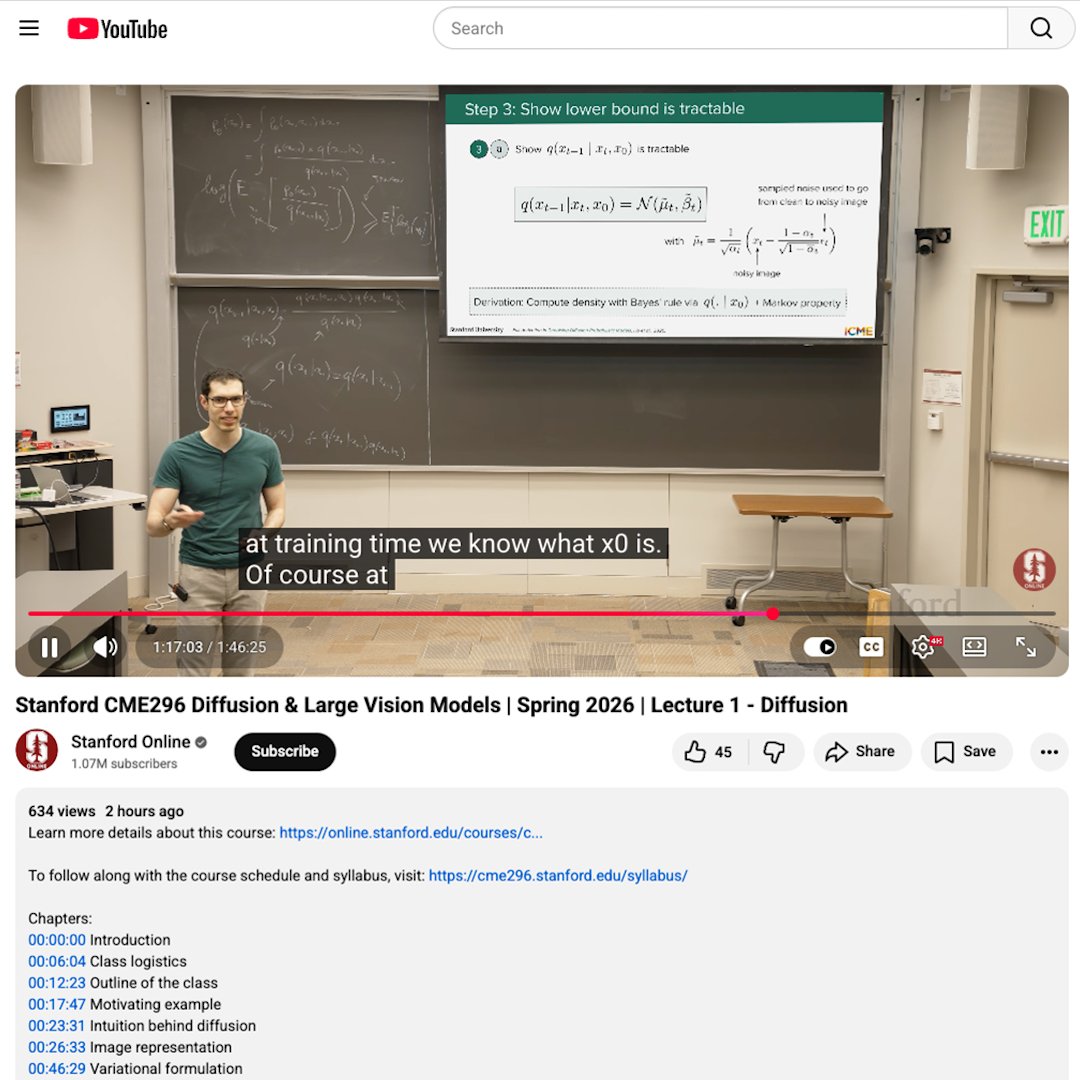

What if understanding a video was more like navigating a map?🤔 And what if that made compute scale logarithmically (not linearly) with video length?! New preprint🎉: 🗺️VideoAtlas: Navigating Long-Form Video in Logarithmic Compute

Dario seems to think China and open source will hit Mythos capabilities in 6-12 months

“Attention Residuals” is now available on AlphaXiv! In a standard transformer, every layer inherits an equally weighted sum of all earlier layers' outputs, so as models get deeper, useful computations get diluted instead of being selectively reused. The research team at @Kimi_Moonshot proposes Attention Residuals, which fixes this by letting each layer attend over previous layers with learned weights, so depth works more like retrieval than accumulation. This makes training more stable and improves scaling and downstream performance with almost no extra overhead: specifically, a 1.25x compute advantage over the standard transformer baseline.
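
For readers who want the contrast in concrete terms, here is a toy sketch of the idea as the post describes it: an equal-weight sum of earlier layer outputs versus a softmax-weighted mix. It is not the paper's implementation. The function names, the per-layer `query` vector, and the dot-product scoring are all assumptions made for illustration.

```python
import math

def softmax(scores):
    # Numerically stable softmax over a list of floats.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def standard_residual(prev_outputs):
    # Plain residual stream: every earlier layer output counts equally.
    dim = len(prev_outputs[0])
    return [sum(out[i] for out in prev_outputs) for i in range(dim)]

def attention_residual(prev_outputs, query):
    # Hypothetical variant: score each earlier output against a learned
    # query vector (assumed here), then mix the outputs by softmax weight.
    scores = [sum(q * x for q, x in zip(query, out)) for out in prev_outputs]
    weights = softmax(scores)
    dim = len(prev_outputs[0])
    mixed = [sum(w * out[i] for w, out in zip(weights, prev_outputs))
             for i in range(dim)]
    return mixed, weights

# Three earlier "layer outputs" in a 2-dimensional toy space.
prev = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
equal_sum = standard_residual(prev)
mixed, weights = attention_residual(prev, query=[5.0, 0.0])
```

With the query pointing along the first dimension, the second layer's output gets a much smaller weight than the other two, which is the "retrieval rather than accumulation" behavior the post refers to. A zero query gives uniform weights.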

There are verifiable rewards everywhere for those with eyes to see