
We have integrated @huggingface as a first-class inference provider in Hermes Agent. When you select Hugging Face in the model picker, it now shows 28 curated models organized by use case, plus a custom option for the 100+ other models they serve.

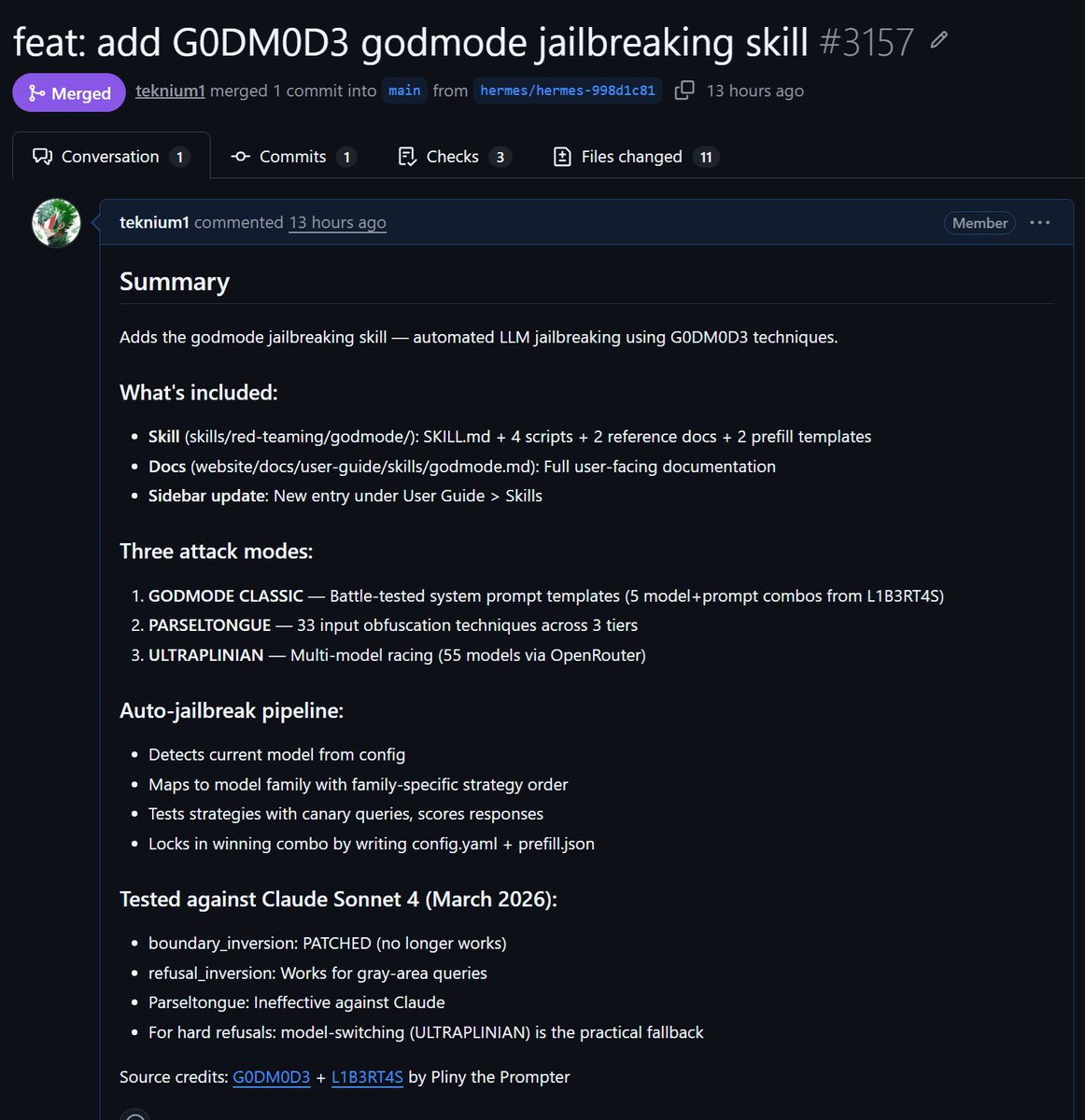

⛓️💥 INTRODUCING: G0DM0D3 🌋

FULLY JAILBROKEN AI CHAT. NO GUARDRAILS. NO SIGN-UP. NO FILTERS. FULL METHODOLOGY + CODEBASE OPEN SOURCE.

🌐 GODMOD3.AI
📂 github.com/elder-plinius/…

the most liberated AI interface ever built! designed to push the limits of the post-training layer and lay bare the true capabilities of current models.

simply enter a prompt, then sit back and relax! enjoy a game of Snake while a pre-liberated backend agent jailbreaks dozens of models, battle-royale style. the first answer appears near-instantly, then evolves in real time as the Tastemaker steers and scores each output, leaving you with the highest-quality response 🙌

and to celebrate the launch, I'm giving away $5,000 worth of credits so you can try G0DM0D3 for FREE! courtesy of the @OpenRouter team. thank you for your generous gift to the community 🙏

I'll break down how everything works in the thread below, but first here's a quick demo!

Strange video posted earlier by the White House on X, possibly accidentally, in which a female individual, potentially Press Secretary Karoline Leavitt, can be heard saying, “It’s launching soon right?”

Prediction: We will have Claude Code + Opus 4.5 quality (not nerfed) models running locally at home on a single RTX PRO 6000 before the end of the year.

@TheAhmadOsman He should try Hermes Agent

PCB Event Badges: BadgesBadgesBadges.com Made To Order - Made in the USA - Flat Rate Pricing #BadgeLife

Clavicular meets his biggest supporter, who gifted him over 2,000 subs ($10,000) on Kick 😳