Saurav Muralidharan

@srv_m

Research Scientist @NVIDIA | Making LLMs More Efficient

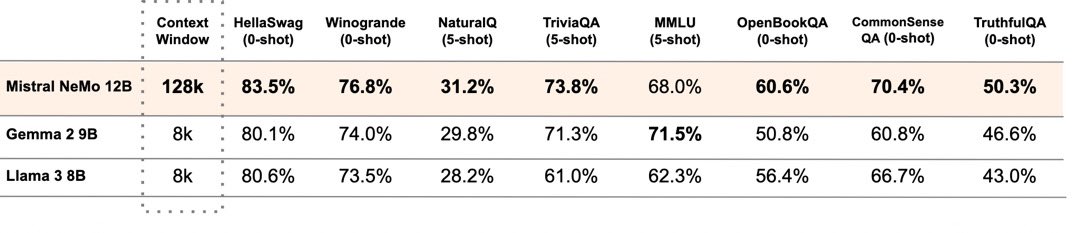

🚀 40x Faster Model Training via Pruning and Distillation! Permissive Minitron-4B and Minitron-8B models!

🔗 Paper: arxiv.org/abs/2407.14679
🔗 GitHub: github.com/NVlabs/Minitron
🔗 Models on HF: bit.ly/4ffjnQj

Key highlights of 4B/8B models:
📊 2.6B/6.2B active non-embedding parameters
⚡ Squared ReLU activation in MLP – welcome back, sparsity!
🗜️ Grouped Query Attention with 24/48 heads and 8 queries
🌐 256K vocab size for multilingual support
🔒 Hidden size: 3072/4096
🔧 MLP hidden size: 9216/16384
📈 32 layers
👐 Permissive license!

Details below 🧵

Tired of training LLMs of different sizes to fit various GPU memory and latency requirements? Check out Flextron! Our new ICML (Oral) paper shows how to train one model that is deployable across GPU series. Learn more: cairuisi.github.io/Flextron/ 🚀